AI regulatory policy changes in 2026 are primarily driven by the enforcement of the EU AI Act for high-risk systems starting August 2, 2026, and a surge of targeted U.S. state laws governing employment decisions, algorithmic pricing, and consumer-facing AI. These changes necessitate immediate operational adjustments, including comprehensive AI system inventories, gap analyses against specific regulations, and the establishment of cross-functional governance structures to ensure legal compliance and mitigate significant financial and reputational risks.

AI regulation in 2026 demands immediate action from businesses to navigate strict EU enforcement deadlines and complex U.S. state laws. Key changes include the EU AI Act’s August 2 deadline for high-risk systems and new state-level rules in Colorado, Connecticut, and Maryland impacting employment, pricing, and consumer AI. Companies must inventory all AI tools, conduct gap analyses against these new regulations, implement robust governance, and train their workforce to avoid substantial penalties and operational disruption.

AI Regulatory Policy Changes in 2026: The Operational Playbook You Need Now

AI regulatory policy has transitioned from theoretical principles to enforceable laws and targeted state regulations in 2026. The two most urgent developments are the enforcement of the EU AI Act’s high-risk system requirements, which begins on August 2, 2026, and a wave of new U.S. state laws governing employment decisions, algorithmic pricing, and consumer-facing AI. For business operators, this is no longer a topic to watch; it is a cross-functional operational requirement that demands immediate action.

Key Takeaways for AI Regulatory Policy in 2026:

- EU AI Act Enforcement: The grace period for high-risk AI systems ends on August 2, 2026, requiring immediate compliance, conformity assessments, and CE marking.

- U.S. State Laws Surge: A patchwork of state-level regulations for Automated Employment-related Decision Processes (AEDPs), algorithmic pricing, and consumer AI is taking effect, notably in Colorado, Connecticut, and Maryland.

- Federal Signals Matter: While no overarching U.S. federal AI law exists, America’s AI Action Plan and potential executive orders direct federal procurement and R&D, influencing industry standards.

- Comprehensive Inventory is Crucial: Businesses must immediately catalog all AI tools, identify their risk categorization, and map them against relevant jurisdictional requirements.

- Cross-Functional Governance: Effective compliance requires collaboration across legal, IT, data science, HR, and business units, supported by robust vendor management and training protocols.

The New State of Global AI Regulation in 2026

As of May 10, 2026, the landscape of AI regulation is defined by enforcement dates and specific application bans. The EU has moved to an enforcement posture, while the U.S. is pursuing a dual-track approach combining federal policy signals with concrete, state-level legislation. The Trump Administration’s release of “The National Policy Framework for Artificial Intelligence (Legislative Recommendations)” on March 20, 2026, adds another layer to the federal conversation. Ignoring these changes now means facing significant legal, financial, and operational risks within months.

Key AI Regulatory Policy Changes for 2026-2027

- EU AI Act Enforcement (August 2, 2026): The grace period is over. High-risk AI systems placed on the EU market must be fully compliant. This is a critical deadline for global businesses operating in Europe.

- U.S. State ADMT Requirements (Effective January 1, 2026): Regulations for contracting opportunities, compensation, and healthcare services using AI are already in effect in several states, requiring immediate review of Automated Decision-Making Tools (ADMTs). These early movers set a precedent for broader AI adoption benchmarks.

- Colorado AEDP Framework (Effective October 1, 2026): A dedicated regulatory framework for Automated Employment-related Decision Processes (AEDPs) becomes effective, with substantive obligations for employers kicking in on October 1, 2027. This framework emphasizes fairness and transparency in AI-assisted hiring.

- Algorithmic Pricing Laws (2025-2026): States like Connecticut, New York, California, and Maryland have passed or proposed laws restricting the use of AI for personalized or “surveillance” pricing. These laws aim to protect consumers from discriminatory pricing practices.

- Explicit Prohibitions: The EU AI Act now explicitly bans “nudifier” applications and AI systems generating child sexual abuse material or sexually explicit deepfakes of identifiable individuals. These prohibitions reflect societal concerns about the ethical implications of advanced AI.

The EU AI Act: From Framework to Enforcement

The EU AI Act is the most comprehensive AI regulatory framework globally, and its enforcement phase for high-risk systems begins on August 2, 2026. This represents a hard deadline for any company operating in the European market. In the broader global context, the Act is a landmark example of proactive regulation.

Understanding High-Risk AI Systems

High-risk AI systems are those that pose significant risks to health, safety, or fundamental rights. If your AI system falls into any of the categories the Act lists, compliance is mandatory by August 2, 2026.

The Act’s list of high-risk systems includes:

- AI used in critical infrastructure (e.g., traffic control, energy grid management).

- AI for educational or vocational training that determines access.

- AI used in employment, worker management, and access to self-employment (e.g., CV-sorting software, productivity monitoring tools).

- AI used in essential private and public services (e.g., credit scoring, eligibility for public benefits).

- AI used in law enforcement, migration, asylum, and judicial processes.

- AI used for biometric identification and categorization.

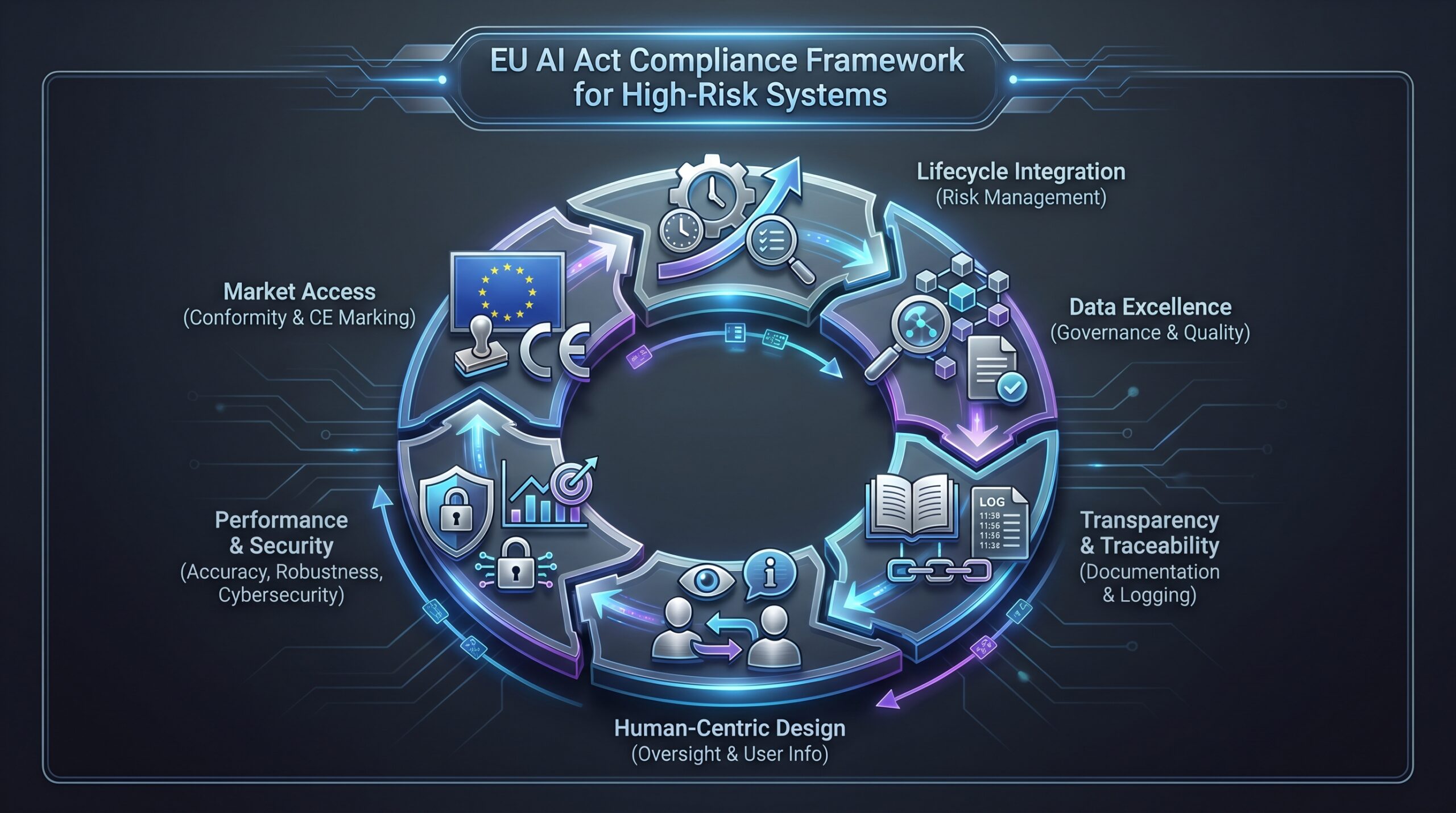

What must high-risk system providers and deployers do by August 2026?

- Establish a Risk Management System: A continuous, iterative process run throughout the AI system’s lifecycle. This system must proactively identify, analyze, evaluate, and mitigate risks.

- Ensure Data Governance and Quality: Use high-quality training, validation, and testing data sets with appropriate data governance measures. This includes ensuring data relevancy, representativeness, and freedom from bias.

- Create Detailed Technical Documentation: Authorities use this documentation to assess compliance, so it should be clear, comprehensive, and kept up to date.

- Enable Automatic Recording (Logging): Ensure systems are technically capable of recording their operation (“logs”) to demonstrate traceability and allow for post-market monitoring.

- Provide Clear User Information and Instructions: For deployers to understand the system’s capabilities, limitations, and the necessary human oversight.

- Implement Human Oversight: Design systems to be effectively overseen by humans to minimize risk and allow for intervention or override capabilities.

- Ensure Accuracy, Robustness, and Cybersecurity: These are foundational requirements to ensure the system performs as intended and is resilient to errors or malicious attacks.

- Conformity Assessment & CE Marking: Before placing on the market, high-risk systems must undergo a conformity assessment (often by a Notified Body) and bear the CE marking, signifying compliance.
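The eight requirement areas above can be turned into a simple evidence checklist per system. The following is a minimal sketch, not a legal test: the requirement labels are shorthand for the bullets above, and `SystemEvidence`, `find_gaps`, and the example system name are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass, field

# Shorthand labels for the eight requirement areas listed above (not legal text).
REQUIREMENTS = [
    "risk_management_system",
    "data_governance",
    "technical_documentation",
    "automatic_logging",
    "user_information",
    "human_oversight",
    "accuracy_robustness_cybersecurity",
    "conformity_assessment_ce_marking",
]


@dataclass
class SystemEvidence:
    """Hypothetical record: which requirement areas have documented evidence."""
    name: str
    evidence: dict[str, bool] = field(default_factory=dict)


def find_gaps(system: SystemEvidence) -> list[str]:
    """Return requirement areas with no recorded evidence, in checklist order."""
    return [r for r in REQUIREMENTS if not system.evidence.get(r, False)]


# Illustrative system with evidence recorded for three of the eight areas.
cv_tool = SystemEvidence(
    name="cv-screening-tool",
    evidence={
        "risk_management_system": True,
        "data_governance": True,
        "human_oversight": True,
    },
)

gaps = find_gaps(cv_tool)
print(f"{cv_tool.name}: {len(gaps)} of {len(REQUIREMENTS)} areas lack evidence")
for gap in gaps:
    print(" -", gap)
```

A missing key is treated the same as `False`, so an area counts as a gap until someone records evidence for it.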

Case Study: An EU-Based Medical Device Manufacturer

A company using AI to analyze X-rays for fracture detection operates a high-risk system (Annex I, medical devices). By August 2, 2026, it must have a validated risk management system in place, documented proof of the clinical data’s quality and representativeness used to train the algorithm, technical documentation ready for notified body review, clear instructions for radiologists on the tool’s limitations, and a mechanism for human radiologist override. Failure to comply means the product cannot be legally sold in the EU, with potential fines of up to EUR 15 million or 3% of global annual turnover for breaching high-risk obligations (the 7% tier is reserved for prohibited practices).

This situation underscores the importance of understanding specific AI models’ capabilities and their regulatory context.

U.S. Regulation: The State-Level Surge and Federal Signals

U.S. AI regulation in 2026 is characterized by actionable state laws and directional federal policy, not overarching federal legislation. This decentralized approach creates a complex compliance environment for businesses operating across state lines.

America’s AI Action Plan: The Federal Policy Signal

Released in July 2025, America’s AI Action Plan remains the key federal roadmap. It is not a law but a strong policy signal for a federal “innovation-first” approach. Key themes influencing agency actions include:

- Procurement Expectations: The federal government will use its purchasing power to prioritize secure, ethical AI. This means federal contractors must align with these principles, potentially impacting their B2B AI adoption strategies.

- Infrastructure Priorities: Investment in AI research infrastructure, including testbeds and data resources. This fosters an environment for advanced AI development, such as that seen in AI infrastructure manufacturing.

- Export Posture: Guidance on balancing AI innovation with national security concerns in export controls. Companies dealing with advanced AI models need to be aware of these evolving regulations.

- Potential Executive Order: As of May 2026, the White House is contemplating an executive order to regulate and evaluate high-risk AI models, potentially using a process similar to the FDA’s. This would represent a significant federal enforcement lever, even without new legislation.

The Frontlines: Key State AI Regulatory Policy Changes

State legislatures are not waiting. They are enacting targeted laws for specific AI applications. Businesses operating nationally must comply with a potential patchwork of regulations, making a unified compliance strategy challenging but essential.

| State | Key AI Regulatory Policy Change (2026) | Focus Area | Key Date / Status (as of May 10, 2026) |
|---|---|---|---|
| Colorado | AI Act (SB 24-205) & AEDP Framework | Consumer-facing AI, Employment Decisions | AEDP law effective Oct 1, 2026. Broader AI Act enforcement on hold due to litigation. A replacement bill has been introduced. |
| Connecticut | AI Bill (SB 2) | Algorithmic Discrimination, Consumer AI | Passed legislature as of May 4, 2026; awaiting Governor’s signature. |
| Maryland | Pricing Bill (HB 1209) | Algorithmic "Surveillance" Pricing | Signed into law in 2026. |
| California | Multiple Bills (e.g., AB 2930, SB 942) | Pricing, Government Procurement, AI Provenance | Multiple proposals active in 2026 legislative session. |
| New Jersey | Multiple AI Bills | Insurance, Consumer Protection, Pricing | Several bills introduced in the 2026 session. |

Practical Implications of State Laws:

- Automated Employment-related Decision Processes (AEDPs): Colorado’s framework is the most advanced. It requires employers using AI for hiring, promotion, or compensation to conduct annual disparate impact analyses, notify candidates/employees of AEDP use, and allow for human review of adverse decisions. This highlights a shift towards ethical AI in employment decisions.

- Algorithmic Pricing Laws: These laws typically prohibit using AI to implement “surveillance pricing”—where a seller uses personal data to offer different prices to different consumers for the same good. This impacts e-commerce, travel, and dynamic pricing platforms, ensuring fairer consumer practices.

- Consumer-Facing AI: Several proposed laws require clear and conspicuous disclosure when a consumer is interacting with AI, not a human. This aims to increase transparency and consumer trust in AI workflow automation that directly interacts with the public.

- Insurance & Healthcare: Regulations for AI in claims assessment, fraud detection, policy pricing, and healthcare services went into effect on January 1, 2026, in certain states. These sector-specific rules address the unique risks associated with AI in sensitive industries.
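The disparate impact analysis mentioned for AEDPs can be illustrated with a small calculation. The text above does not say which statistical test Colorado's framework requires, so this sketch assumes the four-fifths rule of thumb from the U.S. EEOC Uniform Guidelines: a group is flagged when its selection rate is below 80% of the highest group's rate. The group names and counts are made up.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to selected / applicants; input values are (selected, applicants)."""
    return {g: sel / total for g, (sel, total) in outcomes.items() if total > 0}


def impact_ratios(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Ratio of each group's selection rate to the highest group's rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values(), default=0.0)
    if best == 0:
        # Nobody was selected in any group, so there is no disparity to measure.
        return {g: 1.0 for g in rates}
    return {g: rate / best for g, rate in rates.items()}


def flagged_groups(outcomes: dict[str, tuple[int, int]], threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the threshold (assumed: four-fifths)."""
    return sorted(g for g, ratio in impact_ratios(outcomes).items() if ratio < threshold)


# Illustrative counts only: (candidates advanced by the tool, total applicants).
screening = {
    "group_a": (48, 120),
    "group_b": (27, 90),
    "group_c": (30, 80),
}

for group, ratio in sorted(impact_ratios(screening).items()):
    print(f"{group}: impact ratio {ratio:.2f}")
print("flagged:", flagged_groups(screening))
```

A flag from a screen like this is a prompt for closer statistical and legal review, not a finding of discrimination; small sample sizes in particular can make the ratio unstable.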

Warning: Compliance Risks for Insurers and Healthcare Providers

The January 1, 2026, effective date for AI regulations in insurance and healthcare in some states means that ADMTs used in these sectors must already be compliant. This includes systems for claims processing, risk assessment, and patient care. Businesses are advised to audit all such tools immediately to ensure ongoing compliance, particularly for models that might be subject to new evaluation standards.

Case Study: A National Retailer Using AI for Hiring and Pricing

A retailer uses an AI tool to screen resumes and a dynamic pricing engine on its website. As of October 2026, its Colorado hiring practices must comply with AEDP rules, including impact assessment and candidate notification. Its pricing engine must be reviewed to ensure it does not use personal data for personalized surveillance pricing, which may be illegal in Maryland and under consideration in California. The legal and IT teams must map tool usage to specific state laws—a national policy is insufficient.
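The mapping exercise in this case study can be sketched as a lookup from (purpose, state) to the rules that may apply. The entries below are transcribed from the state table earlier in this section and are neither complete nor authoritative; `Rule`, `Tool`, and `applicable_rules` are hypothetical names, and the retailer's states of operation are assumed for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    state: str
    purpose: str
    label: str
    status: str


# Transcribed from the state table in this article; illustrative, not exhaustive.
RULES = [
    Rule("CO", "employment", "Colorado AEDP framework", "effective Oct 1, 2026"),
    Rule("MD", "pricing", "Maryland HB 1209 (surveillance pricing)", "signed into law in 2026"),
    Rule("CA", "pricing", "California pricing proposals", "proposed, 2026 session"),
    Rule("CT", "consumer", "Connecticut SB 2", "passed legislature, awaiting signature"),
]


@dataclass(frozen=True)
class Tool:
    name: str
    purpose: str
    states: frozenset[str]


def applicable_rules(tool: Tool) -> list[Rule]:
    """Rules whose purpose matches the tool and whose state the tool is used in."""
    return [r for r in RULES if r.purpose == tool.purpose and r.state in tool.states]


# Assumed footprint for the retailer in the case study.
footprint = frozenset({"CO", "MD", "CA"})
tools = [
    Tool("resume-screener", "employment", footprint),
    Tool("dynamic-pricing-engine", "pricing", footprint),
]

for tool in tools:
    print(tool.name)
    for rule in applicable_rules(tool):
        print(f"  {rule.state}: {rule.label} ({rule.status})")
```

Keeping the status text alongside each rule makes it easier to separate obligations already in force from proposals that only need monitoring.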

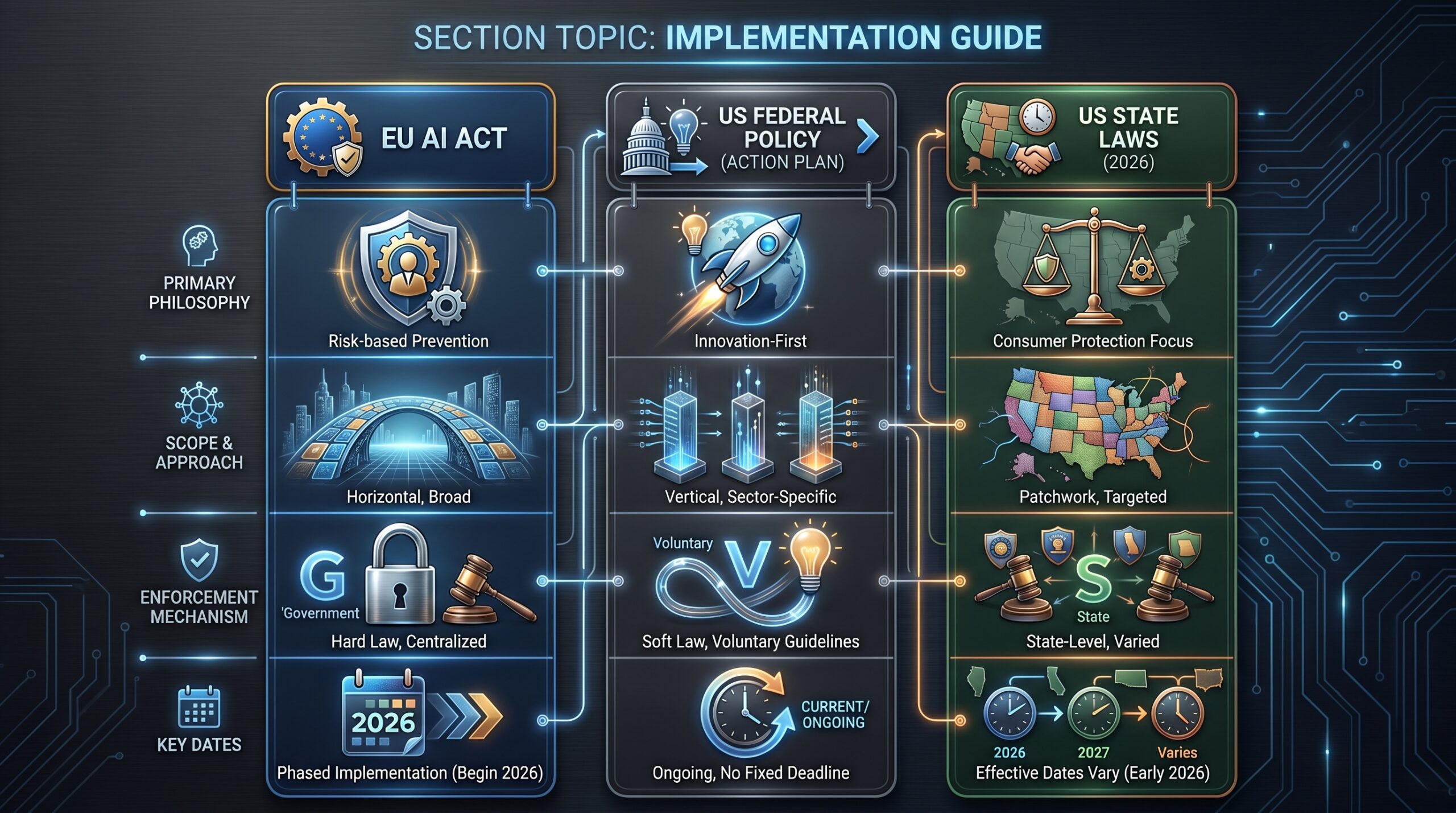

Critical Comparison: EU vs. U.S. AI Regulatory Approaches

Understanding the philosophical and practical differences is key to building a global compliance strategy for AI regulatory policy changes. While the EU favors a centralized, risk-based approach, the U.S. demonstrates a fragmented, application-specific regulatory push, alongside federal policy signals.

| Decision Criteria | EU AI Act | U.S. Federal (Action Plan) | U.S. States (2026 Laws) |
|---|---|---|---|
| Primary Philosophy | Risk-based Prevention & Fundamental Rights | Innovation-First & Competitive Advantage | Consumer Protection & Anti-Discrimination |
| Scope & Approach | Comprehensive, horizontal regulation based on system risk. | Directional policy signals, potential sector-specific EO. | Targeted, vertical laws focused on specific applications (jobs, pricing, health). |
| Enforcement Mechanism | Hard law with CE marking, audits, and high fines (up to 7% global turnover). | Soft law via procurement, funding, and agency guidance. | Hard law with state attorney general enforcement, private rights of action (varies). |
| Key Date | August 2, 2026 (High-Risk Enforcement) | July 2025 (Plan Release), Potential 2026 EO. | Jan 1, 2026 (ADMT), Oct 1, 2026 (CO AEDPs). |
| Best For Compliance If… | You produce or deploy defined high-risk systems in the EU. | You are a federal contractor or innovator seeking R&D alignment. | You use AI in employment, consumer pricing, or specific regulated sectors like insurance. |
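To make the key dates in the table concrete, the short sketch below computes how much runway remains relative to the article's "as of" date of May 10, 2026. The dates come from the table; the labels and the `days_until` helper are illustrative.

```python
from datetime import date

AS_OF = date(2026, 5, 10)  # the "as of" date used in this article

# Key dates taken from the comparison table above.
KEY_DATES = {
    "State ADMT rules (some states)": date(2026, 1, 1),
    "EU AI Act high-risk enforcement": date(2026, 8, 2),
    "Colorado AEDP framework": date(2026, 10, 1),
}


def days_until(deadline: date, today: date = AS_OF) -> int:
    """Positive means days remaining; negative means days since the date passed."""
    return (deadline - today).days


for label, deadline in sorted(KEY_DATES.items(), key=lambda item: item[1]):
    n = days_until(deadline)
    status = f"{n} days remaining" if n >= 0 else f"in effect for {-n} days"
    print(f"{deadline.isoformat()}  {label}: {status}")
```

On these dates, the EU deadline falls 84 days after May 10, 2026, and the Colorado AEDP effective date 144 days after.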

Implementation Checklist: Building Your 2026 AI Compliance Program

Treat this as a project plan. Start yesterday. The complexity of AI regulatory policy changes necessitates a structured and proactive approach, akin to managing other critical business functions.

- Inventory & Map Your AI Systems:
  - Catalog every AI tool, API, and model in use (vendor and in-house).
  - Tag each system by: Jurisdiction(s) of use, Purpose (hiring, pricing, healthcare, etc.), EU AI Act Risk Category (prohibited, high-risk, etc.).
  - Assign a system owner from the business unit.

- Conduct a Gap Analysis Against Key Laws:
  - For EU High-Risk Systems: Measure against the 8 requirements (risk management, data governance, etc.). Identify missing documentation and processes.
  - For U.S. State Laws: Check each AI application against relevant state bills. Does your hiring tool need an impact assessment for Colorado? Does your pricing engine violate Maryland’s new law?

- Establish Governance:
  - Form a cross-functional AI Governance Committee with members from Legal, Compliance, Risk, IT/Engineering, Data Science, and Business Units.
  - Define clear roles: Who approves new AI procurement? Who manages incident response? This structure is vital for handling issues like AI alignment collapse.

- Develop Core Processes:
  - Vendor Management: Update procurement contracts to require AI compliance attestations and audit rights from vendors.
  - Impact Assessment Template: Create a standardized process for conducting and documenting disparate impact assessments (for AEDPs) and fundamental rights impact assessments (for EU).
  - Documentation & Logging: Implement systems to generate and maintain technical documentation and operational logs as required.
  - User Communication: Draft templates for notifying candidates (for AEDPs) and consumers (for interactive AI) about AI use.

- Train Your Workforce:
  - Train developers on “regulation by design” principles.
  - Train HR and managers on lawful AEDP use and human oversight requirements.
  - Train the executive team on liability and reporting obligations.
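For the Documentation & Logging item in the checklist above, one possible shape for an operational log is an append-only file with one JSON record per automated decision. This is a minimal sketch under stated assumptions: the field names, the choice to store a hash of the inputs rather than the inputs themselves, and the file layout are illustrative and are not prescribed by any regulation cited here.

```python
import hashlib
import json
import os
import tempfile
from datetime import datetime, timezone


def build_log_record(system_id, model_version, inputs, output, reviewer=None, overridden=False):
    """Build one traceability record; inputs are hashed so raw personal data is not stored."""
    payload = json.dumps(inputs, sort_keys=True).encode("utf-8")
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        "model_version": model_version,
        "input_sha256": hashlib.sha256(payload).hexdigest(),
        "output": output,
        "human_reviewer": reviewer,  # who reviewed the decision, if anyone
        "overridden": overridden,    # whether the reviewer changed the outcome
    }


def append_record(path, record):
    """Append the record as a single JSON line (append-only log)."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")


# Illustrative usage with made-up identifiers, written to a temporary directory.
log_path = os.path.join(tempfile.mkdtemp(), "decisions.jsonl")
record = build_log_record(
    system_id="resume-screener",
    model_version="2026.05",
    inputs={"candidate_id": "c-1001", "years_experience": 6},
    output="advance_to_interview",
    reviewer="hr-reviewer-17",
)
append_record(log_path, record)
print(f"wrote 1 record to {log_path}")
```

Recording the reviewer and override flag in the same record ties the human oversight requirement to the log, so a later audit can show when a person intervened.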

Risk Mitigation Checklist: Avoiding the Major Pitfalls

Navigating the complex landscape of AI regulatory policy changes requires a strategic approach to risk mitigation. Avoiding common pitfalls can save significant financial and reputational costs.

- Do Not Underestimate the August 2026 Deadline:
  - Risk: Catastrophic. Non-compliant high-risk AI systems cannot be sold or deployed in the EU, leading to market exclusion and heavy fines.
  - Mitigation: Prioritize your EU high-risk system inventory. Begin conformity assessment procedures now. Engage a notified body if required and conduct regular internal audits.

- Avoid a “Big Tech Only” Mindset:
  - Risk: Believing only AI giants are regulated. Laws target users in insurance, healthcare, hiring, and retail. Many businesses, regardless of size, use AI in these regulated sectors.
  - Mitigation: Assume your industry is in scope. Proactively apply the inventory and gap analysis to all business units, even if you are not a primary AI developer.

- Prepare for Contested and Dynamic Regulations:
  - Risk: Colorado’s law being put on hold shows the political and legal volatility. A law you prepared for may change, or new ones may emerge, such as those related to AI-powered threats to financial stability.
  - Mitigation: Monitor legal challenges. Build flexible compliance frameworks that can adapt to new requirements or stays. Subscribe to a legal tracker like Troutman Privacy or IAPP.

- Don’t Silo Responsibility in Legal or IT:
  - Risk: Ineffective, checkbox compliance that fails under scrutiny. AI compliance is an enterprise-wide issue.
  - Mitigation: Implement the cross-functional governance model outlined above. Compliance must be an operational, business-led function that integrates legal and technical expertise.

- Don’t Neglect Provenance and Watermarking:
  - Risk: Future laws (like California SB 942) may require AI-generated content disclosure. Being unprepared means costly retrofitting of systems and could lead to non-compliance.
  - Mitigation: Evaluate tools for embedding provenance information (e.g., C2PA standards) into AI-generated outputs now. This proactive step helps your AI-generated content meet future transparency requirements.

AI Regulatory Risk Mitigation Checklist

- Urgent EU Deadline Prep: Confirm systems, initiate conformity, engage notified bodies.

- Broad Scope Awareness: Recognize AI regulation affects all industries, not just big tech.

- Dynamic Monitoring: Track legal challenges and emerging regulations; build flexible frameworks.

- Cross-Functional Ownership: Distribute compliance responsibility beyond legal/IT.

- Provenance & Watermarking: Implement content disclosure standards proactively.

The Expanding Regulatory Universe: DMA and Sector-Specific Rules

AI regulatory policy changes are not happening in a vacuum. Existing frameworks are expanding and new sector-specific rules are emerging, creating an increasingly complex regulatory ecosystem. Businesses need to understand how these interconnected regulations impact their AI operations.

- EU Digital Markets Act (DMA): The upcoming review will explicitly expand its scope to cover new technologies like AI. This means “gatekeeper” platforms will face new obligations regarding how they integrate and offer AI tools to users and business customers, potentially affecting choice and interoperability. This could impact how foundational models, such as those discussed in benchmarks for agentic AI progress, are deployed.

- Sector-Specific Rules: The insurance and healthcare regulations effective January 1, 2026, are prime examples. They often amend existing state insurance codes or healthcare statutes to include AI-specific requirements for fairness, transparency, and human review, rather than creating brand-new AI laws.

FAQ

Q: Our company is based in the U.S. and doesn’t sell in the EU. Do we need to care about the EU AI Act?

A: Yes, if any of your AI systems are used by your subsidiaries, clients, or distributors within the EU. The AI Act applies to providers (those who develop and market the system) and deployers (those who use it) within the EU. If a U.S.-based SaaS company’s AI tool is used by an EU company for recruiting (a high-risk use), the U.S. provider has obligations. Proximity or direct sales are not the sole determinants of applicability.

Q: What is the single most urgent thing to do before August 2026?

A: Complete your AI system inventory and risk categorization. You cannot assess gaps, allocate resources, or start conformity assessments until you know exactly what AI you have and whether the EU classifies it as high-risk. This is a foundational data exercise that most companies have not done rigorously. Failing at this initial step cascades into broader non-compliance.

Q: Colorado’s AI Act is on hold. Should we stop our compliance work for it?

A: Absolutely not. The litigation may delay enforcement, but the law’s obligations represent a clear regulatory direction. Other states are proposing similar rules. Furthermore, Colorado’s separate AEDP framework for employment AI is not on hold and takes effect October 1, 2026. Compliance work for these laws is prudent risk management and prepares you for similar legislation elsewhere.

Q: How does America’s AI Action Plan actually affect my business if it’s not a law?

A: It affects you in three key ways: 1) Procurement: If you are a federal contractor, you will see AI ethics and security requirements in future RFPs, which can be critical for securing government contracts. 2) Investment & R&D: Federal grants and research priorities will align with the Plan’s goals, shaping the ecosystem and directing where public and private capital flows in AI innovation. 3) Export Controls: The Plan guides the Commerce Department on balancing innovation with security, potentially impacting your ability to sell or share advanced AI models internationally. This policy framework indicates the government’s long-term vision for U.S. AI investment and development.

Q: What are ‘nudifier’ applications and why are they banned?

A: The EU AI Act explicitly prohibits AI systems that create or manipulate “realistic” nude or sexually explicit images of individuals without their consent (“nudifiers”). This ban, along with the prohibition on AI generating child sexual abuse material, shows regulators drawing clear, enforceable ethical lines against non-consensual intimate imagery and child exploitation, moving beyond voluntary ethical codes.

What to Do Next: Your 90-Day Action Plan

Days 1-30: Foundation & Assessment

- Convene the AI Governance Committee. Hold a kickoff meeting with key stakeholders from legal, compliance, IT, data science, HR, and marketing. Establish clear lines of communication and responsibility.

- Launch the AI System Inventory. Use a centralized spreadsheet or dedicated tool. Document system name, vendor, purpose, data inputs, jurisdictions, and owner. This comprehensive list is the backbone of your compliance strategy.

- Categorize for EU AI Act Risk. Tag each system as prohibited, high-risk, limited-risk, or minimal risk based on the Act’s Annexes. This prioritization step is crucial for allocating compliance resources effectively.

- Subscribe to a Regulatory Tracker. Ensure you have a dedicated source for state and federal legislative updates. This could include legal news alerts or specialized compliance platforms for AI regulatory policy changes.
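The inventory and categorization steps above can start as a typed record exported to a spreadsheet-friendly CSV. The sketch below uses the fields named in the inventory step (system name, vendor, purpose, data inputs, jurisdictions, owner) plus the four risk tiers; the `UNCLASSIFIED` default, the class names, and the sample rows are assumptions added for illustration.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from enum import Enum


class RiskCategory(str, Enum):
    PROHIBITED = "prohibited"
    HIGH = "high-risk"
    LIMITED = "limited-risk"
    MINIMAL = "minimal-risk"
    UNCLASSIFIED = "unclassified"  # assumed default until someone reviews the system


@dataclass
class InventoryEntry:
    system_name: str
    vendor: str
    purpose: str
    data_inputs: str
    jurisdictions: str  # semicolon-separated, e.g. "EU;US-CO"
    owner: str
    risk_category: RiskCategory = RiskCategory.UNCLASSIFIED


def to_csv(entries: list[InventoryEntry]) -> str:
    """Serialize the inventory to CSV text with one column per field."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(InventoryEntry)])
    writer.writeheader()
    for entry in entries:
        row = asdict(entry)
        row["risk_category"] = entry.risk_category.value
        writer.writerow(row)
    return buf.getvalue()


def needs_attention(entries: list[InventoryEntry]) -> list[str]:
    """Systems that are unclassified, high-risk, or prohibited."""
    urgent = {RiskCategory.UNCLASSIFIED, RiskCategory.HIGH, RiskCategory.PROHIBITED}
    return [e.system_name for e in entries if e.risk_category in urgent]


# Made-up sample rows.
inventory = [
    InventoryEntry("resume-screener", "ExampleVendor", "hiring", "CVs", "EU;US-CO", "HR", RiskCategory.HIGH),
    InventoryEntry("support-chatbot", "in-house", "customer service", "chat text", "US-MD", "Support", RiskCategory.LIMITED),
    InventoryEntry("pricing-engine", "in-house", "pricing", "browsing history", "US-MD;US-CA", "E-commerce"),
]

print(to_csv(inventory))
print("needs attention:", needs_attention(inventory))
```

Defaulting new entries to `unclassified` keeps unreviewed systems visible in the attention list instead of letting them pass as minimal risk.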

Days 31-60: Gap Analysis & Planning

- Perform Detailed Gap Analyses. For high-risk EU systems, audit against the 8 requirements. For U.S. operations, check against Colorado AEDP rules and relevant state pricing/consumer laws. Document all discrepancies.

- Prioritize Remediation Projects. Rank gaps by risk (likelihood of use in a regulated jurisdiction) and effort. Create project charters for the top 3-5 most critical items, outlining scope, resources, and timelines.

- Engage with Vendors. Contact your AI software vendors. Demand their compliance roadmap for the EU AI Act and key state laws. Review contract amendments to ensure vendor indemnification and compliance guarantees.
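The prioritization step above can be sketched as a simple sort. The text says to rank gaps by risk and effort but does not give a formula, so this example assumes one rule: highest risk first, with lower effort breaking ties. The 1 to 5 scales and the sample gaps are made up.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    description: str
    risk: int    # 1 (low) to 5 (high): likelihood of use in a regulated jurisdiction
    effort: int  # 1 (small) to 5 (large): estimated remediation effort


def prioritize(gaps: list[Gap], top_n: int = 5) -> list[Gap]:
    """Highest risk first; among equal risk, the cheaper fix comes first (assumed rule)."""
    ranked = sorted(gaps, key=lambda g: (-g.risk, g.effort))
    return ranked[:top_n]


# Illustrative backlog with made-up scores.
backlog = [
    Gap("No technical documentation for EU recruiting tool", risk=5, effort=3),
    Gap("No candidate notification template for Colorado", risk=4, effort=1),
    Gap("Vendor contracts lack audit rights", risk=3, effort=2),
    Gap("Pricing engine inputs not reviewed for Maryland", risk=4, effort=3),
    Gap("No role-specific training plan for HR", risk=2, effort=2),
    Gap("Chatbot logs not retained", risk=3, effort=4),
]

for rank, gap in enumerate(prioritize(backlog, top_n=3), start=1):
    print(f"{rank}. {gap.description} (risk {gap.risk}, effort {gap.effort})")
```

Other weightings, such as a risk-to-effort ratio, are equally defensible; what matters is that the committee agrees on the rule before scoring, so the ranking is not argued item by item.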

Days 61-90: Process Implementation

- Draft Key Documents. Create templates for: AI Impact Assessments, Vendor Compliance Questionnaires, User Notification Language (for candidates/consumers). Standardized documents streamline future compliance efforts.

- Begin a Pilot Conformity Assessment. Select one high-risk EU system and walk through the full conformity assessment process to identify internal process bottlenecks and refine your approach. This hands-on experience is invaluable.

- Schedule Mandatory Training. Develop and schedule role-specific training for developers, HR professionals, and business unit leaders on the new regulatory obligations and internal processes. This ensures organization-wide awareness and capability.

AI regulation in 2026 is operational reality. The deadlines are not hypothetical. The laws are not tentative. The time for strategic planning has passed; the time for execution is now. Treat your AI compliance program with the same rigor as your cybersecurity or financial controls—because the regulators and courts certainly will.