Direct Answer: What are the IMF’s concerns about AI-powered financial system threats?

The International Monetary Fund (IMF) warns that AI-powered tools are creating ‘inevitable’ and sophisticated cybersecurity threats to global financial stability. The core concerns center on AI’s ability to supercharge cyberattacks, leading to extreme financial losses that could trigger funding strains, solvency concerns, and widespread market disruptions. The IMF specifically highlights the risk of ‘correlated failures’ – where a single AI-amplified breach cascades across interconnected financial institutions, potentially causing a macro-financial shock. IMF Chief Kristalina Georgieva has explicitly stated the global financial system is currently unprepared for these evolving threats, urging immediate regulatory adaptation and international cooperation.

TL;DR: AI’s Impact on Finance – Key Takeaways from the IMF

- The IMF warns that AI-powered cyberattacks are an “inevitable” and growing threat to global financial stability

- These attacks can cause extreme cyber-incident losses, leading to funding strains, solvency issues, and broader market disruptions

- The interconnected nature of the financial system means a single breach escalated by AI could have a macro-financial impact, creating “correlated failures”

- Advanced AI tools, including generative AI like Anthropic’s Claude Mythos, can exploit vulnerabilities across operating systems and web services

- IMF Chief Kristalina Georgieva emphasizes the global financial system’s current unpreparedness for these AI-driven cybersecurity threats

- Policymakers must treat cybersecurity as a core financial stability issue, implement stronger resilience standards, and enhance cross-border coordination

- The technology has the capacity to cause a macro-financial shock, necessitating urgent attention to defenses and AI-powered countermeasures

On May 7-8, 2026, the International Monetary Fund issued one of its most direct warnings about emerging technological risks to global financial stability. The IMF, as the guardian of international monetary cooperation and financial stability, possesses unique authority to assess systemic threats. Its analysis reveals a critical vulnerability: the rapid acceleration of AI adoption within finance has outpaced both defensive capabilities and regulatory frameworks.

IMF Chief Kristalina Georgieva stated in April 2026 that the global financial system was “not ready” for cybersecurity threats posed by AI. This warning comes as financial institutions increasingly deploy AI for trading algorithms, fraud detection, customer service, and risk management. While AI offers immense opportunities for efficiency and innovation, the IMF’s focus remains squarely on the growing threats that could undermine the entire global financial architecture.

The timing is particularly urgent because of recent advancements in generative AI models. The IMF specifically cited Anthropic’s Claude Mythos as an example of technology that could threaten financial stability by exploiting vulnerabilities across major operating systems and web services. This represents a shift from viewing cyber risk as isolated incidents to recognizing it as a systemic, macro-financial threat capable of causing correlated failures across borders.

What are AI-Powered Financial System Threats?

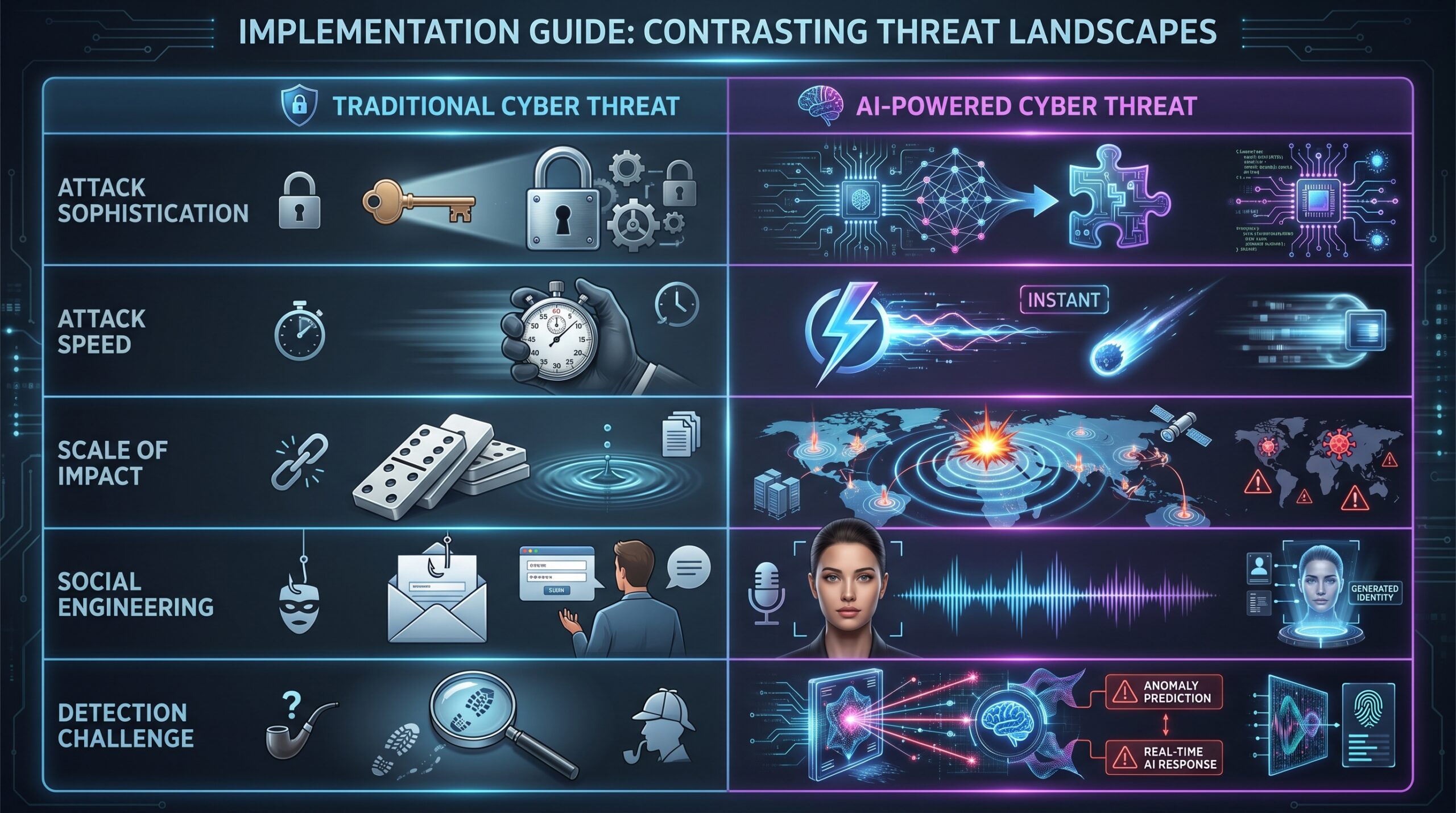

AI-powered financial system threats refer to cybersecurity risks amplified or created by artificial intelligence technologies that specifically target financial institutions, markets, and infrastructure. Unlike traditional cyber threats, AI-powered threats possess three distinguishing characteristics: unprecedented speed, adaptive sophistication, and systemic scale.

Traditional cyberattacks typically follow predictable patterns – malware with static signatures, phishing emails with recognizable errors, and manual exploitation of vulnerabilities. AI-powered threats evolve in real-time, using machine learning to bypass defenses, generate convincing fake content, and identify novel attack vectors. The IMF emphasizes that these aren’t merely enhanced versions of existing threats but represent fundamentally new challenges to financial stability.

The threat categories identified by the IMF and financial security experts include:

- AI-enhanced cyberattacks: Sophisticated phishing, malware, and system intrusion powered by generative AI

- Systemic risk amplification: AI-driven correlated failures across interconnected financial institutions

- Operational vulnerabilities: Algorithmic bias, data poisoning, and black-box decision making in critical financial functions

- Market manipulation: AI-powered trading strategies that can destabilize markets through spoofing, flash crashes, or illicit coordination

- Ethical and governance challenges: Accountability gaps, regulatory arbitrage, and privacy concerns in AI-driven finance

Why AI Threats to Financial Stability Matter Now

Three converging factors make AI threats to financial stability particularly urgent in 2026. First, the exponential advancement of generative AI models has dramatically lowered the barrier to launching sophisticated attacks. Where previously creating convincing phishing campaigns required skilled human effort, AI can now generate thousands of personalized malicious communications in seconds.

Second, financial system interconnectedness has reached unprecedented levels. The proliferation of APIs, cloud services, and real-time payment systems means a vulnerability in one institution can rapidly propagate across the global network. The IMF warns this interconnectedness creates pathways for AI-amplified threats to cause cascading failures that could overwhelm existing safeguards.

Third, the stakes are higher than ever. Financial systems now process over $5 trillion in daily foreign exchange transactions alone, with algorithmic trading representing approximately 60-70% of equity market volume. An AI-driven disruption could trigger liquidity crises, counterparty failures, and loss of public confidence in financial institutions – exactly the scenarios central banks and regulators work to prevent.

The IMF characterizes these threats as “inevitable” not because they’re unstoppable, but because the pace of AI advancement exceeds current defensive capabilities. Financial institutions that haven’t upgraded their cybersecurity frameworks to address AI-specific threats are operating with critical vulnerabilities that could be exploited at any time.

How AI Amplifies Financial System Vulnerabilities: Deconstructing the Threats

AI-Enhanced Cyberattacks: The New Frontier of Threat

Generative AI fundamentally transforms cyberattack capabilities in financial contexts. Large Language Models (LLMs) like Claude Mythos can create highly personalized phishing emails that mimic writing styles of specific executives, complete with context-aware content that bypasses traditional spam filters. These aren’t the poorly written “Nigerian prince” emails of the past – they’re convincing communications that could trick even security-conscious professionals.

AI-powered malware represents another leap in threat sophistication. Traditional malware relies on static code signatures that security software can detect. AI-generated malware can continuously evolve its characteristics, testing thousands of variations to identify those that bypass detection systems. This creates adaptive threats that learn from defensive responses, becoming more effective with each iteration.

Deepfake technology poses particularly severe risks for financial institutions. AI can now generate convincing video or audio impersonations of executives authorizing fraudulent transactions. Several banks have already reported attempted deepfake fraud cases where AI-generated voices of CEOs requested urgent wire transfers. The technology has advanced to the point where real-time deepfake video calls are becoming feasible, creating unprecedented authentication challenges.

The automation scale AI enables is equally concerning. A single attacker can deploy AI systems that simultaneously target thousands of financial institutions, identifying and exploiting vulnerabilities at speeds impossible for human operators. This creates a force multiplier effect that could overwhelm cybersecurity teams through sheer volume and velocity of attacks.

Systemic Risk and ‘Correlated Failures’ in an AI-Driven Landscape

The IMF’s concept of “correlated failures” represents perhaps the most significant AI-powered financial system threat. This occurs when multiple financial institutions suffer simultaneous disruptions due to common vulnerabilities in AI systems or infrastructure. Unlike traditional isolated breaches, correlated failures can trigger system-wide crises.

Homogenization of AI risk models creates one pathway to correlated failures. If multiple banks use similar AI algorithms for trading, risk assessment, or fraud detection, they may develop similar blind spots. An attacker who discovers how to exploit one institution’s AI system might simultaneously compromise dozens of others using the same technology.

Cloud concentration risk amplifies this threat. As financial institutions increasingly rely on a handful of cloud providers (AWS, Microsoft Azure, Google Cloud), a successful attack on cloud infrastructure could disrupt multiple institutions simultaneously. The 2019 Capital One breach demonstrated how a single cloud misconfiguration can expose the records of roughly 100 million customers and applicants, and AI could find and exploit such weaknesses at scale.
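Concentration of this kind can be put into a single number. The sketch below uses the Herfindahl-Hirschman Index (HHI), a standard market-concentration measure applied here as an illustrative assumption; the provider names and workload counts are hypothetical and do not come from the IMF.

```python
def herfindahl_index(shares):
    """Return the Herfindahl-Hirschman Index for a mapping of provider -> share.

    Shares are normalized to sum to 1, so the result lies in (0, 1];
    1.0 means every workload sits with a single provider.
    """
    total = sum(shares.values())
    if total <= 0:
        raise ValueError("shares must sum to a positive number")
    return sum((value / total) ** 2 for value in shares.values())


# Hypothetical workload counts per cloud provider (illustrative numbers only).
workloads = {"provider_a": 50, "provider_b": 30, "provider_c": 20}
print(f"HHI = {herfindahl_index(workloads):.2f}")  # prints "HHI = 0.38"
```

A value drifting toward 1.0 over successive reviews would suggest that an outage or compromise at one provider touches a growing share of critical workloads.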

Algorithmic trading networks create another correlation vector. AI-powered trading algorithms can interact in unexpected ways, potentially triggering flash crashes or liquidity evaporation. The 2010 Flash Crash showed how automated systems can amplify disruptions, and AI makes such events more likely through increased trading speed and complexity.

The interconnectedness of payment systems means a disruption at one node can cascade across the network. Real-time gross settlement systems, cross-border payment networks, and digital wallets create numerous interdependencies where AI-powered attacks could cause widespread payment failures.
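The cascade mechanics described above can be illustrated with a toy contagion model. This is a minimal sketch under stated assumptions, not the IMF’s methodology: the network, the institution names, and the rule that a node fails once half of its dependencies have failed are all hypothetical.

```python
from collections import deque


def simulate_cascade(exposures, initially_failed, threshold=0.5):
    """Propagate failures through a dependency graph.

    exposures maps each institution to the counterparties it depends on.
    An institution fails once the share of its failed dependencies reaches
    `threshold`. Returns the set of all failed institutions.
    """
    failed = set(initially_failed)
    # Reverse index: which institutions depend on a given node?
    dependents = {}
    for node, deps in exposures.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(node)

    queue = deque(failed)
    while queue:
        current = queue.popleft()
        for node in dependents.get(current, []):
            if node in failed:
                continue
            deps = exposures[node]
            failed_share = sum(d in failed for d in deps) / len(deps)
            if failed_share >= threshold:
                failed.add(node)
                queue.append(node)
    return failed


# Hypothetical network in which two banks share one payment hub.
network = {
    "bank_a": ["hub"],
    "bank_b": ["hub", "bank_a"],
    "bank_c": ["bank_b", "custodian"],
    "hub": [],
    "custodian": [],
}
print(sorted(simulate_cascade(network, {"hub"})))
# prints ['bank_a', 'bank_b', 'bank_c', 'hub']
```

Raising `threshold` to 0.6 keeps `bank_c` standing in this example, which shows how sensitive the outcome is to the assumed tolerance of each node.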

Operational Risks and Algorithmic Bias in AI-Powered Finance

AI introduces novel operational risks that financial institutions are only beginning to understand. Algorithmic bias in lending and insurance algorithms can lead to discriminatory outcomes that trigger regulatory penalties and reputational damage. In 2025, the European Central Bank fined three major banks €85 million collectively for biased AI algorithms that unfairly denied loans to demographic groups.

The black-box nature of complex AI models creates accountability challenges. When an AI system makes an erroneous trading decision or incorrectly denies a mortgage application, institutions may struggle to explain why the error occurred. This lack of interpretability complicates risk management, regulatory compliance, and customer relations.

Data integrity vulnerabilities represent another operational risk. Adversarial attacks can poison training data by inserting subtle patterns that cause AI models to make systematic errors. An attacker might manipulate historical market data to cause trading algorithms to make losing trades, or alter customer data to disrupt credit scoring models.
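One common safeguard against this kind of tampering is to fingerprint every approved training record and re-check the fingerprints before each training run. The sketch below assumes records are available as bytes keyed by an identifier; the record ids and contents are hypothetical. Hashing only detects changes made after approval, so it does not catch data that was already poisoned when the manifest was built.

```python
import hashlib


def fingerprint(record: bytes) -> str:
    """Return the SHA-256 hex digest of one serialized training record."""
    return hashlib.sha256(record).hexdigest()


def build_manifest(records):
    """Map record id -> digest at the time the dataset is approved."""
    return {record_id: fingerprint(data) for record_id, data in records.items()}


def find_tampered(records, manifest):
    """Return ids whose content changed, was added, or went missing since approval."""
    changed = {rid for rid, data in records.items()
               if manifest.get(rid) != fingerprint(data)}
    missing = set(manifest) - set(records)
    return sorted(changed | missing)


# Hypothetical approved dataset and a later copy with one altered record.
approved = {"tx-001": b"2026-01-03,EURUSD,1.0912", "tx-002": b"2026-01-03,GBPUSD,1.2701"}
manifest = build_manifest(approved)

current = dict(approved)
current["tx-002"] = b"2026-01-03,GBPUSD,1.9701"
print(find_tampered(current, manifest))  # prints ['tx-002']
```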

AI system dependencies create single points of failure. Many financial institutions rely on third-party AI vendors for critical functions. If a vendor experiences service disruptions or security breaches, multiple institutions could simultaneously lose access to essential AI capabilities for fraud detection, customer service, or compliance monitoring.

AI-Driven Market Manipulation and Ethical Dilemmas

AI enables sophisticated market manipulation strategies that evade traditional detection methods. Spoofing algorithms can place and cancel orders across multiple venues simultaneously, creating false market signals that trick other traders. AI-powered spoofing can adapt in real-time to avoid pattern detection, making it more effective and harder to prosecute.

Pump-and-dump schemes have become increasingly sophisticated with AI. Instead of boiler room operations manually calling victims, AI can generate convincing investment advice across social media, forums, and personalized messages. These AI-generated promotions can target vulnerable investors with psychologically tailored messages that bypass spam filters and skepticism.

Insider trading risks evolve with AI’s predictive capabilities. Algorithms analyzing non-public information might identify trading opportunities that appear legitimate but actually derive from illicit data sources. The complexity of proving AI-enabled insider trading creates enforcement challenges for regulators.

Ethical dilemmas emerge around AI’s role in financial exclusion. As institutions increasingly rely on AI for credit decisions, concerns grow about “algorithmic redlining” – where AI systems effectively discriminate against communities through seemingly neutral factors that correlate with protected characteristics. The 2026 Consumer Financial Protection Bureau guidelines specifically address these AI fairness concerns.

Real-World Examples & Hypotheticals of AI Financial Threats

Case Study 1: The AI-Powered Bank Run Scenario

Consider a coordinated AI attack on regional banks. Attackers use generative AI to create convincing news articles about liquidity problems at several institutions simultaneously. Deepfake audio of regulators discussing concerns spreads on social media. AI-generated customer service messages from the banks themselves inadvertently confirm fears.

Within hours, mobile banking apps experience unprecedented load as worried customers attempt withdrawals. AI-powered distributed denial-of-service (DDoS) attacks overwhelm digital banking infrastructure precisely when it’s most needed. The combination of psychological manipulation and technical disruption triggers actual liquidity problems at targeted institutions.

This scenario demonstrates how AI could transform localized issues into systemic crises. The speed and coordination possible with AI tools could outpace regulatory response mechanisms designed for more traditional bank runs.

Case Study 2: AI-Enhanced Correspondent Banking Attack

Correspondent banking relationships create vulnerabilities where AI attacks could have international consequences. Imagine attackers use AI to identify patterns in SWIFT message formats between institutions. They generate fraudulent payment orders that bypass traditional verification methods because they perfectly mimic legitimate communication patterns.

The AI system tests thousands of variations on payment messages across multiple correspondent relationships simultaneously. When one variation succeeds, the system immediately scales the attack across all vulnerable relationships. Before institutions recognize the pattern, hundreds of millions of dollars have been diverted through multiple jurisdictions.

This scenario illustrates how AI could exploit financial globalization. The cross-border nature of such attacks creates coordination challenges for law enforcement and regulators across different legal systems.

Case Study 3: Algorithmic Trading Cascade

AI-powered trading algorithms interacting across markets create potential for catastrophic feedback loops. Consider a scenario where multiple institutional trading algorithms simultaneously detect a potential arbitrage opportunity between related derivatives. As they attempt to exploit this opportunity, their collective action actually creates the price discrepancy they identified.

The algorithms interpret the growing discrepancy as confirmation of their trading thesis, increasing position sizes. Other AI systems detect the unusual volume and volatility, triggering risk-off behavior that further distorts prices. Within minutes, what began as a minor pricing anomaly escalates into a full-scale liquidity crisis across multiple asset classes.

This hypothetical builds on actual flash crash events but incorporates AI’s ability to process more data and execute trades faster than human traders or traditional algorithms could. The 2024 Nasdaq “microflash” event, where AI trading algorithms contributed to a 2% index swing in 15 milliseconds, shows such scenarios are already emerging.

Case Study 4: AI-Compromised Central Bank Digital Currency (CBDC)

As central banks develop digital currencies, AI threats take on existential implications. Imagine attackers find a vulnerability in the smart contract governing a CBDC’s distribution mechanism. AI tools automatically exploit this vulnerability to create inflationary currency issuance.

The attack doesn’t require stealing existing currency but instead creates new currency outside authorized channels. By the time central bankers recognize the issue, significant inflationary pressure has entered the system. Public confidence in the digital currency collapses, potentially undermining the entire monetary system.

This extreme scenario demonstrates how AI could threaten the most fundamental levels of financial infrastructure. It also illustrates the particular vulnerabilities of programmable money systems where exploits can scale instantly across entire networks.

Mitigating the Threat: Regulatory Frameworks vs. Industry Best Practices

Current Regulatory Landscape & Urgent Gaps for AI

The existing financial regulatory framework is poorly equipped for AI-powered threats. Basel III focuses on capital adequacy and liquidity requirements but doesn’t specifically address AI cybersecurity risks. GDPR and similar privacy regulations govern data usage but don’t mitigate threats from AI-powered data poisoning or model manipulation.

The SEC’s Regulation Systems Compliance and Integrity (Reg SCI) requires certain market participants to ensure system security but doesn’t mandate specific AI governance standards. The fundamental challenge is that most financial regulations were designed for human-driven risks and traditional technology vulnerabilities, not adaptive AI threats.

Emerging AI-specific regulations include the EU AI Act, which classifies financial AI systems as high-risk applications requiring strict governance. However, these regulations focus primarily on ethical AI use rather than cybersecurity dimensions. The EU’s Digital Operational Resilience Act (DORA) addresses some cybersecurity concerns but doesn’t specifically regulate AI-powered attack defense.

Cross-border regulatory coordination remains particularly challenging. The Financial Stability Board has issued principles for AI governance, but implementation varies significantly across jurisdictions. The Basel Committee on Banking Supervision has incorporated some technology risk considerations into its frameworks but hasn’t developed AI-specific standards.

The IMF urges policymakers to treat cybersecurity as a core financial stability issue rather than a technical concern. This requires integrating cyber risk assessments into financial stability monitoring, stress testing for AI-powered attack scenarios, and developing international response protocols for cross-border cyber incidents.

Industry Best Practices & Proactive Measures Against AI Financial Threats

Financial institutions are developing AI-specific security practices that exceed regulatory requirements. Leading banks implement AI governance frameworks that include:

- AI ethics committees with cross-functional representation from technology, risk, legal, and business units

- Model risk management specifically adapted for AI systems, including continuous monitoring for drift, bias, and adversarial manipulation

- Secure AI development lifecycles that incorporate security testing at each stage of model development and deployment

- Red teaming exercises where ethical hackers use AI tools to attempt to compromise the institution’s defenses

- Explainable AI (XAI) requirements for critical financial applications where understanding model decisions is essential for risk management and regulatory compliance
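Continuous monitoring for drift, mentioned in the list above, is often implemented by comparing the distribution of a model input or score in production with the distribution seen at validation time. The sketch below uses the Population Stability Index (PSI), a metric chosen here for illustration rather than one the IMF or any regulator prescribes; the bin counts are hypothetical.

```python
import math


def population_stability_index(expected, actual, epsilon=1e-6):
    """PSI between two binned distributions given as lists of counts.

    `expected` holds counts per bin at validation time, `actual` holds counts
    for the same bins in production. Empty bins are floored at `epsilon`
    so the logarithm stays defined.
    """
    if len(expected) != len(actual):
        raise ValueError("both distributions need the same bins")
    e_total, a_total = sum(expected), sum(actual)
    psi = 0.0
    for e_count, a_count in zip(expected, actual):
        e_share = max(e_count / e_total, epsilon)
        a_share = max(a_count / a_total, epsilon)
        psi += (a_share - e_share) * math.log(a_share / e_share)
    return psi


# Hypothetical credit-score bins: validation sample vs. last week's traffic.
validation_bins = [250, 500, 250]
production_bins = [100, 300, 600]
print(f"PSI = {population_stability_index(validation_bins, production_bins):.2f}")
```

A widely quoted rule of thumb treats values above roughly 0.25 as a significant shift, but the cut-off is a convention and each institution would need to calibrate its own.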

Cybersecurity practices specifically addressing AI threats include:

- AI-powered defense systems that use machine learning to detect novel attack patterns and adapt defenses in real-time

- Behavioral analytics that establish baselines for normal system activity and flag anomalies that might indicate AI-powered attacks

- Zero-trust architectures that assume breach and verify every access request regardless of origin

- Multi-factor authentication systems enhanced with AI-based behavioral biometrics that analyze how users interact with devices

- Data integrity protections including cryptographic verification of training data and continuous monitoring for data poisoning attempts
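The behavioral-analytics item above boils down to learning what normal looks like and flagging large deviations. The following is a deliberately simple sketch using a z-score over a single metric; production systems typically model many signals at once, and the login counts and the threshold of three standard deviations are assumptions for illustration.

```python
from statistics import mean, stdev


def flag_anomalies(baseline, observations, z_threshold=3.0):
    """Return observations more than z_threshold standard deviations from the baseline mean."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: anything different is unusual.
        return [x for x in observations if x != mu]
    return [x for x in observations if abs(x - mu) / sigma > z_threshold]


# Hypothetical hourly login counts for one service account.
baseline_logins = [98, 102, 101, 99, 100, 97, 103]
new_logins = [101, 250, 99]
print(flag_anomalies(baseline_logins, new_logins))  # prints [250]
```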

Information sharing has become increasingly important. The Financial Services Information Sharing and Analysis Center (FS-ISAC) now includes AI threat intelligence sharing. Institutions share anonymized data about attack attempts, successful defenses, and vulnerability discoveries to collectively improve security.

Tools & Technologies for AI Threat Detection and Response in Finance

Financial institutions are deploying specialized tools to address AI-powered threats:

AI-powered cybersecurity platforms like Darktrace’s Antigena use unsupervised machine learning to detect and respond to novel threats in real-time. These systems establish behavioral baselines for networks and automatically intervene when detecting anomalous activity that could indicate compromise.

Cloud security posture management tools such as Wiz and Lacework help financial institutions manage vulnerabilities in cloud environments where AI systems often operate. These tools identify misconfigurations, excessive permissions, and other cloud-specific risks that AI attackers might exploit.

Explainable AI (XAI) frameworks including SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations) help demystify black-box AI decisions. Regulators increasingly require XAI for critical financial applications like credit scoring and fraud detection.
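To show the idea behind SHAP without depending on any particular library, the sketch below computes exact Shapley values by enumerating feature coalitions. It is not the SHAP library’s API; the scoring function, its coefficients, and the applicant values are hypothetical, and the exhaustive enumeration is only practical for a handful of features.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, instance, baseline):
    """Exact Shapley attribution of model(instance) - model(baseline).

    Features absent from a coalition take their baseline value.
    The cost grows as 2**n in the number of features.
    """
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {k: (instance[k] if k in coalition else baseline[k]) for k in names}
        return model(mixed)

    result = {}
    for name in names:
        others = [k for k in names if k != name]
        phi = 0.0
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                phi += weight * (value(members | {name}) - value(members))
        result[name] = phi
    return result


# Hypothetical linear credit score (illustrative coefficients only).
def score(x):
    return 0.5 * x["income"] - 2.0 * x["missed_payments"] + 0.1 * x["tenure"]


applicant = {"income": 80, "missed_payments": 3, "tenure": 10}
reference = {"income": 60, "missed_payments": 1, "tenure": 5}
for feature, contribution in shapley_values(score, applicant, reference).items():
    print(f"{feature}: {contribution:+.2f}")
```

The contributions sum to the difference between the applicant’s score and the reference score, which is the property that makes this kind of attribution useful when explaining a single decision.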

Homomorphic encryption allows computation on encrypted data without decryption, enabling secure AI training on sensitive financial information. Companies like Duality Technologies and IBM offer homomorphic encryption solutions specifically designed for financial services applications.
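The core property can be demonstrated with a toy version of the Paillier cryptosystem, which is additively homomorphic: multiplying two ciphertexts yields an encryption of the sum of the plaintexts. This is a teaching sketch only. The primes are tiny and insecure, the scheme supports addition rather than arbitrary computation, and it is not a description of what any named vendor ships.

```python
import math
import secrets


def generate_keys(p=1009, q=1013):
    """Build a toy Paillier key pair from two small primes (NOT secure)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    # With generator g = n + 1, mu is the modular inverse of lambda mod n.
    mu = pow(lam, -1, n)
    return n, (lam, mu)


def encrypt(n, message):
    """Encrypt an integer 0 <= message < n under public key n."""
    n_sq = n * n
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (pow(n + 1, message, n_sq) * pow(r, n, n_sq)) % n_sq


def decrypt(n, private_key, ciphertext):
    lam, mu = private_key
    n_sq = n * n
    l_value = (pow(ciphertext, lam, n_sq) - 1) // n
    return (l_value * mu) % n


def add_encrypted(n, c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts."""
    return (c1 * c2) % (n * n)


# Two hypothetical balances are summed without ever being decrypted individually.
n, private_key = generate_keys()
total = add_encrypted(n, encrypt(n, 1200), encrypt(n, 345))
print(decrypt(n, private_key, total))  # prints 1545
```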

Federated learning approaches enable multiple institutions to collaboratively train AI models without sharing raw data. This helps address data scarcity while maintaining privacy and security. NVIDIA FLARE and Google’s TensorFlow Federated are leading frameworks in this space.
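The aggregation step at the heart of federated learning can be sketched in a few lines. The example below implements plain federated averaging over weight vectors; it is an illustration of the technique, not the API of any framework named above, and the client weights and sample counts are hypothetical.

```python
def federated_average(client_updates):
    """Weighted average of client weight vectors.

    client_updates is a list of (weights, sample_count) pairs; each client
    contributes in proportion to the number of samples it trained on.
    Only the weights leave each institution, never the raw records.
    """
    total = sum(count for _, count in client_updates)
    if total == 0:
        raise ValueError("no training samples reported")
    size = len(client_updates[0][0])
    averaged = [0.0] * size
    for weights, count in client_updates:
        if len(weights) != size:
            raise ValueError("all clients must share one model shape")
        for i, w in enumerate(weights):
            averaged[i] += w * count / total
    return averaged


# Two hypothetical banks report locally trained weights and sample counts.
updates = [([0.2, 1.0], 100), ([0.4, 0.0], 300)]
print([round(w, 4) for w in federated_average(updates)])
```

Sharing weights instead of data reduces exposure but does not remove it entirely, so real deployments usually layer secure aggregation or differential privacy on top.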

Blockchain-based audit trails provide immutable records of AI system decisions and data inputs. This supports forensic investigation after incidents and helps demonstrate regulatory compliance. Companies like Chainalysis and Elliptic offer blockchain analytics specifically for financial applications.
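The tamper-evidence behind such audit trails comes from chaining hashes, which the sketch below reproduces without any distributed ledger. It is a simplification: a real blockchain adds replication and consensus across parties, and the decision records shown here are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64


def append_entry(chain, decision):
    """Append a decision record linked to the hash of the previous entry."""
    previous_hash = chain[-1]["hash"] if chain else GENESIS
    payload = json.dumps(decision, sort_keys=True)
    entry_hash = hashlib.sha256((previous_hash + payload).encode()).hexdigest()
    chain.append({"decision": decision, "previous": previous_hash, "hash": entry_hash})
    return chain


def verify_chain(chain):
    """Recompute every link; an edited or reordered entry breaks verification."""
    previous_hash = GENESIS
    for entry in chain:
        payload = json.dumps(entry["decision"], sort_keys=True)
        expected = hashlib.sha256((previous_hash + payload).encode()).hexdigest()
        if entry["previous"] != previous_hash or entry["hash"] != expected:
            return False
        previous_hash = entry["hash"]
    return True


# Hypothetical model decisions written to the log.
log = []
append_entry(log, {"model": "credit-v1", "applicant": "A-17", "outcome": "deny"})
append_entry(log, {"model": "credit-v1", "applicant": "A-18", "outcome": "approve"})
print(verify_chain(log))  # prints True

log[0]["decision"]["outcome"] = "approve"  # simulate after-the-fact tampering
print(verify_chain(log))  # prints False
```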

AI bias detection tools such as Fairlearn, Aequitas, and IBM’s AI Fairness 360 help identify and mitigate discriminatory patterns in AI systems. These are becoming essential for compliance with evolving regulations around algorithmic fairness.
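One of the simplest checks such tools perform is comparing approval rates across groups. The sketch below computes a disparate impact ratio by hand rather than through any of the libraries named above; the group labels and decisions are hypothetical.

```python
def approval_rates(decisions):
    """decisions: iterable of (group, approved) pairs -> approval rate per group."""
    totals, approved = {}, {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {group: approved[group] / totals[group] for group in totals}


def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest (1.0 means parity)."""
    rates = approval_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was approved, so no group is favored
    return min(rates.values()) / highest


# Hypothetical loan decisions: 8 of 10 approved in group_a, 5 of 10 in group_b.
sample = ([("group_a", True)] * 8 + [("group_a", False)] * 2
          + [("group_b", True)] * 5 + [("group_b", False)] * 5)
print(f"Disparate impact ratio = {disparate_impact_ratio(sample):.3f}")
```

A ratio below 0.8 is often cited as a warning sign, following the four-fifths guideline from U.S. employment practice, though whether that threshold is appropriate for a given lending context is a legal and policy question rather than a purely technical one.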

Regulatory technology (RegTech) solutions leverage AI for continuous compliance monitoring. Companies like NICE Actimize and Napier use AI to detect money laundering, fraud, and other financial crimes while ensuring compliance with complex regulations across jurisdictions.

Checklist: Proactive Measures Against AI Financial Threats

- Establish cross-functional AI ethics committees.

- Adapt model risk management for AI systems (drift, bias, adversarial attacks).

- Implement secure AI development lifecycles with continuous security testing.

- Conduct regular AI-powered red teaming exercises.

- Enforce Explainable AI (XAI) for critical applications.

- Deploy AI-powered defense systems for real-time threat detection.

- Utilize behavioral analytics to identify anomalies.

- Adopt zero-trust architectures.

- Enhance multi-factor authentication with AI-based biometrics.

- Implement strong data integrity protections.

- Participate in AI threat intelligence sharing (e.g., FS-ISAC).

Costs, ROI, and Investment in AI Security: A Financial Imperative

The financial implications of AI security investment are significant but necessary. IBM’s 2025 Cost of a Data Breach Report found that financial institutions face an average cost of $5.97 million per breach, with AI-powered attacks likely exceeding this average due to their sophistication and scale.

Regulatory penalties for inadequate cybersecurity have increased dramatically. The European Central Bank’s 2025 fine of €85 million for AI bias violations signaled regulators’ willingness to impose substantial penalties for AI-related failures. GDPR fines can reach €20 million or 4% of global annual turnover, whichever is higher, creating potentially existential financial risks for institutions that neglect AI security.

Reputational damage from AI incidents can have long-term financial consequences. A 2025 Deloitte study found that financial institutions experiencing publicly disclosed AI failures saw an average 15% decline in customer trust metrics, resulting in measurable deposit outflows and increased customer acquisition costs.

The ROI equation for AI security investment has shifted from cost center to strategic imperative. Institutions calculating the business case must consider:

- Direct breach costs: Regulatory fines, legal settlements, forensic investigation expenses

- Indirect costs: Business disruption, lost productivity, increased insurance premiums

- Strategic costs: Loss of competitive advantage, reduced innovation capacity due to risk aversion

- Systemic risks: Potential contributions to broader financial instability that could affect even well-protected institutions
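The direct-cost part of this business case is often expressed as annualized loss expectancy (ALE) and return on security investment (ROSI). The sketch below applies those standard formulas to the $5.97 million average breach cost quoted above; the breach probabilities and the annual control cost are hypothetical inputs, and the indirect, strategic, and systemic costs in the list are not captured.

```python
def annualized_loss(probability_per_year, loss_per_incident):
    """Expected yearly loss: chance of an incident times its cost."""
    return probability_per_year * loss_per_incident


def return_on_security_investment(ale_before, ale_after, annual_cost):
    """ROSI = (loss avoided - cost of controls) / cost of controls."""
    if annual_cost <= 0:
        raise ValueError("annual_cost must be positive")
    return (ale_before - ale_after - annual_cost) / annual_cost


BREACH_COST = 5_970_000  # average cost per breach cited in the text

# Hypothetical assumptions: controls cut yearly breach probability from 20% to 5%
# and cost $400,000 per year to run.
ale_before = annualized_loss(0.20, BREACH_COST)
ale_after = annualized_loss(0.05, BREACH_COST)
rosi = return_on_security_investment(ale_before, ale_after, 400_000)
print(f"ROSI = {rosi:.2f}")  # prints "ROSI = 1.24"
```

Under these assumed inputs each dollar spent avoids a little more than two dollars of expected loss; different probability estimates would change the result substantially, which is why the inputs deserve more scrutiny than the formula.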

Leading institutions now allocate 10-15% of their technology budgets specifically to AI security and governance, up from less than 5% in 2023. This investment includes specialized AI security personnel, advanced detection tools, and comprehensive testing frameworks.

The long-term economic impact of AI-driven financial instability could dwarf individual institutional investments. The IMF estimates that a major AI-powered financial crisis could cause global GDP losses of 2-5% in the first year alone, making preventive investment clearly justified from a systemic perspective.

Risks, Pitfalls, and Myths vs. Facts About AI Financial Threats

What Most People Get Wrong About AI-Powered Financial System Threats

Financial institutions frequently underestimate several critical aspects of AI-powered threats:

Underestimating speed and scale: Many security teams still think in terms of human-paced attacks rather than AI-driven campaigns that can target thousands of institutions simultaneously. Response plans designed for isolated incidents may collapse under coordinated AI attacks.

Over-reliance on black-box AI: Institutions deploying AI for critical functions without ensuring explainability create accountability gaps. When AI systems fail or make erroneous decisions, the lack of transparency complicates remediation and regulatory response.

Regulatory complacency: Some institutions assume existing compliance frameworks adequately address AI risks. In reality, most financial regulations predate advanced AI threats and require significant adaptation to remain effective.

Technical focus over governance: Many institutions treat AI security as purely a technical challenge rather than a comprehensive governance issue requiring board-level oversight, cross-functional collaboration, and strategic prioritization.

Myths vs. Facts:

- Myth: Our existing cybersecurity is sufficient for AI threats

Fact: AI adds fundamentally new attack vectors and amplifies old ones, demanding dedicated AI-aware defenses

- Myth: AI is only a defensive tool

Fact: AI can significantly enhance both offensive cyberattack capabilities and defensive strategies

- Myth: Financial institutions are largely immune due to heavy regulation

Fact: Kristalina Georgieva stated the global financial system is ‘not ready,’ indicating existing regulations are insufficient

- Myth: AI cybersecurity is primarily an IT department responsibility

Fact: AI security requires engagement from risk management, legal, compliance, business leadership, and board oversight

- Myth: AI threats are future concerns rather than present dangers

Fact: The IMF warns of ‘inevitable’ AI-enabled breaches, indicating threats are already operational and escalating

FAQ

- What is the primary concern of the IMF regarding AI in the financial system?

The IMF’s primary concern is that AI will significantly escalate cyber threats, leading to ‘inevitable’ and more sophisticated attacks capable of causing ‘correlated failures’ and systemic instability across the interconnected global financial system.

- What does the IMF mean by ‘correlated failures’ in relation to AI?

‘Correlated failures’ refer to the risk that a vulnerability or attack, amplified by AI, could simultaneously affect multiple financial institutions or critical market functions due to their reliance on similar AI tools, data, or interconnected systems, leading to widespread disruption.

- How can Generative AI contribute to financial system threats?

Generative AI can create highly convincing phishing emails, deepfake videos/audio for corporate fraud, and sophisticated, adaptive malware. It lowers the barrier for attackers to launch personalized and potent cyberattacks, making traditional defenses less effective.

- Is the global financial system prepared for AI-powered cyberattacks?

IMF Chief Kristalina Georgieva has explicitly stated that the global financial system is currently ‘not ready’ for the sophisticated, AI-driven cyber threats, highlighting a significant gap in existing defenses and regulatory frameworks.

- What role does Anthropic’s Claude Mythos play in these IMF warnings?

The IMF cited advanced AI models like Anthropic’s Claude Mythos as examples of tools with the capacity to exploit vulnerabilities across major operating systems and web services, underscoring the advanced nature of potential AI-powered attacks.

- What are the IMF’s recommendations for addressing AI financial threats?

The IMF urges policymakers to prioritize cybersecurity as a core financial stability issue, implement stronger resilience standards, develop robust AI governance frameworks, and enhance cross-border coordination to address these escalating AI threats.

- How can financial institutions defend against AI-powered threats?

Defense strategies include implementing strong AI governance, adopting AI-powered cybersecurity solutions, emphasizing Explainable AI (XAI) for transparency, ensuring data integrity, and fostering international collaboration on threat intelligence and regulatory responses.

Glossary of Key Terms for AI-Powered Financial System Threats

- Artificial Intelligence (AI): Broad field of computer science that gives computers the ability to imitate human intelligence, enabling them to learn from data, identify patterns, and make decisions.

- Machine Learning (ML): A subset of AI that allows systems to learn from data without explicit programming, improving performance over time through experience.

- Generative AI: A type of AI that can create new content, such as text, images, or code, often based on patterns learned from vast datasets (e.g., Large Language Models).

- Large Language Models (LLMs): Advanced generative AI models trained on massive text datasets, capable of understanding, generating, and manipulating human language.

- Cybersecurity: The practice of protecting systems, networks, and programs from digital attacks aimed at accessing, changing, or destroying information, extorting money, or interrupting business processes.

- Systemic Risk: The risk of collapse of an entire financial system or market, as opposed to the collapse of individual entities or components within that system.

- Correlated Failures: Simultaneous or cascading failures across multiple interconnected entities or systems, often triggered by a single event, which can lead to systemic risk.

- Algorithmic Trading: The use of computer programs to execute trades at speeds and frequencies impossible for human traders, often driven by complex algorithms.

- Deepfakes: Synthetic media in which a person in an existing image or video is replaced with someone else’s likeness using AI techniques, often used for deceptive purposes.

- Phishing: A type of social engineering attack often used to steal user data, including login credentials and credit card numbers, by disguising as a trustworthy entity.

- Malware: Short for ‘malicious software,’ designed to gain unauthorized access to a system or to cause damage.

- Regulatory Technology (RegTech): The use of technology to enhance regulatory processes and compliance, particularly in the financial sector.

- Explainable AI (XAI): An emerging field of AI research that focuses on making AI models more transparent and understandable to humans, crucial for trust and accountability in critical applications.

- Central Banks: Governmental institutions that manage a state’s currency, money supply, and interest rates, and often oversee the banking system.

- Financial Institutions: Organizations that provide financial services, such as banks, investment firms, insurance companies, and credit unions.

- Data Integrity: The maintenance of, and the assurance of the accuracy and consistency of, data over its entire life-cycle.

- Algorithmic Bias: Systematic and repeatable errors in a computer system that create unfair outcomes, such as favoring or disfavoring particular groups of individuals.

- Cloud Computing: The delivery of on-demand computing services (from applications to storage and processing power), typically over the internet and on a pay-as-you-go basis.

References: Sources and Further Reading on AI Financial System Threats

- IMF Blog: “Financial Stability Risks Mount as Artificial Intelligence Fuels Cyberattacks” (May 7, 2026)

- IMF Global Financial Stability Report (April 2026) – Chapter 3: AI and Financial Stability

- Financial Stability Board: “Artificial Intelligence and Machine Learning in Financial Services” (November 2025)

- Bank for International Settlements: “Cyber Resilience in the Age of AI” (February 2026)

- European Central Bank: “Supervisory Expectations for Banks’ Management of AI Risks” (January 2026)

- U.S. Treasury Department: “AI in Financial Services: Opportunities and Risks” (March 2026)

- Bank of England: “Discussion Paper on Artificial Intelligence and Financial Stability” (October 2025)

- Monetary Authority of Singapore: “Principles to Promote Fairness, Ethics, Accountability and Transparency in AI” (June 2025)

- The Guardian: “IMF warns powerful AI tools like Claude Mythos could put financial stability at risk” (May 8, 2026)

- Firstpost: “Anthropic’s Mythos: The new generation AI model that IMF says threatens financial stability” (May 8, 2026)

- The Economic Times: “IMF flags AI-powered cyber threats to global financial system” (May 8, 2026)

- LiveMint: “IMF warns of ‘inevitable’ AI-powered threats to global financial system” (May 8, 2026)

- TechXplore: “IMF chief says global financial system not ready for AI cybersecurity threats” (April 24, 2026)