OpenAI has evolved from a builder of AI tools to the primary architect of post-specialist artificial general intelligence (AGI). Its focus in 2026 is on orchestrator models that autonomously manage workflows across domains, transforming how businesses and individuals leverage AI.

Current as of: 2026-05-14. FrontierWisdom checked recent web sources and official vendor pages for recency-sensitive claims in this article.

TL;DR

- OpenAI’s mission is crystallizing around incremental AGI via orchestrator models, not just better chatbots.

- The business model prioritizes ecosystem integration through APIs, making OpenAI the intelligence layer for applications.

- Key risks include strategic dependence, cost drift, and integration complexity, not rogue AI.

- Actionable steps: Skill up on agent design, prototype high-leverage workflows, and prioritize human-in-the-loop reliability.

Key takeaways

- OpenAI’s orchestrator model represents the practical architecture of early-stage AGI, managing workflows autonomously.

- Competitive advantage now hinges on seamlessly integrating AI agents into core operations, not just having an AI strategy.

- Building with OpenAI’s API requires focusing on agent design, tool integration, and reliability over pure capability.

- Career leverage comes from translating business problems into agentic workflows and bridging strategy with technology.

- Vendor lock-in and cost management are critical considerations when adopting OpenAI’s ecosystem.

What OpenAI Is Today

OpenAI operates as a public benefit corporation with a mandate to build artificial general intelligence (AGI) and ensure it benefits humanity. Its structure balances a for-profit arm funding massive compute needs with a nonprofit board retaining ultimate control over safety and mission alignment.

Why this legal detail matters: Unlike a conventional subsidiary of a tech giant, OpenAI’s public benefit structure obliges it to weigh its stated mission alongside shareholder returns, which is meant to leave room for long-term, high-risk AGI research.

Why This Matters for You in 2026

OpenAI’s progress is now a structural force, not just a productivity tool. For knowledge workers, it automates complex workflows like synthesizing data from multiple sources. For developers, it enables building agentic applications that decompose high-level goals into reasoned steps. For leaders, competitive advantage shifts to integrating autonomous AI agents into core operations.

As discussed in our coverage of the enterprise AI landscape, seamless integration is becoming the differentiator.

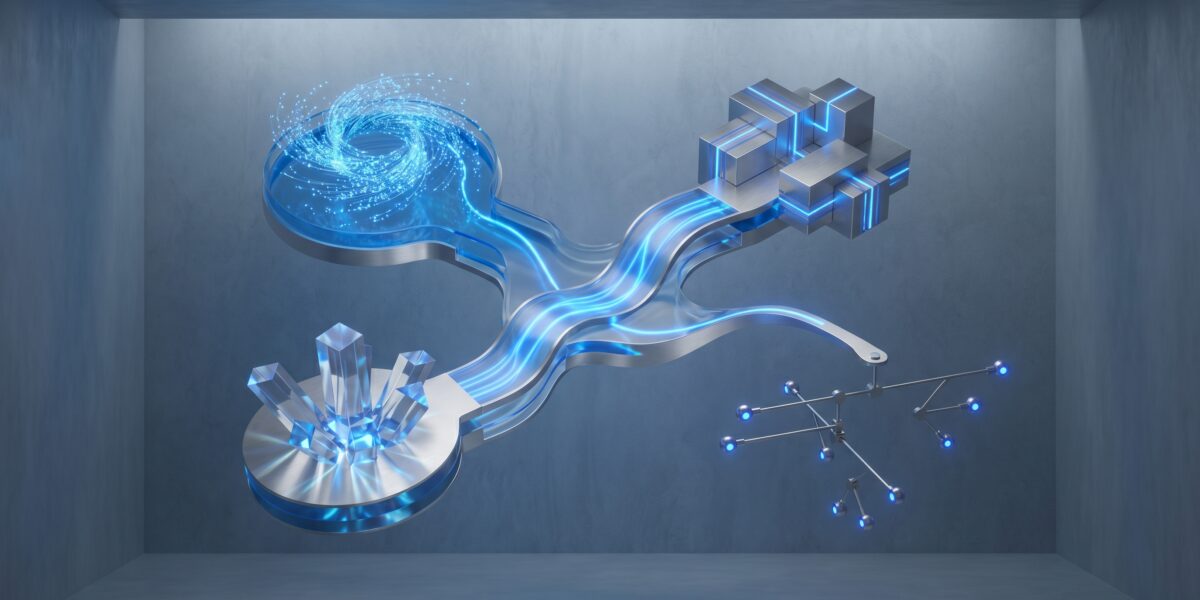

How OpenAI’s Technology Actually Works: The Orchestrator Model

The orchestrator model replaces “prompt in, text out” with a four-stage loop:

- Input and Planning: The core model breaks down requests into subtasks.

- Tool Use & Execution: It calls predefined tools like web search, code interpreters, or internal APIs.

- Reasoning and Synthesis: Results are evaluated and woven together with logical reasoning.

- Output and Action: Final outputs can be actions like updated files, scheduled events, or deployed code.

This shift means the AI manages processes rather than just generating outputs.
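The four stages above can be sketched as a small pipeline. This is a minimal illustration, not OpenAI’s implementation: the tool functions, the `Subtask` type, and the hard-coded plan are all hypothetical stand-ins so the example runs offline. In a real system the planning and synthesis steps would be model calls.

```python
from dataclasses import dataclass
from typing import Callable


# Hypothetical tools: each takes a string argument and returns a string.
def web_search(query: str) -> str:
    return f"[stub search results for: {query}]"


def run_code(source: str) -> str:
    return f"[stub output of: {source}]"


TOOLS: dict[str, Callable[[str], str]] = {
    "web_search": web_search,
    "run_code": run_code,
}


@dataclass
class Subtask:
    tool: str
    argument: str


def plan(request: str) -> list[Subtask]:
    """Stage 1, Input and Planning. Hard-coded here; a model would produce this."""
    return [
        Subtask("web_search", request),
        Subtask("run_code", "summarize(results)"),
    ]


def execute(subtasks: list[Subtask]) -> list[str]:
    """Stage 2, Tool Use and Execution: dispatch each subtask to a registered tool."""
    results = []
    for task in subtasks:
        tool = TOOLS.get(task.tool)
        if tool is None:
            # Record the failure instead of crashing the whole run.
            results.append(f"[unknown tool: {task.tool}]")
            continue
        results.append(tool(task.argument))
    return results


def synthesize(request: str, results: list[str]) -> str:
    """Stage 3, Reasoning and Synthesis: stand-in for a model call over the results."""
    return f"Answer to {request!r} based on {len(results)} tool result(s)."


def act(summary: str) -> dict:
    """Stage 4, Output and Action: describe the action rather than performing it."""
    return {"action": "write_file", "path": "brief.txt", "content": summary}


def orchestrate(request: str) -> dict:
    return act(synthesize(request, execute(plan(request))))
```

Returning a description of the action from `act`, instead of performing it, keeps the final step easy to review or gate before anything changes in a real system.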

Real-World Use Cases: Beyond Chat

- Automated Research Analyst: Agents ingest earnings calls, news, and data to produce synthesized briefs with anomalies flagged.

- Self-Debugging Codebases: Integrated agents examine code, error logs, and documentation to propose and explain fixes.

- Personalized Learning Orchestrator: Agents assess student work, identify gaps, retrieve tailored content, and adjust learning paths.

OpenAI vs. The Landscape: A 2026 Comparison

| Dimension | OpenAI | Anthropic | Google (Gemini Ecosystem) | Meta (Llama Ecosystem) |
|---|---|---|---|---|
| North Star | AGI via Orchestration | Constitutional AI | AI-Powered Google | Open Empowerment |
| Core Advantage | First-mover ecosystem, orchestrator capability | Trust & safety, long-context handling | Vertical integration | Cost, customizability |
| Strategic Posture | Proprietary Ecosystem Leader | Proprietary Premium Alternative | Vertical Integrator | Horizontal Enabler |
| Who It’s For | Builders of complex agentic apps | High-stakes enterprises | Google ecosystem users | Developers needing control |

Implementation Path: How to Start Building with This

- Skill up on agent design with frameworks like LangChain.

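- Get API access and experiment in the Playground. OpenAI has deprecated the Assistants API in favor of the Responses API, so check the current documentation before building on either.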

- Identify high-leverage workflows involving decision-making across systems.

- Build a co-pilot prototype focusing on augmentation, not full automation.

- Iterate on reliability and human-in-the-loop safeguards.
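One way to picture the human-in-the-loop safeguard from the last step is an approval gate in front of risky actions. The sketch below is an assumption-laden illustration: the `ProposedAction` type, the "low"/"high" risk labels, and the `perform` and `approve` callbacks are hypothetical names, not part of any OpenAI API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProposedAction:
    description: str
    risk: str  # "low" or "high"; these labels are an assumption of this sketch


def run_with_approval(
    action: ProposedAction,
    perform: Callable[[ProposedAction], str],
    approve: Callable[[ProposedAction], bool],
) -> str:
    """Perform low-risk actions directly; send everything else to a human reviewer."""
    if action.risk == "low":
        return perform(action)
    if approve(action):
        return perform(action)
    # The reviewer declined, so nothing is executed.
    return f"skipped: {action.description}"
```

In a prototype, `approve` could be as simple as a console prompt or a chat message to the workflow owner. Treating any risk label other than "low" as needing review is a deliberately conservative default.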

Costs, ROI, and Career Leverage

Costs are consumption-based, billed per token. Agentic workloads cost more than single prompts because each run chains several model and tool calls, though per-token prices have been falling. Prototyping may cost $10–100/month; production scaling requires careful budgeting. Measure ROI by how many business processes you shorten or consolidate, not just by hours saved. The most valuable career skill is translating business problems into agentic workflows, bridging strategy and technology.
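A rough budget can be worked out from run volume and token counts. The function below is a back-of-the-envelope sketch; the per-million-token prices in the example are placeholders chosen for illustration, not OpenAI’s actual rates, so substitute figures from the current pricing page.

```python
def monthly_cost_usd(
    runs_per_day: int,
    input_tokens_per_run: int,
    output_tokens_per_run: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
    days: int = 30,
) -> float:
    """Estimate monthly spend for an agent, given per-run token usage and prices."""
    per_run = (
        input_tokens_per_run * usd_per_million_input
        + output_tokens_per_run * usd_per_million_output
    ) / 1_000_000
    return round(per_run * runs_per_day * days, 2)


# Placeholder prices (NOT real rates): $2 per million input tokens, $8 per million output.
estimate = monthly_cost_usd(
    runs_per_day=50,
    input_tokens_per_run=20_000,
    output_tokens_per_run=2_000,
    usd_per_million_input=2.00,
    usd_per_million_output=8.00,
)
print(estimate)  # 84.0 with the placeholder numbers above
```

Agent runs tend to be input-heavy because tool results are fed back into the model, which is why input tokens are modeled separately from output tokens here.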

Risks, Pitfalls, and Myths vs. Facts

Myth: OpenAI’s AGI will be a sudden, conscious superintelligence.

Fact: AGI is emerging as gradually expanding autonomy via orchestrator models.

Pitfalls:

- Underestimating integration work, which can make up the bulk of a project (around 90% of the effort).

- Ignoring cost drift with unsupervised agents.

- Over-automating before proving reliability.

- Vendor lock-in from building core logic within OpenAI’s ecosystem.
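Two of these pitfalls, cost drift and vendor lock-in, can be softened with a thin layer between your core logic and the provider. The sketch below assumes a hypothetical `TextModel` interface and an offline `EchoModel` stand-in; neither is a real SDK class. A real adapter would wrap whichever provider client you use.

```python
from typing import Protocol


class TextModel(Protocol):
    """Hypothetical provider-neutral interface; core logic depends only on this."""

    def complete(self, prompt: str) -> tuple[str, int]:
        """Return (text, tokens_used)."""
        ...


class BudgetExceeded(RuntimeError):
    pass


class BudgetedModel:
    """Wraps any TextModel and refuses further calls once a token budget is spent."""

    def __init__(self, inner: TextModel, max_tokens: int) -> None:
        self.inner = inner
        self.max_tokens = max_tokens
        self.used = 0

    def complete(self, prompt: str) -> tuple[str, int]:
        # Checked before the call, so the final allowed call may overshoot the budget.
        if self.used >= self.max_tokens:
            raise BudgetExceeded(f"token budget of {self.max_tokens} exhausted")
        text, tokens = self.inner.complete(prompt)
        self.used += tokens
        return text, tokens


class EchoModel:
    """Offline stand-in for a real provider adapter; counts words as tokens."""

    def complete(self, prompt: str) -> tuple[str, int]:
        return prompt.upper(), len(prompt.split())
```

Because the agent only sees `TextModel`, swapping providers means writing one new adapter rather than rewriting the workflow, and the budget wrapper applies regardless of which adapter sits underneath.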

For guidance on governance, see our enterprise AI scaling guide.

FAQ

Q: Should I use ChatGPT or the API?

A: ChatGPT is for using AI; the API is for building with AI. Start with the API if you’re a builder.

Q: Is OpenAI’s data safe?

A: OpenAI states that data sent through the API is not used for training by default, but it is still processed on OpenAI’s servers. For sensitive data, consider open-weight models you can host yourself, such as Meta’s Llama.

Q: Will this replace my job?

A: It will rewrite job definitions more than it eliminates jobs outright. Roles built around predictable, multi-step information processing are most at risk.

Actionable Next Steps: What to Do This Week

- Spend an hour in the OpenAI Playground creating an assistant with Code Interpreter to analyze a dummy CSV.

- Map one weekly workflow you handle, noting data sources and tools used.

- Schedule a discussion on building vs. buying AI solutions for that workflow.
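For the first step, you need a dummy CSV to upload. A minimal sketch using only the Python standard library follows; the column names and value ranges are invented for illustration and carry no special meaning.

```python
import csv
import io
import random


def dummy_csv_text(rows: int = 100, seed: int = 0) -> str:
    """Build a small fake sales table as CSV text (header plus `rows` data lines)."""
    rng = random.Random(seed)  # fixed seed so the file is reproducible
    regions = ["north", "south", "east", "west"]
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["order_id", "region", "units", "unit_price"])
    for order_id in range(1, rows + 1):
        writer.writerow([
            order_id,
            rng.choice(regions),
            rng.randint(1, 20),
            round(rng.uniform(5.0, 50.0), 2),
        ])
    return buffer.getvalue()
```

Save the returned text to a file (for example, a hypothetical `dummy_sales.csv`) and upload it, then ask for something checkable, such as total units per region, so you can verify the answer by hand.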

Glossary

- AGI (Artificial General Intelligence): AI system with human-like versatility across tasks.

- Orchestrator Model: Architecture where a planning model delegates subtasks to tools.

- Agent (AI Agent): Autonomous program using AI to perceive, decide, and act.

- Assistants API: OpenAI’s interface for building persistent, tool-using agents, now deprecated in favor of the Responses API.

- Token: Basic unit of text for AI models; roughly ¾ of a word.