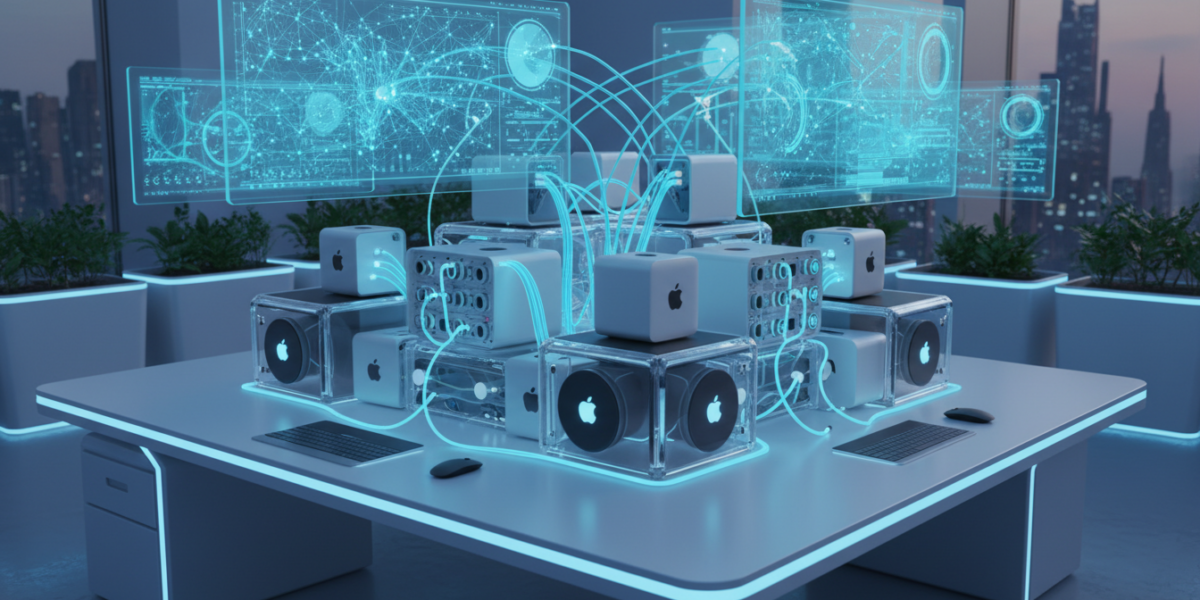

The Exo framework is an open-source tool that pools the memory and compute resources of multiple Apple Macs into a single inference cluster, so that large language models can run locally without cloud infrastructure. It builds on open-weight models and 2026 hardware advances to make AI inference more accessible, reducing costs and keeping data on your own machines.

TL;DR

- Cluster Macs for heavy AI workloads, combining unified memory to run large LLMs locally.

- Eliminate cloud dependency, avoiding API fees and data exposure.

- Leverage 2026 Mac hardware with Thunderbolt 5 and RDMA support for efficient clustering.

- Performance depends on network speed; wired networks are recommended.

- Alpha software but functional for technical users, offering significant cost savings over cloud inference.

Key takeaways

- Exo framework pools Mac resources for local LLM inference, reducing cloud dependency.

- Open-weight models and 2026 hardware make clustered inference practical and cost-effective.

- Network performance is critical; wired connections are essential for optimal results.

- Significant cost savings and enhanced data privacy are primary benefits.

- Alpha stage software requires technical expertise but is functional for real-world use.

What is the Exo Framework?

The Exo framework is an open-source software tool that pools the unified memory and compute resources of multiple Apple Macs into a single, virtualized inference cluster. This allows you to run large language models locally that would otherwise require expensive cloud instances or high-end servers.

Unlike traditional distributed computing, Exo is designed specifically for LLM inference, minimizing latency and maximizing memory-sharing efficiency between devices. It works with open-weight models, meaning you supply the model weights—Exo handles the distribution.

Who this is for: Developers, research teams, startups, and tech-savvy enterprises that want to run LLMs in-house without committing to cloud vendor lock-in or high inference bills.

Why Exo Matters Right Now

Three trends converge to make Exo particularly relevant:

- Open-weight models are now competitive. Models like Llama 3 70B and Command R+ rival closed APIs in quality but require significant memory.

- Mac hardware has evolved. 2026 Mac Studios and Minis feature larger unified memory pools and faster Thunderbolt 5 connections, making clustering viable.

- Cloud costs are accumulating. Teams running frequent or large-batch inference are seeing bills balloon—Exo offers a clear alternative.

This isn’t just a technical novelty. It’s a practical response to the economic and privacy constraints of cloud-based AI.

How Exo Works

Exo treats a group of networked Macs as one logical device. Here’s the flow:

- Resource Pooling: You install Exo on each Mac. The framework aggregates the total unified memory available across all devices.

- Model Loading: When you load a model (e.g., an 80B-parameter model), Exo splits it across the cluster’s combined memory.

- Inference Execution: Inference requests are distributed dynamically. The coordinator node manages requests and assembles outputs.
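The pooling and splitting steps above can be sketched in a few lines. This is a minimal illustration, not Exo's actual scheduler: it assumes the model is split by contiguous layer ranges and that each Mac receives a share proportional to its unified memory. The `Node` class, the device names, and the 80-layer figure are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str          # hypothetical device label
    memory_gb: float   # unified memory this node contributes to the pool


def partition_layers(nodes: list[Node], num_layers: int) -> dict[str, range]:
    """Assign contiguous layer ranges to nodes, proportional to their memory."""
    total = sum(n.memory_gb for n in nodes)
    assignments: dict[str, range] = {}
    start = 0
    cumulative = 0.0
    for i, node in enumerate(nodes):
        cumulative += node.memory_gb
        # The last node takes whatever remains so every layer is assigned once.
        if i == len(nodes) - 1:
            end = num_layers
        else:
            end = round(num_layers * cumulative / total)
        assignments[node.name] = range(start, end)
        start = end
    return assignments


# Assumed example cluster: one 64GB machine and one 32GB machine.
cluster = [Node("studio", 64), Node("mini", 32)]
plan = partition_layers(cluster, num_layers=80)
for name, layers in plan.items():
    print(f"{name}: {len(layers)} layers, starting at layer {layers.start}")
```

Under these assumptions the larger machine ends up holding roughly two thirds of the layers, which mirrors the idea that pooled memory, not any single device, sets the ceiling.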

The main constraint is network speed. Thunderbolt 5 and RDMA help, but your cluster’s throughput still depends heavily on the bandwidth and latency of the links between machines. For best results, connect devices with wired Ethernet or Thunderbolt rather than Wi-Fi.
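To get a feel for why the link matters, here is a rough back-of-the-envelope estimate. It assumes a pipeline-style split in which only one token's activations cross each link per step, with a hidden size of 8192 stored at 2 bytes per value (the Llama 3 70B dimensions). The bandwidth and latency figures are illustrative guesses, not measurements, and Exo's real traffic pattern may differ.

```python
def hop_time_ms(payload_bytes: int, bandwidth_gbps: float, latency_ms: float) -> float:
    """Time for one transfer: fixed link latency plus serialization time."""
    transfer_ms = payload_bytes * 8 / (bandwidth_gbps * 1e9) * 1e3
    return latency_ms + transfer_ms


# Assumed activation payload per token per hop: hidden size 8192 at 2 bytes each.
ACTIVATION_BYTES = 8192 * 2

# Illustrative (bandwidth in Gbps, one-way latency in ms) pairs; all assumptions.
links = {
    "Wi-Fi": (0.5, 3.0),
    "10GbE": (10.0, 0.1),
    "Thunderbolt": (40.0, 0.05),
}

for name, (gbps, latency) in links.items():
    per_hop = hop_time_ms(ACTIVATION_BYTES, gbps, latency)
    print(f"{name}: {per_hop:.3f} ms per hop")
```

With payloads this small, the fixed latency term outweighs the serialization time on every link in the table, which is one reason a low-latency wired connection tends to matter more than headline bandwidth for token-by-token generation.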

What This Means for You

| If You Are | Why You Should Care | One Action to Take This Week |
|---|---|---|
| Developer/Engineer | Prototype and test without cloud spend. | Download Exo alpha and test with two Macs connected via Ethernet. |
| Startup Founder | Cut monthly inference costs significantly while keeping data private. | Estimate your current cloud LLM spending vs. a Mac cluster setup. |
| Researcher/Academic | Experiment with large models in constrained budget environments. | Pool lab Mac resources to run bigger models than any single machine allows. |
| Enterprise Architect | Build on-premise AI solutions that comply with strict data governance. | Pilot Exo for internal chatbots or document analysis in a secure network. |

Real-World Use Cases

- Software teams are using Exo clusters to run local code-generation models without sending proprietary code to third-party APIs.

- Marketing agencies are processing large content batches with Llama 3 70B for ideation and summarization, avoiding per-call fees.

- Researchers in fields with sensitive data (e.g., healthcare) are using Exo to run inference without data leaving their infrastructure.

Exo vs. Other Local Inference Solutions

| Solution | Best For | Limitations |
|---|---|---|
| Exo Framework | Scaling inference across multiple Macs | Network-dependent; alpha software |
| Single Machine (e.g., Mac Studio) | Simplicity, low latency | Limited by max unified memory (e.g., 192GB) |
| Cloud APIs (e.g., OpenAI) | Ease of use, scalability | Recurring costs, data privacy concerns |
| Dedicated Servers (e.g., GPU-based) | Maximum performance | High upfront cost, power consumption, noise |

Exo isn’t for everyone—it requires hardware, networking, and some technical skill. But for those with underutilized Apple hardware, it turns sunk cost into strategic advantage.

How to Get Started with Exo

- Hardware: Use two or more Apple Silicon Macs with sufficient unified memory.

- Network: Connect devices via Thunderbolt 4/5 or 10GbE for the lowest latency.

- Install: Download the alpha release from the official Exo repository.

- Configure: Follow the setup guide to define your cluster.

- Test: Load a mid-sized model (e.g., Llama 3 8B) to validate the cluster before scaling up.
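For the final test step, a small smoke test can confirm the cluster answers a prompt end to end. The sketch below assumes the cluster exposes an OpenAI-style chat completions endpoint over HTTP. The base URL, port, and model identifier are placeholders, not values taken from the Exo documentation, so check the setup guide for the real ones.

```python
import json
import urllib.request

# Assumptions: placeholder address and model name; replace with your cluster's values.
BASE_URL = "http://localhost:8000"
MODEL = "llama-3-8b"


def build_chat_request(prompt: str) -> urllib.request.Request:
    """Build a POST request in the OpenAI-style chat completions format."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{BASE_URL}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def smoke_test(prompt: str = "Reply with the word ok.") -> str:
    """Send one prompt and return the text of the first completion."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Once the cluster is running, calling `smoke_test()` from the coordinator Mac should return a short reply. A timeout or connection error at this stage usually points to a networking or configuration problem rather than a model problem.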

Costs and ROI

A typical Exo cluster built around two Mac Minis (32GB unified memory each) costs ~$3,500 upfront. Compared with cloud inference for a model like Llama 3 70B (which can run $5–$10 per million tokens), the hardware pays for itself after roughly 350 to 700 million tokens, a volume active teams can reach within months. Note that fitting a 70B model into 64GB of pooled memory requires a quantized version of the weights.

This doesn’t include productivity gains from faster iteration (no API rate limits) or risk reduction from data locality.
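The break-even arithmetic is simple enough to check yourself. The sketch below uses the $3,500 hardware figure and the $5 to $10 per million token range quoted above. It ignores electricity, maintenance, and staff time, so treat the result as a lower bound on the real break-even volume.

```python
def break_even_tokens(hardware_cost_usd: float, cloud_price_per_million_usd: float) -> float:
    """Token count at which cumulative cloud spend equals the hardware outlay."""
    return hardware_cost_usd / cloud_price_per_million_usd * 1_000_000


HARDWARE_COST = 3_500  # two Mac Minis, per the estimate in this section

for price in (5, 10):
    tokens = break_even_tokens(HARDWARE_COST, price)
    print(f"${price}/M tokens: break-even at {tokens / 1e6:.0f}M tokens")
```

Swap in your own hardware quote and your actual per-token cloud rate to see where your team lands.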

Risks and Limitations

- Network dependency: If your network drops, your cluster fails.

- Alpha software: Exo is in active development—not yet production-ready.

- Mac-only: You can’t mix in Windows or Linux machines.

- Memory fragmentation: Not all models split perfectly; some performance loss is possible.

Myth vs. Fact

❌ Myth: Exo requires identical Mac models.

✅ Fact: You can mix different M-series Macs, but overall performance will be limited by the slowest device.

FAQ

Can I use Exo with PCs or Linux machines?

No. Exo is built specifically for macOS and the Apple Silicon unified memory architecture.

What’s the largest model I can run?

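The total unified memory across your cluster is the limit. For example, 64GB of pooled memory can hold a 70B model quantized to 4 bits (roughly 35GB of weights), leaving the rest for the KV cache, macOS, and other processes. The same model at 16-bit precision needs about 140GB for weights alone and would not fit.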
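A quick way to sanity-check whether a model fits is to multiply the parameter count by the bytes per weight. The helper below is a rough sketch: it counts weights only and ignores the KV cache, activations, and operating system overhead, so the real requirement is always somewhat higher.

```python
def weights_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate weight storage in GB (1 GB taken as 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


for bits in (16, 8, 4):
    print(f"70B at {bits}-bit: ~{weights_gb(70, bits):.0f} GB of weights")
```

Compare the result against your pooled memory and leave generous headroom before deciding which quantization level to download.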

Is RDMA support necessary?

Not strictly necessary, but Thunderbolt 5 with RDMA support improves throughput significantly.

How does Exo compare to VMware or Kubernetes?

Exo is purpose-built for LLM inference—lighter and more optimized for this task than general virtualization tools.

Glossary

- Unified Memory: Memory architecture in Apple Silicon Macs that allows CPU and GPU to access the same memory pool.

- Open-Weight Models: AI models released with weights available for public use and modification.

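- RDMA (Remote Direct Memory Access): A method for reading or writing memory on a remote machine without involving that machine's CPU in the data path, which reduces latency.

- Thunderbolt 5: The latest Thunderbolt connectivity standard, offering up to 80 Gbps of bidirectional bandwidth (up to 120 Gbps in one direction with Bandwidth Boost) for data transfer between devices.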
