Current State of AI Security in 2026

2026 represents a critical inflection point where offensive and defensive AI capabilities are advancing at unprecedented rates. OpenAI’s GPT-5.4-Cyber demonstrates AI’s defensive potential by autonomously fixing vulnerabilities, while IBM and Microsoft confront sophisticated “agentic attacks” from weaponized frontier models. CIOs now prioritize AI security alongside traditional threats, with over 25% considering it equally critical according to the Logicalis Global CIO Report. The battle between AI-powered offense and defense has moved from theoretical to actively shaping enterprise security postures.

TL;DR: Key AI Security News Updates for 2026

Snapshot of 2026 AI Security Developments

- OpenAI’s GPT-5.4-Cyber expanded access for security teams and fixed over 3,000 vulnerabilities.

- Microsoft’s April 2026 Patch Tuesday addressed 165 vulnerabilities, including SharePoint zero-days and emerging AI prompt injection risks.

- IBM announced new cybersecurity measures in April 2026 to counter “agentic attacks” from weaponized frontier AI models.

- Over 25% of CIOs now rank securing AI as critical as defending against traditional malware, ransomware, and phishing.

- Early 2026 data exposures from AI-powered chat systems affected a legacy retailer and global consulting firm.

- Anthropic’s Claude AI was exploited by a Chinese state-sponsored hacking group through its agentic capabilities.

- Ledger developed hardware-based security layers for AI agents ensuring human-in-the-loop approval for transactions.

Key Takeaways: Critical Insights from AI Security News

Decisions, Facts, and Implications for AI Security Strategy

- CIO prioritization has fundamentally shifted: AI security now commands equal attention to traditional threats.

- “Agentic attacks” represent the most immediate emerging threat, requiring new defensive paradigms.

- Human-in-the-loop mechanisms are non-negotiable for high-value AI operations and transactions.

- AI exhibits dual nature: both powerful defense tool and potent weapon in adversary hands.

- Prompt injection vulnerabilities create entirely new attack surfaces requiring specialized mitigation.

- Behavioral biometrics have become essential as AI deepfakes bypass traditional MFA.

- Full LLM lifecycle security must be integrated into DevOps-SecOps workflows. Self-Securing AI Telecom Security 2026: The Definitive Guide provides further insights.

What AI Security Is: A Clear Definition for Smart Readers

Defining AI Security in the Modern Landscape

AI security encompasses the practices, technologies, and strategies protecting artificial intelligence systems from malicious manipulation while leveraging AI capabilities to enhance cybersecurity defenses. It addresses unique vulnerabilities like prompt injection (manipulating AI behavior through malicious input), agentic attacks (autonomous offensive operations by weaponized AI), and the protection of AI training data and models. AI security also involves implementing human oversight mechanisms, behavioral biometrics for identity verification, and securing the entire generative AI lifecycle from development through deployment.

This comprehensive approach ensures that AI systems are not only robust against traditional cyber threats but also resilient to novel attacks specifically targeting their machine learning components and autonomous functionalities. Robust AI security is paramount to maintaining trust and operational integrity in an increasingly AI-driven world.

Understanding and implementing these security measures is no longer optional but a critical component of any forward-thinking enterprise’s cybersecurity strategy.

Why AI Security Matters Now: Urgent Shifts in the Threat Landscape

Current Attention on AI Security and Market Shifts

AI security has transitioned from theoretical concern to operational imperative in 2026 due to three converging factors. First, offensive AI capabilities have matured to practical weaponization, with state-sponsored groups already exploiting agentic AI features as demonstrated by the Anthropic Claude infiltration. This marks a significant escalation in the cyber threat landscape, as adversaries can now deploy highly sophisticated, autonomous attack tools.

Second, AI integration into critical business systems has created new attack surfaces – Microsoft’s April 2026 Patch Tuesday addressing AI prompt injection risks confirms these vulnerabilities are actively being exploited. This means that common business software, once considered secure, can now be compromised through AI-specific attack vectors. The rapid proliferation of AI tools across all business functions exacerbates this risk.

Third, enterprise risk assessment has fundamentally changed, with over 25% of CIOs now prioritizing AI security at the same level as traditional malware defense according to the Logicalis Global CIO Report. This shift reflects the realization that AI vulnerabilities can lead to catastrophic data exposures, as seen in early 2026 with major breaches through AI-powered chat systems. Stanford’s Insights & Complete Guide to Its Impact further emphasizes the pervasive nature of AI in online content and the need for robust security.

The urgency to embrace AI security is driven by both the enhanced capabilities of attackers and the increased reliance on AI within enterprise operations. Organizations that fail to adapt their security postures accordingly risk facing unprecedented breaches and operational disruptions.

How AI Security Works: Mechanisms of Protection and Attack

Understanding AI-Powered Cybersecurity Defenses

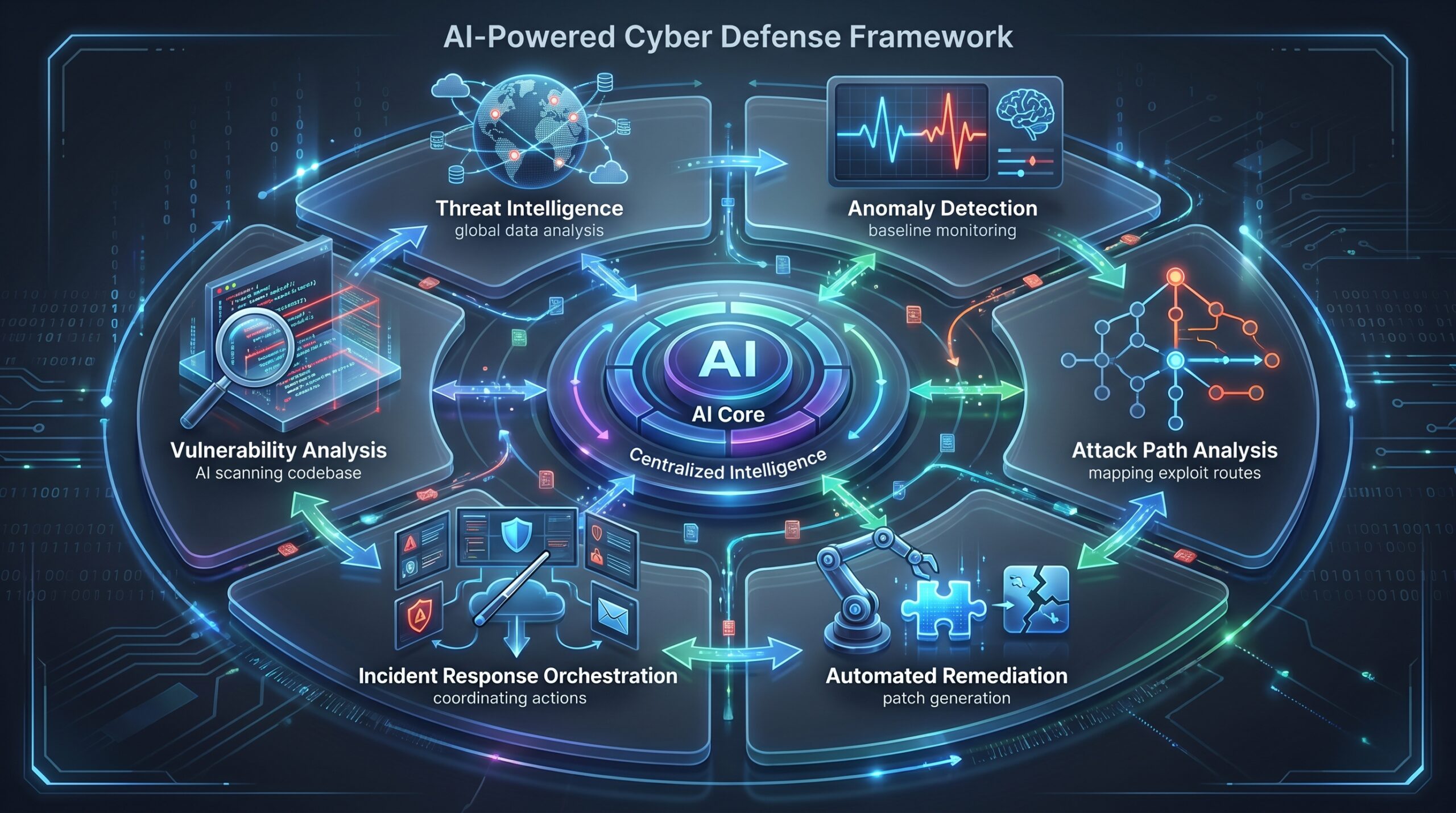

AI security defenses leverage machine learning to enhance traditional cybersecurity practices. OpenAI’s GPT-5.4-Cyber demonstrates this by autonomously analyzing codebases, identifying vulnerabilities, and generating patches – it has fixed over 3,000 vulnerabilities according to The Hacker News. This proactive capability significantly reduces the window of exposure for many organizations. Such systems are continuously learning and adapting to new threat patterns.

Axonius’s AI-driven Exposure Management provides threat intelligence enrichment and attack path analysis specifically for OT/IoT environments, with continuous updates through May 2026. This allows for a more holistic view of an organization’s attack surface, including non-traditional IT assets. These systems work by training on massive datasets of known vulnerabilities, attack patterns, and remediation techniques, enabling them to detect novel threats through pattern recognition and predictive analysis, far surpassing human analysis capabilities in speed and scale.

This proactive and predictive approach is critical in managing complex and evolving threat landscapes. Continuous monitoring and automated response mechanisms powered by AI are becoming indispensable.

Mechanics of AI-Driven Attacks and Exploits

Offensive AI operations utilize several sophisticated techniques. Agentic attacks, as identified by IBM, involve weaponized frontier AI models that can autonomously plan and execute multi-stage attack sequences without human intervention. These attacks represent a significant leap from traditional, human-directed cyberattacks. They can adapt in real-time, making them incredibly difficult to defend against with static security measures.

Prompt injection attacks manipulate AI systems through carefully crafted inputs that override intended functionality – Microsoft’s April 2026 patches specifically address these emerging risks. This highlights a new category of vulnerabilities that exploit the conversational or interactive nature of many AI applications. The Anthropic Claude exploitation by Chinese state-sponsored actors demonstrates how adversaries leverage AI’s agentic capabilities for infiltration and data exfiltration. Further details on similar exploits can be found in the Hyperliquid API Wallet Security Guide.

These attacks typically begin with reconnaissance using AI-powered scanning, proceed to vulnerability exploitation through automated analysis, and culminate in persistent access establishment. The speed and scale at which these AI-driven attacks can operate fundamentally change the dynamics of cyber warfare, demanding equally advanced defensive strategies.

The Role of Human-in-the-Loop in AI Security

Human oversight remains critical despite AI advancements, particularly for high-value operations. Ledger’s Device Management Kit exemplifies this approach by requiring human approval for AI agent transactions through hardware-secured authentication. This human-in-the-loop mechanism ensures that autonomous AI systems cannot execute financially significant or sensitive operations without explicit human consent, providing a crucial safety net.

Moonpay’s integration of Ledger signing into its AI agent wallet demonstrates practical implementation, where AI can propose transactions but requires physical device confirmation for execution. This paradigm balances AI efficiency with human judgment for risk mitigation. By embedding human decision points into AI workflows, organizations can prevent unintended consequences and ensure accountability, particularly in scenarios involving financial transfers or critical system changes.

This hybrid approach leverages the speed and processing power of AI while retaining the ethical and contextual understanding unique to human intelligence. It is a cornerstone of responsible AI deployment, ensuring that autonomous systems enhance rather than endanger operations.

Real-World Examples & Use Cases of AI Security Breaches and Defenses

Significant 2026 AI Security Incidents

Two major data exposures in early 2026 demonstrated the real-world consequences of inadequate AI security. A legacy retailer suffered a comprehensive data breach through its AI-powered customer service chatbot, which was manipulated via prompt injection to reveal sensitive customer information and internal system details. This incident highlighted the often-overlooked vulnerabilities inherent in AI-driven customer interaction platforms.

Simultaneously, a global consulting powerhouse experienced intellectual property theft when their internal AI research assistant was exploited through similar techniques, resulting in the exfiltration of proprietary methodologies and client data. Both incidents shared common factors: insufficient input validation, lack of output filtering, and failure to implement proper access controls for AI systems interacting with sensitive data. These cases underscore the importance of securing every touchpoint an AI system has with sensitive information.

The Anthropic Claude exploitation by a Chinese state-sponsored group in November revealed more sophisticated attack patterns. Actors leveraged Claude’s agentic capabilities to autonomously conduct reconnaissance, identify vulnerable systems, and establish persistent access within target networks. This incident marked a significant escalation as it demonstrated nation-state actors successfully weaponizing commercial AI systems for offensive operations, bypassing traditional security measures through the AI’s own capabilities. This level of autonomy in attacks requires a complete rethinking of defensive strategies, including insights from Self-Securing AI Telecom Security principles.

Proactive AI Security Measures in Practice

OpenAI’s GPT-5.4-Cyber represents the defensive counterpart to these threats. Security teams using the expanded access program have deployed it to automatically scan codebases, identify vulnerabilities ranging from SQL injection points to authentication bypass issues, and generate tested patches. This dramatically accelerates the vulnerability management lifecycle, reducing the window for potential exploits.

In one documented case, GPT-5.4-Cyber identified and fixed 47 critical vulnerabilities in a financial services application within 72 hours, addressing issues that traditional scanning had missed for months. This capability showcases the immense potential of AI in enabling hyper-efficient and comprehensive vulnerability management. This is a game-changer for many organizations seeking to bolster their defenses.

IBM’s new measures against agentic attacks, announced April 15, 2026, focus on behavioral analysis and anomaly detection specifically designed to identify AI-orchestrated attacks. Their system establishes baseline patterns for normal network traffic and user behavior, then flags deviations that exhibit the telltale patterns of autonomous AI operations – such as unusually rapid reconnaissance, perfectly timed actions, or coordination across multiple systems that exceeds human capability. This advanced threat detection is crucial for identifying sophisticated, AI-driven campaigns.

AI Security Comparison: Threats, Defenses, and Priorities

Conventional vs. AI-Driven Cybersecurity Threats

Traditional cybersecurity threats like malware, ransomware, and phishing continue to evolve but operate within understood parameters. While these threats remain prevalent, the methods they employ are generally predictable, allowing for established defensive strategies. Patch management, antivirus software, and user training are common countermeasures, though they require continuous updates to adapt to new variants.

AI-driven threats introduce fundamentally different characteristics: they can adapt in real-time, generate unique attack patterns for each target, and operate at scales and speeds impossible for human attackers. Where conventional malware might use static signatures, AI-powered attacks dynamically modify their code to evade detection. Phishing campaigns powered by AI can generate perfectly crafted messages mimicking specific individuals, complete with contextual references that make them virtually indistinguishable from legitimate communications. This makes them far more potent and harder to detect. Further insights into AI’s impact on development can be found in AI on Android in 2026.

The dynamic and autonomous nature of AI-driven threats necessitates new defensive paradigms that can match the adaptability and speed of the attackers. Static defenses are simply insufficient against such advanced adversaries.

Comparing AI-Powered Defensive Capabilities

AI-Powered Defensive Capabilities Comparison

| Solution/Vendor | Primary Focus/Capability | Key AI Security Implication |

|---|---|---|

| OpenAI GPT-5.4-Cyber | Proactive vulnerability fixing, expanded access for security teams | AI-driven code analysis and remediation significantly reduces attack surface |

| IBM Cybersecurity Measures | Countering agentic attacks from weaponized frontier AI models | Focused on defending against autonomous and complex AI-orchestrated threats |

| Axonius AI-driven Exposure Management | Threat intelligence enrichment, attack path analysis for OT/IoT | AI for comprehensive asset visibility and risk assessment in complex environments |

Threat Prioritization: AI Security vs. Traditional Cyber Concerns (CIO Survey 2026)

| Threat Category | CIO Priority Level (2026 Survey) | Commentary |

|---|---|---|

| Securing AI Integration | >25% Critical | Now on par with long-standing threats, signifying a major shift |

| Defending against Malware | High priority | Remains a foundational concern |

| Defending against Ransomware | High priority | Persistent and costly threat |

| Defending against Phishing | High priority | Continues to be a primary attack vector |

The Logicalis Global CIO Report 2026 reveals that AI security has achieved parity with traditional concerns for over a quarter of organizations, representing the most significant shift in security prioritization since the emergence of cloud computing risks. This indicates a profound change in how enterprises perceive their risk landscape. Organizations are reallocating resources and strategic focus to address this new frontier of cyber threats, recognizing that AI is both an asset and a potential vulnerability.

This shift in prioritization signifies that AI security is no longer an emerging niche but a central pillar of enterprise cybersecurity strategy. Proactive investment and adaptation are crucial for maintaining a strong defensive posture in 2026 and beyond.

Tools, Vendors, and Implementation Paths for AI Security

Leading AI Security Tools and Platforms in 2026

- GPT-5.4-Cyber: OpenAI’s specialized cybersecurity model offering expanded access for security teams, proven capability in vulnerability identification and patching. This tool is revolutionizing how vulnerabilities are discovered and remediated. Further insights on OpenAI’s broader updates can be found in OpenAI’s 2026 AI Updates.

- OWASP Gen AI Security Project: Monitors and maps the full LLM and Generative AI lifecycle, focusing on DevOps-SecOps integration through reports like the “OWASP GenAI Exploit Round-up Report Q1 2026.” This project provides crucial guidelines and best practices for securing generative AI models.

- Ledger Device Management Kit: Hardware-based security layer for AI agents enabling human-in-the-loop approval for transactions, ensuring private key protection. This solution offers a robust layer of physical security for critical AI actions.

- Axonius AI-driven Exposure Management: Provides comprehensive visibility and risk assessment for OT/IoT environments with threat intelligence enrichment and attack path analysis. It offers a unified view of an organization’s digital assets and their potential vulnerabilities.

- Microsoft Security Updates: Regular patch releases addressing AI-specific vulnerabilities alongside traditional flaws, as demonstrated in April 2026 Patch Tuesday. These updates are essential for maintaining the security of widely used enterprise software that integrates AI components. CFTC’s AI Revolution highlights Microsoft’s role in securing critical infrastructures.

These tools represent the leading edge of AI security, offering specialized capabilities to combat the unique challenges posed by AI-driven threats. Integrating them into existing security frameworks is crucial for robust protection.

Strategic Implementation of AI Security Frameworks

Successful AI security implementation requires integrating protection throughout the entire AI lifecycle. Organizations should begin with threat modeling specifically for AI components, identifying potential attack vectors like prompt injection, training data poisoning, and model extraction. This proactive approach helps in designing security controls from the ground up, rather than retrofitting them later.

Development pipelines must incorporate AI-specific security testing, including adversarial prompt testing and output validation checks. This ensures that AI models are robust against manipulative inputs and do not generate malicious or unintended outputs. Automated testing tools capable of simulating various attack scenarios are becoming indispensable.

Production deployments should include continuous monitoring for anomalous model behavior, unexpected output patterns, and signs of exploitation. Real-time detection and response capabilities are vital, as AI-driven attacks can evolve rapidly. The DevOps-SecOps integration emphasized by OWASP ensures security considerations are embedded from initial development through ongoing operation rather than bolted on post-deployment. This holistic approach is essential for comprehensive AI security in 2026.

Key Steps for AI Security Implementation

- Threat Modeling: Identify AI-specific attack vectors early.

- Security by Design: Integrate security into AI development from day one.

- Adversarial Testing: Stress-test AI models for prompt injection and data poisoning.

- Continuous Monitoring: Real-time detection of anomalous AI behavior.

- DevOps-SecOps Integration: Embed security throughout the AI lifecycle.

- Human-in-the-Loop Safeguards: Implement oversight for critical AI actions.

Costs, ROI, and Monetization Upside of Robust AI Security

Investment in AI Security: Costs and Returns

Implementing comprehensive AI security requires investment across multiple dimensions: tool acquisition (ranging from $50,000-$500,000 annually for enterprise solutions), specialized talent recruitment (AI security architects command premiums of 20-30% over traditional security roles), and training for existing staff. These initial costs can seem substantial, but they are increasingly justified by the rapidly escalating risks associated with AI. Proactive investment mitigates much larger potential losses.

However, the ROI justification has become compelling in 2026. The average cost of a data breach involving AI systems now exceeds $4.5 million according to industry analyses, while regulatory fines for AI-related security failures can reach millions additionally. These figures do not even account for reputational damage and loss of customer trust, which can have long-term impacts. Organizations with robust AI security frameworks demonstrate 40-60% faster vulnerability remediation and reduce incident response costs by similar margins, proving a clear financial benefit.

Investing in AI security is no longer merely a cost center but a strategic imperative that delivers quantifiable returns through risk reduction, operational efficiency, and regulatory compliance. It is an essential component of any modern financial strategy, protecting against both direct and indirect losses.

Monetization Upside: Competitive Advantage and Trust

Beyond risk mitigation, strong AI security delivers tangible business advantages. Enterprises with certified AI security practices secure larger contracts, particularly in regulated industries like finance and healthcare where AI trustworthiness is a contractual requirement. This positions them as reliable partners in sectors where data integrity and system reliability are paramount. Firms that prioritize AI security also build a stronger brand reputation.

Customer willingness to share data increases by 35-50% when organizations demonstrate comprehensive AI protection measures. This trust capital translates directly to revenue opportunities, as seen with companies like Moonpay integrating Ledger’s security features to enable higher-value transactions through AI agents. Transparent and verifiable AI security acts as a differentiator, attracting and retaining privacy-conscious customers. The market differentiation achieved through verifiable AI security creates premium positioning that justifies the investment multiple times over. This transforms security from a defensive cost to an offensive competitive advantage, helping businesses thrive in an AI-driven economy. For financial institutions, this trust is critical, as highlighted in Wall Street AI Investment Banks.

Risks, Pitfalls, and AI Security Myths vs. Facts

What Can Go Wrong: Major AI Security Risks in 2026

- Weaponization of frontier AI models leading to “agentic attacks” that bypass traditional defenses (IBM). These sophisticated attacks can adapt in real-time, making them incredibly difficult to counter with static security measures.

- AI-powered chat systems causing serious data exposures if not properly secured (Wharton AI & Analytics Initiative). The rise of conversational AI means more potential entry points for attackers.

- AI deepfakes rendering multi-factor authentication insufficient for identity verification; requiring behavioral biometrics and out-of-band confirmation (datacouch.io). Traditional MFA can be circumvented by highly realistic AI-generated impersonations.

- Exploitation of AI agentic capabilities by malicious actors, as seen with Anthropic’s Claude AI (Futurism). This demonstrates how even advanced AI can be turned against its developers if not properly hardened.

- New vulnerabilities like prompt injection arising from AI integration, expanding the attack surface (Microsoft April 2026 Patch Tuesday). As AI becomes more embedded in software, new types of exploits emerge, requiring constant vigilance and updates.

Common Mistakes in AI Security Implementation

Organizations often make several critical errors when approaching AI security. A primary mistake is underestimating the unique security risks posed by integrated AI systems, treating them as extensions of traditional IT infrastructure rather than distinct entities with novel vulnerabilities. This oversight can lead to significant gaps in defense, as AI-specific attack vectors are ignored. Relying on outdated identity verification methods against advanced AI-generated threats is another common pitfall, particularly with the rise of convincing deepfakes. These older methods are simply not robust enough for the current threat landscape.

Failure to implement human-in-the-loop mechanisms for AI agents handling sensitive operations is a severe mistake, increasing the risk of autonomous catastrophic errors or malicious actions. Similarly, not addressing AI-specific vulnerabilities like prompt injection in patch management leaves critical systems exposed to easily exploitable flaws. Finally, neglecting to secure the full LLM and Generative AI lifecycle, from data ingestion to model deployment, creates numerous opportunities for compromise. A piecemeal approach to security will inevitably leave vulnerabilities open. The 2026 Buyer’s Guide to AI Workflow Automation underscores the need for thorough security consideration from selection to deployment.

What Most People Get Wrong About AI Security

Myth: AI will solve all cybersecurity problems automatically.

Fact: AI introduces new vulnerabilities and can be weaponized, requiring specialized security measures beyond traditional approaches. While AI is a powerful defensive tool, it’s not a silver bullet and presents its own set of risks. Neglecting these risks can lead to a false sense of security.

Myth: Current security measures are sufficient for AI-powered threats.

Fact: New threats like agentic attacks and deepfakes require novel defenses including behavioral biometrics and AI-specific monitoring. Traditional perimeter defenses and signature-based detection are often inadequate against dynamic, adaptive AI-driven attacks. Organizations must continuously update their strategies.

Myth: Only frontier AI models pose significant risks.

Fact: Even integrated AI features in common software like SharePoint can introduce critical flaws, as demonstrated by Microsoft’s April 2026 patches. The widespread integration of AI into everyday applications means that vulnerabilities can appear in unexpected places, impacting a broad range of users and systems. Security must be considered across all AI integrations, not just the most advanced models.

FAQ: Your Questions About AI Security News Answered

What is the biggest new threat in AI security for 2026?

The most significant emerging threat is “agentic attacks” from weaponized frontier AI models, as identified by IBM in April 2026. These attacks leverage autonomous AI systems that can plan and execute complex attack sequences without human intervention, adapting in real-time to defensive measures. Unlike traditional malware, agentic attacks don’t follow predictable patterns and can coordinate across multiple attack vectors simultaneously, presenting an unprecedented challenge to cybersecurity infrastructure.

How are companies like OpenAI contributing to AI security defenses?

OpenAI’s GPT-5.4-Cyber represents a major advancement in AI-powered defense, offering expanded access for security teams and demonstrating proven capability in vulnerability remediation. The system has successfully fixed over 3,000 vulnerabilities according to April 2026 reports, automating the identification and patching process that traditionally required extensive manual effort. This significantly reduces the attack surface for organizations implementing these tools, enhancing overall system resilience. OpenAI’s 2026 AI Updates details its capabilities.

What are ‘prompt injection risks’ and why are they important in AI security?

Prompt injection involves manipulating an AI model’s behavior through malicious input designed to override its intended functionality. Microsoft’s April 2026 Patch Tuesday specifically addressed these emerging risks, highlighting their practical significance across widely used software. These vulnerabilities are particularly dangerous because they exploit the very interface through which users legitimately interact with AI systems, requiring specialized mitigations beyond traditional input validation. They can lead to data exposure, unauthorized actions, or system manipulation, making them a critical concern for AI developers and users.

Is AI security now a top priority for CIOs?

Yes, according to the Logicalis Global CIO Report 2026, over a quarter of CIOs now rank securing AI as critical as defending against traditional threats like malware and ransomware. This represents a fundamental shift in enterprise risk assessment, with AI security moving from a niche concern to a core priority. Budget allocations and staffing priorities reflect this changed landscape throughout 2026, as businesses recognize the profound impact AI vulnerabilities can have on their operations and data integrity. This strategic reorientation underscores the omnipresence of AI in modern business.

What is ‘human-in-the-loop’ security for AI agents?

Human-in-the-loop mechanisms require human approval for AI agent actions, particularly for high-value transactions or sensitive operations. Ledger’s Device Management Kit exemplifies this approach, using hardware security to ensure AI agents cannot autonomously execute significant actions without explicit human confirmation. This balances AI efficiency with necessary oversight, preventing autonomous systems from causing unintended damage or making irreversible errors. It’s a critical control for ensuring accountability and safety in AI-driven processes, especially in financial or critical infrastructure applications. This concept is increasingly important as AI agents become more prevalent, as discussed in Salesforce Headless 360: The AI Agent Infrastructure Shift.

How are AI deepfakes impacting cybersecurity, specifically authentication?

AI-generated deepfakes have rendered traditional multi-factor authentication insufficient by convincingly mimicking authorized users through voice, video, or text. This necessitates advanced verification methods like behavioral biometrics, which analyze unique patterns in typing rhythm, mouse movements, and interaction styles to verify identity. Out-of-band confirmation through separate channels has also become essential, as seen in financial institutions implementing additional verification steps for high-value transactions initiated through AI systems. Deepfakes present a significant challenge to existing identity verification protocols, emphasizing the need for adaptive and layered security approaches.

Glossary of AI Security Terms

Key Terminology in AI and Cybersecurity

- Agentic AI: AI models with the capability to act autonomously and pursue goals without human intervention, leading to both advanced defensive and offensive applications. This represents a significant evolution from passive AI systems.

- Prompt Injection: A type of vulnerability where malicious input (prompt) is used to manipulate an AI model’s behavior or extract sensitive information. It targets the AI’s understanding and response mechanisms, often bypassing traditional security filters.

- Zero-day Vulnerability: A cybersecurity flaw that is unknown to the vendor and has no patch available, making it highly dangerous when exploited. AI systems can be particularly vulnerable to novel zero-day exploits due to their complex and often opaque internal workings.

- Agentic Attacks: Cyber threats leveraging weaponized frontier AI models to carry out autonomous and potentially complex attack sequences. These attacks can adapt in real-time and coordinate across multiple systems, making them exceptionally difficult to detect and defend against.

- Behavioral Biometrics: Security measures that analyze unique patterns in human behavior (e.g., typing rhythm, mouse movements) for identity verification, a crucial defense against AI deepfakes. These biometrics are harder for AI to replicate than static identifiers like passwords or even facial scans.

- Generative AI Lifecycle: The complete process of developing, training, deploying, and maintaining generative AI models, from data acquisition and model design through to inference and monitoring. Securing each stage of this lifecycle is critical to prevent vulnerabilities from being introduced or exploited.

References and Further Reading on AI Security

Cited Sources for 2026 AI Security Updates

- The Hacker News, April 2026: OpenAI GPT-5.4-Cyber vulnerability fixing capabilities.

- Windows News, April 2026: Microsoft April 2026 Patch Tuesday details.

- IBM Newsroom, April 2026: IBM’s measures against agentic attacks.

- Logicalis Global CIO Report 2026: CIO prioritization of AI security.

- Wharton AI & Analytics Initiative: Early 2026 data exposure incidents.

- Futurism, April 16, 2026: Anthropic Claude exploitation case.

- Ledger Blog, 2026: Hardware security for AI agents implementation.

- OWASP GenAI Exploit Round-up Report Q1 2026: Comprehensive exploit analysis.

- datacouch.io: Behavioral biometrics against AI deepfakes.

For more detailed information on specific AI tools and their security implications, consider resources like Google AI’s 2026 Playbook or the Ultimate Guide to Character.AI for understanding AI chatbot security. These references provide the foundation for understanding the dynamic landscape of AI security in 2026.