The statistic that 51.72% of online content is AI-generated, attributed to Stanford University-affiliated research, points to a significant and accelerating shift in the digital publishing landscape. This figure, often cited in discussions about artificial intelligence’s impact on information dissemination, highlights the increasing proficiency and widespread adoption of generative AI models in creating text, images, and other media. While the exact methodology and scope of such a Stanford-affiliated claim are crucial for full context, its prominence underscores growing concerns about content authenticity, potential misinformation, and the evolving nature of digital authority in an AI-permeated environment.

Recent insights, often attributed to Stanford-affiliated research, suggest that artificial intelligence now generates over half of all online content (51.72%). This rapid rise of AI-produced material poses profound implications for content authenticity, SEO, digital trust, and the future of human-authored work. Understanding the methodologies behind such claims and preparing for a future dominated by AI-generated information is critical for creators, consumers, and platforms alike.

Introduction: The Rise of AI-Generated Content Online

The internet has become an indispensable source of information, entertainment, and connection. However, the nature of its content is rapidly changing with the advent of sophisticated artificial intelligence. Recent discussions, frequently referencing studies from institutions like Stanford University, suggest a significant milestone: over half of all online content might now be AI-generated.

This statistic, the claim that AI produces 51.72% of online content, has sent ripples through the digital landscape. It compels us to reassess our understanding of authenticity, authorship, and the very fabric of the information we consume daily. The implications are far-reaching, impacting everything from search engine optimization (SEO) and digital marketing to media literacy and the perceived value of human creativity.

Unraveling the 51.72% Statistic: Origin and Context

The claim that 51.72% of online content is AI-generated, often linked to Stanford, is a powerful data point that requires careful examination. Understanding its origins and the methodology behind it is crucial for appreciating its true significance and avoiding misinterpretation.

The Stanford Connection: What Does It Mean?

When a figure is associated with a prestigious institution like Stanford University, it naturally garners attention and credibility. However, it is essential to distinguish between research directly published by Stanford faculty or departments and studies conducted by individuals or groups with a more tangential affiliation. Often, a researcher at Stanford, an alumnus, or a research center affiliated with the university might produce such findings.

The specific study or report needs to be identified to understand the full context. Was it a comprehensive analysis of the entire internet, or a specific subset? What detection methods were employed? These details are paramount to evaluating the statistic’s robustness.

Methodology of Detection: How is AI Content Identified?

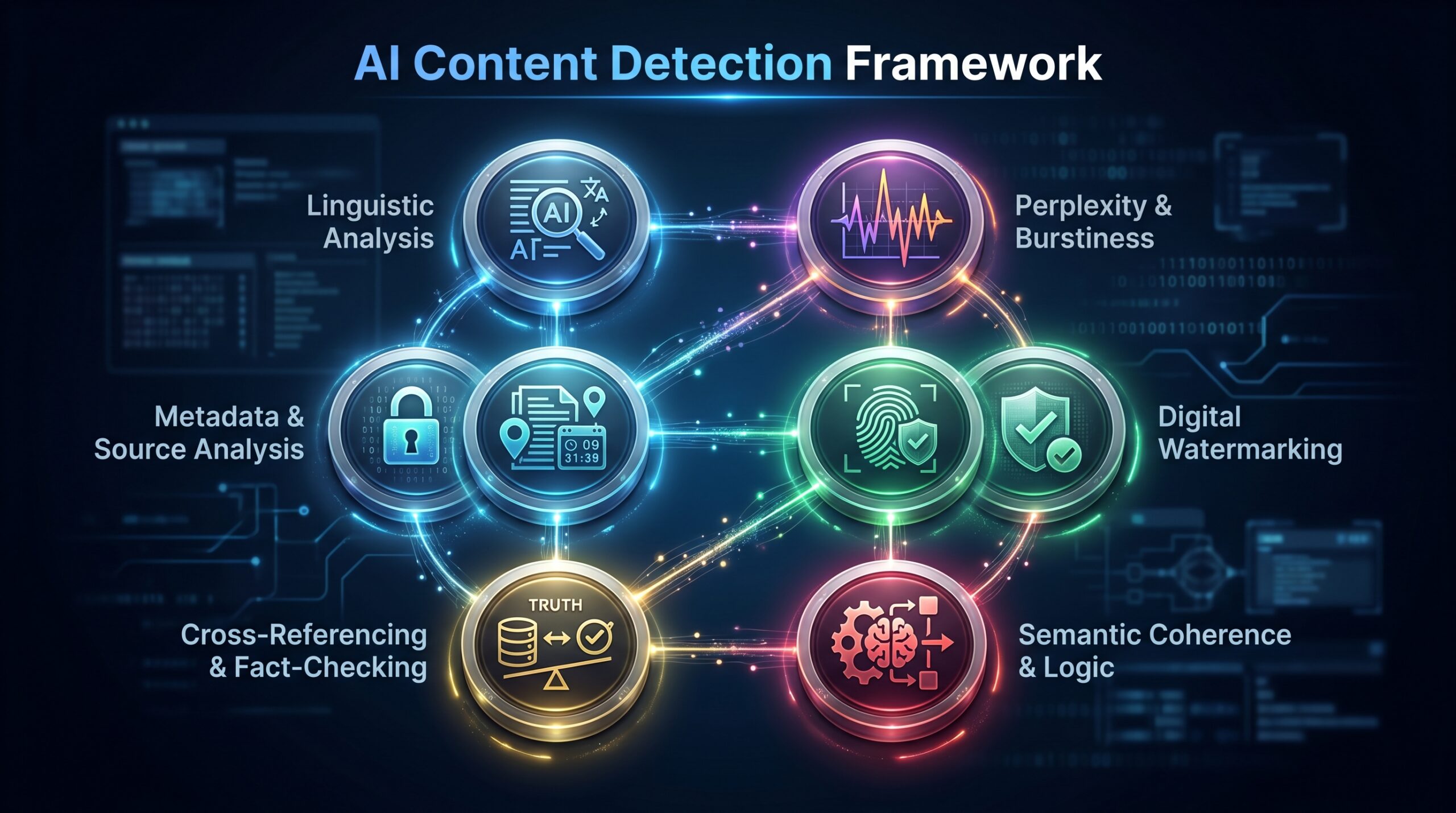

Detecting AI-generated content is a complex and evolving field. Researchers employ a variety of techniques:

- Statistical Analysis: AI-generated text often exhibits patterns, or a lack of variance, in sentence structure, vocabulary, and semantic coherence that human writing typically does not.

- Neural Network Detectors: Specialized AI models are trained to identify characteristics unique to content produced by other AI models.

- Watermarking: Some advanced generative AI models now embed invisible digital watermarks into their output, allowing for easier detection.

- Metadata Analysis: Examining creation dates, author information (or lack thereof), and platform usage patterns can offer clues.

- Human Review: Ultimately, human experts are often employed to validate and refine AI detection methods.

Each methodology has its strengths and weaknesses, and the accuracy of detection can vary significantly depending on the sophistication of the generative AI and the detector.
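
To make the statistical-analysis approach above more concrete, here is a minimal sketch of two surface statistics that are sometimes used as weak signals: the spread of sentence lengths and the type-token ratio (distinct words divided by total words). The function name `stylometric_features` and the crude sentence-splitting rule are assumptions made for illustration. This is not the method of any particular study or detector, and neither number is a reliable indicator on its own.

```python
import re
import statistics


def stylometric_features(text: str) -> dict:
    """Compute simple surface statistics; hypothetical helper for illustration."""
    # Crude sentence split: break after ., ! or ? when followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    words = re.findall(r"[A-Za-z']+", text.lower())
    # Number of words in each sentence.
    lengths = [len(re.findall(r"[A-Za-z']+", s)) for s in sentences]
    return {
        "sentence_count": len(sentences),
        # Population standard deviation of sentence length, in words.
        "sentence_length_stdev": statistics.pstdev(lengths) if lengths else 0.0,
        # Type-token ratio: distinct words divided by total words.
        "type_token_ratio": len(set(words)) / len(words) if words else 0.0,
    }


uniform = "The cat sat. The cat sat. The cat sat."
varied = "Hi. This is a much longer sentence indeed."
print(stylometric_features(uniform))
print(stylometric_features(varied))
```

The repetitive sample scores zero variance in sentence length and a low type-token ratio, while the varied sample scores higher on both. A real detector would combine many such features with a trained model and would still make errors.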

Scope and Limitations: What Content is Included?

The term "online content" is incredibly broad. Does 51.72% refer solely to text? Or does it include images, videos, audio, and code? Each content type presents unique challenges for AI generation and detection.

Furthermore, the dataset used for the study is critical. Was it a random sample of the entire web, specific platforms (e.g., social media, news sites, blogs), or particular content categories (e.g., product descriptions, academic papers)? The scope directly impacts the generalizability of the 51.72% figure. Without this context, the number can be misleading. It likely represents a specific snapshot or weighted average across certain content types.
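
The effect of sample composition on a headline figure can be shown with a small weighted-average calculation. The sketch below uses entirely hypothetical segment weights and AI shares, chosen only to illustrate the arithmetic. None of these numbers come from the Stanford-attributed claim or any other study.

```python
def weighted_ai_share(segments: dict[str, tuple[float, float]]) -> float:
    """Overall AI share given {segment: (weight_in_sample, ai_share_in_segment)}."""
    total_weight = sum(weight for weight, _ in segments.values())
    return sum(weight * share for weight, share in segments.values()) / total_weight


# Hypothetical numbers for illustration only.
# Same per-segment AI shares, but a different mix of content in the sample.
sample_a = {"product_descriptions": (0.6, 0.8), "news": (0.4, 0.1)}
sample_b = {"product_descriptions": (0.2, 0.8), "news": (0.8, 0.1)}

print(f"{weighted_ai_share(sample_a):.2f}")  # 0.52
print(f"{weighted_ai_share(sample_b):.2f}")  # 0.24
```

With identical per-segment rates, the overall figure moves from 52% to 24% purely because the sample contains a different mix of content. This is why the sampling frame matters as much as the detection method.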

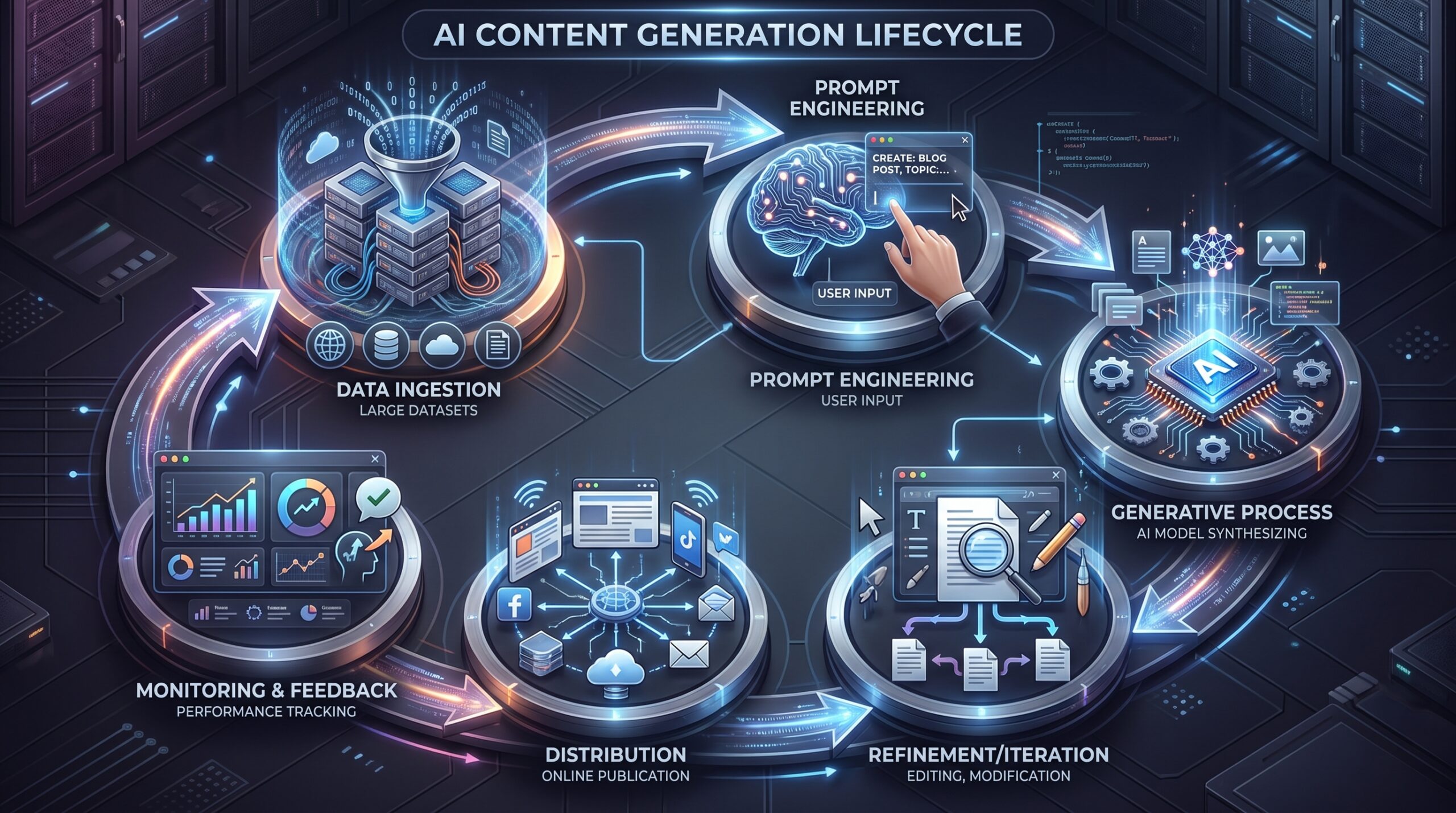

The Mechanisms: How AI Generates Online Content

The astonishing growth in AI-generated content is powered by remarkable advancements in artificial intelligence, particularly in the realm of generative models. These systems have moved beyond simple automation to produce sophisticated outputs that are often indistinguishable from human work.

Generative AI Models: Large Language Models (LLMs) and Beyond

At the forefront of this revolution are Large Language Models (LLMs) such as GPT-4, Bard (now Gemini), and Claude. These models are trained on vast datasets of text and code, enabling them to understand, generate, and translate human language with remarkable fluency.

- Text Generation: LLMs can write articles, summaries, marketing copy, code, and even creative fiction. They are adept at maintaining context, adhering to specific tones, and generating information that appears coherent and authoritative.

- Image Generation: Models like Gemini Nano Banana, DALL-E 3, Midjourney, and Stable Diffusion create photorealistic images and intricate artwork from textual prompts. They can generate anything from abstract concepts to specific scenes and styles.

- Video and Audio Generation: More recently, AI has made significant strides in generating video clips from text or static images, and hyper-realistic synthetic voices or musical compositions.

The continuous improvement in these models’ capabilities, driven by larger datasets and more efficient architectures, means they can produce content that increasingly blurs the line between human and machine creation.

Automation at Scale: Content Farms and AI Writers

The adoption of AI in content creation is not limited to individual users experimenting with tools. Large-scale operations are leveraging AI for massive content generation:

- Automated News and Reports: AI can rapidly compile data and generate news reports, financial summaries, and sports recaps, especially for formulaic content.

- E-commerce Product Descriptions: Retailers use AI to generate thousands of unique product descriptions, improving SEO and reducing manual workload.

- Marketing Copy and Ads: AI can generate diverse ad variations, social media posts, and email campaigns, optimizing for engagement and conversion.

- SEO-driven Content: Many websites use AI to create articles and blog posts tailored to specific keywords, aiming to capture search traffic at an unprecedented volume. This strategy, however, carries risks related to quality and search engine guidelines, as discussed in How Google AI is Rewriting the Spam Defense Playbook in 2026.

These applications demonstrate how AI enables content production at a scale previously unimaginable, contributing significantly to the 51.72% figure. The ease of access to these tools further democratizes content creation, but also complicates content moderation.

Template-Driven and Hybrid Approaches

Not all AI-generated content is created entirely by a machine. Many solutions use a hybrid approach:

- Template-Based Generation: AI fills predefined templates with data, such as real estate listings, weather reports, or financial market updates.

- AI-Assisted Writing: Human writers use AI tools for brainstorming, outlining, drafting, or refining content. In these cases, AI acts as a powerful co-pilot, enhancing productivity rather than fully replacing human input.

- Content Spinning and Remixing: Less ethical applications involve AI rewriting existing content to bypass plagiarism detectors, often resulting in low-quality or nonsensical output, which search engines like Google are actively working to combat, as explored in Google AI’s Role in Combating Spam in 2026.

These varied mechanisms illustrate the pervasive nature of AI in online content creation, from fully autonomous systems to tools that augment human capabilities.
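
Template-based generation, the simplest of the mechanisms above, can be sketched in a few lines. The example below fills a real estate listing template from a structured record using Python's standard `string.Template`. The template wording, field names, and sample record are hypothetical, and production systems would typically add validation and more varied phrasing.

```python
from string import Template

# "$$" renders a literal dollar sign; "${price}" is a placeholder.
LISTING_TEMPLATE = Template(
    "$bedrooms-bedroom $property_type in $city, listed at $$${price}."
)


def render_listing(record: dict) -> str:
    """Fill the listing template from one structured data record."""
    # substitute() raises KeyError if a field is missing, surfacing bad data early.
    return LISTING_TEMPLATE.substitute(record)


# Hypothetical record for illustration.
record = {
    "bedrooms": 3,
    "property_type": "townhouse",
    "city": "Austin",
    "price": "450,000",
}
print(render_listing(record))
# 3-bedroom townhouse in Austin, listed at $450,000.
```

Because the output is fully determined by the data and the template, this approach scales to thousands of pages, which is also why such content tends to read as formulaic.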

Implications for the Digital Landscape

The statistic of 51.72% AI-generated online content signals a monumental shift with profound implications across various sectors of the digital landscape. This transformation impacts how information is consumed, verified, and valued.

SEO and Search Engine Rankings: Google's Stance

The proliferation of AI content poses a significant challenge for search engine optimization (SEO) and the fundamental goal of search engines: to provide high-quality, relevant results. Google, in particular, has adapted its guidelines and algorithms. While it doesn't explicitly prohibit AI-generated content, its focus remains on quality, helpfulness, and originality.

- Quality over Origin: Google states that it values content created for people, not for search engines, regardless of how it's produced. The key is whether it provides value.

- E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness): AI-generated content often struggles to demonstrate genuine experience or expertise, making it harder to rank for topics requiring deep insight.

- Spam and Manipulation: Content generated solely to manipulate search rankings, especially low-quality, repetitive, or misleading AI content, is considered spam and can lead to penalties. The ongoing efforts by Google to combat this are well-documented, including new strategies discussed in Google AI’s Role in Combating Spam: What Just Changed and Why It Matters.

SEO strategies must now prioritize human oversight, factual accuracy, and unique value propositions to stand out in an AI-saturated content environment.

Content Authenticity and Trust

One of the most critical challenges is the erosion of trust in online content. When half of what we read or see could be AI-generated, differentiating between human expertise and synthetic creation becomes increasingly difficult.

- Misinformation and Deepfakes: AI can generate convincing fake news, propaganda, and deepfake media, making it harder for individuals to discern truth from fabrication. This impacts public discourse and democratic processes.

- "Hallucinations" and Factual Errors: LLMs are known to "hallucinate" facts or generate confidently incorrect information. If unverified, this propagates inaccuracies at scale.

- Attribution and Authorship: The concept of authorship becomes muddled. Is the AI the author, the prompt engineer, or the human who edits and publishes? This has implications for copyright, journalistic ethics, and academic integrity.

Building trust in the digital age will increasingly rely on transparent content disclaimers, robust verification tools, and a renewed emphasis on critical thinking for consumers.

Economic and Labor Market Impact

The widespread adoption of AI in content creation inevitably affects the labor market and creative industries.

- Job Displacement: Roles focused on repetitive content creation (e.g., basic copywriting, data entry, translation) are at risk of automation. This shift is a key concern across many industries, including software engineering, as highlighted in The Real AI Impact on Software Engineer Jobs in 2026.

- New Job Roles: Conversely, new roles emerge, such as prompt engineers, AI content strategists, AI ethicists, and AI tool developers.

- "Content Pollution" and Value Erosion: If AI floods the market with cheap, low-quality content, the perceived value of all online content, including human-created work, could diminish. This creates a "race to the bottom" unless quality standards are maintained.

- Intellectual Property: The legal frameworks surrounding AI-generated content and copyright are still nascent, leading to disputes over ownership and fair use.

This economic transformation necessitates adaptation, continuous skill development, and policy discussions to navigate the evolving landscape effectively.

Ethical Considerations and Bias

AI models are trained on vast datasets that reflect existing human biases present on the internet and in historical texts. When AI generates content, it can unwittingly perpetuate and amplify these biases.

- Algorithmic Bias: If training data disproportionately represents certain demographics or viewpoints, AI outputs may reflect and reinforce those biases, leading to unfair or discriminatory content.

- Lack of Nuance and Empathy: AI struggles with genuine empathy, cultural nuance, and deep ethical reasoning, often producing content that is technically correct but emotionally barren or culturally insensitive.

- Environmental Impact: The training and operation of powerful generative AI models consume significant energy, raising concerns about their carbon footprint.

Addressing these ethical challenges requires proactive measures in data curation, model development, and rigorous ethical reviews.

Digital Landscape Impact Matrix

- Content Authenticity: High risk of misinformation, deepfakes, and difficulty distinguishing human from AI.

- SEO & Search: Focus shifts to E-E-A-T, helpfulness, and unique value; spam detection becomes critical.

- Economic & Labor: Job displacement in some creative roles, emergence of new AI-centric jobs (e.g., prompt engineers).

- Trust & Credibility: Erosion of public trust in online information sources; increased need for transparency.

- Ethical & Bias: Amplification of biases from training data, concerns about fairness, privacy, and environmental impact.

- Intellectual Property: Complex questions around copyright ownership and usage of AI-generated works.

Navigating an AI-Dominated Content World

As the digital landscape evolves to incorporate a majority of AI-generated content, stakeholders must adapt their strategies. This includes content creators, consumers, and platform providers alike.

Strategies for Content Creators

For individuals and businesses who create content, the rise of AI presents both challenges and opportunities.

- Leverage AI as a Tool: Instead of viewing AI as a competitor, use it to augment your workflow. AI can assist with research, brainstorming, outlining, drafting first passes, and optimizing for SEO. This enables creators to focus on higher-level strategic thinking and adding unique value. For developers, this extends to using AI for code generation and debugging, a practice that introduces its own business risks, as covered in AI Generated Code Business Risk: The Complete 2026 Guide to Mitigation and Management.

- Focus on E-E-A-T: Emphasize your unique experience, expertise, authoritativeness, and trustworthiness. Share personal anecdotes, original research, case studies, and distinct perspectives that AI cannot replicate.

- Prioritize Human Touch and Emotional Resonance: AI struggles with genuine emotion, nuanced humor, and deep human connection. Content that evokes real feeling, tells compelling stories, or connects on a personal level will stand out.

- Verify and Fact-Check Rigorously: If using AI for content generation, always double-check facts, figures, and sources. AI models can "hallucinate," and unverified information can damage credibility.

- Innovate with New Formats: Experiment with interactive content, personalized experiences, and formats that inherently require human creativity and judgment.

Best Practices for Consumers

For the average internet user, developing critical media literacy skills is more important than ever.

- Question the Source: Always consider where the information comes from. Is it a reputable news organization, an expert in the field, or an anonymous blog?

- Look for Signs of AI: While increasingly difficult, some AI content may still exhibit subtle tells: overly generic language, lack of unique perspective, factual inconsistencies, or repetitive phrasing.

- Cross-Reference Information: Don't rely on a single source. Verify important information across multiple reputable outlets.

- Develop Critical Thinking Skills: Actively analyze the arguments presented, look for biases, and assess the logic of the content.

- Utilize Detection Tools: As AI detection tools become more sophisticated, they can assist in identifying potentially AI-generated content, though none are foolproof.
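
To see why no detection tool is foolproof, it helps to work through Bayes' rule: the chance that a flagged item is really AI-generated depends on how common AI content is in what you are reading, not only on the detector's error rates. The sketch below uses hypothetical detector figures (90% sensitivity, 5% false positive rate) chosen for illustration. They do not describe any real tool.

```python
def prob_ai_given_flag(
    prevalence: float, sensitivity: float, false_positive_rate: float
) -> float:
    """P(content is AI | detector flags it), by Bayes' rule."""
    flagged_ai = prevalence * sensitivity                    # AI items correctly flagged
    flagged_human = (1 - prevalence) * false_positive_rate  # human items wrongly flagged
    return flagged_ai / (flagged_ai + flagged_human)


# Hypothetical detector: catches 90% of AI text, wrongly flags 5% of human text.
print(f"{prob_ai_given_flag(0.10, 0.90, 0.05):.2f}")  # 0.67
print(f"{prob_ai_given_flag(0.50, 0.90, 0.05):.2f}")  # 0.95
```

Under these assumptions, when only 10% of the material is AI-generated, about one in three flags points at human-written text. A detector's verdict is best treated as one clue among several rather than as proof.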

Platform and Regulator Responsibilities

Digital platforms and governmental bodies have a significant role in shaping the future of content authenticity.

- Transparency and Disclosure: Platforms should implement policies requiring disclosure for AI-generated content, especially for news, health, or political information.

- Improved Detection and Moderation: Investing in advanced AI detection technologies and scalable content moderation systems is crucial for curbing misinformation and spam. This is an ongoing battle, as platforms struggle to keep up with the pace of AI advancement.

- Developing Industry Standards: Tech companies, media organizations, and academia should collaborate to establish common standards for ethical AI content generation and detection.

- Legal and Regulatory Frameworks: Governments may need to consider new laws regarding AI authorship, copyright, data provenance, and liability for AI-generated harm.

- Promoting Media Literacy: Supporting educational initiatives to equip citizens with the skills to critically evaluate online information.

The collective effort of all stakeholders is necessary to foster a digital ecosystem that remains informative, trustworthy, and beneficial.

The Future Outlook: Predictions and Challenges

The implications of the 51.72% figure extend far into the future, promising a digital landscape profoundly different from today. Understanding these trends is crucial for preparing for the next wave of technological and societal shifts.

Hyper-Personalization and Curation

As AI becomes even more adept at generating content, we can expect a surge in hyper-personalized experiences:

- Tailored Content Feeds: AI will generate news, entertainment, and educational material specifically molded to individual users’ interests, learning styles, and emotional states, creating incredibly sticky platforms.

- Dynamic Storytelling: Interactive narratives and experiences where the plot, characters, and even outcomes are dynamically generated by AI in real-time, based on user preferences or actions.

- "Everything as a Service" (EaaS): AI will enable new models where personalized content, services, and even virtual companions are generated on demand, pushing content beyond static pages.

While offering unparalleled relevance, this could also lead to “filter bubbles” and “echo chambers” where users are rarely exposed to diverse viewpoints.

The Arms Race Between AI Generation and Detection

The battle between AI systems creating content and those designed to detect it will intensify:

- Evolving Sophistication: Generative AI output will become even harder to distinguish from human writing, making detection a constantly moving target. Techniques like embedding subtle "human errors" could emerge to fool detectors.

- New Watermarking Technologies: Digital watermarking will become more robust and widespread, both as a tool for identifying AI content and for proving human authorship.

- Ethical AI Development: More emphasis will be placed on developing ethical guidelines and technical safeguards within AI models themselves to prevent misuse and ensure transparency.

This ongoing arms race will require continuous research and investment in AI safety and verification technologies.

Redefining Value and Creativity

The rise of AI-generated content forces a re-evaluation of what constitutes valuable content and human creativity:

- Focus on Originality and Insight: Content that offers truly novel insights, unique research, or deeply personal perspectives will become even more prized.

- Curatorial Expertise: The ability to sift through vast amounts of AI and human-generated content to identify, verify, and present valuable information will be a highly sought-after skill.

- Human-AI Collaboration: The most significant advancements will likely come from synergistic human-AI partnerships, where AI handles the routine and humans provide the vision, empathy, and strategic direction. This aligns with the concept of a composable AI coding stack, enabling flexible and powerful AI systems, as discussed in The Complete Guide to Building Flexible AI Systems in 2026.

- Rise of “Authenticity Labels”: We may see certifications or official labels indicating whether content is human-curated, AI-assisted, or fully AI-generated, similar to organic food labels.

Ultimately, the future of online content will be a complex interplay of rapid technological advancement, evolving consumer expectations, and ongoing ethical and regulatory debates. The 51.72% statistic is not just a number; it’s a harbinger of a new digital era.

Conclusion: Adapting to an AI-Augmented Internet

The remarkable statistic that 51.72% of online content is AI-generated, as highlighted by discussions often referencing Stanford-affiliated research, marks a pivotal moment in the history of the internet. This shift underscores a profound transformation in how information is created, disseminated, and consumed. We are no longer simply interacting with human-authored content; we are immersed in an increasingly AI-augmented digital ecosystem.

The implications are undeniable and multifaceted, touching upon issues of verifiable authenticity, the trustworthiness of information, and the very future of creative work. While AI offers unprecedented opportunities for efficiency, personalization, and access to information, it also presents significant challenges related to misinformation, algorithmic bias, and the economic shifts it induces.

Adapting to this new reality requires a concerted effort from all stakeholders. Content creators must evolve their strategies, emphasizing unique human value and ethical AI utilization. Consumers must hone their critical media literacy skills to navigate a landscape where distinguishing human from machine-generated content grows increasingly difficult. Platforms and regulators, meanwhile, bear the responsibility of fostering transparency, developing robust detection mechanisms, and establishing clear ethical and legal frameworks to ensure a healthy and trustworthy digital environment.

The 51.72% figure is not merely a data point; it is a call to action. It urges us to embrace the potential of AI constructively while diligently addressing its inherent risks. The future of online content depends on our collective ability to adapt, innovate, and uphold the principles of truth, transparency, and human creativity in an increasingly automated world.

Key Takeaways: AI’s Dominance in Online Content

- Over Half of Online Content is AI-Generated: A notable statistic, often linked to Stanford-affiliated research, suggests 51.72% of online content is created by AI.

- Driven by Generative AI: The rise is fueled by advanced LLMs and models capable of producing text, images, and other media at scale.

- Profound SEO Implications: Search engines prioritize quality and E-E-A-T, making unique, human-centric input crucial for visibility amidst AI content proliferation.

- Erosion of Trust: A major concern is the challenge in discerning authentic, human-authored content from AI-generated material, leading to potential misinformation.

- Economic & Labor Shifts: While some jobs are automated, new roles focusing on prompt engineering, AI strategy, and ethical oversight are emerging.

- Critical Ethical Challenges: Bias perpetuation, lack of nuance, and environmental impact of AI models require urgent attention.

- Adaptation is Key: Creators must leverage AI as a tool while emphasizing human value; consumers need enhanced media literacy; platforms and regulators must ensure transparency and develop robust safeguards.

Frequently Asked Questions (FAQ)

What does the claim that 51.72% of online content is AI-generated mean?

This statistic refers to a widely discussed claim, often attributed to Stanford-affiliated research, suggesting that over half (51.72%) of all content found online is now generated by artificial intelligence. It highlights the significant and growing role of generative AI in digital publishing, impacting text, images, and other media types.

How is AI-generated content detected?

AI-generated content is detected through various methods, including statistical analysis of language patterns, specialized neural network detectors trained to identify AI output, and increasingly, digital watermarking embedded by generative AI models. Human oversight and content analysis also play a crucial role in validating these detection techniques.

What are the main implications of so much AI-generated content for SEO?

For SEO, the main implication is a reinforced focus on quality, helpfulness, and E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). While search engines don’t inherently penalize AI content, they actively combat low-quality, spammy, or unhelpful content, regardless of its origin. Human oversight, unique insights, and factual accuracy are increasingly vital for ranking well.

How does AI content impact trust and authenticity online?

The proliferation of AI content significantly erodes trust and authenticity online. It makes it harder for users to distinguish between human-authored expertise and AI-generated information, increasing the risk of misinformation, deepfakes, and the spread of "hallucinated" facts. Transparency and clear attribution become paramount to maintaining credibility.

Will AI replace human content creators?

While AI will automate repetitive content tasks and displace some jobs, it is more likely to augment human creators rather than fully replace them. The focus will shift towards roles that leverage AI as a tool for efficiency, while emphasizing uniquely human attributes like creativity, critical thinking, emotional intelligence, and strategic oversight. New roles like prompt engineers and AI content strategists are also emerging.