AI chipmaker Cerebras Systems publicly filed for a U.S. Initial Public Offering (IPO) on April 17, 2026, targeting a Nasdaq listing under ticker symbol “CBRS” in Q2 2026. The company aims to raise approximately $2 billion at a valuation between $22 billion and $28 billion. This follows a withdrawn IPO attempt in October 2025 and a recent $1 billion financing round at a $23 billion valuation. A significant boost comes from a reported deal where OpenAI will spend over $20 billion on Cerebras chips and receive an equity stake, signaling strong market validation.

Cerebras Systems, an AI chipmaker, is going public on Nasdaq (CBRS) in Q2 2026, aiming to raise $2 billion at a $22-28 billion valuation. This IPO is underpinned by a massive $20B+ commitment from OpenAI. Cerebras’s wafer-scale technology offers a fundamental architectural shift, especially for training large language models. Operators should understand its unique advantages over traditional GPU clusters, its financial performance, potential market impact, and the operational considerations for deploying its high-performance systems. The IPO presents both opportunities and risks, requiring careful assessment for strategic AI infrastructure planning.

Why Cerebras Systems’ IPO Matters for AI Operators

Cerebras represents a fundamental architectural shift in AI acceleration. While Nvidia currently dominates the market with its GPU clusters, Cerebras’s Wafer-Scale Engine 3 (WSE-3) takes a different approach: it uses an entire silicon wafer as a single, enormous processor. Keeping computation on one wafer avoids the inter-chip communication bottlenecks that often limit distributed systems when training massive AI models. For operators developing and running cutting-edge Large Language Models (LLMs), this could cut training times significantly, potentially from weeks to days.

The company’s financial performance further underscores its significance. Cerebras reported revenues of $510 million and a profit of $87.9 million, showcasing rare profitability for a deep tech hardware company. This financial stability, coupled with the substantial endorsement from OpenAI, sends a clear signal to the industry. It indicates that wafer-scale technology has matured beyond experimental phases and is now a production-ready solution capable of handling real-world, demanding AI workloads.

Cerebras Systems IPO Timeline and Key Dates

Understanding the timeline and key dates associated with the Cerebras IPO is crucial for operators and potential investors. These milestones provide a roadmap for when specific actions and information are expected.

- October 2025: Cerebras initially withdraws its IPO filing. This decision was primarily due to unfavorable market conditions at the time.

- February 2026: The company successfully secures $1 billion in financing. This round values the company at $23 billion, demonstrating strong investor confidence.

- April 17, 2026: Cerebras publicly files its S-1 registration document with the U.S. Securities and Exchange Commission (SEC). This filing provides comprehensive financial and operational details.

- Q2 2026 (Expected): The anticipated Nasdaq listing will occur under the ticker symbol “CBRS”. The exact date within Q2 will be announced closer to the event.

- Post-IPO: A lockup period typically follows an IPO, usually lasting 180 days after the listing. During this time, company insiders and early investors are restricted from selling their shares.

Operators interested in the company’s performance and future should closely monitor ongoing SEC filings for precise details regarding the IPO pricing date and allocation specifics. While institutional investors generally receive priority access to initial shares, retail investors will have the opportunity to participate through their standard brokerage platforms once public trading commences.
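The 180-day lockup noted in the timeline lends itself to a quick date calculation. The sketch below is illustrative only: the listing date is a hypothetical placeholder (the actual date has not been announced), and the real lockup length will be whatever the S-1 specifies.

```python
from datetime import date, timedelta

# Typical lockup length cited in the timeline; the binding term is set in the S-1.
LOCKUP_DAYS = 180


def lockup_expiry(listing_date: date, lockup_days: int = LOCKUP_DAYS) -> date:
    """Return the calendar date that falls lockup_days after the listing date."""
    return listing_date + timedelta(days=lockup_days)


# Hypothetical listing date inside Q2 2026, used purely for illustration.
example_listing = date(2026, 5, 15)
print("Lockup would expire on:", lockup_expiry(example_listing))
```

Swapping in the confirmed listing date once it is announced gives the date to watch for insider selling pressure.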

Key Players in the Cerebras Systems IPO

The success and trajectory of the Cerebras Systems IPO involve several pivotal individuals and organizations. Their roles and influence are critical to understanding the offering’s context.

- Andrew Feldman (CEO): As a former SeaMicro executive, Feldman co-founded Cerebras in 2016. He has strategically positioned the company as a direct challenger to Nvidia, even while acknowledging fundamental differences in their architectural approaches. His leadership has been instrumental in navigating the complex AI hardware landscape.

- OpenAI: This partnership extends beyond a simple customer-vendor relationship. OpenAI’s commitment of over $20 billion for Cerebras chips provides significant validation and revenue stability. The equity stake granted to OpenAI further aligns their long-term interests, potentially fostering collaborative product development that could benefit both entities and the broader AI community. This major endorsement is a powerful signal of confidence in Cerebras’s technology.

- Investment Banks: While specific names have not yet been publicly confirmed in recent reports, the involvement of major investment banks is a given for an IPO of this scale. You can typically expect firms such as Goldman Sachs, Morgan Stanley, or JPMorgan to lead the offering. These banks manage the complex process of underwriting, marketing, and distributing shares to investors.

- Early Investors: Prominent early investors include Benchmark, Foundation Capital, and Eclipse Ventures. The expiration of their lockup periods post-IPO could introduce volatility into the stock price if a large volume of shares floods the market. Monitoring these dates is important for stock performance analysis.

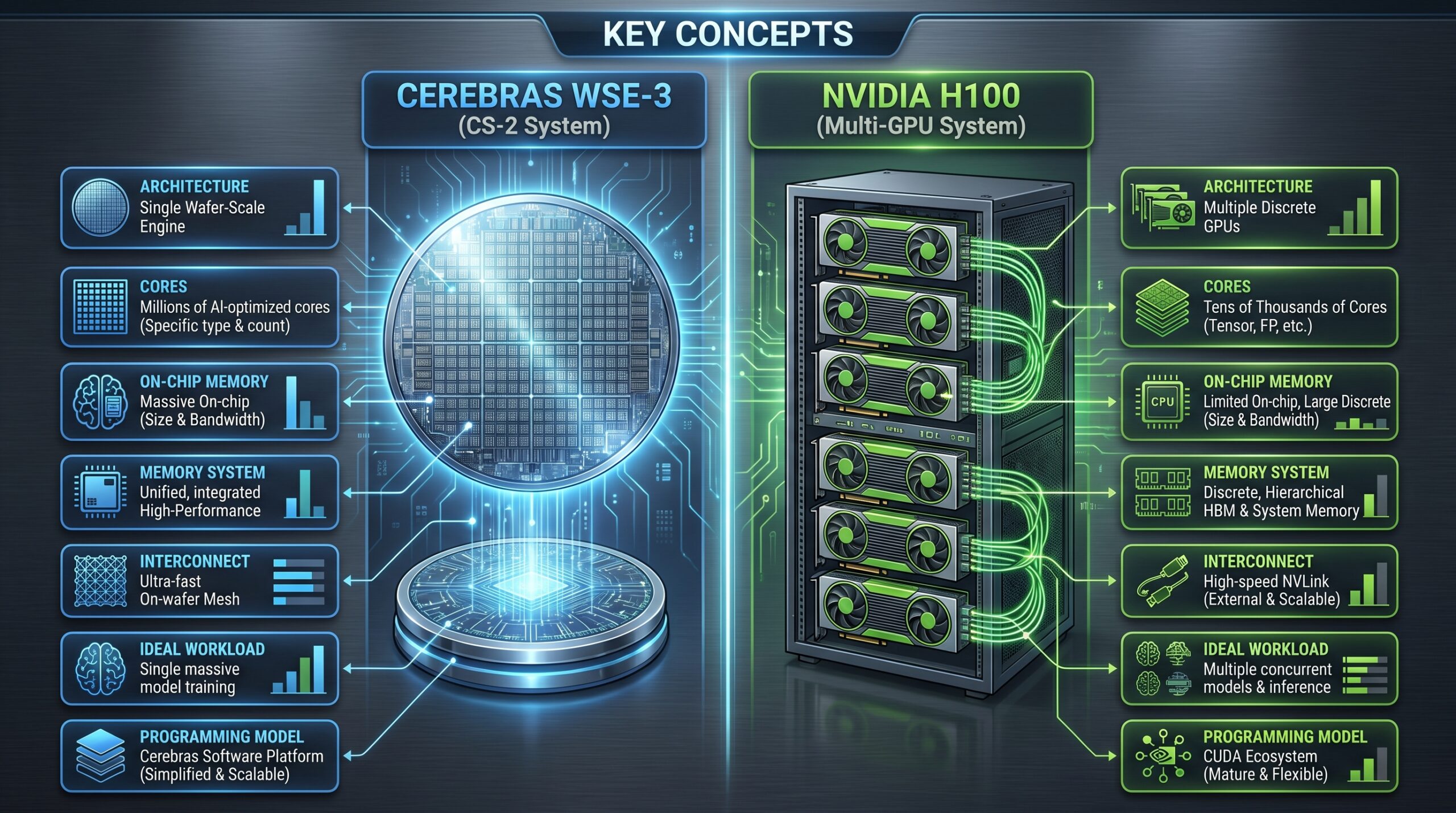

Cerebras WSE-3 vs. Nvidia H100: Architectural Breakdown

The core of why Cerebras matters to AI operators lies in its fundamental architectural differences when compared to Nvidia’s dominant GPU offerings. This comparison highlights where each accelerator excels.

| Feature | Cerebras WSE-3 | Nvidia H100 (Multi-GPU System) |
|---|---|---|
| Architecture | Single wafer-scale chip (46,225 mm²) | Multiple GPUs (814 mm² die per H100) |
| Cores | 900,000 AI-optimized cores | 16,896 CUDA cores + 528 Tensor Cores per GPU (SXM5) |
| On-chip Memory | 44 GB SRAM (21 PB/s bandwidth) | 50 MB L2 cache per GPU |
| Memory System | Unified memory across wafer | Discrete VRAM (80 GB HBM3 per GPU) |
| Interconnect | On-wafer communication | NVLink (900 GB/s between GPUs) |
| Ideal Workload | Single massive model training | Multiple concurrent model training |
| Programming Model | Cerebras Software Platform | CUDA ecosystem |

The WSE-3 particularly excels when training models that are too large to fit into the memory of a single GPU. Its unified memory architecture across the entire wafer eliminates the complex and often inefficient process of model parallelism across multiple discrete devices. This simplifies model distribution and reduces communication overhead.
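To make "too large to fit into the memory of a single GPU" concrete, the sketch below estimates the memory needed for model weights alone and the minimum number of 80 GB devices required to hold them. It assumes 2 bytes per parameter (16-bit weights) and ignores optimizer state, gradients, and activations, which add substantially to the real footprint; the function names are illustrative.

```python
import math

H100_HBM_GB = 80  # per-GPU HBM capacity from the comparison table


def weight_footprint_gb(params: float, bytes_per_param: int = 2) -> float:
    """Memory for the weights only, in GB (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


def min_devices(params: float, device_memory_gb: float, bytes_per_param: int = 2) -> int:
    """Smallest number of devices whose combined memory can hold the weights."""
    return math.ceil(weight_footprint_gb(params, bytes_per_param) / device_memory_gb)


for params in (7e9, 70e9, 1e12):
    print(
        f"{params:.0e} params: {weight_footprint_gb(params):,.0f} GB of weights, "
        f"at least {min_devices(params, H100_HBM_GB)} x 80 GB GPUs"
    )
```

Any model needing more than one device under this lower bound already requires some form of model parallelism on a GPU cluster.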

However, Nvidia’s long-established CUDA ecosystem supports a significantly broader range of workloads and has more mature, widely adopted tooling. While Cerebras focuses on specialized power for massive model training, Nvidia offers versatility and a vast developer community, which shapes the software stack choices of many organizations.

Cerebras Systems Financial Performance Analysis

Cerebras Systems has demonstrated compelling financial growth and, notably, profitability in a sector often characterized by heavy investment and delayed returns. This financial health provides a strong foundation for its IPO.

| Metric | 2024 Performance | 2025 Performance |
|---|---|---|
| Revenue | $310 million | $510 million |
| Gross Profit | $124 million | $214 million |
| Operating Income | $42 million | $87.9 million |
| R&D Investment | $180 million | $220 million |
| Customer Concentration | Top 3 customers: 65% of revenue | Top 3 customers: 58% of revenue |

The company achieved a revenue growth of 64.5% year-over-year, which indicates strong market adoption and increasing demand for its specialized AI acceleration solutions. Additionally, the decrease in customer concentration from 65% to 58% for the top three customers is a positive sign. It suggests Cerebras is successfully diversifying its client base, even while securing the monumental OpenAI deal.

Research and Development (R&D) investment increased by 22% year-over-year. This sustained investment highlights Cerebras’s commitment to continued innovation and maintaining its technological edge in a highly competitive market. Furthermore, achieving profitability at this stage is quite unusual for semiconductor companies, especially those pioneering new architectures. This suggests efficient scaling of operations and strong unit economics, which could be attractive to investors.
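The growth and margin figures discussed above can be reproduced from the table with a few lines of arithmetic. This is a minimal sketch that takes each table row as given (values in millions of USD); the helper names are illustrative.

```python
# Figures copied from the financial table, in millions of USD.
financials = {
    2024: {"revenue": 310.0, "gross_profit": 124.0, "operating_income": 42.0, "rnd": 180.0},
    2025: {"revenue": 510.0, "gross_profit": 214.0, "operating_income": 87.9, "rnd": 220.0},
}


def yoy_growth(previous: float, current: float) -> float:
    """Year-over-year growth as a percentage."""
    return (current - previous) / previous * 100


def margin(part: float, revenue: float) -> float:
    """Share of revenue as a percentage."""
    return part / revenue * 100


prev, curr = financials[2024], financials[2025]
print(f"Revenue growth: {yoy_growth(prev['revenue'], curr['revenue']):.1f}%")
print(f"R&D growth: {yoy_growth(prev['rnd'], curr['rnd']):.1f}%")
for year, row in financials.items():
    print(
        f"{year}: gross margin {margin(row['gross_profit'], row['revenue']):.1f}%, "
        f"operating margin {margin(row['operating_income'], row['revenue']):.1f}%"
    )
```

Note that the R&D row exceeds gross profit in both years, so operating income cannot be derived from the other rows; the figures are best checked against the S-1 itself.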

Market Impact of Cerebras Systems IPO

The Cerebras Systems IPO is poised to create significant ripples across the AI accelerator market and the broader technology sector. Its public debut marks a new chapter for an alternative AI hardware architecture.

The AI accelerator market experienced substantial growth, reaching $85 billion in 2025. Projections indicate it will expand further to an estimated $150 billion by 2028. Within this surging market, Cerebras’s IPO stands out as the first pure-play wafer-scale AI company to go public. This event will establish a new public benchmark for evaluating and valuing alternative AI architectures, moving beyond the traditional GPU-centric view.

Nvidia, the current market leader, boasts a market capitalization exceeding $2 trillion. In comparison, Cerebras’s projected valuation of $22-28 billion represents roughly 1.1% to 1.4% of a $2 trillion market capitalization. This indicates a more realistic and perhaps less hype-driven pricing strategy, focusing on its niche strengths rather than attempting to directly rival Nvidia’s broad market dominance.
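The market-size and valuation figures above imply a growth rate and a size ratio that are easy to check. The sketch below uses only the numbers quoted in this section; because Nvidia’s market capitalization is given as "exceeding" $2 trillion, the computed shares are upper bounds.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.2 means 20% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


# AI accelerator market size in billions of USD, as quoted above.
market_growth = cagr(85.0, 150.0, years=2028 - 2025)
print(f"Implied market CAGR 2025-2028: {market_growth * 100:.1f}%")

# Cerebras valuation range relative to a $2 trillion Nvidia market cap.
NVIDIA_CAP_BN = 2000.0  # stated lower bound, so the shares below are upper bounds
low_share = 22.0 / NVIDIA_CAP_BN * 100
high_share = 28.0 / NVIDIA_CAP_BN * 100
print(f"Cerebras valuation as share of Nvidia: {low_share:.1f}% to {high_share:.1f}%")
```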

Beyond its direct impact, the IPO has the potential to trigger increased Mergers & Acquisitions (M&A) activity within the AI hardware space. Larger tech companies, such as cloud providers (AWS, Azure, GCP) or established semiconductor leaders (Intel, AMD), might view Cerebras’s success as a signal to acquire specialized AI hardware capabilities. This strategic move could help them counter Nvidia’s growing influence and secure their position in the evolving AI landscape.

Risks for Cerebras Systems Post-IPO

While Cerebras Systems presents compelling advantages, operators must also consider the inherent risks associated with its technology and market position post-IPO. These risks span technical, market, and operational domains.

Technical Risks

- Wafer-scale manufacturing yields: The complexity of wafer-scale production means that manufacturing yields could fluctuate, directly impacting the company’s production capacity and cost-efficiency. Maintaining consistent high yields is a constant challenge.

- Future WSE generations: Cerebras must continually innovate and ensure that future generations of its Wafer-Scale Engine maintain or extend their performance leadership. Failure to do so could allow competitors to close the gap.

- Software ecosystem expansion: The Cerebras Software Platform needs to expand significantly to eventually match the breadth and maturity of Nvidia’s CUDA ecosystem. A limited software environment could deter broader adoption.

Market Risks

- IPO valuation assumptions: The current IPO valuation heavily assumes continued robust growth in AI investment. Any slowdown in global AI spending could negatively impact revenue projections and stock performance.

- Economic downturn: A broader economic downturn could lead to reduced enterprise AI spending, particularly for high-capital expenditure items like Cerebras systems. This could impact sales volumes.

- Nvidia’s next-generation chips: Nvidia’s newer chips, such as the B100 built on the Blackwell architecture, are expected to deliver significant performance improvements. These advancements could narrow the performance gap with Cerebras, especially for certain workloads, affecting Cerebras’s competitive edge. Advances from other AI players such as Google also point to increased competition across the board.

Operational Risks

- Dependence on TSMC: Cerebras relies on TSMC for its wafer production. This creates a single point of failure in its supply chain, making it vulnerable to geopolitical issues, manufacturing disruptions, or capacity constraints at TSMC.

- Limited customer base: Despite diversification efforts, the current customer base remains relatively concentrated. A highly specialized product with a high price point naturally involves fewer clients.

- High system costs: Each CS-2 system carries a significant cost, estimated at $2-3 million. This high capital expenditure inherently limits the addressable market size, primarily targeting organizations with very large-scale AI needs.

Case Study: Implementing Cerebras CS-2 for LLM Training

To illustrate the practical implications of Cerebras technology, consider a common scenario for advanced AI operators: training an extremely large language model (LLM).

Scenario Details

A leading research organization is tasked with training a complex 1 trillion parameter model. The challenge lies in efficiently handling such a massive scale.

GPU Approach

Using a traditional GPU-based architecture, this task would necessitate deploying 512 Nvidia H100 GPUs. These GPUs would typically be distributed across 64 separate servers, requiring a large-scale cluster. The engineering team would then spend approximately three weeks implementing and debugging complex model parallelism strategies to effectively distribute the model across these numerous devices. Training the model would then take around 28 days. However, due to significant inter-GPU communication overhead, the operational efficiency would drop to approximately 45%. The total cloud compute fees for this duration are estimated at $1.9 million.

Cerebras Approach

Conversely, with the Cerebras approach, only two CS-2 systems would be required. These systems are designed to handle the entire model without the need for partitioning across multiple devices. The programming effort, utilizing Cerebras’s specialized software tools, would take about five days, significantly less than the GPU approach. The actual training process would complete in just nine days. Crucially, the efficiency would be much higher, around 78%, because the on-wafer architecture virtually eliminates inter-chip communication overhead. The total cost is estimated at $1.4 million: $1.2 million for hardware amortization plus $200,000 for power.

Outcome Analysis

In this comparison, the Cerebras approach completes training in about a third of the time (9 days vs. 28 days), and the estimated cost is also lower ($1.4 million vs. $1.9 million) despite the high capital expenditure. For organizations that frequently train multiple large models, a roughly 3x speedup can justify the initial capital investment in Cerebras hardware. This rapid turnaround allows for faster iteration, research, and deployment of advanced AI capabilities, underscoring Cerebras’s value proposition for specific high-end workloads, particularly training very large models on large datasets.
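The comparison above can be recomputed directly from the scenario’s figures. The sketch below is a minimal illustration: the class and variable names are hypothetical, three weeks of setup is taken as 21 days, and the scenario numbers themselves are estimates rather than measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingPlan:
    name: str
    setup_days: int      # engineering effort before training starts
    training_days: int   # wall-clock training time
    cost_usd: int        # total estimated cost from the scenario

    @property
    def elapsed_days(self) -> int:
        """Setup plus training, assuming they run back to back."""
        return self.setup_days + self.training_days


gpu = TrainingPlan("512x H100", setup_days=21, training_days=28, cost_usd=1_900_000)
cerebras = TrainingPlan("2x CS-2", setup_days=5, training_days=9, cost_usd=1_400_000)

training_speedup = gpu.training_days / cerebras.training_days
elapsed_speedup = gpu.elapsed_days / cerebras.elapsed_days
cost_saving_pct = (gpu.cost_usd - cerebras.cost_usd) / gpu.cost_usd * 100
# Hourly rate implied by the GPU scenario's cloud bill (512 GPUs, 24 h/day).
implied_gpu_hour_usd = gpu.cost_usd / (512 * gpu.training_days * 24)

print(f"Training speedup: {training_speedup:.2f}x")
print(f"End-to-end speedup incl. setup: {elapsed_speedup:.2f}x")
print(f"Cost saving: {cost_saving_pct:.1f}%")
print(f"Implied GPU rate: ${implied_gpu_hour_usd:.2f} per GPU-hour")
```

The two cost figures are not strictly like for like: the GPU number is a cloud bill, while the Cerebras number is hardware amortization plus power, so the saving depends heavily on the amortization schedule chosen.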

Case Study: Enterprise AI Inference Deployment

Another crucial application area for AI accelerators is inference — deploying trained models for real-time predictions. This case study explores how Cerebras compares to GPUs for enterprise-level inference at scale.

Scenario Details

A major financial services company intends to deploy sophisticated fraud detection models across its global data centers. The primary requirements are high throughput and consistent, low latency.

GPU Approach

To meet the company’s demands, 128 Nvidia A100 GPUs would be distributed across eight distinct geographic locations. This setup would manage approximately 2 million inferences per second. However, network conditions between the various GPU clusters, as well as internal cluster communication, would cause latency to vary significantly, ranging from 15 milliseconds to 45 milliseconds. This variability could impact real-time fraud detection capabilities, where every millisecond counts.

Cerebras Approach

Using Cerebras technology, just four CS-2 systems would be centralized in two critical data centers. This more compact setup can handle an impressive 2.4 million inferences per second. A key advantage is the consistent latency, achieving around 8 milliseconds due to the single-chip processing nature of the wafer-scale engine. While the upfront capital outlay for Cerebras systems is higher, the operational costs are estimated to be 30% lower. This reduction is primarily due to minimized power consumption and cooling requirements associated with fewer, more efficient systems compared to a sprawling GPU cluster.

Operational Impact

The Cerebras solution, while offering superior performance and lower operating costs, introduces a different kind of operational challenge. It requires fewer systems but leads to a higher degree of geographic concentration. To mitigate this potential single point of failure or localized service disruption, the financial services company would implement an active-active redundancy strategy between its two data centers. This helps maintain service availability and resilience, even with the centralized architecture. The choice between these approaches often boils down to balancing upfront investment, operational costs, performance gains, and specific risk tolerance for latency and availability.
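A quick capacity check helps when sizing the active-active setup described above. The sketch below assumes the four systems are split evenly across the two data centers and treats the GPU scenario’s 2 million inferences per second as the required load; both are assumptions for illustration, not figures stated in the scenario.

```python
REQUIRED_INFERENCES_PER_S = 2_000_000  # assumption: GPU throughput equals required load


def per_unit_throughput(total_per_s: float, units: int) -> float:
    """Average inferences per second handled by one accelerator or system."""
    return total_per_s / units


def surviving_capacity(total_per_s: float, sites: int, failed_sites: int = 1) -> float:
    """Capacity left after losing sites, assuming capacity is spread evenly."""
    return total_per_s * (sites - failed_sites) / sites


per_gpu = per_unit_throughput(2_000_000, units=128)
per_system = per_unit_throughput(2_400_000, units=4)
after_failover = surviving_capacity(2_400_000, sites=2)
gpu_latency_spread_ms = 45 - 15  # range quoted for the GPU deployment

print(f"Per A100: {per_gpu:,.0f} inf/s; per CS-2: {per_system:,.0f} inf/s")
print(f"GPU latency spread: {gpu_latency_spread_ms} ms")
print(f"Capacity with one site down: {after_failover:,.0f} inf/s")
print("Covers required load:", after_failover >= REQUIRED_INFERENCES_PER_S)
```

Under these assumptions a single surviving site cannot carry the full load, so an operator would need either extra systems per site or a plan for degraded service during failover.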

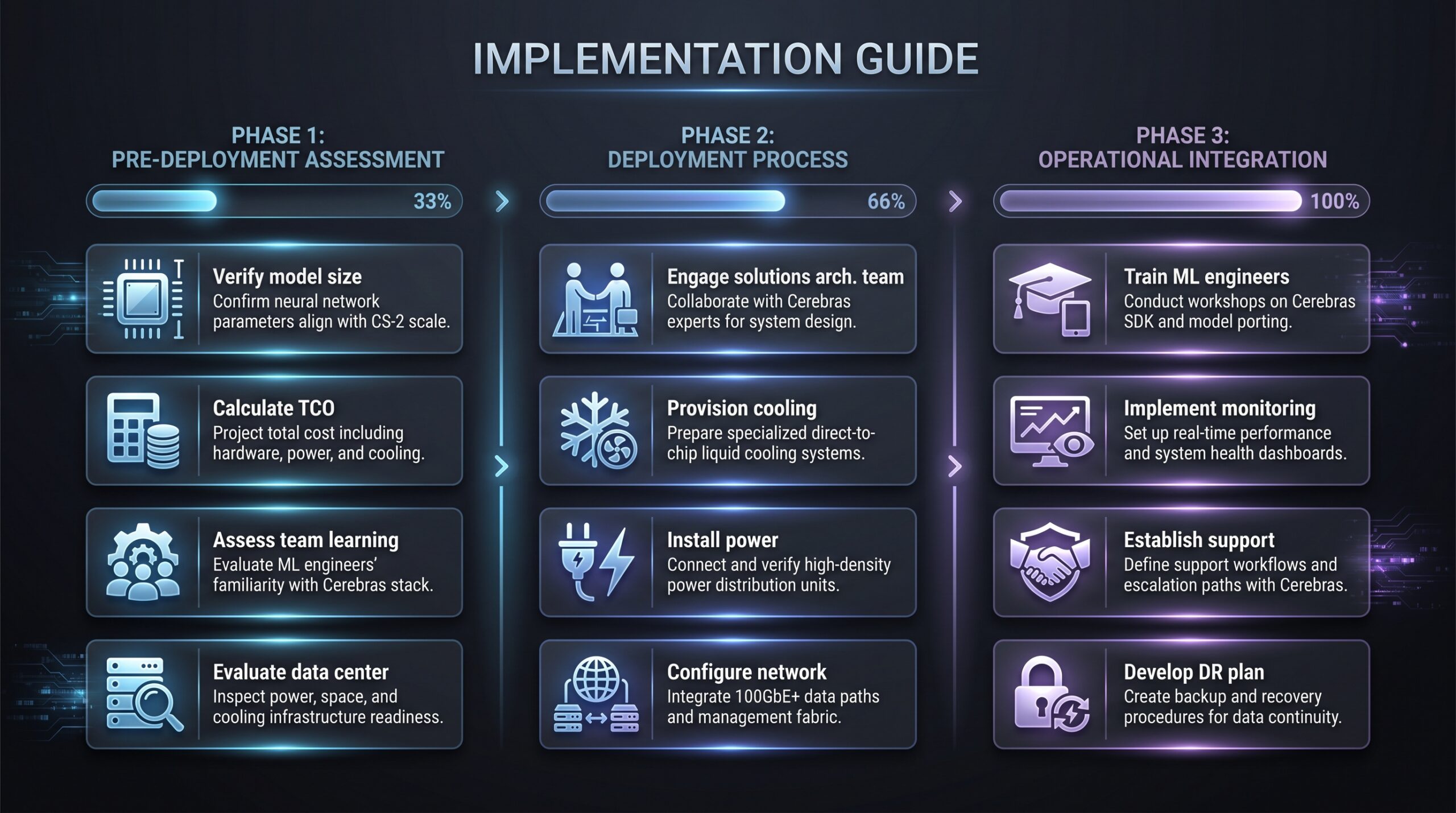

Implementation Checklist for Cerebras Systems

Deploying Cerebras Systems requires a thorough and systematic approach due to their specialized nature and high performance demands. This checklist guides operators through the essential pre-deployment, deployment, and operational integration steps.

Pre-Deployment Assessment

- Verify model size: Confirm that your specific AI models exceed a threshold of 50 billion parameters. This helps justify the specialized architecture and high cost of Cerebras systems.

- Calculate Total Cost of Ownership (TCO): Thoroughly estimate the TCO, which must include not only hardware costs but also significant expenses related to power consumption and the advanced cooling requirements of the CS-2 systems.

- Assess team capability: Evaluate your machine learning team’s readiness and ability to quickly learn and adapt to the Cerebras Software Platform. This includes assessing their existing skills and the potential for upskilling.

- Evaluate data center requirements: Conduct a detailed assessment of your existing data center infrastructure. Focus on available space, floor loading capacity, and crucially, the ability to provide substantial power and specialized cooling.
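The TCO item in this checklist can be roughed out before any vendor conversation. The sketch below is a simplified estimate under loud assumptions: the hardware price is the midpoint of the $2-3 million estimate cited earlier, electrical draw is taken as equal to the 35 kW cooling figure, and the electricity price, PUE, and four-year horizon are hypothetical placeholders to be replaced with your own numbers.

```python
HOURS_PER_YEAR = 8760


def annual_power_cost(load_kw: float, usd_per_kwh: float, pue: float = 1.0) -> float:
    """Yearly electricity cost for a constant load, scaled by facility PUE."""
    return load_kw * HOURS_PER_YEAR * usd_per_kwh * pue


def total_cost_of_ownership(
    hardware_usd: float,
    load_kw: float,
    usd_per_kwh: float,
    years: int,
    pue: float = 1.0,
    annual_support_usd: float = 0.0,
) -> float:
    """Hardware price plus power and support costs over the holding period."""
    yearly = annual_power_cost(load_kw, usd_per_kwh, pue) + annual_support_usd
    return hardware_usd + years * yearly


# All inputs below are assumptions for illustration only.
tco = total_cost_of_ownership(
    hardware_usd=2_500_000,  # midpoint of the $2-3 million estimate
    load_kw=35.0,            # assumes draw roughly equals the cooling figure
    usd_per_kwh=0.10,        # hypothetical electricity price
    years=4,                 # hypothetical amortization horizon
    pue=1.3,                 # hypothetical facility overhead
)
print(f"Estimated 4-year TCO per system: ${tco:,.0f}")
```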

Deployment Process

- Engage solutions architecture team: Proactively work with the Cerebras solutions architecture team. They can provide essential guidance and a design review to ensure optimal integration and performance within your specific environment.

- Provision cooling infrastructure: Prepare to provision appropriate and robust liquid cooling infrastructure. Each CS-2 system typically requires around 35kW of cooling capacity, a much higher demand than standard servers.

- Install dedicated power circuits: Ensure the installation of dedicated 240V power circuits, which are necessary to meet the high electrical demands of the Cerebras systems.

- Configure network connectivity: Establish high-speed network connectivity. A minimum of 100GbE per system is recommended to handle the massive data throughput and ensure efficient data transfer.
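The per-system figures in the deployment steps scale linearly with the number of systems, which makes a first-pass facility estimate straightforward. This sketch assumes electrical load roughly equals the 35 kW cooling figure and computes current as a single-phase, unity-power-factor equivalent at 240 V; real installations are typically three-phase and should be sized by a qualified electrician.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FacilityNeeds:
    cooling_kw: float
    network_gbps: float
    amps_at_supply: float


def facility_needs(
    num_systems: int,
    kw_per_system: float = 35.0,     # cooling figure from the checklist
    gbps_per_system: float = 100.0,  # minimum 100GbE per system
    volts: float = 240.0,            # dedicated circuit voltage from the checklist
) -> FacilityNeeds:
    """Aggregate cooling, network, and approximate current for a deployment."""
    total_kw = num_systems * kw_per_system
    return FacilityNeeds(
        cooling_kw=total_kw,
        network_gbps=num_systems * gbps_per_system,
        amps_at_supply=total_kw * 1000 / volts,  # I = P / V, simplified
    )


needs = facility_needs(num_systems=4)
print(f"Cooling: {needs.cooling_kw:.0f} kW")
print(f"Network: {needs.network_gbps:.0f} Gb/s aggregate")
print(f"Approximate current at 240 V: {needs.amps_at_supply:.0f} A")
```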

Operational Integration

- Train ML engineers: Invest in comprehensive training for your machine learning engineers on the Cerebras Software Platform. This ensures they can effectively utilize the hardware for model development and deployment.

- Implement monitoring: Deploy robust monitoring tools to track system health, performance metrics, and resource utilization. Proactive monitoring is key to maintaining optimal uptime and identifying issues quickly.

- Establish support contract: Secure a comprehensive support contract with Cerebras, ideally for 24/7 response. Given the mission-critical nature of these systems, rapid issue resolution is crucial.

- Develop disaster recovery plan: Because of the centralized nature of some Cerebras deployments, develop a meticulous disaster recovery plan. This should outline procedures for data backup, system restoration, and failover strategies to ensure business continuity.

Risk Mitigation Strategies

Adopting any cutting-edge technology, especially one as specialized as Cerebras Systems, requires a proactive approach to risk mitigation. Operators should consider strategies across technical, financial, and strategic dimensions.

Technical Risk Mitigation

- Maintain GPU cluster: Continue to maintain and utilize a GPU cluster for smaller models and general development tasks. This hybrid approach allows for flexibility and reduces reliance on a single architecture.

- Implement comprehensive backup: Establish robust model backup and versioning protocols. This safeguards developmental progress and allows for quick recovery in case of system failures or programming errors.

- Negotiate Service Level Agreements (SLAs): Secure stringent SLAs with Cerebras. These agreements should specify performance guarantees, uptime commitments, and rapid response times for support issues.

- Develop cross-trained staff: Prevent knowledge silos by cross-training multiple staff members on the Cerebras Software Platform and system operations. This ensures redundancy in expertise and operational resilience.

Financial Risk Mitigation

- Structure as operating lease: Consider structuring the acquisition of Cerebras systems as an operating lease rather than a direct capital purchase. This can help manage cash flow and potentially reduce upfront capital expenditure.

- Start with a pilot project: Initiate with a smaller pilot project before committing to a full-scale deployment. A pilot allows for thorough testing of the technology’s suitability and proves its Return on Investment (ROI) in your specific environment.

- Negotiate volume discounts: If planning to deploy multiple systems, negotiate volume discounts with Cerebras. This can significantly reduce the overall cost per unit.

- Include performance clauses: Incorporate performance clauses into purchase agreements. These clauses can provide financial recourse or contractual adjustments if the systems do not meet agreed-upon performance metrics.

Strategic Risk Mitigation

- Diversify AI accelerator portfolio: Avoid vendor lock-in by diversifying your AI accelerator portfolio across multiple vendors. This strategy improves resilience and provides leverage in negotiations.

- Monitor Cerebras’s financial health: Continuously monitor Cerebras’s financial health post-IPO. This vigilance can provide early warning signs of any operational or strategic challenges the company might face.

- Develop migration plan: Create a contingency migration plan to alternative platforms. This ensures that if Cerebras technology no longer meets your needs or its support falters, you have a clear path to transition workloads.

- Participate in advisory board: Engage actively by participating in Cerebras’s customer advisory board, if one exists. This allows you to influence product roadmap development and stay abreast of upcoming changes.

Strategic Importance of Ecosystem Compatibility

When integrating advanced AI hardware like Cerebras Systems, the compatibility with existing software ecosystems and future development pipelines is paramount. Operators should not only assess raw performance but also the ease of integration with frameworks like PyTorch or TensorFlow, and the availability of development tools and libraries. A powerful chip is only as useful as its accessible software interface. Consider the long-term implications for developer productivity and the potential for lock-in with proprietary platforms.

FAQ: Cerebras Systems IPO Questions

What is Cerebras Systems’ ticker symbol?

Cerebras will trade on Nasdaq under ticker symbol “CBRS” following its IPO. The company confirmed this in their April 17, 2026, SEC filing, making it straightforward for investors to track once trading begins.

When will Cerebras Systems IPO price be set?

IPO pricing typically occurs once the roadshow concludes, usually the evening before shares begin trading on the exchange. Based on the current timeline, expect the pricing to be set in late Q2 2026. Keep an eye on official SEC filings for the precise date.

How does Cerebras differ from traditional AI chips?

Cerebras utilizes a unique wafer-scale integration approach. This means that entire silicon wafers are designed and fabricated as single, extremely large chips. This design fundamentally avoids the inter-chip communication overhead that typically limits the performance of traditional GPU clusters when processing massive AI models. The result is significantly faster communication and processing for specific, large-scale workloads.

Is Cerebras profitable?

Yes, Cerebras Systems reported a profit of $87.9 million on $510 million in revenue in their latest financial results. This profitability is considered unusual for semiconductor companies, especially those still in advanced growth stages and pioneering entirely new architectures, signaling strong financial management and market acceptance.

What is the OpenAI relationship?

OpenAI has made a substantial commitment to Cerebras, agreeing to spend over $20 billion on its AI chips. In return for this significant investment and partnership, OpenAI has also received an equity stake in Cerebras. This deep collaboration provides Cerebras with considerable revenue visibility and further validates its technology in the eyes of the AI industry.

How can investors participate in the IPO?

The majority of initial IPO allocation typically goes to institutional investors, such as large funds and wealth management firms. However, retail investors can purchase shares once public trading commences through their standard brokerage accounts. It’s advisable to consult with a financial advisor for specific investment strategies regarding IPOs.

What to Do Next: Action Steps for Operators

For AI operators considering the implications of Cerebras Systems’ IPO and technology, a structured approach to evaluation and planning is essential.

Cerebras IPO: Operator Action Steps

Navigating the Cerebras IPO and its technological implications requires a multi-phased approach for AI operators. This action plan categorizes critical tasks into immediate, short-term, and long-term steps to ensure thorough assessment and strategic positioning.

- Immediate Actions (Next 7 Days):

• Review Cerebras’s S-1 filing on the SEC EDGAR system once available.

• Assess current AI workloads for potential Cerebras suitability.

• Contact the Cerebras sales team for a technical briefing.

• Analyze power and cooling requirements for CS-2 systems.

- Short-Term Planning (Next 30 Days):

• Develop a business case for a Cerebras pilot project.

• Monitor IPO pricing and initial trading performance closely.

• Evaluate staffing needs for Cerebras Software Platform expertise.

• Attend Cerebras technology deep dive sessions.

- Long-Term Strategy (Next 90 Days):

• Decide on a build vs. buy approach for AI infrastructure.

• Negotiate an enterprise agreement if pursuing deployment.

• Develop a comprehensive hybrid architecture plan combining Cerebras and GPUs.

• Establish a performance benchmarking methodology for key workloads.

Immediate Actions (Next 7 Days)

- Review S-1 filing: Once available, thoroughly review Cerebras’s S-1 filing on the SEC EDGAR system. This document provides critical financial, operational, and risk information.

- Assess AI workloads: Evaluate your current AI workloads to determine if any could specifically benefit from Cerebras’s wafer-scale architecture. Focus on massive model training or high-throughput, low-latency inference.

- Contact Cerebras sales: Reach out to the Cerebras sales team to request a detailed technical briefing. This will provide deeper insight into their technology and how well it fits your infrastructure.

- Analyze infrastructure requirements: Begin a preliminary analysis of the power and cooling requirements necessary to host CS-2 systems within your existing or planned data centers.

Short-Term Planning (Next 30 Days)

- Develop pilot business case: Create a compelling business case for a Cerebras pilot project. This should outline expected ROI, costs, and key performance indicators.

- Monitor IPO performance: Closely monitor the IPO pricing and initial trading performance of Cerebras shares to gauge market sentiment and potential investment opportunities.

- Evaluate staffing needs: Assess the current skill sets of your engineering team and identify any staffing or training needs for proficiency with the Cerebras Software Platform.

- Attend deep dive sessions: Participate in any Cerebras technology deep dive sessions or webinars offered. These can provide invaluable technical details and best practices.

Long-Term Strategy (Next 90 Days)

- Decide build vs. buy: Make a strategic decision on whether to build your AI infrastructure internally using Cerebras or to leverage external cloud services that offer Cerebras as a managed solution.

- Negotiate enterprise agreement: If proceeding with direct deployment, enter into negotiations for an enterprise agreement with Cerebras. This will cover purchasing, support, and future upgrades.

- Develop hybrid architecture plan: Plan for a hybrid AI infrastructure that intelligently combines Cerebras systems for specialized workloads with traditional GPUs for more general-purpose tasks. This ensures optimal resource allocation.

- Establish benchmarking methodology: Implement a robust performance benchmarking methodology to continuously evaluate the efficiency and effectiveness of Cerebras systems against your specific AI workloads.
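A benchmarking methodology can start from a small, hardware-agnostic timing harness that is then pointed at real workloads. The sketch below uses only the Python standard library; `toy_workload` is a stand-in placeholder, and nothing here calls any Cerebras or Nvidia API.

```python
import statistics
import time
from typing import Callable


def benchmark(workload: Callable[[], object], warmup: int = 2, repeats: int = 5) -> dict:
    """Time a callable several times after warm-up runs and summarize the samples."""
    for _ in range(warmup):
        workload()  # warm caches and lazy initialization before measuring
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        workload()
        samples.append(time.perf_counter() - start)
    return {
        "runs": repeats,
        "mean_s": statistics.mean(samples),
        "stdev_s": statistics.stdev(samples) if len(samples) > 1 else 0.0,
        "min_s": min(samples),
    }


def toy_workload() -> int:
    """Placeholder workload; replace with a real training or inference step."""
    return sum(i * i for i in range(100_000))


result = benchmark(toy_workload)
print(f"mean {result['mean_s'] * 1000:.2f} ms over {result['runs']} runs")
```

Running the same harness with identical workloads on each platform, and recording the spread as well as the mean, keeps comparisons between accelerators consistent over time.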

Key Takeaways from Cerebras Systems IPO

- Cerebras Systems (CBRS) is targeting a Q2 2026 Nasdaq IPO, aiming for a $22-28 billion valuation, backed by a significant $20B+ commitment and equity stake from OpenAI.

- Its Wafer-Scale Engine 3 (WSE-3) offers a fundamental architectural shift, avoiding the inter-chip communication bottlenecks that slow the training of massive AI models and potentially cutting training times from weeks to days.

- Cerebras reported $510 million in revenue and $87.9 million in profit in 2025, demonstrating rare profitability in deep tech hardware.

- The IPO establishes a new benchmark for pure-play wafer-scale AI companies and could trigger M&A activity in the AI accelerator market.

- Key risks include wafer-scale manufacturing yields, software ecosystem breadth compared to CUDA, dependence on TSMC, and the high cost of CS-2 systems.

- Case studies demonstrate significant time and cost savings for large-scale LLM training and improved, consistent latency for enterprise inference compared to traditional GPU approaches.

- Operators must conduct thorough pre-deployment assessments, plan for specialized cooling and power, train staff, and implement robust risk mitigation strategies for successful Cerebras integration.