A new research paper, “Reinforced Agent: Inference-Time Feedback for Tool-Calling Agents,” introduces a novel approach to enhance the reliability of LLM-powered tool-calling agents by integrating a specialized reviewer agent into the execution loop. This reviewer proactively evaluates provisional tool calls before they are executed, shifting from traditional post-hoc error detection to real-time mitigation and course correction, thereby improving agent performance on tasks requiring tool selection and parameter accuracy.

- Reinforced Agent introduces an inference-time feedback loop where a secondary LLM reviews the primary agent’s tool calls before execution.

- This proactive review mechanism identifies and corrects errors in tool selection, parameters, and scope recognition, improving agent reliability.

- The system quantifies the trade-off between helpfulness (correcting errors) and harmfulness (degrading correct responses) of the reviewer.

- Evaluations show a +5.5% improvement in irrelevance detection and +7.1% on multi-turn tasks, with reviewer model choice being critical.

What changed

Traditionally, evaluating tool-calling agents has been a post-hoc exercise. Errors in tool selection, parameter accuracy, or scope recognition were identified after a trajectory completed, leading to subsequent prompt-tuning or retraining efforts [2]. This meant that once an agent made a decision, it was executed, and any issues were only discovered after the fact, preventing real-time course correction.

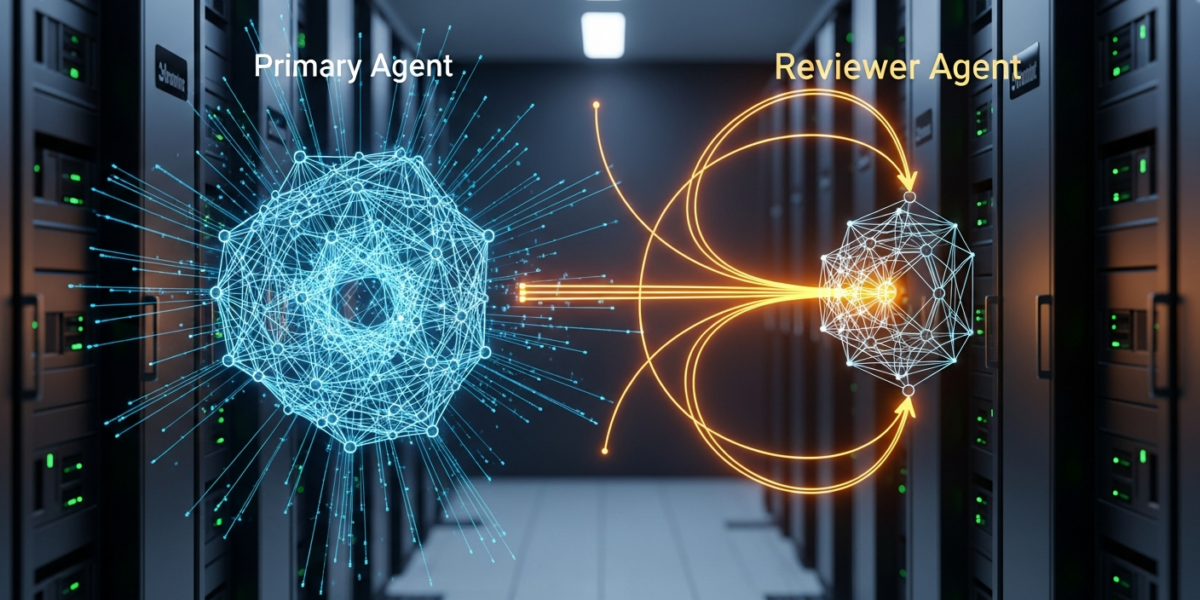

The “Reinforced Agent” paper, published on arXiv, moves this evaluation into the active execution loop at inference time [1]. Instead of waiting for a task to complete, a specialized “reviewer agent” scrutinizes provisional tool calls before they are executed. This architecture establishes a clear separation of concerns: a primary agent handles execution, while a secondary agent provides real-time feedback and error mitigation. This approach contrasts with methods like ReAgent-U, which use textual critiques exclusively during training and operate as standard agents at inference time without external guidance [3].

This change enables proactive error correction, allowing the system to identify and potentially rectify mistakes before they impact the outcome. The researchers also introduce new “Helpfulness-Harmfulness” metrics to systematically quantify the trade-off introduced by this multi-agent setup, measuring how often the reviewer corrects errors versus how often it degrades correct responses [2].

How it works

The core of the Reinforced Agent architecture lies in its two-part system: a base agent and a reviewer agent. When the base agent proposes a tool call, instead of executing it directly, the provisional call is first routed to the reviewer agent. The reviewer’s task is to assess the proposed tool call for potential issues, such as incorrect tool selection, inaccurate parameters, or misinterpretation of the task’s scope [2].

If the reviewer identifies an issue, it provides feedback to the base agent, which can then refine its tool call before execution. This iterative feedback loop happens entirely at inference time, so the agent can course-correct in real time based on the reviewer’s input. This is distinct from reinforcement learning from human feedback (RLHF), where human ratings train a reward model that guides the agent during a training phase [6]. Here, the feedback loop is automated and occurs dynamically during the agent’s operation.
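The loop described above can be sketched in a few lines of Python. This is a minimal illustration, not the paper's implementation: the `ToolCall` and `Review` types, the callable signatures, the `max_rounds` cap, and the stub agents are all hypothetical stand-ins for real LLM calls.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ToolCall:
    name: str
    arguments: dict


@dataclass
class Review:
    approved: bool
    feedback: str = ""


# Hypothetical signatures: the base agent maps (task, optional feedback) to a
# provisional tool call; the reviewer maps (task, call) to a verdict.
BaseAgent = Callable[[str, Optional[str]], ToolCall]
Reviewer = Callable[[str, ToolCall], Review]


def reviewed_tool_call(task: str, base_agent: BaseAgent, reviewer: Reviewer,
                       max_rounds: int = 2) -> ToolCall:
    """Return a tool call that has passed review or exhausted max_rounds."""
    call = base_agent(task, None)
    for _ in range(max_rounds):
        review = reviewer(task, call)
        if review.approved:
            break
        # Feed the critique back so the base agent can revise its call.
        call = base_agent(task, review.feedback)
    # Assumption: after the final round the latest call is returned as-is.
    return call


def stub_base_agent(task: str, feedback: Optional[str]) -> ToolCall:
    # Toy stand-in for an LLM: picks the wrong tool until it gets feedback.
    if feedback is None:
        return ToolCall("get_weather", {"city": "Paris"})
    return ToolCall("get_forecast", {"city": "Paris", "days": 3})


def stub_reviewer(task: str, call: ToolCall) -> Review:
    # Toy rule standing in for an LLM reviewer's judgement.
    if "forecast" in task and call.name != "get_forecast":
        return Review(False, "The task asks for a forecast; use get_forecast.")
    return Review(True)


final_call = reviewed_tool_call("3-day forecast for Paris",
                                stub_base_agent, stub_reviewer)
print(final_call)
```

Only the call returned by `reviewed_tool_call` would be passed on for execution. Capping the number of rounds is one possible design choice for bounding the extra latency of a disagreeing reviewer.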

The effectiveness of this system is measured using two key metrics:

- Helpfulness: The percentage of errors made by the base agent that the reviewer agent successfully corrects.

- Harmfulness: The percentage of correct responses from the base agent that the reviewer agent incorrectly flags or degrades [2].
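As a rough illustration of the two definitions above, both metrics can be computed from per-example correctness before and after review. The `Outcome` record and the toy data are assumptions made for this sketch; the paper's own evaluation code is not shown in this article.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Outcome:
    base_correct: bool   # was the base agent's provisional call correct?
    final_correct: bool  # was the call correct after the review loop?


def helpfulness_harmfulness(outcomes: List[Outcome]) -> Tuple[float, float]:
    """Return (helpfulness %, harmfulness %) as defined in the list above."""
    base_errors = [o for o in outcomes if not o.base_correct]
    base_correct = [o for o in outcomes if o.base_correct]
    # Helpfulness: share of base-agent errors that end up correct.
    fixed = sum(o.final_correct for o in base_errors)
    # Harmfulness: share of correct base responses that end up wrong.
    broken = sum(not o.final_correct for o in base_correct)
    helpfulness = 100.0 * fixed / len(base_errors) if base_errors else 0.0
    harmfulness = 100.0 * broken / len(base_correct) if base_correct else 0.0
    return helpfulness, harmfulness


# Toy data (invented): 4 base errors, 2 of them fixed;
# 6 correct base responses, 1 of them degraded.
outcomes = (
    [Outcome(False, True)] * 2 + [Outcome(False, False)] * 2
    + [Outcome(True, True)] * 5 + [Outcome(True, False)] * 1
)
helpfulness, harmfulness = helpfulness_harmfulness(outcomes)
print(f"helpfulness={helpfulness:.1f}%  harmfulness={harmfulness:.1f}%")
```

Note that the two percentages use different denominators (base errors versus base successes), so they cannot be subtracted directly to get a net accuracy change.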

These metrics are crucial for understanding the net positive value of a given reviewer model or prompt. The paper highlights that reviewer model choice is critical, with different models yielding varying benefit-to-risk ratios. For instance, the reasoning model o3-mini achieved a 3:1 benefit-to-risk ratio, outperforming GPT-4o’s 2.1:1 ratio [2]. Additionally, automated prompt optimization via GEPA provided further performance gains, demonstrating that the reviewer component can be systematically improved without needing to retrain the base agent [2].

Why it matters for operators

For operators deploying LLM-powered agents, particularly those relying on tool-calling for critical tasks, the Reinforced Agent approach offers a tangible path to increased reliability and reduced operational overhead. The current state of LLM agents often involves a significant amount of post-deployment monitoring and reactive prompt engineering to address errors that only surface during live execution. This new paradigm directly tackles that by integrating a proactive quality assurance step into the agent’s decision-making process.

Catching and correcting erroneous tool calls before they execute means fewer failed API calls, less wasted compute, and a higher success rate for end-user interactions. Consider an agent managing financial transactions or controlling industrial equipment; an incorrect tool call could have severe consequences. By introducing an inference-time reviewer, operators can build a more robust safety net, mitigating risks associated with LLM hallucinations or misinterpretations. This is a significant step towards making LLM agents production-ready for high-stakes environments.

The FrontierWisdom view is that this separation of concerns (execution and review) will become a standard architectural pattern for complex agentic systems, allowing for independent optimization and iteration on each component. It also suggests that a market for specialized “reviewer” models, perhaps smaller, highly tuned LLMs focused solely on validation, could emerge as a distinct product category.

Benchmarks and evidence

The Reinforced Agent framework was evaluated on two distinct benchmarks: BFCL (single-turn tasks) and Tau2-Bench (multi-turn stateful scenarios) [2]. The results demonstrate measurable improvements:

- Irrelevance Detection: The system achieved a +5.5% improvement in detecting irrelevant tool calls [2].

- Multi-Turn Tasks: Performance on multi-turn stateful scenarios saw a +7.1% increase [2].

A key finding from the evaluation pertains to the critical role of reviewer model choice. The paper compared the benefit-to-risk ratios of different models:

- o3-mini: Demonstrated a 3:1 benefit-to-risk ratio [2].

- GPT-4o: Achieved a 2.1:1 benefit-to-risk ratio [2].

This indicates that o3-mini was more effective at providing net positive value by correcting errors more frequently than it introduced new ones, compared to GPT-4o. Further optimization through automated prompt engineering via GEPA yielded additional gains, improving performance by +1.5% to +2.8% [2]. The primary error mode identified for the reviewer was “over-skepticism,” where it incorrectly flagged correct responses [1].
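To make the benefit-to-risk idea concrete, the sketch below assumes the ratio is the number of base-agent errors the reviewer corrects divided by the number of correct responses it degrades. That definition is an assumption for illustration (the article does not give the paper's exact formula), and the counts are invented rather than taken from the paper.

```python
def benefit_to_risk(corrected: int, degraded: int) -> float:
    """Corrected errors per degraded correct response (assumed definition)."""
    if degraded == 0:
        return float("inf")  # the reviewer never hurt a correct response
    return corrected / degraded


def net_accuracy_change(corrected: int, degraded: int, total: int) -> float:
    """Net change in accuracy, in percentage points, over `total` examples."""
    return 100.0 * (corrected - degraded) / total


# Invented counts for a hypothetical 200-example evaluation.
corrected, degraded, total = 18, 8, 200
ratio = benefit_to_risk(corrected, degraded)
delta = net_accuracy_change(corrected, degraded, total)
print(f"benefit-to-risk = {ratio:.2f}:1, net change = {delta:+.1f} points")
```

Under this reading, any ratio above 1:1 yields a net gain, and a higher ratio means each degraded response is offset by more corrected ones.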

Risks and open questions

While the Reinforced Agent approach offers significant advantages, operators should be aware of potential risks and open questions:

- Increased Latency: Introducing an additional LLM into the inference loop inherently adds latency. For real-time applications, the overhead introduced by the reviewer agent needs careful consideration and optimization.

- Reviewer Error Modes: The paper identifies “over-skepticism” as a primary error mode for the reviewer [1]. A reviewer that is too cautious could lead to unnecessary re-prompts or even prevent correct actions, impacting user experience and agent efficiency. Optimizing the helpfulness-harmfulness trade-off is crucial.

- Cost Implications: Running an additional LLM for every tool call will increase operational costs. Operators need to weigh the reliability benefits against the increased inference costs, especially for high-volume applications.

- Complexity of Reviewer Design: While the paper shows prompt optimization can improve reviewer performance, designing effective reviewer prompts and models that generalize across diverse tool sets and tasks remains a challenge. The complexity of the reviewer itself could become a new source of errors.

- Scalability of Multi-Agent Interactions: As agent systems become more complex, managing the interactions and potential cascading effects between multiple specialized agents (base, reviewer, and potentially others) will require robust orchestration and monitoring frameworks.
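The latency and cost points above lend themselves to a back-of-the-envelope estimate. The sketch below assumes a simple model that is not taken from the paper: every provisional call is reviewed once, and a rejected call triggers exactly one revised base-agent call. The function name, parameters, and example numbers are all hypothetical.

```python
from typing import Tuple


def reviewer_overhead(review_latency_s: float, base_latency_s: float,
                      reject_rate: float) -> Tuple[float, float]:
    """Expected extra LLM calls and extra seconds per tool call.

    Assumed model: one review per provisional call, plus one extra
    base-agent call for the fraction of calls the reviewer rejects.
    """
    if not 0.0 <= reject_rate <= 1.0:
        raise ValueError("reject_rate must be between 0 and 1")
    extra_calls = 1.0 + reject_rate
    extra_latency_s = review_latency_s + reject_rate * base_latency_s
    return extra_calls, extra_latency_s


# Hypothetical numbers: 0.8 s per review, 1.2 s per base call, 25% rejected.
extra_calls, extra_latency_s = reviewer_overhead(0.8, 1.2, 0.25)
print(f"{extra_calls:.2f} extra LLM calls and "
      f"{extra_latency_s:.2f} s extra latency per tool call")
```

Plugging in measured latencies and rejection rates from a real deployment would show whether the reliability gain justifies the overhead for a given workload.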

Sources

- [1] Reinforced Agent: Inference-Time Feedback for Tool-Calling Agents

- [2] arXiv:2604.27233, Reinforced Agent: Inference-Time Feedback for Tool-Calling Agents

- [3] Exploring Reasoning Reward Model for Agents

- [4] Reinforcement Learning – GeeksforGeeks

- [5] How Top AI Labs Are Building RL Agents in 2026

- [6] Reinforcement learning – Wikipedia

- [7] Tool Calling – vLLM

- [8] One Refiner to Unlock Them All: Inference-Time Reasoning Elicitation via Reinforcement Query Refinement