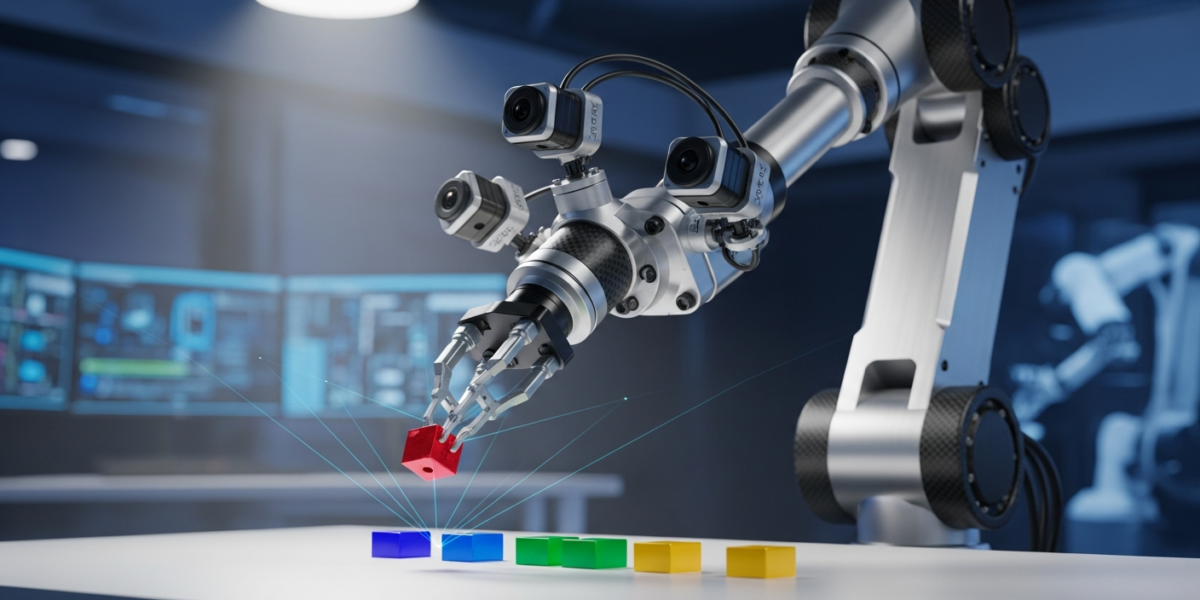

ConsisVLA-4D is an open-source framework designed to overcome the spatiotemporal perception and reasoning limitations of current Vision-Language-Action (VLA) models in robotic manipulation. It focuses on efficient 3D perception and 4D reasoning by integrating cross-view object semantic consistency, cross-object spatial geometric consistency, and cross-scene spatiotemporal consistency. The authors report significant performance gains and inference speedups over existing methods such as OpenVLA.

- ConsisVLA-4D addresses core limitations in spatiotemporal consistency for robotic manipulation, moving beyond 2D observations to robust 3D perception and 4D reasoning.

- It introduces three key components: CV-Aligner for cross-view semantic consistency, CO-Fuser for cross-object geometric consistency, and CS-Thinker for cross-scene spatiotemporal consistency.

- The framework demonstrates substantial improvements, achieving 21.6% and 41.5% performance gains, alongside 2.3x and 2.4x inference speedups compared to OpenVLA on the LIBERO benchmark and real-world platforms, respectively.

- ConsisVLA-4D is open-sourced, providing a practical foundation for operators to integrate more robust and efficient perception into robotic systems.

What changed

Current Vision-Language-Action (VLA) models, often used in robotic control, have largely focused on mapping 2D visual observations to actions. This approach, while effective for some tasks, exhibits notable limitations in understanding the complex spatiotemporal dynamics required for advanced robotic manipulation. Specifically, existing methods frequently rely on additional sensors for spatial representations, introducing computational overhead. Furthermore, visual reasoning is often restricted to future-frame prediction, which lacks alignment with instruction-grounded scene understanding, compromising spatiotemporal consistency.

ConsisVLA-4D directly tackles these issues by introducing a unified framework that prioritizes spatiotemporal consistency in both 3D perception and 4D reasoning. Unlike prior approaches that might treat these aspects separately or rely on computationally expensive external sensors, ConsisVLA-4D integrates them efficiently. It moves beyond single-view RGB object pose estimation, which, while precise, can lack the temporal consistency and robustness needed for real-world robot control, as noted in research on temporally consistent object 6D pose estimation. The core change is a systematic design that ensures object identity, spatial relationships, and scene dynamics are consistently understood across different views and over time, without the typical computational burden.

How it works

ConsisVLA-4D operates through a three-pronged architectural design focused on achieving spatiotemporal consistency at different levels:

- CV-Aligner (Cross-View Object Semantic Consistency): This component ensures that the system consistently identifies and understands objects from multiple camera viewpoints. It filters instruction-relevant regions within the visual input and aligns object identities across these different views. This means if a robot is instructed to pick up a “red block,” the CV-Aligner ensures that the system recognizes the same red block regardless of the camera angle, resolving potential ambiguities that arise from partial occlusion or varying perspectives.

- CO-Fuser (Cross-Object Spatial Geometric Consistency): Building on the CV-Aligner, the CO-Fuser focuses on the spatial relationships between objects. It eliminates ambiguities in spatial relations by using compact latent representations. For instance, if a “red block” is “on top of a blue cylinder,” the CO-Fuser ensures this spatial relationship is consistently understood across all views, preventing misinterpretations of depth or relative positioning. This is crucial for precise manipulation tasks where object interactions are key.

- CS-Thinker (Cross-Scene Spatiotemporal Consistency): This is the framework’s reasoning engine, which ensures consistency as actions unfold over time and the scene changes. The CS-Thinker learns implicit knowledge of local dynamics from the object-semantic tokens provided by the CV-Aligner and global depth information from the geometric tokens of the CO-Fuser. This allows the system to predict how objects will move and interact, and to adapt its understanding as the robot manipulates the environment. It enhances visual reasoning under scene variations, making the robot more robust to dynamic environments.

Together, these components create an efficient pipeline that processes visual information, understands object semantics and spatial geometry, and reasons about temporal changes, all while maintaining a high degree of consistency.
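The data flow between the three components can be sketched in a few lines of Python. This is a toy illustration only: the real modules are learned networks operating on tokens, and every name here (`Detection`, `cv_align`, `co_fuse`, `cs_think`) is a hypothetical stand-in rather than part of the ConsisVLA-4D code. The substring filter, the mean-position fusion, and the displacement calculation are assumptions chosen to make each stage's role visible.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple

Vec3 = Tuple[float, float, float]

# All names here are hypothetical illustrations of the data flow described
# above; they are not the ConsisVLA-4D API, and the real modules are learned.


@dataclass
class Detection:
    view: str       # camera that produced the detection, e.g. "front"
    label: str      # object label for the detected region
    position: Vec3  # rough 3D position estimate in a shared frame


def cv_align(detections: List[Detection], instruction: str) -> Dict[str, List[Detection]]:
    """Stand-in for CV-Aligner: keep instruction-relevant detections and
    group them by object identity across views."""
    groups: Dict[str, List[Detection]] = {}
    for det in detections:
        if det.label in instruction:  # crude substring relevance filter
            groups.setdefault(det.label, []).append(det)
    return groups


def co_fuse(groups: Dict[str, List[Detection]]) -> Dict[str, Vec3]:
    """Stand-in for CO-Fuser: collapse each object's per-view estimates into
    one compact geometric summary (here, the mean position)."""
    fused: Dict[str, Vec3] = {}
    for label, dets in groups.items():
        n = len(dets)
        fused[label] = (
            sum(d.position[0] for d in dets) / n,
            sum(d.position[1] for d in dets) / n,
            sum(d.position[2] for d in dets) / n,
        )
    return fused


def cs_think(current: Dict[str, Vec3], previous: Optional[Dict[str, Vec3]]) -> Dict[str, Vec3]:
    """Stand-in for CS-Thinker: relate the current scene to the previous one
    by computing each object's displacement between time steps."""
    motion: Dict[str, Vec3] = {}
    for label, pos in current.items():
        prev = previous.get(label, pos) if previous else pos
        motion[label] = (pos[0] - prev[0], pos[1] - prev[1], pos[2] - prev[2])
    return motion


detections = [
    Detection("front", "red block", (0.0, 0.0, 0.1)),
    Detection("wrist", "red block", (0.2, 0.0, 0.1)),
    Detection("front", "mug", (0.5, 0.3, 0.0)),
]
groups = cv_align(detections, "pick up the red block")
fused = co_fuse(groups)
motion = cs_think(fused, previous={"red block": (0.1, 0.0, 0.0)})
print(fused, motion)
```

In this sketch the mug is dropped because the instruction never mentions it, the two views of the red block are merged into one position, and the final stage compares that position with the previous time step. The ordering mirrors the description above: semantic alignment first, geometric fusion second, temporal reasoning last.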

Why it matters for operators

For operators in robotics, manufacturing, and logistics, ConsisVLA-4D represents a significant step towards more reliable and autonomous manipulation systems. The persistent challenge with existing VLA models has been their fragility when confronted with real-world variability: slight changes in lighting, object orientation, or camera perspective could derail a task. ConsisVLA-4D’s explicit focus on “spatiotemporal consistency” directly addresses this, meaning robots can maintain a robust understanding of their environment even as they move and interact with objects.

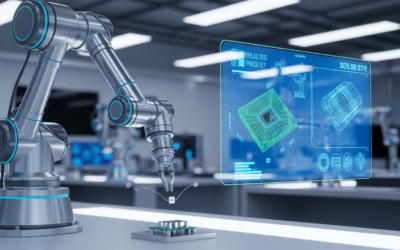

This is not merely an incremental improvement; it’s a foundational shift. The reported 2.3x and 2.4x inference speedups over OpenVLA, together with performance gains of 21.6% on the LIBERO benchmark and 41.5% on real-world platforms, translate directly into tangible operational benefits. Faster inference means quicker decision-making cycles for robots, enabling higher throughput in assembly lines or more agile responses in dynamic environments. The performance gains imply fewer errors, less need for human intervention, and ultimately, a lower cost of operation.

Operators should view ConsisVLA-4D as a critical enabler for deploying robots in more complex, unstructured environments. The open-source nature of the framework means that teams can begin experimenting and integrating these advanced perception capabilities without proprietary licensing hurdles. However, a critical takeaway is that while the framework provides robust perception, successful deployment still hinges on careful task definition and robust error handling at the application layer. The system will tell you what is where and how it’s moving, but the operator is still responsible for defining the why and what-if scenarios for the robot’s actions. Over-reliance on perception alone without considering the broader system’s robustness would be a mistake. This technology empowers better perception, but it does not absolve the need for comprehensive system design and safety protocols.
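One way to picture application-layer error handling is a small guard around each action. The sketch below is an assumption-laden illustration, not part of ConsisVLA-4D: `perceive` and `execute` are hypothetical callables supplied by the operator, and the `confidence` field, the 0.8 threshold, and the retry count are placeholder choices.

```python
from typing import Callable, Optional


def act_with_guard(
    perceive: Callable[[], Optional[dict]],
    execute: Callable[[dict], None],
    max_attempts: int = 3,
    min_confidence: float = 0.8,
) -> str:
    """Run one action only if perception is confident enough.

    `perceive` and `execute` are hypothetical operator-supplied hooks;
    the confidence field and thresholds are illustrative assumptions.
    """
    for _ in range(max_attempts):
        estimate = perceive()
        if estimate is not None and estimate.get("confidence", 0.0) >= min_confidence:
            execute(estimate)
            return "executed"
    # Perception never became trustworthy: hand control to a safe fallback.
    return "safe_stop"


# Usage: perception is uncertain on the first try, confident on the second.
readings = iter([{"confidence": 0.4}, {"confidence": 0.93}])
executed = []
result = act_with_guard(lambda: next(readings), executed.append)
print(result, executed)
```

The point of the design is that the decision to stop lives outside the perception model: whatever the model reports, the operator decides what counts as good enough and what happens when that bar is not met.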

Benchmarks and evidence

ConsisVLA-4D’s efficacy is demonstrated through rigorous evaluation, showcasing substantial improvements over existing Vision-Language-Action (VLA) models, specifically OpenVLA. The framework was tested on both established benchmarks and real-world robotic platforms.

| Metric | LIBERO Benchmark (vs. OpenVLA) | Real-World Platforms (vs. OpenVLA) |
|---|---|---|
| Performance Improvement | 21.6% | 41.5% |
| Inference Speedup | 2.3-fold | 2.4-fold |

These figures indicate that ConsisVLA-4D not only enhances the accuracy and reliability of robotic manipulation tasks but also does so with significantly improved computational efficiency. The consistent performance gains and speedups across both simulated and physical environments underscore the practical applicability and robustness of its spatiotemporal consistency design.
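To make the speedup figures concrete, the arithmetic below converts a speedup factor into per-step latency and control rate. The 200 ms baseline is an assumed placeholder, not a number reported for OpenVLA; only the 2.3x and 2.4x factors come from the table above. The helper names are hypothetical.

```python
def latency_after_speedup(baseline_ms: float, speedup: float) -> float:
    """Per-step latency after applying a speedup factor to a baseline."""
    if baseline_ms <= 0 or speedup <= 0:
        raise ValueError("baseline_ms and speedup must be positive")
    return baseline_ms / speedup


def control_rate_hz(latency_ms: float) -> float:
    """Maximum decision rate if each decision takes latency_ms."""
    return 1000.0 / latency_ms


BASELINE_MS = 200.0  # assumed baseline latency, NOT taken from the source
REPORTED_SPEEDUPS = {"LIBERO": 2.3, "real-world": 2.4}  # from the table

for setting, speedup in REPORTED_SPEEDUPS.items():
    new_ms = latency_after_speedup(BASELINE_MS, speedup)
    print(f"{setting}: {new_ms:.1f} ms per step, {control_rate_hz(new_ms):.1f} Hz")
```

Because latency scales as the inverse of the speedup, the achievable control rate scales linearly with it: whatever the true baseline rate is, a 2.3x speedup allows roughly 2.3 times as many decisions per second.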

How to try it today

ConsisVLA-4D is open-sourced and publicly available, so researchers and developers can explore and integrate it right away. The arXiv paper is the starting point: papers of this kind typically link to an associated GitHub repository or project page, where the source code and potentially pre-trained models are hosted. From there, operators can access the framework’s components directly for hands-on evaluation and customization for specific robotic manipulation tasks.

Risks and open questions

While ConsisVLA-4D offers significant advancements, operators should consider several factors. The “implicit knowledge of local dynamics” learned by CS-Thinker, while efficient, may still struggle with highly novel or rapidly changing physics outside its training distribution. The robustness of the “compact latent representations” used by CO-Fuser against adversarial inputs or subtle sensor noise in real-world, non-ideal conditions warrants further investigation. Furthermore, while the framework is open-source, integrating it into diverse existing robotic hardware and software stacks may still require substantial engineering effort, particularly regarding sensor calibration and action execution interfaces. The long-term maintenance and community support for an open-source project of this complexity are also important considerations for industrial adoption.