New research published on arXiv provides a theoretical framework for understanding when In-Context Learning (ICL) in transformer models can generalize to out-of-distribution (OOD) tasks. The study, titled “Out-of-Distribution Generalization of In-Context Learning: A Low-Dimensional Subspace Perspective,” shows that if pre-training task vectors are drawn from a union of low-dimensional subspaces, transformers can generalize across a range of distribution shifts. Conversely, if tasks are drawn from a single Gaussian distribution, OOD generalization is significantly hampered.

- ICL’s OOD generalization hinges on the structure of pre-training data: a union of low-dimensional subspaces enables it.

- If pre-training tasks are from a single Gaussian, ICL struggles with OOD shifts.

- The theory was developed using a single-layer linear attention model and empirically validated on models like GPT-2.

- Distribution shifts are modeled as varying angles between these low-dimensional subspaces.

What changed

While transformers’ In-Context Learning (ICL) capabilities are well-documented, a rigorous theoretical explanation for their out-of-distribution (OOD) generalization has been largely absent. This paper introduces a minimal mathematical model that precisely identifies the conditions under which ICL can generalize OOD. The core insight is that the geometric structure of the pre-training data’s task vectors — specifically, whether they reside in a union of low-dimensional subspaces — dictates ICL’s ability to adapt to novel distributions [1].

Previous work has explored ICL’s mechanics, but the question of “when and why it works beyond its training data” remained unclear. This research provides a provable answer for a simplified linear attention model and offers empirical evidence that these findings extend to more complex, non-linear models like GPT-2 [1]. This moves beyond empirical observation to a foundational understanding of ICL’s robustness.

How it works

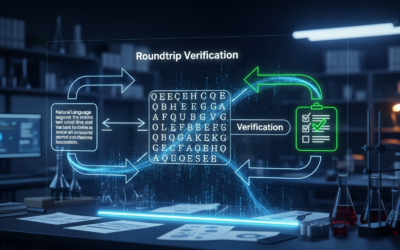

The researchers developed a mathematical model focusing on linear regression tasks within a single-layer linear attention model. The key idea is to represent different tasks or “weight vectors” as points within specific low-dimensional subspaces. Distribution shifts are then conceptualized as changes in the “angle” between these subspaces [1].
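To make this setup concrete, here is a minimal NumPy sketch of an in-context linear regression prompt whose weight vector lies in a low-dimensional subspace. It is an illustration under stated assumptions, not the paper's code: the function names, the dimensions, and the predictor form `x_q @ gamma @ (X.T @ y) / n` (a common simplification of a single-layer linear attention model, with `gamma` standing in for learned parameters) are all chosen here for illustration.

```python
import numpy as np

def random_subspace(d, k, rng):
    """Return a d x k orthonormal basis for a random k-dimensional subspace."""
    q, _ = np.linalg.qr(rng.standard_normal((d, k)))
    return q

def sample_prompt(basis, n, rng):
    """Draw one regression task inside the subspace plus n in-context examples."""
    d, k = basis.shape
    w = basis @ rng.standard_normal(k)   # task ("weight") vector in the subspace
    X = rng.standard_normal((n, d))      # in-context inputs
    y = X @ w                            # noiseless in-context labels
    x_q = rng.standard_normal(d)         # query input
    return X, y, x_q, w

def linear_attention_predict(X, y, x_q, gamma):
    """Assumed simplified linear-attention predictor: x_q^T Gamma (1/n) sum_i y_i x_i."""
    return x_q @ gamma @ (X.T @ y) / len(y)

rng = np.random.default_rng(0)
basis = random_subspace(d=8, k=2, rng=rng)
X, y, x_q, w = sample_prompt(basis, n=50_000, rng=rng)

# With gamma = I and isotropic inputs, (1/n) X^T y approaches w as n grows,
# so the prediction approaches the true label x_q^T w.
pred = linear_attention_predict(X, y, x_q, np.eye(8))
print("prediction:", pred, "target:", x_q @ w)
```

In this sketch the identity matrix plays the role of the learned parameters; a trained model would instead learn `gamma` from the pre-training task distribution, which is where the subspace structure enters.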

The model posits two primary scenarios for pre-training task vector distributions:

- Union of Subspaces: If the pre-training task vectors are drawn from a union of several distinct low-dimensional subspaces, the model can learn to interpolate across all possible angles between these subspaces. This enables robust OOD generalization, allowing ICL to function effectively even in regions of task space with zero probability mass under the pre-training distribution [1]. As a loose analogy, and not a result of the paper, multi-head attention is often described as relating tokens along several dimensions at once through multiple low-rank projections [4].

- Single Gaussian Distribution: If the pre-training tasks are drawn from a single, undifferentiated Gaussian distribution, the model’s test risk shows a significant dependence on the angle of the distribution shift. This implies that ICL cannot effectively generalize OOD under these conditions [1].
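The two scenarios above can be sketched as two ways of sampling task vectors. The code below is a hedged illustration, not the paper's construction: the helper names are hypothetical, the two subspaces are chosen mutually orthogonal for simplicity, and the "single Gaussian" is assumed to be isotropic and full-dimensional, which may differ from the paper's exact setting. Principal angles are computed from the singular values of the product of two orthonormal bases.

```python
import numpy as np

def orthogonal_bases(d, k, rng):
    """Two d x k orthonormal bases with mutually orthogonal column spaces (needs d >= 2k)."""
    q, _ = np.linalg.qr(rng.standard_normal((d, 2 * k)))
    return q[:, :k], q[:, k:]

def sample_union_of_subspaces(bases, n_tasks, rng):
    """For each task, pick one subspace at random and draw Gaussian coefficients in it."""
    d = bases[0].shape[0]
    labels = rng.integers(len(bases), size=n_tasks)
    tasks = np.empty((n_tasks, d))
    for i, j in enumerate(labels):
        tasks[i] = bases[j] @ rng.standard_normal(bases[j].shape[1])
    return tasks, labels

def sample_single_gaussian(d, n_tasks, rng):
    """Assumed baseline: task vectors from one isotropic Gaussian over all d dimensions."""
    return rng.standard_normal((n_tasks, d))

def principal_angles(a, b):
    """Principal angles (radians) between the column spaces of orthonormal bases a and b."""
    cosines = np.linalg.svd(a.T @ b, compute_uv=False)
    return np.arccos(np.clip(cosines, -1.0, 1.0))

rng = np.random.default_rng(0)
d, k = 16, 2
b1, b2 = orthogonal_bases(d, k, rng)
union_tasks, labels = sample_union_of_subspaces([b1, b2], 200, rng)
gauss_tasks = sample_single_gaussian(d, 200, rng)

print("angles between subspaces:", principal_angles(b1, b2))
print("rank of union-of-subspaces tasks:", np.linalg.matrix_rank(union_tasks))
print("rank of single-Gaussian tasks:", np.linalg.matrix_rank(gauss_tasks))
```

The rank comparison only makes the structural difference visible: the union-of-subspaces tasks occupy a few low-dimensional pieces of the space, while the Gaussian tasks spread over every direction.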

In essence, the theory suggests that the diversity and structured separation of task representations during pre-training are critical for ICL’s ability to handle novel situations. The low dimensionality of these subspaces is central to the argument. A loose analogy is Multi-Query Attention (MQA), which shares K and V across heads to improve efficiency [4], although MQA concerns inference cost and says nothing about the geometry of task vectors.

Why it matters for operators

This research offers more than theoretical elegance; it suggests practical guidance for operators building and deploying large language models. The central finding is that ICL’s OOD generalization is tied to the geometric structure of the pre-training data, specifically whether task vectors lie in a union of low-dimensional subspaces. Existing training pipelines may already benefit from this structure without it having been stated explicitly. For operators, the implication is that the diversity and structure of the pre-training corpus, and not only its size, shape how robust ICL is in novel scenarios.

Instead of merely accumulating vast amounts of data, the focus should shift towards ensuring that the data spans a “union of subspaces” representing diverse task types and relationships. This implies a need for data curation and augmentation strategies that deliberately introduce varied, yet structured, task representations. For instance, synthetic data that systematically explores different “angles” of distribution shift could improve OOD robustness.

When fine-tuning or adapting models, operators should also consider how new tasks relate to the learned subspaces. If a new task falls entirely outside the learned union of subspaces, ICL might fail, necessitating more traditional fine-tuning. The work suggests that models trained on a single, undifferentiated data distribution are likely to be brittle under real-world distribution shifts, a common pitfall in deployment. Operators should therefore prioritize pre-training data that encourages the model to learn distinct, low-dimensional representations for different task types, rather than a monolithic, high-dimensional blob.
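One way to picture an evaluation set that "systematically explores angles" is a sweep that rotates a training subspace toward an orthogonal one and measures risk at each angle. The sketch below is an assumption-laden toy, not the paper's experiment: the predictor is a hypothetical one that keeps only the training subspace (`gamma` is the projector onto it), chosen purely to show what angle-dependent test risk looks like, and all names and sizes are illustrative.

```python
import numpy as np

def orthogonal_bases(d, k, rng):
    """Two d x k orthonormal bases with mutually orthogonal column spaces (needs d >= 2k)."""
    q, _ = np.linalg.qr(rng.standard_normal((d, 2 * k)))
    return q[:, :k], q[:, k:]

def rotate_subspace(base, complement, theta):
    """Orthonormal basis whose principal angles to `base` all equal theta."""
    return np.cos(theta) * base + np.sin(theta) * complement

def sweep_risk(base, complement, thetas, n_tasks=500, n_context=500, seed=0):
    """Mean squared query error of a projector-based predictor at each shift angle."""
    rng = np.random.default_rng(seed)
    d, k = base.shape
    gamma = base @ base.T  # hypothetical predictor: retains only the training subspace
    risks = []
    for theta in thetas:
        shifted = rotate_subspace(base, complement, theta)
        sq_err = []
        for _ in range(n_tasks):
            w = shifted @ rng.standard_normal(k)      # test task in the shifted subspace
            X = rng.standard_normal((n_context, d))   # in-context inputs
            y = X @ w                                 # noiseless in-context labels
            x_q = rng.standard_normal(d)              # query input
            pred = x_q @ gamma @ (X.T @ y) / n_context
            sq_err.append((pred - x_q @ w) ** 2)
        risks.append(float(np.mean(sq_err)))
    return risks

rng = np.random.default_rng(0)
base, complement = orthogonal_bases(d=16, k=2, rng=rng)
thetas = np.linspace(0.0, np.pi / 2, 5)
risks = sweep_risk(base, complement, thetas)
for theta, risk in zip(thetas, risks):
    print(f"shift angle {theta:.2f} rad -> risk {risk:.3f}")
```

For this toy predictor the risk is small when the test subspace coincides with the training one and grows as the angle approaches 90 degrees. The same harness could be pointed at a real model's in-context predictions to check whether its risk stays flat across angles.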

Benchmarks and evidence

The theoretical findings were derived for a single-layer linear attention model and then validated empirically. The researchers report that their results hold for transformer models such as GPT-2, extending the subspace theory beyond the simplified linear case. They also present experiments on nonlinear function classes, suggesting broader relevance for modern deep learning architectures [1]. Validation on a widely used model like GPT-2 strengthens the practical case for the framework.

Risks and open questions

- Defining “Subspace” in Practice: While the theory identifies the importance of task vectors residing in a “union of low-dimensional subspaces,” practically identifying and engineering these subspaces in real-world, high-dimensional data remains a significant challenge. How can operators quantitatively assess if their pre-training data meets this criterion?

- Scalability to Extreme OOD: The model discusses generalization to “all angle shifts,” but real-world OOD scenarios can be far more complex than simple angular variations. Will this theory hold for extreme semantic shifts or entirely novel task paradigms not implicitly represented in any pre-training subspace?

- Impact on Model Architecture: Does this theoretical understanding suggest specific architectural modifications for transformers that could explicitly encourage the learning of such diverse, low-dimensional subspaces, beyond current attention mechanisms?

- Non-Linearity Complexity: While the paper states results extend to non-linear function classes, the exact mechanisms and limitations in highly non-linear, deep neural networks (which are not universal approximators if their width is too small [3]) still require further exploration.

Sources

- [1] Out-of-Distribution Generalization of In-Context Learning: A Low-Dimensional Subspace Perspective. https://arxiv.org/html/2505.14808

- [2] 1 Introduction. https://arxiv.org/html/2510.04548

- [3] Deep learning, Wikipedia. https://en.wikipedia.org/wiki/Deep_learning

- [4] Top 50 LLM Interview Questions: Study Guide (detailed, independently-authored answers with supply-chain security notes). Question list credit: Hao Hoang, May 2025. https://gist.github.com/rajesamp/c6a509d2f6be84db6cf5d509eabaa08d