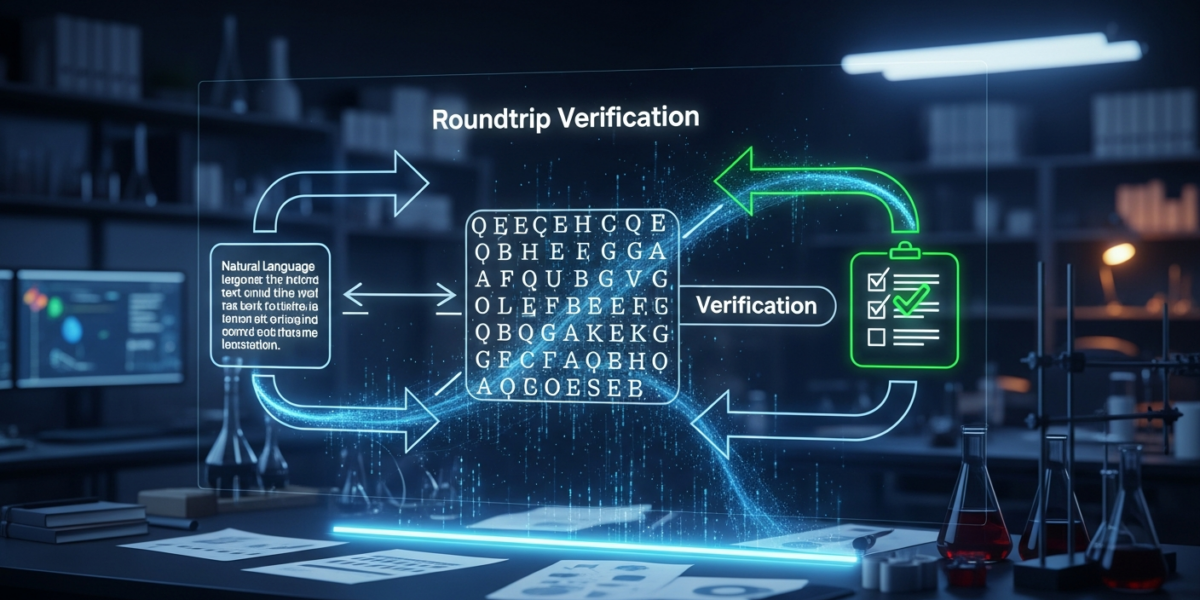

A new roundtrip verification and repair framework significantly improves the faithfulness of Large Language Model (LLM) autoformalizations. This method formalizes natural language, translates it back, re-formalizes, and checks logical equivalence. Diagnosis-guided repair boosts formal equivalence from 45–61% to 83–85% for models like Claude Opus 4.6 and GPT-5.2, without requiring ground-truth annotations.

| Attribute | Detail |
|---|---|
| Released by | Daneshvar Amrollahi et al. (arXiv cs.CL) |
| Release date | |
| What it is | A method to verify and repair Large Language Model (LLM) formalizations of natural language. |
| Who it is for | AI researchers, developers, and anyone building systems that rely on LLM autoformalization. |
| Where to get it | arXiv (Paper ID: 2604.25031) |
| Price | Free (open-access paper) |

- A roundtrip verification framework assesses how faithfully an LLM autoformalizes natural language.

- It provides a signal of faithfulness without requiring ground-truth formalizations [2].

- The process formalizes a statement, translates it back, re-formalizes it, and checks logical equivalence [1].

- Diagnosis-guided repair raises formal equivalence from 45–61% to 83–85% for LLMs like Claude Opus 4.6 and GPT-5.2 [1].

- The diagnosis step identifies the specific stage at which a translation failure occurs [1].

- Formal equivalence correlates with less semantic drift in the autoformalization process [1].

What is Faithful Autoformalization?

An autoformalization is faithful when the LLM's formal output preserves the meaning of the original natural language statement. The paper proposes roundtrip verification as a way to test for this fidelity [1]. The goal is to check that the formal output logically corresponds to the initial statement [1].

What is new vs previous approaches?

This new approach introduces a formalism-agnostic roundtrip verification framework that does not require ground-truth formalizations [2]. It also includes a diagnosis and repair mechanism to correct translation failures [1].

How does Roundtrip Verification Work?

Roundtrip verification involves several key steps to ensure the faithfulness of autoformalization.

- Formalize Statement: An LLM formalizes a natural language statement into a logical representation [1].

- Translate Back: The formalization is then translated back into natural language [1].

- Re-formalize: This new natural language statement is re-formalized by the LLM [1].

- Check Equivalence: A formal tool checks if the initial and re-formalized logical outputs are equivalent [1].

- Diagnose and Repair: If the formalizations disagree, a diagnosis step identifies the failure stage. A targeted repair operator attempts to correct the issue [1].
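
The first four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' implementation: formulas are written as Python boolean expressions, the equivalence check is a brute-force truth table rather than a dedicated formal tool, and the lookup tables `FORMALIZE`, `FAITHFUL_BACK`, and `DRIFTING_BACK` are hypothetical stand-ins for LLM calls. The diagnosis and repair step is not modeled.

```python
from dataclasses import dataclass
from itertools import product
from typing import Callable, Sequence


def equivalent(f1: str, f2: str, atoms: Sequence[str]) -> bool:
    """Truth-table check: True if f1 and f2 agree under every assignment."""
    for values in product([False, True], repeat=len(atoms)):
        env = dict(zip(atoms, values))
        # Formulas are plain Python boolean expressions over the atoms only.
        left = bool(eval(f1, {"__builtins__": {}}, env))
        right = bool(eval(f2, {"__builtins__": {}}, env))
        if left != right:
            return False
    return True


@dataclass
class RoundtripResult:
    first: str    # formalization of the original statement
    back: str     # natural language back-translation of `first`
    second: str   # formalization of the back-translation
    agrees: bool  # whether `first` and `second` are logically equivalent


def roundtrip(
    statement: str,
    formalize: Callable[[str], str],
    informalize: Callable[[str], str],
    atoms: Sequence[str],
) -> RoundtripResult:
    """Formalize, translate back, re-formalize, then check equivalence."""
    first = formalize(statement)
    back = informalize(first)
    second = formalize(back)
    return RoundtripResult(first, back, second, equivalent(first, second, atoms))


# Hypothetical stand-ins for the LLM calls (assumptions for illustration).
ATOMS = ["red", "stop"]
FORMALIZE = {
    "If the light is red, you must stop.": "(not red) or stop",
    "Whenever the light is red, stopping is required.": "stop or (not red)",
    "The light is red and you stop.": "red and stop",
}
FAITHFUL_BACK = {"(not red) or stop": "Whenever the light is red, stopping is required."}
DRIFTING_BACK = {"(not red) or stop": "The light is red and you stop."}

rule = "If the light is red, you must stop."
ok = roundtrip(rule, FORMALIZE.__getitem__, FAITHFUL_BACK.__getitem__, ATOMS)
bad = roundtrip(rule, FORMALIZE.__getitem__, DRIFTING_BACK.__getitem__, ATOMS)
print(ok.agrees)   # True: the two formalizations differ in text but not in logic
print(bad.agrees)  # False: the back-translation changed the meaning
```

Note that the check compares the two formalizations logically rather than textually, which is why no ground-truth formalization is needed: a disagreement only shows that some stage of the roundtrip changed the meaning.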

Benchmarks and Evidence

The roundtrip verification and repair approach was evaluated on 150 traffic rules.

| Models | Initial Formal Equivalence | Formal Equivalence with Diagnosis-Guided Repair | Improvement | Source |
|---|---|---|---|---|
| Claude Opus 4.6 and GPT-5.2 (reported range across models) | 45–61% | 83–85% | 22–40 percentage points (bounds implied by the ranges) | [1] |

Diagnosis-guided repair consistently outperformed a random-repair baseline [1]. An independent Natural Language Inference (NLI) analysis confirmed that formal equivalence correlates with less semantic drift [1].
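
The reported figures are ranges across models, so the per-model gain can only be bounded. The short calculation below works out those bounds in percentage points. How the range endpoints pair up with individual models is not stated in the summary above, so the pairings in the code are assumptions.

```python
# Reported ranges of formal equivalence, in percent.
initial_low, initial_high = 45, 61
repaired_low, repaired_high = 83, 85

# Bounds on the gain, whichever way the endpoints pair up (assumption:
# each model's figures lie inside the reported ranges).
smallest_gain = repaired_low - initial_high  # 83 - 61
largest_gain = repaired_high - initial_low   # 85 - 45

# Gains if low pairs with low and high pairs with high.
low_to_low = repaired_low - initial_low      # 83 - 45
high_to_high = repaired_high - initial_high  # 85 - 61

print(f"Gain is between {smallest_gain} and {largest_gain} percentage points")
print(f"Endpoint-to-endpoint gains: {low_to_low} and {high_to_high}")
```

A gain of about 38 corresponds to the low-to-low pairing (45% to 83%) and is a difference in percentage points, not a relative increase.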

Who should care?

Builders

Developers of LLM-powered applications that need high-fidelity logical outputs can use this method to check and improve the reliability of autoformalization [1].

Enterprise

Enterprises using LLMs for tasks like legal document analysis or code generation can benefit. Ensuring faithful formalization reduces errors and increases trust in AI outputs [1].

End users

End users who rely on LLMs for complex reasoning tasks may see more accurate results, since more faithful autoformalization means fewer misinterpretations of the original statement [1].

Investors

Investors in AI companies focused on logical reasoning and formal verification should note this development. It represents progress in making LLMs more robust and trustworthy [1].

How to use Roundtrip Verification today?

The code, data, and prompts for this research will be released upon acceptance of the paper [2]. Currently, researchers can study the methodology described in the arXiv paper [1].

Roundtrip Verification vs. Competitors

The table below compares roundtrip verification and repair with LogicReward and with traditional manual verification.

| Feature | Roundtrip Verification & Repair | LogicReward (Alternative) | Traditional Manual Verification |
|---|---|---|---|
| Ground-Truth Requirement | No ground-truth formalizations required [2] | Can operate without ground-truth labels for reward signal [3] | Requires human-annotated ground truth |
| Verification Method | Formalization, back-translation, re-formalization, logical equivalence check [1] | Theorem prover for step-level logical correctness [3] | Human expert review |
| Repair Mechanism | Diagnosis-guided repair for specific translation stages [1] | Guides model training via reward system [3] | Manual correction by experts |
| Improvement (Example) | Raises formal equivalence from 45–61% to 83–85% [1] | 8B model surpasses GPT-4o by 11.6% on NLI/logical tasks [3] | Subjective, depends on human expertise |
| Focus | Post-hoc verification and repair of autoformalization [1] | Incentivizing LLM reasoning during training [3] | Ensuring correctness through human oversight |

Risks, limits, and myths

- Myth: Roundtrip verification guarantees perfect faithfulness. Even with diagnosis-guided repair, formal equivalence reaches 83–85%, not 100% [1].

- Limit: Dependency on formal tools. The approach relies on formal tools to check logical equivalence [1].

- Risk: Complexity of diagnosis. Identifying the exact failure stage in complex formalizations can be challenging [1].

- Limit: Scope of evaluation. The current evaluation used 150 traffic rules; broader domain testing is needed [1].

FAQ

- What is autoformalization? Autoformalization is the process where a Large Language Model (LLM) converts natural language statements into formal logical representations [1].

- How does roundtrip verification improve LLM fidelity? It improves fidelity by formalizing, translating back, re-formalizing, and then checking for logical equivalence, with a repair mechanism for discrepancies [1].

- Does this method require ground-truth data? No, the roundtrip verification framework provides a signal of faithfulness without requiring ground-truth formalizations [2].

- Which LLMs were tested with this approach? The approach was evaluated using Claude Opus 4.6 and GPT-5.2 [1].

- What was the performance improvement? Diagnosis-guided repair increased formal equivalence from 45–61% to 83–85% for both tested models [1].

- What is semantic drift in autoformalization? Semantic drift refers to the change in meaning between the original natural language statement and its formalization [1].

- Is the code available for this research? Code, data, and prompts will be released upon acceptance of the paper [2].

- What kind of rules were used for evaluation? The evaluation was conducted on 150 traffic rules [1].

Glossary

- Autoformalization: The process by which an AI model, typically an LLM, translates natural language into a formal, logical representation [1].

- Formal Equivalence: A state where two formal logical statements are provably identical in their meaning or truth conditions [1].

- Large Language Model (LLM): An artificial intelligence model trained on vast amounts of text data, capable of understanding and generating human-like text [1].

- Natural Language Inference (NLI): A task in natural language processing that determines the logical relationship (entailment, contradiction, or neutral) between two text statements [1].

- Roundtrip Verification: A method to check the faithfulness of a translation by translating content to a target form and back again, then comparing the results. Here, the comparison is between the initial formalization and the re-formalization of the back-translated text [1].

- Semantic Drift: The deviation in meaning that a statement undergoes through translation processes, here between the original natural language statement and its formalization [1].

Sources

- [1] [2604.25031] Faithful Autoformalization via Roundtrip Verification and Repair — https://arxiv.org/abs/2604.25031

- [2] Faithful Autoformalization via Roundtrip Verification and Repair — https://arxiv.org/html/2604.25031

- [3] LogicReward: Incentivizing LLM Reasoning via Step-Wise Logical Supervision [Quick Review] — https://liner.com/review/logicreward-incentivizing-llm-reasoning-via-stepwise-logical-supervision