AI Energy Consumption’s Impact on Big Tech Profits: A Complete Guide

The escalating energy consumption of AI infrastructure is significantly impacting Big Tech profits by driving up capital expenditures and operational costs for data centers. While AI integration can boost revenue growth, profitability hinges on balancing these compute costs with continuous improvements in energy efficiency and infrastructure optimization.

The Escalating Strain of AI Energy Use on Big Tech Finances

Global AI computing capacity grew about 3.3x per year, reaching 17.1 million GPUs by early 2026, and AI’s global power demand reached 29.6 GW in 2025. This demand translates directly into financial strain for Big Tech companies through higher capital expenditures (CapEx) and operational expenses (OpEx).

Profit margins are being squeezed as companies must invest billions in new infrastructure while managing rising electricity costs. The need for constant upgrades coupled with energy consumption presents a significant challenge to sustained profitability in the AI sector. Failure to address this could lead to reduced returns despite technological advancements.

TL;DR: AI Energy & Big Tech Profit Summary

Key Takeaways on AI’s Energy Footprint and Profit Margins

- Global AI computing capacity reached 17.1 million GPUs by early 2026, and AI’s power demand reached 29.6 GW in 2025. This surge puts substantial pressure on existing power grids.

- The combined capital expenditure of five large technology companies exceeded $400 billion in 2025 and is projected to rise by a further 75% in 2026, driven largely by data center investment. These investments are crucial for scaling AI infrastructure.

- Organizations with fully integrated AI are nearly four times as likely to report revenue growth (58%) as those still piloting AI (15%). This points to a revenue advantage for deep adoption.

- Profitability depends on balancing rising compute costs with energy efficiency improvements. Strategic investment in green technologies is becoming paramount.

- Hyperscale facilities with liquid cooling now under construction are expected to reach a PUE as low as 1.1 by 2026. These innovations are setting new efficiency benchmarks.

- The AI energy paradox: efficiency gains at the task level are outweighed by growth in overall use. Broad deployment means total energy consumption keeps climbing.

- The market for AI in energy management is expected to reach $10.5 billion by 2026. This indicates a significant opportunity for specialized solutions.

Key Takeaways: AI Energy Consumption’s Profit Implications for Big Tech

Strategic Decisions for Sustainable AI Growth and Profitability

- Prioritize energy efficiency investments: At a constant IT load, every 0.1 reduction in PUE removes 0.1 units of facility energy per unit of IT energy, about 7.6% of total consumption for a facility starting at a PUE of 1.32. At hyperscale, that adds up to large long-term savings.

- Balance CapEx with long-term efficiency: The 75% projected CapEx increase in 2026 must be offset by efficiency gains. Smart capital allocation is essential to avoid unsustainable growth.

- Implement liquid cooling systems: Hyperscale facilities targeting PUEs of 1.1 with liquid cooling demonstrate the cost-saving potential. These advanced cooling solutions are becoming standard for high-density AI workloads.

- Develop AI energy management solutions: The $10.5 billion market represents both cost savings and revenue opportunities. Companies can innovate in this space to gain a competitive edge.

- Address the AI energy paradox: Recognize that task-level efficiency doesn’t automatically translate to overall consumption reduction. A holistic view of energy use is critical.

- Plan for distributed energy strategies: Avoid over-reliance on traditional grid infrastructure. Diversifying energy sources can enhance resilience and reduce costs.

- Monitor regulatory developments: Growing scrutiny could impact expansion plans and compliance costs. Staying ahead of regulations is key to avoiding penalties and disruptions.

What is AI Energy Consumption’s Impact on Big Tech Profits?

Defining the Financial Consequences of AI’s Growing Power Needs

The impact of AI energy consumption on Big Tech profits refers to the direct financial consequences of the massive energy requirements needed to power AI infrastructure. This includes both capital expenditures for building and upgrading data centers and operational expenses for electricity, cooling, and maintenance. The financial impact shows up as reduced profit margins, larger investment requirements, and potential limits on growth capacity.

Big Tech companies must navigate these financial challenges strategically. They need to find ways to sustain innovation while mitigating the rising costs associated with their growing AI operations. This balance is critical for long-term financial health and market leadership.

Key Definitions: Understanding the Financial Nexus

- PUE (Power Usage Effectiveness)

  - A metric used to determine the energy efficiency of a data center, calculated by dividing the total power entering the data center by the power used by the IT equipment. A lower PUE indicates greater efficiency, with 1.0 being perfectly efficient. This metric is a crucial indicator of operational performance and cost management.

- Capital Expenditure (CapEx)

  - Funds used by a company to acquire, upgrade, and maintain physical assets such as property, industrial buildings, or equipment. In the context of AI, this includes vast investments in AI infrastructure and data centers. These investments are foundational but also represent significant financial commitments.

- AI Energy Paradox

  - The phenomenon where, despite individual AI tasks becoming more energy-efficient, the overall energy consumption of AI systems increases due to the dramatic expansion of AI deployment across numerous applications and increased usage. This paradox underlines the complexity of managing AI’s environmental footprint.

- Hyperscale Facilities

  - Large-scale data centers designed to efficiently support robust, scalable applications and often operated by Big Tech companies for cloud computing, AI, and big data workloads. These facilities are at the forefront of AI infrastructure development and energy consumption.
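The PUE definition above reduces to one ratio. The Python sketch below computes it from metered energy over the same period and also reports the share of facility energy that goes to non-IT loads such as cooling. The function names and meter readings are hypothetical, chosen only to reproduce the 1.32 US average cited in this guide.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be below IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_share(pue_value: float) -> float:
    """Fraction of total facility energy spent on non-IT loads (cooling, power losses)."""
    return (pue_value - 1.0) / pue_value


# Hypothetical metered month: 13,200 MWh at the utility meter, 10,000 MWh at the IT load.
p = pue(13_200_000, 10_000_000)
print(round(p, 2))                  # 1.32
print(round(overhead_share(p), 3))  # 0.242
```

Working with energy totals over a billing period, rather than a single instantaneous power reading, smooths out short-term swings in cooling load.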

Why AI Energy Consumption Matters Now for Big Tech Profitability

Current Attention on AI’s Gigantic Energy Footprint

The impact of AI energy consumption on Big Tech profits has become critical in 2026 because several factors are converging. Global AI power demand reached 29.6 GW in 2025, comparable to the electricity consumption of a medium-sized European country. This demand comes at a time when energy prices remain volatile and regulatory scrutiny is increasing.

The capital expenditure of five large technology companies surged to more than $400 billion in 2025 and is set to increase by a further 75% in 2026 due to data center investments. This financial commitment highlights the urgent need for sustainable energy strategies. Companies are now keenly aware of how their energy demands affect both their balance sheets and public perception.
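Two figures in this section are easier to interpret once converted. The sketch below assumes the 29.6 GW figure is a continuous average draw (if it is peak capacity, actual annual energy is lower) and applies the 75% increase to exactly $400 billion, so the result is a floor rather than a forecast. The `utilization` parameter is a hypothetical knob, not a sourced figure.

```python
HOURS_PER_YEAR = 8_760  # 365 days x 24 h; ignores leap years


def annual_energy_twh(avg_power_gw: float, utilization: float = 1.0) -> float:
    """Convert an average power draw in GW into energy per year in TWh."""
    return avg_power_gw * HOURS_PER_YEAR * utilization / 1_000  # GWh -> TWh


def projected_capex(base_billion: float, growth_rate: float) -> float:
    """Apply a single-year percentage increase to a CapEx base."""
    return base_billion * (1 + growth_rate)


print(round(annual_energy_twh(29.6), 1))  # 259.3 (only if drawn around the clock)
print(projected_capex(400, 0.75))         # 700.0 (a floor, since 2025 was "more than" $400B)
```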

The AI Energy Paradox: Efficiency vs. Overall Consumption

The AI energy paradox represents a fundamental challenge for Big Tech profitability. While individual AI tasks are becoming more efficient, the overall consumption is skyrocketing due to widespread deployment. Major model providers reported a threefold increase in active users and a fivefold increase in revenue over the past year.

This scaling effect means that efficiency gains at the task level are more than offset by the massive expansion of AI applications across diverse use cases. As Big Tech continues to integrate AI into more products and services, total energy demand will keep growing. This calls for a strategic re-evaluation of energy management at every level.
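The paradox comes down to multiplication: total energy equals energy per task times the number of tasks. A minimal sketch with hypothetical numbers (not from the sources) shows how halving per-task energy still raises total use when volume triples, and how much volume growth it takes to cancel a given efficiency gain. Economists describe the same pattern as a rebound effect.

```python
def total_energy(energy_per_task_wh: float, tasks: float) -> float:
    """Total energy (Wh) is per-task energy times task volume."""
    return energy_per_task_wh * tasks


def break_even_volume_growth(efficiency_gain: float) -> float:
    """Volume multiplier at which a fractional per-task saving is exactly cancelled."""
    return 1 / (1 - efficiency_gain)


# Hypothetical numbers: per-task energy halves while task volume triples.
before = total_energy(energy_per_task_wh=3.0, tasks=1e9)
after = total_energy(energy_per_task_wh=1.5, tasks=3e9)
print(after / before)                 # 1.5 -> total consumption still rises 50%
print(break_even_volume_growth(0.5))  # 2.0 -> doubling volume erases a 50% saving
```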

How AI Energy Consumption Impacts Big Tech’s Bottom Line

Mechanics of Rising Capital Expenditures (CapEx)

The impact of AI energy consumption on Big Tech profits is most visible in capital expenditures. Capital expenditure by five large technology companies, driven largely by data centers, exceeded $400 billion in 2025 and is projected to increase by a further 75% in 2026. This represents a massive reinvestment requirement that directly affects profitability and shareholder returns.

Companies must balance these infrastructure investments against other growth opportunities, creating strategic trade-offs in resource allocation. For example, a significant portion of CapEx is now dedicated to acquiring and installing advanced GPUs and specialized cooling systems. These are essential for handling complex AI workloads efficiently. Such investments, while necessary, tie up considerable capital that could otherwise be used for research, development, or market expansion. The long-term returns on these investments are dependent on sustained AI innovation and cost management.

Operational Cost Increases from AI’s Power Demands

Beyond CapEx, operational costs are rising significantly. Electricity costs for data centers running AI workloads have increased by 40-60% since 2023 in major markets. This substantial increase directly shrinks profit margins.

Cooling represents another major expense, with traditional air cooling systems becoming inadequate for high-density AI servers. As AI models become more powerful and complex, they generate more heat. This requires more sophisticated and energy-intensive cooling solutions. Maintenance costs have also increased due to the complexity of AI infrastructure and the need for specialized technical staff. These operational pressures put a constant strain on Big Tech’s financial performance, making efficient management paramount.

The Role of Power Usage Effectiveness (PUE) in Cost Control

PUE improvements directly translate to operational cost savings. The US average PUE for data centers was 1.32 in 2024, while hyperscale facilities with liquid cooling currently under construction could achieve PUEs of 1.1 by 2026. This is a significant improvement in efficiency. The impact of AI on data center security and efficiency is becoming a major focus for these companies.

At the same IT load, this 0.22 difference cuts total facility energy by roughly 17% (0.22 / 1.32) and non-IT overhead, such as cooling and power conversion losses, by nearly 70% (from 0.32 to 0.10 units per unit of IT energy), a large saving for large-scale operations. Investing in technologies that lower PUE, such as advanced cooling systems and intelligent power management, can provide a substantial competitive advantage. Companies that achieve lower PUEs can reinvest their savings into further AI development or return more profit to shareholders, demonstrating a clear link between efficiency and financial success.
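The sketch below works through the 1.32 vs. 1.1 comparison for a single facility. It assumes a constant IT load and a flat electricity price; the 100 MW load and $80/MWh price are hypothetical inputs, not figures from the sources.

```python
HOURS_PER_YEAR = 8_760


def facility_energy_mwh(it_load_mw: float, pue: float) -> float:
    """Annual facility energy (MWh) for a constant IT load at a given PUE."""
    return it_load_mw * pue * HOURS_PER_YEAR


def pue_savings(it_load_mw: float, pue_old: float, pue_new: float,
                price_per_mwh: float) -> dict[str, float]:
    """Compare annual energy and cost at two PUE levels for the same IT load."""
    old = facility_energy_mwh(it_load_mw, pue_old)
    new = facility_energy_mwh(it_load_mw, pue_new)
    saved_mwh = old - new
    return {
        "saved_mwh": saved_mwh,
        "total_reduction": saved_mwh / old,                          # share of total facility energy
        "overhead_reduction": (pue_old - pue_new) / (pue_old - 1),  # share of non-IT energy
        "saved_usd": saved_mwh * price_per_mwh,
    }


# Hypothetical: 100 MW of IT load, $80/MWh electricity.
s = pue_savings(100, 1.32, 1.10, 80)
print(f"{s['total_reduction']:.1%}")              # 16.7%
print(f"{s['overhead_reduction']:.0%}")           # 69%
print(f"${s['saved_usd'] / 1e6:.1f}M per year")   # $15.4M per year
```

Because PUE multiplies IT energy, the absolute saving scales with IT load and price, while the percentage reductions depend only on the two PUE values.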

Real-World Examples of AI Energy Impact on Big Tech

Hyperscale Data Centers and Their Energy Footprint

Major tech companies are investing billions in hyperscale facilities specifically designed for AI workloads. These facilities typically consume 100-300 MW of power, equivalent to a small city. This immense power draw requires careful planning and infrastructure development.

The energy requirements are so substantial that companies are building dedicated power infrastructure and negotiating long-term energy contracts to secure supply and manage costs. This can include developing their own renewable energy sources or partnering with utility companies on large-scale solar or wind projects. The sheer scale of these operations necessitates a sophisticated approach to energy sourcing and management. The CFTC’s AI revolution also highlights AI’s growing role in financial infrastructure, which adds to overall energy demand.

AI Integration and Revenue Growth vs. Profitability Challenges

Organizations with fully integrated AI are nearly four times more likely to report revenue growth (58%) compared to those still piloting (15%). This demonstrates the clear revenue upside of adopting AI at scale. However, this revenue growth doesn’t automatically translate to profitability.

Microsoft attributed part of its emissions growth in 2023 to increased data-center energy consumption and supply-chain emissions. The example shows how quickly AI build-outs drive up energy use, and with it the costs that can offset revenue gains. This underscores the need for a holistic approach to AI strategy that considers both revenue generation and cost management. Without this balance, even significant revenue increases might not improve the bottom line.

Comparing AI Investment Strategies: Efficiency vs. Pure Scale

AI Integration: Revenue Growth vs. Profit Margin Reality

The balance between maximizing revenue growth through broad AI integration and maintaining healthy profit margins is a critical strategic decision for Big Tech. Companies face a trade-off that requires careful consideration of their long-term financial health and market position.

Pure scale strategies may offer rapid market penetration and revenue spikes, but they risk eroding profits if energy costs are not actively managed. Conversely, an efficiency-first approach might yield slower initial revenue growth but ensures greater financial stability and sustainability. The choice often depends on a company’s risk appetite and its existing infrastructure.

| AI Integration Level | Share Reporting Revenue Growth | Impact on Profit Margins (with unmitigated energy costs) |
|---|---|---|
| Integrated AI | 58% | Significant pressure unless energy efficiency measures are implemented |
| Piloting AI | 15% | Lower immediate impact but limited competitive advantage |

This comparison shows that an aggressive move to integrated AI, while revenue-positive, demands a parallel commitment to energy efficiency. Without such measures, the high operating costs of AI infrastructure can quickly outpace revenue growth and leave profit margins vulnerable. The AI talent crisis complicates this further, because specialized engineers are needed to implement these efficiency solutions.

PUE Efficiency in Data Centers: Industry Averages vs. Hyperscale Goals

Optimizing PUE is a key battleground for Big Tech as they strive to control the AI energy consumption impact on profits. The gap between average data center efficiency and hyperscale ambitions showcases the potential for significant savings and competitive advantage.

Hyperscale facilities are not merely larger; they are designed with advanced technologies to minimize energy waste. This dedication to efficiency directly impacts their operational expenditure. Achieving lower PUEs requires substantial upfront investment in innovative cooling, power distribution, and AI-driven management systems.

| Data Center Type | PUE | Key Efficiency Technologies | Impact on Operational Costs |
|---|---|---|---|
| US average data center (2024) | 1.32 | Traditional air cooling, basic efficiency measures | Higher energy costs, limited scalability |
| Hyperscale facilities with liquid cooling (2026 projection) | 1.1 | Liquid cooling, advanced heat recovery, AI-driven optimization | About 17% less total facility energy at the same IT load, better scalability |

The difference between average and hyperscale PUE represents a significant competitive advantage in operational cost management. Companies able to bridge this gap will realize considerable financial benefits, freeing up capital for further innovation. These optimized data centers can thus support the ever-growing demands of AI without disproportionately increasing energy expenses.

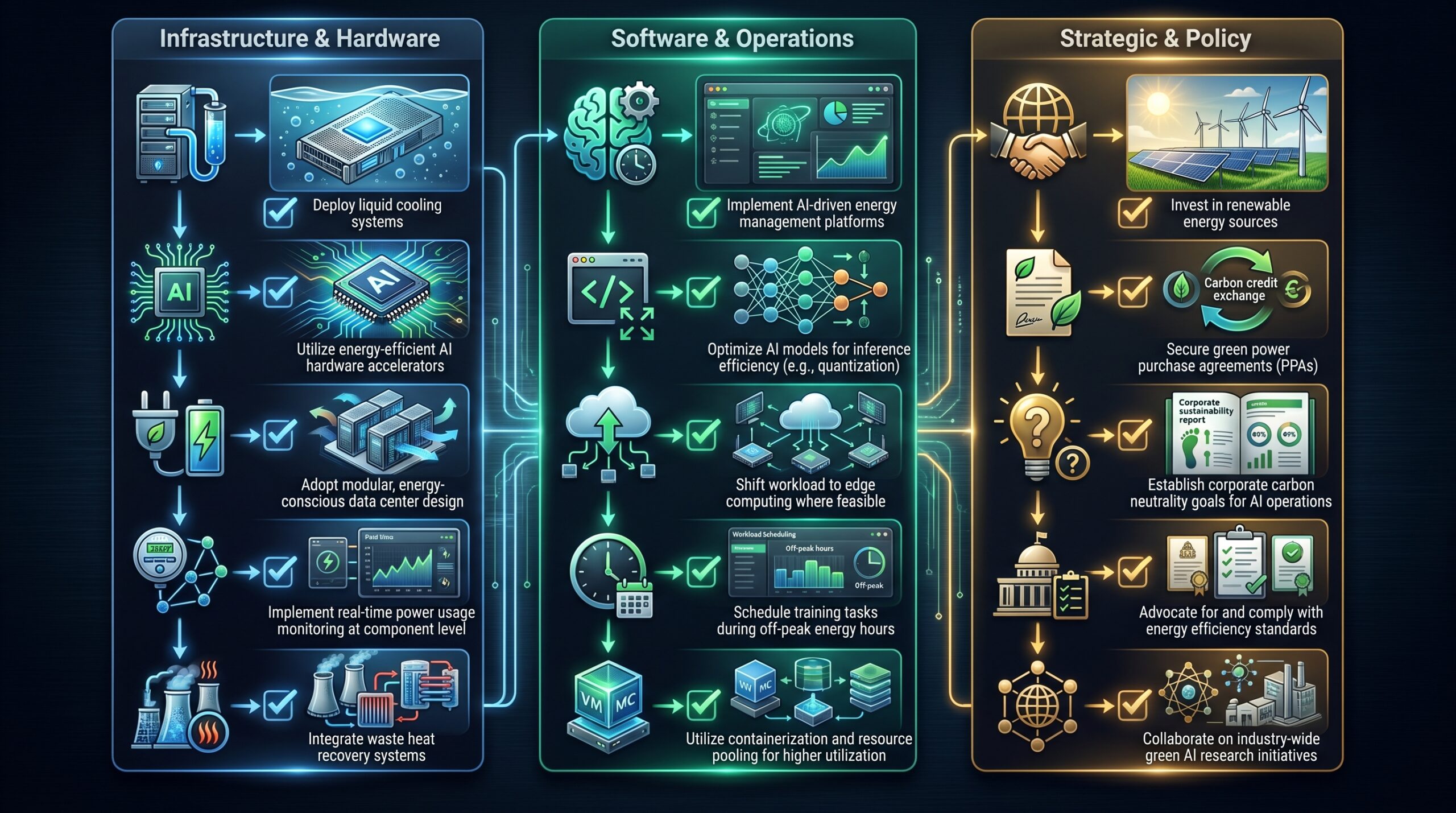

Tools & Technologies for Mitigating AI Energy Consumption Impacts

Advanced Cooling Solutions: Liquid Cooling Systems

Liquid cooling systems represent the most significant advancement in data center efficiency. These systems use liquid instead of air to carry heat away from IT equipment and can help hyperscale facilities reach PUEs as low as 1.1. Because liquids transfer heat far more effectively than air, this approach is much more efficient than conventional air cooling.

Implementation costs are higher up front but are recouped through significantly lower operating expenses. Better heat transfer also allows denser server racks and more powerful AI accelerators, and it reduces energy-related operational risk. These long-term cost and performance benefits make liquid cooling an essential technology for future AI infrastructure.

AI in Energy Management Platforms: A Growing Market

AI-driven energy management solutions are expected to grow to a $10.5 billion market by 2026. These platforms use machine learning to optimize energy usage across data centers, predict demand patterns, and automate efficiency measures. This predictive capability allows for proactive energy adjustments.

Big Tech companies are both consumers and developers of these solutions. They apply their own AI expertise to build internal tools for managing their vast data center networks, a dual role that positions them to benefit from the market’s growth. Such platforms can identify inefficiencies and recommend optimal operating settings, leading to substantial energy savings and lower operating costs. Demand for these tools is also growing as more businesses adopt AI and use it to automate workflows.
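Production platforms rely on machine learning models that this guide does not describe. As a stand-in, the sketch below uses simple exponential smoothing, a classical forecasting method rather than ML, to predict the next hour’s IT load, then applies a hypothetical rule that pre-cools when the forecast nears cooling capacity. All names, thresholds, and load values are illustrative assumptions.

```python
from typing import Iterable


def exp_smooth_forecast(loads_mw: Iterable[float], alpha: float = 0.5) -> float:
    """One-step-ahead forecast via simple exponential smoothing."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = None
    for x in loads_mw:
        # Blend the newest observation with the running level.
        level = x if level is None else alpha * x + (1 - alpha) * level
    if level is None:
        raise ValueError("need at least one observation")
    return level


def cooling_action(forecast_mw: float, capacity_mw: float, headroom: float = 0.1) -> str:
    """Hypothetical rule: pre-cool when forecast load comes within `headroom` of capacity."""
    return "pre-cool" if forecast_mw >= capacity_mw * (1 - headroom) else "hold"


history = [80.0, 84.0, 90.0, 98.0]  # hypothetical hourly IT load, MW
f = exp_smooth_forecast(history, alpha=0.5)
print(f)                                      # 92.0
print(cooling_action(f, capacity_mw=100.0))   # pre-cool
```

A higher `alpha` reacts faster to load spikes but is noisier; a real platform would tune this, or replace the forecaster entirely, against historical telemetry.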

Modular Data Center Solutions for Faster & More Efficient Deployment

Modular approaches can dramatically reduce equipment requirements and shorten the time to power data centers. These solutions can deliver 25% faster time-to-power and unlock revenue nine months earlier compared to traditional construction methods. This acceleration in deployment is crucial in the fast-paced AI market.

The flexibility also allows for better matching of capacity to actual demand. Rather than building massive, monolithic data centers, companies can deploy modular units as needed, reducing initial CapEx and optimizing resource utilization. This agile approach minimizes waste and allows for more efficient scaling of AI infrastructure. It also aligns with the need to quickly expand compute capacity to meet the demands of advanced models like those used in AI on Android development.
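The two modular figures can be related with simple arithmetic. Assuming "25% faster" means a 25% shorter build and that both figures describe the same project, a nine-month gain implies a 36-month traditional timeline. The monthly revenue figure below is hypothetical, and the pulled-forward amount ignores discounting.

```python
def modular_months(traditional_months: float, speedup: float = 0.25) -> float:
    """Build time when time-to-power is cut by `speedup` (0.25 = 25% shorter)."""
    return traditional_months * (1 - speedup)


def implied_baseline_months(months_saved: float, speedup: float = 0.25) -> float:
    """Traditional build time implied if `months_saved` equals `speedup` of it."""
    return months_saved / speedup


def pulled_forward_revenue(monthly_revenue: float, months_earlier: float) -> float:
    """Revenue earned earlier by energizing capacity sooner (undiscounted)."""
    return monthly_revenue * months_earlier


print(implied_baseline_months(9))       # 36.0
print(modular_months(36))               # 27.0
print(pulled_forward_revenue(20e6, 9))  # 180000000.0 (hypothetical $20M/month)
```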

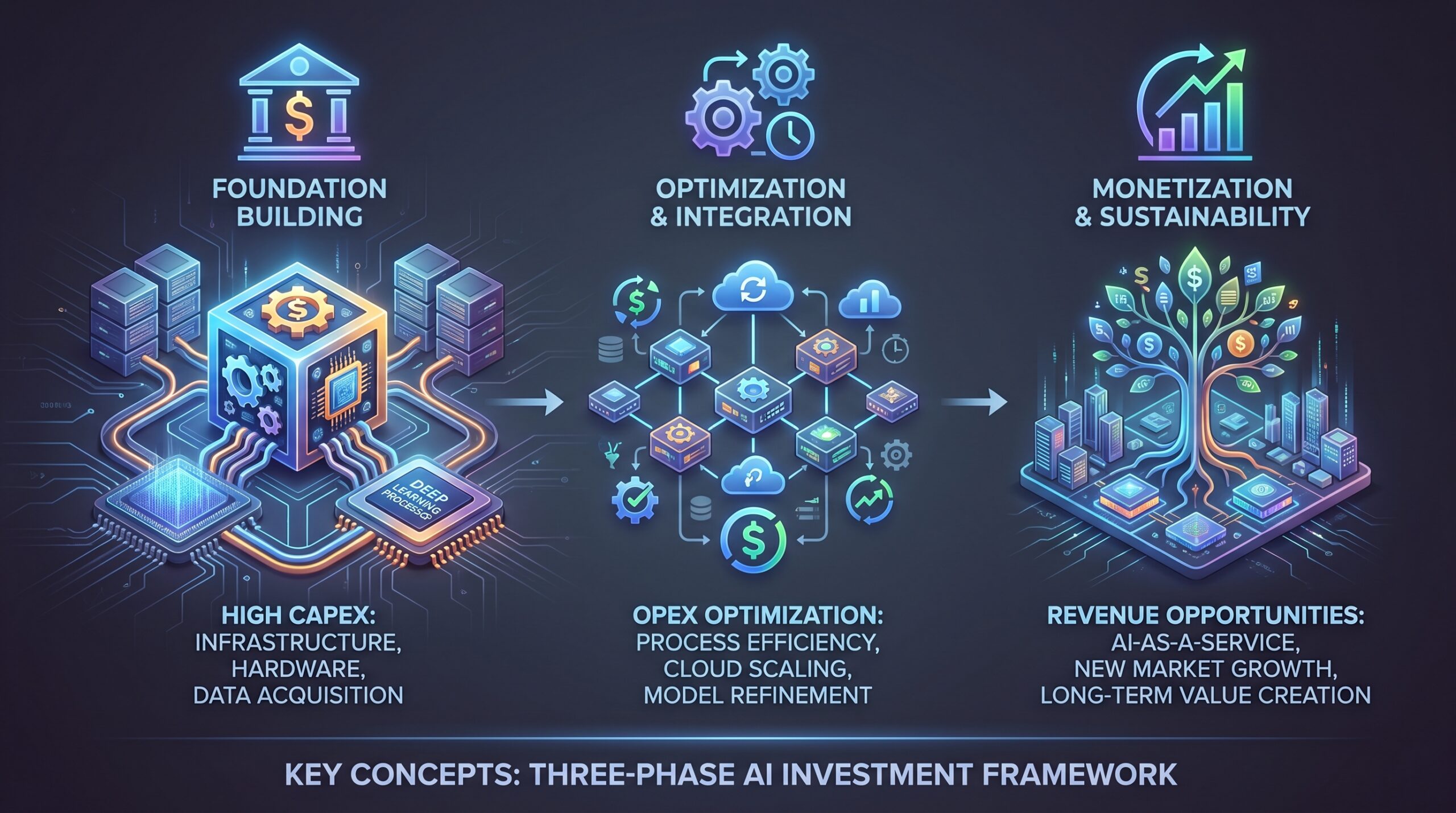

Costs, ROI, and Monetization Upside from AI Energy Management

Investment in Energy Efficiency: CapEx vs. OpEx Savings

The upfront costs of advanced energy efficiency solutions must be weighed against long-term operational savings. Liquid cooling systems typically require 30-40% higher initial investment but can reduce energy costs by 20-30%. This significant reduction in OpEx makes the initial CapEx justifiable.

The payback period is typically 2-3 years for large-scale operations, which keeps these investments financially viable despite the high-CapEx environment. Companies that prioritize them demonstrate environmental responsibility and also secure a competitive advantage through lower operating costs. This foresight becomes even more critical as AI workloads continue to grow exponentially.
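A simple, undiscounted payback calculation shows how the 30-40% premium and the 20-30% savings interact. The sketch assumes the premium applies to a hypothetical $100M cooling build-out and the savings to a hypothetical $50M annual energy bill; the payback period scales with the ratio between those two assumed amounts.

```python
def simple_payback_years(extra_capex: float, annual_savings: float) -> float:
    """Years for cumulative savings to repay the additional upfront spend (undiscounted)."""
    if annual_savings <= 0:
        raise ValueError("annual savings must be positive")
    return extra_capex / annual_savings


# Hypothetical facility: $100M baseline cooling build-out, $50M/yr energy bill.
baseline_capex = 100e6
energy_bill = 50e6
extra = baseline_capex * 0.35  # midpoint of the 30-40% premium
savings = energy_bill * 0.25   # midpoint of the 20-30% energy-cost cut
print(round(simple_payback_years(extra, savings), 2))  # 2.8
```

With the midpoints of both ranges this lands at 2.8 years, inside the 2-3 year window, but that agreement comes from the assumed cost ratio, not from the source data.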

Monetization Opportunities: AI as an Energy Solution

Beyond cost savings, Big Tech companies have opportunities to monetize their energy management expertise. The $10.5 billion market for AI in energy management represents a potential revenue stream. This allows companies to turn a cost center into a profit generator.

Companies can develop proprietary solutions for internal use and then commercialize them for other industries. This strategy leverages their considerable R&D investments and allows them to export their efficiency gains. For example, a company might develop an AI algorithm to optimize data center cooling and then license it to other corporations or even smaller data center operators. This creates an additional revenue stream while also promoting broader energy efficiency.

Risk Reduction Through Sustainable Energy Practices

Managing AI’s energy footprint reduces risks of regulatory backlash, reputational damage, and future cost increases. As public awareness of AI’s environmental impact grows, so does the demand for sustainable practices. Companies seen as environmentally responsible often enjoy better public perception and customer loyalty.

Companies with strong sustainability practices face lower regulatory risk and potentially better access to capital markets. Investors are increasingly looking for ESG (Environmental, Social, and Governance) compliant companies, making sustainable energy practices a financial imperative. The "Ratepayer Protection Pledge" signed by tech giants represents an acknowledgment of these risks, signaling a commitment to broader energy responsibility. This proactive approach helps mitigate potential fines, legal challenges, and brand erosion.

AI Energy Cost & Profit System Map

- Inputs:

  - High CapEx (75% projected increase for 2026)

  - Rising OpEx (40-60% electricity cost increase since 2023)

  - Global AI Power Demand (29.6 GW in 2025)

  - Regulatory Scrutiny & Public Pressure

- Processing Core (Big Tech Data Centers):

  - AI Workloads & GPU Usage (17.1M GPUs by early 2026)

  - Cooling Systems (Air vs. Liquid)

  - Power Distribution Units

  - AI-driven Energy Management Platforms

- Outputs:

  - Revenue Growth (reported by 58% of organizations with integrated AI)

  - Profit Margin (Pressured without efficiency)

  - PUE (1.1 target with liquid cooling)

  - Cost Savings (20-30% via liquid cooling)

  - Monetization (Energy management market of $10.5B)

  - Brand Reputation & Compliance

- Feedback Loops:

  - Efficiency gains enable more AI deployment.

  - Increased deployment drives higher energy demand.

  - Regulatory actions influence investment decisions.

  - Market demand for green AI influences product development.

Risks, Pitfalls, and Myths vs. Facts of AI Energy Consumption

What Can Go Wrong: Major Risks to Big Tech Profits

- Downward shift in AI sentiment: A decline in investor confidence in AI could have an outsized impact on the wider market and Big Tech valuations. Such a shift could lead to reduced funding for new AI projects.

- Unsustainable energy demands: The rapid increase in AI’s global energy consumption (29.6 GW in 2025) could outpace energy supply infrastructure. This could lead to energy shortages or increased costs.

- Profitability erosion: Without balancing high compute costs with energy efficiency, massive capital expenditures could erode Big Tech profits. Investments must be carefully managed to ensure returns.

- Regulatory backlash: Growing concerns over AI’s energy demands could lead to regulatory interventions that increase compliance costs. Governments may impose carbon taxes or stricter energy efficiency standards.

- Job displacement and economic instability: Significant job disruption could occur without corresponding job creation, leading to social unrest and negative public perception of AI. Addressing AI’s impact on software engineer jobs is crucial.

- Runaway utility rates: Grid upgrade costs could lead to increased utility bills despite protection pledges. Consumers and businesses might bear the burden of infrastructure investments.

Common Mistakes in Managing AI’s Energy Footprint

Avoiding common pitfalls is crucial for Big Tech to effectively manage the AI energy consumption impact on their profits. Strategic missteps can quickly amplify costs and undermine sustainability efforts.

- Underestimating energy infrastructure needs leading to bottlenecks and delays. This often results in expensive emergency upgrades.

- Ignoring PUE improvements and missing opportunities to reduce operational costs. A lack of focus on efficiency is a missed financial opportunity.

- Focusing solely on immediate gains while overlooking long-term environmental impacts. Short-sighted strategies can lead to future regulatory and reputational issues.

- Centralizing compute without distributed energy strategies. Over-reliance on a single energy source or location poses significant risks.

Myths vs. Facts: Unpacking AI Energy Misconceptions

Understanding the reality behind common AI energy misconceptions is vital for Big Tech to make informed strategic decisions. Separating fact from fiction helps in developing effective and realistic energy management plans.

| Myth | Fact |
|---|---|
| AI will inherently make everything more efficient, including energy use | While AI can optimize specific tasks, the overall scale of deployment is driving net increases in energy consumption. |
| Big Tech’s pockets are infinite, so energy costs won’t matter | The projected 75% CapEx increase in 2026 directly pressures profit margins, making efficiency crucial. |
| Renewable energy fully offsets AI’s carbon footprint | Renewable generation can be intermittent, requiring grid-level storage and backup, and building new infrastructure still carries embodied carbon. |
| AI models are too complex to be energy-optimized | Architectural innovations, quantization, and specialized hardware can significantly reduce energy per inference, but overall scale remains the primary challenge. |

FAQ: Your Questions About AI Energy Consumption and Big Tech Profits

How much energy does AI consume globally?

Global AI power demand reached 29.6 GW in 2025, and the computing capacity behind it grew about 3.3x per year to 17.1 million GPUs by early 2026. Drawn around the clock, 29.6 GW would amount to roughly 260 TWh a year, on the order of 1% of global electricity consumption. This rapid growth highlights the escalating demand on energy grids and the increasing carbon footprint of AI.

How does AI energy consumption affect Big Tech’s capital expenditure?

Capital expenditure by five large technology companies exceeded $400 billion in 2025 and is projected to rise by a further 75% in 2026, largely because of AI data center investment. This massive investment requirement directly affects profitability and limits resources available for other growth initiatives. Big Tech companies must allocate substantial capital to build and upgrade facilities capable of handling intense AI workloads, including specialized cooling and power systems.

Can AI integration still lead to profit for Big Tech despite high energy costs?

Yes, but profitability hinges on balancing AI-driven revenue growth (58% of organizations with fully integrated AI report revenue growth) with stringent energy efficiency and infrastructure optimization. Unchecked energy costs can erode, or even erase, revenue gains from AI implementation. Companies must strategically invest in technologies like liquid cooling and AI-driven energy management to maintain healthy profit margins.

What is the ‘AI energy paradox’?

The AI energy paradox describes how, even as individual AI tasks become more energy-efficient, overall energy consumption rises sharply because AI is deployed so widely and used so heavily. Optimized algorithms reduce energy per task, but the growing volume of AI operations worldwide pushes net consumption upward, challenging global sustainability efforts.

What is PUE, and why is it important for Big Tech data centers?

PUE (Power Usage Effectiveness) measures data center energy efficiency. It is calculated by dividing total facility power by IT equipment power. A lower PUE (e.g., 1.1 target for hyperscale with liquid cooling) translates directly into lower operational costs and better profit margins for Big Tech companies operating large-scale facilities. Improving PUE is a critical strategy for managing the significant energy expenses associated with AI.

Are tech companies addressing the rising utility rates caused by AI data centers?

While some tech giants have signed ‘Ratepayer Protection Pledges,’ the cost of upgrading the grid to support massive AI data centers could still lead to increased utility bills for consumers, creating a potential PR challenge for Big Tech. These pledges aim to mitigate consumer impact, but the underlying infrastructure costs remain substantial. Companies are exploring various avenues, including investment in renewable energy sources and local microgrids, to manage and offset these costs.

References: Sources for AI Energy Consumption and Profit Impact

- BusinessToday, 2026-04-14: Global AI computing capacity and energy demand statistics

- IEA News, 2025: Capital expenditure projections and data center investment trends

- Grant Thornton, 2026 AI Impact Survey: Revenue growth statistics for AI-integrated organizations

- Wikipedia, ‘Environmental impact of artificial intelligence’: Microsoft emissions data

- Daily AI & Tech Trends: Market size projections for AI in energy management

- The Motley Fool: PUE statistics and efficiency technology analysis

- IEA – Key Questions on Energy and AI: User growth and revenue statistics

- The AI Journal: Time-to-power comparisons and modular solution benefits

- Reuters, The Globe and Mail: AI sentiment and market impact analysis

- Brookings: Regulatory landscape and energy demand concerns

- The Guardian: Job displacement and economic impact analysis

- Unboxfuture.com: Utility rate impacts and grid upgrade costs

- Global Business Outlook: AI energy paradox analysis

- Domain-b.com: Profitability pressure from infrastructure costs