

AI-generated code introduces immediate and significant business risks due to security vulnerabilities, the accumulation of technical and comprehension debt, and rampant data exposure through unregulated use. As of early 2026, over 51% of GitHub commits are AI-assisted, creating a “code overload” that developers and security teams cannot sustainably manage. This guide details every risk, backed by 2026 data, and provides structured frameworks for mitigation.

The core dangers are quantifiable: 46% of AI-generated code contains vulnerabilities, it accounts for 81% of application security blind spots, and 73% of AI systems are exposed to prompt injection attacks. Half of all organizations lack policies to govern this activity, leaving customer data, API keys, and intellectual property exposed. This is not a future problem; it is a present operational crisis that demands new governance, security practices, and developer workflows.

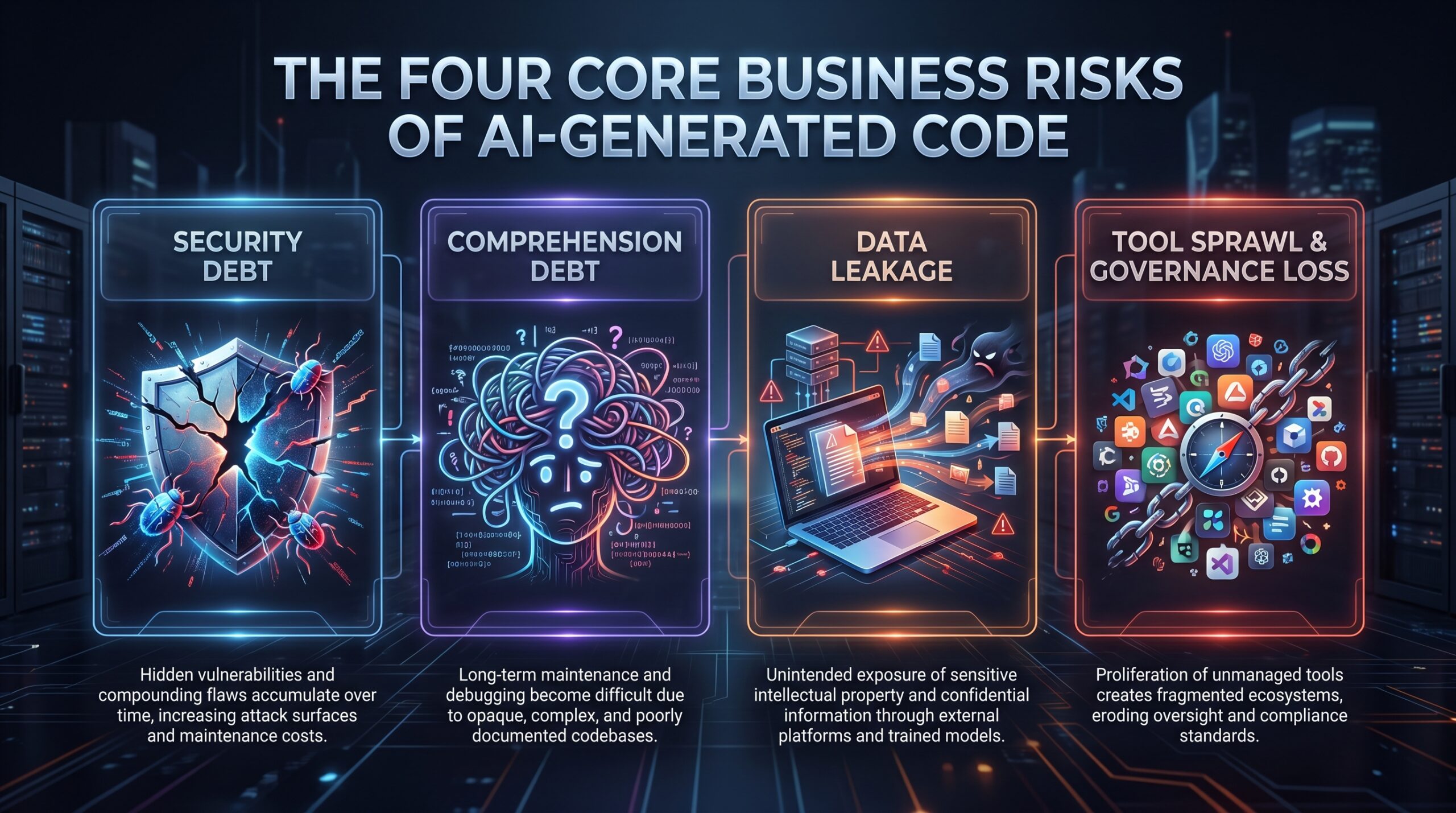

Section 1: Defining the Core Risks of AI-Generated Code

Understanding the business risk of AI-generated code requires moving beyond generic warnings about “bias” or “hallucinations.” The real business impact stems from four concrete, interconnected categories: security debt, comprehension debt, data leakage, and unmanaged tool proliferation.

1.1 Security Vulnerabilities and the "Nearly Right" Code Problem

AI coding tools like GitHub Copilot are prolific but imprecise generators of “AI slop”—code that functions but is insecure, inefficient, or non-robust. The business impact isn’t just bugs; it’s a systemic increase in your attack surface. A 2026 report by Cycode found that AI-generated code was responsible for 81% of application security blind spots. This isn’t minor; it’s the primary source of new vulnerabilities entering codebases.

These vulnerabilities are often subtle. An AI might generate an SQL query that is technically correct but vulnerable to injection because it concatenates user input. It might create an API endpoint without proper authentication checks or leave hardcoded placeholder credentials in configuration files. The Harness field CTO noted that 46% of AI-generated code contains vulnerabilities. This rate is unsustainable for any security team performing manual reviews.
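
To make the SQL example above concrete, here is a minimal sketch using Python's standard `sqlite3` module. The table, the function names, and the payload are invented for illustration; the point is only the contrast between concatenating user input and binding it as a parameter.

```python
import sqlite3


def find_user_unsafe(conn, username):
    # Vulnerable: user input is concatenated straight into the SQL text,
    # so quotes inside `username` can change the structure of the query.
    query = "SELECT id, name FROM users WHERE name = '" + username + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # Safer: the driver binds the value via the "?" placeholder,
    # so it is treated as data and never parsed as SQL.
    return conn.execute(
        "SELECT id, name FROM users WHERE name = ?", (username,)
    ).fetchall()


def make_demo_db():
    # Hypothetical two-row table used only for this demonstration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
    conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])
    return conn


if __name__ == "__main__":
    conn = make_demo_db()
    payload = "nobody' OR '1'='1"
    print(len(find_user_unsafe(conn, payload)))  # 2: every row is returned
    print(len(find_user_safe(conn, payload)))    # 0: no user has that literal name
```

Both functions return the same result for ordinary input, which is why a quick functional test does not reveal the difference; only a hostile input does.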

Prompt injection adds a new attack vector. In 2026 security audits, 73% of AI systems showed exposure to this flaw, where malicious input manipulates the model’s output. An AI coding assistant could be tricked into generating malicious code, revealing secrets from its training data, or bypassing guardrails. This risk extends beyond the generated code to the AI tools themselves.

1.2 Comprehension Debt: The Hidden Long-Term Cost

Comprehension debt is the effort required for a developer to understand, validate, and integrate AI-generated code, even if it appears correct on the surface. This debt accrues silently and compounds. A developer might accept a 50-line AI-generated function that works in a test, but if they don’t fully grasp its edge cases or architectural fit, they incur debt. When that function fails in production or needs modification six months later, that debt is paid “with interest” through extended debugging and refactoring.

This debt directly impacts business velocity and product stability. Teams ship faster initially but later experience a steep slowdown as they grapple with code they don’t fully own. It also concentrates risk in a small number of senior engineers who possess the context to review AI output, creating bottlenecks and single points of failure. Emerging AI agents for developers aim to alleviate some of this, but careful oversight remains crucial.

1.3 Data Exposure Through Shadow AI and Local Tooling

Shadow AI—the unauthorized use of AI tools—is rampant. Developers, seeking efficiency, paste proprietary code, customer data, or API keys into public AI chat interfaces or use unapproved local tools. 50% of organizations lack policies for handling sensitive data in AI workflows, making this a widespread, uncontrolled data leak. A developer debugging a script might paste a database connection string containing credentials directly into ChatGPT, exposing it to the model provider and potentially other users.

A 2026-specific risk vector is the shift to local AI tooling. As reported in The New York Times in April 2026, AI coding tools often perform better on laptops than in secured, web-based cloud environments. This prompts engineers to download entire company codebases to their local machines to use with tools like GitHub Copilot. If that laptop is lost, stolen, or compromised, the thief gains access to the complete corporate intellectual property repository, not just individual files.

1.4 Tool Sprawl and the Loss of Governance

Without centralized governance, organizations experience AI coding tool sprawl. Different teams use Copilot, Claude Code, Amazon CodeWhisperer, and various open-source models, each with different data handling policies, security postures, and licensing implications. This fragmentation makes aggregate risk assessment impossible. You cannot secure what you cannot see. The lack of a unified policy leads to inconsistent code quality, license violations from AI-suggested snippets, and an inability to perform post-incident audits to see what code was generated by which tool.

Section 2: Quantifying the Impact: 2026 Statistics and Trends

The scale of AI code adoption has transformed risks from theoretical to operational in 2026. The data shows a paradigm shift in software creation, with corresponding gaps in management and security.

| Trend / Metric | 2026 Data Point | Business Risk Implication |
|---|---|---|
| Adoption Volume | Over 51% of GitHub commits are AI-generated/assisted. | "Code overload" overwhelms review capacity, making manual security and quality gates ineffective. |
| Security Blind Spots | AI code causes 81% of application security blind spots (Cycode). | Traditional SAST/DAST tools and processes are missing the primary source of new vulnerabilities. |
| Vulnerability Rate | 46% of AI-generated code contains vulnerabilities (Harness). | A near-majority of new code introduces potential exploits, demanding new review methodologies. |
| Prompt Injection Exposure | 73% of AI systems are exposed in audits. | The AI tools themselves are a vulnerable attack surface, requiring infrastructure security. |
| Policy Gap | 50% of orgs lack sensitive data policies for AI workflows. | Unmitigated data leakage is occurring at an organizational level, risking compliance breaches. |
| Corporate Adoption | Firms like Anthropic generate "almost all" code using AI. | Demonstrates the scale of dependency; a flaw in the AI toolchain could cripple development. |
| Local Development Risk | Engineers download full codebases for local AI tool use. | Concentrates IP theft risk on individual endpoints, a severe vector for corporate espionage. |

The “Big Bang” of AI code has created a volume problem. The throughput of code generation has surpassed human capacity for comprehension and review. This isn’t just about more bugs; it’s about a fundamental mismatch between the pace of creation and the pace of responsible engineering. The OWASP Gen AI Security Project’s Q1 2026 Exploit Round-up Report catalogs the evolving threats at this DevOps-SecOps intersection, highlighting that the threat landscape is maturing rapidly.

Section 3: The AI-Generated Code Risk Assessment Framework

To systematically address the business risk of AI-generated code, you need a structured assessment framework. This moves you from reactive firefighting to proactive governance.

3.1 Immediate Operational Risks (Next 90 Days)

These are the fires already burning that threaten outages, data breaches, or compliance failures.

- Unreviewed Code in Production: AI-generated code that bypassed meaningful human review is live. It likely contains vulnerabilities, inefficiencies, or licensing issues.

- Active Data Leakage: Employees are using unvetted AI tools with sensitive data. Credentials, PII, and source code are being sent to external AI providers without logs or controls.

- IP on Compromisable Endpoints: Full codebases reside on developer laptops with varying security postures, creating a massive attack surface for data exfiltration.

- Tool Sprawl Without Visibility: No central registry exists of which teams use which AI coding assistants, making incident response and policy enforcement impossible.

3.2 Strategic Business Risks (6-18 Months)

These are accruing liabilities that will impact product roadmaps, team morale, and financial performance.

- Accumulated Comprehension Debt: The codebase becomes a “black box” that only the AI “understands.” Feature development slows, bug rates increase, and senior developer burnout rises as they become the only effective reviewers.

- Vendor Lock-in & Toolchain Collapse: Over-dependence on a single AI provider’s models and idioms creates architectural lock-in. If the provider changes pricing, deprecates features, or has a security incident, your development process grinds to a halt.

- Erosion of Engineering Culture: The role of the “builder” engineer—who understands systems deeply—is devalued, leading to identity loss and attrition among your most valuable senior staff. “Shippers” who prioritize output over quality accelerate technical debt.

- Legal & Licensing Liabilities: AI tools can generate code that plagiarizes licensed open-source components without proper attribution. Companies face potential copyright infringement lawsuits and may fail compliance audits.

3.3 Conducting Your Risk Audit: A Checklist

Use this checklist to gauge your organization’s exposure.

Governance & Policy:

- Do you have a formally documented policy (e.g., a “Company Policy AI-2026-03”) governing AI coding assistant use?

- Does the policy explicitly forbid input of sensitive data (credentials, PII, source code) into public AI tools?

- Are there approved vs. banned tool lists, and are they communicated and enforced?

- Have you addressed code ownership and licensing for AI-assisted work, including duplication filtering requirements?

Security & Data:

- Can you audit which AI tools have been used and on what code/data?

- Have you implemented technical controls (proxies, DLP) to prevent data exfiltration to unauthorized AI APIs?

- Is AI-generated code tagged or identifiable in your repository for prioritized security scanning?

- Have you assessed the prompt injection security of any internal AI applications?

Development Process:

- Is AI-generated code subject to a mandatory, rigorous human review process different from standard PR reviews?

- Do you track metrics on review time for AI vs. human-written code to quantify comprehension debt?

- Have you trained developers on the specific pitfalls of reviewing AI code (e.g., checking for hardcoded values, authZ gaps, sloppy error handling)?

- Do you have a secure, approved method for developers to use AI tools without downloading entire codebases locally?

Section 4: Mitigation Strategies and Implementation

Mitigating the business risk of AI-generated code requires a multi-layered approach combining policy, technology, and process change.

4.1 Establishing AI Coding Governance: The Policy Layer

Your policy is the foundation. It should be practical, not prohibitive.

- Create an Acceptable Use Policy (AUP): Model it on emerging standards like the referenced “Company Policy AI-2026-03.” Key mandates should include: a ban on inputting sensitive corporate or customer data into non-contracted AI services; a requirement to use enterprise accounts (like GitHub Copilot Business) that offer data protection guarantees; and rules for license compliance and duplication filtering.

- Maintain an Approved Tools Registry: Centralize procurement and security review of AI coding tools. Only tools that commit to data privacy (no training on your inputs), offer audit logs, and integrate with your SSO and DLP systems should be approved.

- Define Code Ownership and Licensing: State clearly that code written using company-provisioned AI tools is company IP. Mandate that developers verify the provenance and license of any AI-suggested code blocks longer than a few lines to avoid introducing licensing conflicts.

4.2 Technical Controls for Security and Data Loss Prevention

Policy alone is insufficient. You need enforceable technical guardrails.

- Deploy a Cloud-Based, Secure Development Environment: The single most effective control to mitigate the “local laptop” risk is to provide a powerful, AI-enabled cloud development environment (like GitHub Codespaces, Gitpod, or managed cloud VMs). This keeps code off endpoints and allows you to control tooling, network egress, and data flows centrally.

- Implement API-level Data Loss Prevention (DLP): Use a secure web gateway or specialized DLP tool to block or log requests to public AI API endpoints (OpenAI, Anthropic) from corporate networks unless from authorized service accounts. Tools like Nightfall AI or built-in features in enterprise browsers can scan for and redact sensitive data before it reaches an AI prompt.

- Tag and Scan AI-Generated Code: Integrate a pre-commit hook or CI step that uses tools like Cycode or SpectralOps to detect patterns of AI-generated code. Route these commits to specialized security scan pipelines with stricter rules. Prioritize them in SAST tools and mandate additional manual review steps.
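
As a rough illustration of the "scan for and redact sensitive data before it reaches an AI prompt" control described above, the sketch below masks a few secret shapes with regular expressions. It is not how Nightfall AI or any other named product works; the pattern list, the labels, and the `redact` function are assumptions made for this example, and real DLP detectors are far broader.

```python
import re

# Illustrative detectors only; these three patterns are assumptions for the
# sketch and are far narrower than what a commercial DLP tool ships.
SECRET_PATTERNS = [
    # AWS access key IDs for long-term credentials: "AKIA" + 16 characters.
    ("aws_access_key_id", re.compile(r"\bAKIA[0-9A-Z]{16}\b")),
    # Credentials embedded in a URL, e.g. postgres://user:password@host/db
    ("url_credentials", re.compile(r"(?<=://)[^\s:/@]+:[^\s@/]+(?=@)")),
    # "password = value" style assignments for a few common secret names.
    ("assigned_secret", re.compile(
        r"\b(?:password|passwd|secret|api_key|token)\s*[=:]\s*\S+",
        re.IGNORECASE)),
]


def redact(prompt):
    """Return (redacted_prompt, findings) where findings lists matched labels."""
    findings = []
    for label, pattern in SECRET_PATTERNS:
        def _mask(match, label=label):
            # Record what was found so the event can be logged or blocked.
            findings.append(label)
            return f"[REDACTED:{label}]"
        prompt = pattern.sub(_mask, prompt)
    return prompt, findings


if __name__ == "__main__":
    text, found = redact(
        "Why does postgres://app:s3cr3t@db.internal/orders time out?"
    )
    print(text)
    print(found)
```

A gateway could use the `findings` list to decide between redacting and forwarding, blocking outright, or simply logging the event for the audit trail.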

4.3 Process Integration: The Human-in-the-Loop Review

Revamp your code review process to account for comprehension debt.

- Mandate "AI-Generated" Labels: Require developers to tag any PR containing significant AI-generated code. This signals to reviewers to shift their mindset from “does this work?” to “do I understand how this works, and is it secure?”

- Implement Pair Reviewing for AI Code: Critical or complex AI-generated modules should require review by two senior engineers—one focusing on functional correctness and one on security implications. This distributes the comprehension load.

- Create AI Code Review Checklists: Equip developers with specific questions to ask:

  - Are there any hardcoded secrets, URLs, or credentials?

  - Is error handling robust, or does it fail open?

  - Are authentication and authorization checks present and correct?

  - Does this code introduce new dependencies or licenses?

  - Is the algorithm efficient, or is it “AI slop” that will scale poorly?
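
Two of the checklist questions above (hardcoded secrets and error handling that fails open) can be partially pre-screened before a human looks at the diff. The following is a minimal sketch using Python's standard `ast` module; the name hints and the `review_flags` function are hypothetical, and the check is a reviewer aid under those assumptions, not a replacement for SAST or for the review itself.

```python
import ast

# Variable-name fragments that suggest a secret; an assumption for this sketch.
SECRET_NAME_HINTS = ("password", "passwd", "secret", "api_key", "token")


def review_flags(source):
    """Return human-readable flags for a reviewer to double-check."""
    flags = []
    for node in ast.walk(ast.parse(source)):
        # Checklist item: hardcoded secrets, e.g. API_KEY = "abc123".
        if (isinstance(node, ast.Assign)
                and isinstance(node.value, ast.Constant)
                and isinstance(node.value.value, str)
                and node.value.value):
            for target in node.targets:
                if isinstance(target, ast.Name) and any(
                        hint in target.id.lower() for hint in SECRET_NAME_HINTS):
                    flags.append(
                        f"line {node.lineno}: possible hardcoded secret in '{target.id}'")
        # Checklist item: handlers that are bare or silently swallow the error.
        if isinstance(node, ast.ExceptHandler):
            swallows = all(isinstance(stmt, ast.Pass) for stmt in node.body)
            if node.type is None or swallows:
                flags.append(
                    f"line {node.lineno}: except block is bare or swallows the error")
    return flags


# Hypothetical AI-suggested snippet: on any error it falls through to "allowed".
SAMPLE = '''
API_KEY = "sk-test-123"

def is_allowed(user):
    try:
        return check_permission(user)
    except Exception:
        pass
    return True
'''

if __name__ == "__main__":
    for flag in review_flags(SAMPLE):
        print(flag)
```

The remaining questions (authorization correctness, licensing, algorithmic efficiency) depend on application context and still need a human reviewer.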

4.4 Tooling Comparison: Choosing the Right Guardrails

Not all security and governance tools are equal in addressing AI-specific risks. The table below compares approaches.

| Tool / Approach | Primary Function | Pros for AI Risk Mitigation | Cons / Gaps |
|---|---|---|---|
| Enterprise AI Coding Suites (GitHub Copilot Business, Amazon CodeWhisperer Professional) | AI code generation with enterprise controls. | Data from prompts is not used for model training; provides admin dashboards for usage; integrates with IDE. | Does not prevent "shadow AI" use of other tools; security of generated code is still the user’s responsibility. |
| Cloud Development Environments (GitHub Codespaces, Gitpod) | Hosted, containerized dev environments. | Keeps code off local laptops; environment tooling is controlled and auditable; integrates with VSCode extensions. | Can be more expensive; requires cultural shift; potential latency concerns. |
| API Security / DLP Gateways (Netskope, Zscaler, Palo Alto Networks) | Monitor and control cloud API traffic. | Can block or audit calls to unauthorized AI APIs; can integrate data pattern matching to redact secrets. | May be bypassed by local apps or via personal devices; requires fine-tuning to avoid blocking legitimate use. |
| Code Security Scanners with AI Awareness (Cycode, Snyk, Checkmarx) | SAST, SCA, and secret scanning. | Some now claim to identify AI-generated code patterns and associated risks; integrates into CI/CD. | May produce false positives/negatives on "AI slop"; a reactive, not preventive, control. |
| Internal AI Proxy / Gateway (Self-built using OpenAI’s API, Helicone) | Routes all internal AI API calls through a central proxy. | Enforces usage policies, logs all prompts/completions, manages API keys, enables caching. | Requires in-house development and maintenance; adds complexity. |

The most robust strategy combines elements from multiple rows: an enterprise AI suite for primary use, a CDE to contain code, a DLP gateway to catch policy violations, and a specialized scanner in CI/CD.

Section 5: Case Studies and Real-World Scenarios

5.1 Case Study: The FinTech Data Leak

Scenario: A mid-sized FinTech company encouraged developers to “use AI to boost productivity” but provided no guidelines or approved tools. A developer, tasked with debugging a transaction processing script, copied a log file containing 100 recent transactions (with customer names, account numbers, and amounts) and pasted it into a free-tier ChatGPT session to “ask for help finding the bug.”

Impact: The customer PII was now in OpenAI’s systems, potentially used for model training and visible to their employees for abuse monitoring. This violated GDPR and CCPA. The breach was discovered months later during an internal audit when the developer admitted the practice. The cost included regulatory fines, mandatory customer notification, credit monitoring services, and severe reputational damage.

Mitigation Path (Retrospective): The company implemented a three-step control: 1) A clear policy banning PII/PCI data in external AI tools. 2) A technical block on the most common AI website domains at the firewall, with an exception process. 3) The provision of a secure, internal sandbox with a controlled Azure OpenAI instance for similar tasks, with logging and data retention policies aligned with regulations.

5.2 Case Study: The E-commerce Platform Outage

Scenario: An e-commerce company’s development team used AI heavily to build a new inventory management microservice. Under pressure to meet a Black Friday deadline, they accepted large swaths of AI-generated code with cursory reviews. The code worked in pre-production but contained subtle “AI slop”: inefficient database queries and poor connection pooling logic.

Impact: On Cyber Monday, under real load, the service latency spiked, database connections were exhausted, and the service crashed. The outage lasted 4 hours during peak sales, resulting in millions in lost revenue. The post-mortem revealed the team didn’t fully understand the AI-generated data access layer, turning a 30-minute debugging task into a multi-hour crisis. The impact on the engineering team was also significant: morale plummeted.

Mitigation Path (Retrospective): The company introduced an “AI-Generated Module” checklist for production readiness, including mandatory load testing and performance profiling for any service where >30% of the code was AI-assisted. They also started tracking “Mean Time to Understand” (MTTU) for bug fixes in AI-generated vs. human code to make the comprehension debt visible to management.
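
The two measures in this retrospective can be sketched in a few lines. The case study does not say how MTTU was measured, so the record shape below (an `origin` label plus `minutes_to_understand`, taken here as the time from picking up a bug to understanding its root cause) is an assumption, as are the function names. Only the 30% threshold comes from the text.

```python
from statistics import mean

# From the case study: extra gates apply when >30% of the code is AI-assisted.
AI_SHARE_THRESHOLD = 0.30


def needs_extra_gates(ai_lines, total_lines):
    """True when a service's AI-assisted share exceeds the threshold."""
    return total_lines > 0 and ai_lines / total_lines > AI_SHARE_THRESHOLD


def mttu_by_origin(fixes):
    """Mean minutes-to-understand per code origin ("ai" or "human")."""
    grouped = {}
    for fix in fixes:
        grouped.setdefault(fix["origin"], []).append(fix["minutes_to_understand"])
    return {origin: mean(values) for origin, values in grouped.items()}


if __name__ == "__main__":
    # Hypothetical bug-fix records, not data from the case study.
    fixes = [
        {"origin": "ai", "minutes_to_understand": 120},
        {"origin": "ai", "minutes_to_understand": 180},
        {"origin": "human", "minutes_to_understand": 30},
    ]
    print(mttu_by_origin(fixes))
    print(needs_extra_gates(ai_lines=400, total_lines=1000))
```

Reporting the two means side by side is what makes comprehension debt visible: a widening gap between them is the signal management would watch.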

Case Study Retrospective: Lessons Learned from AI Code Risks

- FinTech Data Leak: Clear policies banning sensitive data, technical blocks on unauthorized AI domains, and providing secure internal AI sandboxes are crucial.

- E-commerce Platform Outage: Mandatory load testing and performance profiling for AI-assisted services, along with tracking ‘Mean Time to Understand’ (MTTU) for AI-generated code, are essential for preventing outages and managing comprehension debt.

Section 6: The Future Landscape and Evolving Threats (2026 and Beyond)

The risk landscape is dynamic. Based on Q1 2026 trends from the OWASP GenAI project and industry reports, several evolution paths are clear.

- Specialized AI Security Testing Tools: The market will see tools designed specifically to fuzz-test AI-generated code, simulate prompt injection attacks on AI-assisted development environments, and detect model drift in code-generation quality.

- Regulatory Pressure: As data leaks and incidents multiply, expect sectoral regulators (for finance, healthcare) to issue specific guidance or rules on AI-assisted software development, similar to existing rules for secure SDLC.

- Rise of the "AI Security Engineer": A new specialization will emerge, blending application security, data privacy, and machine learning ops (MLOps) skills to design and audit secure AI coding toolchains.

- Licensing and IP Litigation: Landmark court cases will challenge the ownership and provenance of AI-generated code, forcing companies to adopt rigorous software composition analysis (SCA) and artifact provenance systems for all code, regardless of origin.

- Consolidation of the AI Toolchain: The current sprawl will become unmanageable. Organizations will demand unified platforms that combine secure AI coding, environment management, policy enforcement, and audit logging from a single vendor or integrated suite. This will resemble the evolution seen in AI agent frameworks.

Proactive organizations will start piloting tools in these areas now and will include AI code risk as a standing agenda item in their security and architecture review boards.

Section 7: Action Plan and Risk Mitigation Checklist

Moving from awareness to action requires a phased plan. Do not attempt to implement everything at once.

Phase 1: Immediate Containment (Weeks 1-2)

- Issue an Interim Policy Directive: Immediately communicate that no sensitive data (credentials, PII, source code) is to be entered into public AI tools until a formal policy is established. Designate a point of contact for questions.

- Conduct a Lightweight Risk Audit: Use the checklist in Section 3.3 to interview lead developers and security staff. Identify the most glaring gap (e.g., “we have no idea what tools are being used”).

- Enable Basic Logging: If using an enterprise AI tool like Copilot Business, turn on audit logs. If not, investigate network logs for traffic to major AI API endpoints to gauge shadow AI usage.
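
For the network-log step above, a first pass can be as simple as counting requests to known AI API hosts per user. The sketch below assumes a whitespace-separated proxy log of the form `<timestamp> <user> <host> <bytes>`; that format, the user names, and the function name are hypothetical, so adapt the parsing to whatever your gateway actually emits.

```python
from collections import Counter

# Public API hosts for the two providers named in this guide; extend the set
# to match your own inventory of tools.
AI_API_HOSTS = {"api.openai.com", "api.anthropic.com"}


def shadow_ai_usage(log_lines):
    """Count requests per (user, host) for traffic to known AI API hosts."""
    counts = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 3:
            continue  # skip malformed lines rather than guessing
        user, host = parts[1], parts[2].lower()
        if host in AI_API_HOSTS:
            counts[(user, host)] += 1
    return counts


if __name__ == "__main__":
    # Hypothetical log lines in the assumed format.
    sample = [
        "2026-01-12T09:00:01Z dev1 api.openai.com 5120",
        "2026-01-12T09:00:07Z dev1 api.openai.com 2048",
        "2026-01-12T09:01:30Z dev2 api.anthropic.com 4096",
        "2026-01-12T09:02:11Z dev3 github.com 1024",
    ]
    for (user, host), n in shadow_ai_usage(sample).most_common():
        print(user, host, n)
```

This only gauges volume from the corporate network; traffic from personal devices or local models will not appear in it.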

Phase 2: Foundation Building (Months 1-3)

- Draft and Socialize Formal Policy: Develop your “AI-2026-03” equivalent. Involve legal, security, and engineering leadership. Focus on data privacy, approved tools, and code ownership.

- Procure an Enterprise-Grade Tool: Select and roll out a single, approved enterprise AI coding assistant (e.g., GitHub Copilot Business) to provide a safe, sanctioned alternative.

- Launch a Developer Training Module: Create a 30-minute training on the risks of AI-generated code, focusing on comprehension debt and secure prompting practices. Make completion mandatory for engineers.

Phase 3: Advanced Controls and Integration (Quarter 2 and Beyond)

- Implement Technical DLP/Blocking: Configure network or endpoint controls to deter use of unapproved AI web services and APIs.

- Pilot a Cloud Development Environment (CDE): Select a team to trial a CDE to mitigate local code storage risks and evaluate impact on developer experience and security.

- Enhance CI/CD Security Gates: Integrate a security scanner with AI-code awareness and create a mandatory PR template that includes an “AI-Generated Code” checkbox, triggering a more rigorous review workflow.
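
The "AI-Generated Code" checkbox can drive the stricter workflow mechanically. The sketch below assumes the PR template uses a Markdown task-list item with that exact label, and that a CI step receives the PR description as a string. The two-approval rule mirrors the pair-review suggestion in Section 4.3; the single-approval baseline and the `security-scan-strict` check name are placeholders.

```python
import re

# Matches a checked Markdown task-list item such as "- [x] AI-Generated Code".
AI_CHECKBOX = re.compile(
    r"^\s*[-*]\s*\[[xX]\]\s*AI-Generated Code\b", re.MULTILINE
)


def review_requirements(pr_body):
    """Derive review requirements from the PR description text."""
    ai_generated = bool(AI_CHECKBOX.search(pr_body))
    return {
        "ai_generated": ai_generated,
        # Two reviewers for AI-generated code; baseline of one is an assumption.
        "required_approvals": 2 if ai_generated else 1,
        # Hypothetical name of a stricter scan job in the pipeline.
        "extra_checks": ["security-scan-strict"] if ai_generated else [],
    }


if __name__ == "__main__":
    body = "## Summary\nAdds inventory sync.\n\n- [x] AI-Generated Code\n- [ ] Docs updated\n"
    print(review_requirements(body))
```

Because the checkbox is self-reported, this works best alongside the scanner-based detection mentioned in the same step rather than as the only signal.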

Final Risk Mitigation Checklist

Before considering your AI code risk managed, ensure you can answer “Yes” to these critical questions:

- Governance: Is there a published, enforceable policy governing AI tool use, data handling, and code ownership?

- Tooling: Do you provide a secure, approved AI coding tool as a primary, attractive option for developers?

- Data Loss Prevention: Do you have technical controls (DLP, gateway, monitored APIs) to prevent sensitive data from leaving to unauthorized AI services?

- Code Security: Is AI-generated code identifiable and subjected to enhanced security scans and mandatory, thorough human review?

- IP Protection: Is the primary development environment structured (e.g., using CDEs) to prevent entire codebases from being stored on easily-lost or compromised endpoints?

- Visibility: Can you generate a report showing which teams/projects are using which AI tools and at what volume?

- Training: Have your developers been trained on the specific risks and review techniques for AI-generated code?

Key Takeaways for Mitigating the Business Risk of AI-Generated Code

- Policy is Paramount: Establish clear, enforceable policies for AI tool usage, data handling, and code ownership before wide adoption.

- Technical Guardrails are Non-Negotiable: Implement DLP, secure cloud development environments (CDEs), and AI-aware security scanners to prevent data leaks and detect vulnerabilities.

- Human Oversight Remains Critical: Revamp code review processes to specifically address AI-generated code, focusing on comprehension, security, and efficiency.

- Stay Vigilant: The threat landscape is evolving; continuously monitor for new risks and adapt your mitigation strategies.

Conclusion and What to Do Next

Addressing the business risk of AI-generated code is not about stopping progress; it’s about managing the inevitable. The genie is out of the bottle: over half of GitHub commits are already AI-assisted. The competitive disadvantage will not come from using AI, but from using it recklessly, leading to catastrophic breaches, paralyzing technical debt, and the loss of core engineering intelligence.

Frequently Asked Questions (FAQ)

What is the biggest immediate business risk of AI-generated code?

The biggest immediate risk is data exposure through “shadow AI.” Developers pasting proprietary code, credentials, or customer data into public AI tools like ChatGPT create direct, preventable data leaks. With 50% of organizations lacking policies to govern this, it’s a widespread and active threat to compliance and security.

How does “comprehension debt” differ from technical debt?

Technical debt is the cost of reworking quick-and-dirty code. Comprehension debt is the hidden cost of understanding AI-generated code that appears correct but is opaque. It accumulates silently as developers accept code they don’t fully grasp, leading to longer debug times later and creating a codebase that only the AI model effectively “understands,” which hinders long-term maintainability.

Can’t AI tools themselves secure the code they generate?

No, this is a dangerous myth. While LLM providers are adding security-oriented features, the models cannot understand your specific application context, business logic, or architecture. Cycode’s finding that AI-generated code accounts for 81% of application security blind spots indicates that AI does not replace the need for human application security expertise and targeted security testing.

What is “AI slop” in coding?

“AI slop” refers to code that is functionally “nearly right” but is inefficient, insecure, or poorly structured. It passes a basic test but fails under scale, edge cases, or security scrutiny. It’s the primary contributor to both technical and comprehension debt, as it looks deceptively acceptable but requires significant human refinement to be production-ready.

How do we prevent developers from downloading entire codebases to their laptops for AI use?

The most effective technical control is to provide a powerful, sanctioned alternative: a Cloud Development Environment (CDE) like GitHub Codespaces. By giving developers a full-featured, AI-equipped coding environment in the cloud, you eliminate the performance incentive to work locally and keep all source code on secure, controlled infrastructure.

What should be in a company policy for AI coding assistants?

A robust policy, like the referenced “AI-2026-03,” should include: a list of approved tools; a strict prohibition on inputting sensitive data into unapproved services; rules for code ownership and licensing compliance (including duplication filtering); and requirements for labeling and conducting enhanced reviews on AI-generated code before merging.

Are some AI coding tools safer than others?

Yes. Enterprise-tier tools (GitHub Copilot Business, Amazon CodeWhisperer Professional) contractually agree not to use your prompt data or code snippets to train their public models. They also provide administrative controls and audit logs. Public, free-tier tools typically reserve the right to use your inputs for training, making them unsafe for any proprietary or sensitive data.