Qwen3.5-Omni is Alibaba’s latest multimodal AI model. It scales to hundreds of billions of parameters, supports a 256k context length, and offers audio-visual understanding and speech generation across 10 languages.

| Released by | Alibaba |
|---|---|
| Release date | |
| What it is | Multimodal AI model with audio-visual capabilities |
| Who it’s for | Developers and enterprises needing multimodal AI |
| Where to get it | Alibaba Cloud platform |
| Price | Not yet disclosed |

- Qwen3.5-Omni scales to hundreds of billions of parameters with 256k context length support [1]

- The model achieves state-of-the-art results across 215 audio and audio-visual benchmarks [1]

- ARIA technology dynamically aligns text and speech units for improved conversational stability [1]

- Supports multilingual understanding and speech generation across 10 languages with emotional nuance [1]

- Released as proprietary software, accessible through Alibaba’s platforms [8]

- Qwen3.5-Omni adopts a Hybrid Attention Mixture-of-Experts framework for both its Thinker and Talker components [1]

- The model processes over 10 hours of audio and 400 seconds of 720P video at 1 FPS

- ARIA technology addresses streaming speech synthesis instability through dynamic alignment

- Audio-Visual Vibe Coding enables direct programming from audio-visual instructions

- Proprietary release marks departure from Alibaba’s previous open-source model strategy

What is Qwen3.5-Omni

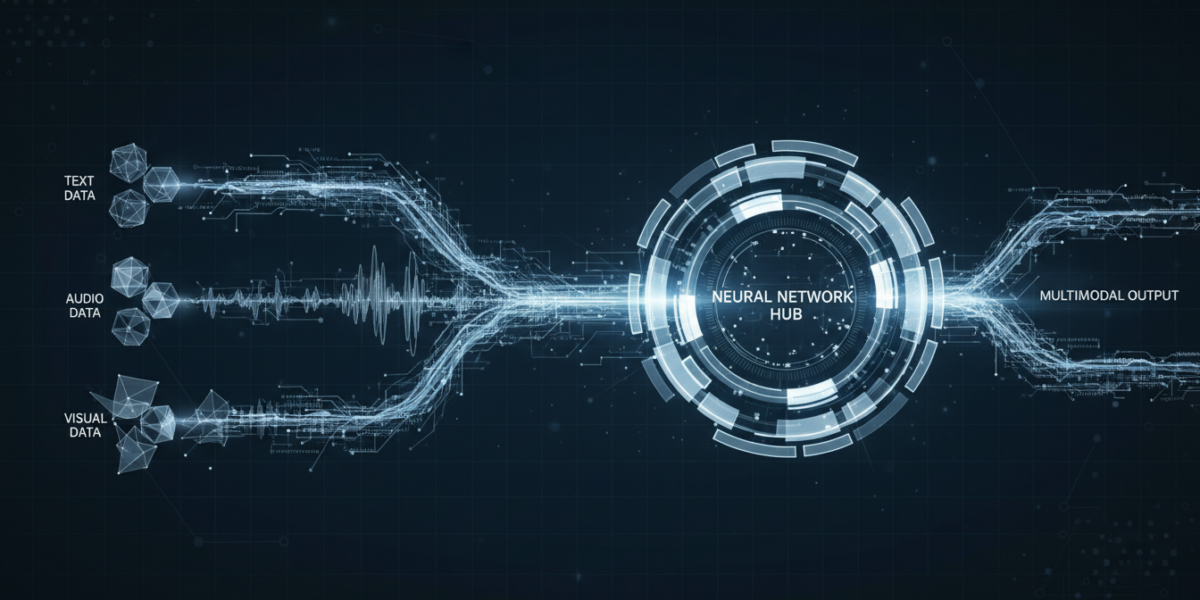

Qwen3.5-Omni is Alibaba’s multimodal AI model that processes text, audio, and visual content simultaneously. The model scales to hundreds of billions of parameters and supports a 256k context length [1]. Qwen3.5-Omni leverages a massive dataset comprising heterogeneous text-vision pairs and over 100 million hours of audio-visual content [1].

The model demonstrates robust omni-modality capabilities across multiple languages and interaction modes. Qwen3.5-Omni supports multilingual understanding and speech generation across 10 languages with human-like emotional nuance [1]. The system facilitates sophisticated interaction, supporting over 10 hours of audio understanding and 400 seconds of 720P video processing at 1 FPS [1].
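The input limits quoted above can be turned into a quick pre-flight check. The sketch below is illustrative only: it treats the quoted figures as hard caps (the source says "over 10 hours", so the audio cap is conservative), and the names `MediaBudget` and `check_media_budget` are hypothetical helpers, not part of any Alibaba SDK.

```python
from dataclasses import dataclass

# Figures quoted in the text, used here as illustrative caps.
MAX_AUDIO_SECONDS = 10 * 60 * 60  # "over 10 hours" of audio (conservative cap)
MAX_VIDEO_SECONDS = 400           # 400 seconds of 720P video
VIDEO_FPS = 1                     # video is sampled at 1 frame per second


@dataclass
class MediaBudget:
    audio_ok: bool
    video_ok: bool
    video_frames: int


def check_media_budget(audio_seconds: float, video_seconds: float) -> MediaBudget:
    """Compare clip durations with the quoted limits and count sampled frames."""
    frames = int(video_seconds * VIDEO_FPS)
    return MediaBudget(
        audio_ok=audio_seconds <= MAX_AUDIO_SECONDS,
        video_ok=video_seconds <= MAX_VIDEO_SECONDS,
        video_frames=frames,
    )


if __name__ == "__main__":
    # A two-hour recording plus a clip at the quoted video limit.
    print(check_media_budget(audio_seconds=2 * 3600, video_seconds=400))
```

At 1 FPS, a clip at the 400-second limit corresponds to 400 sampled frames, which is why the frame count equals the duration in seconds.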

What is new vs the previous version

Qwen3.5-Omni introduces several capabilities over its predecessor, Qwen3-Omni.

| Feature Category | Qwen3-Omni | Qwen3.5-Omni |
|---|---|---|
| Audio-Visual Captioning | Basic captioning | Controllable, structured captions with screenplay-level descriptions and automatic segmentation [2] |
| Real-time Interaction | Standard interaction | Semantic interruption, native turn-taking, end-to-end voice control over volume, speed, emotion, and voice cloning [2] |
| Speech Synthesis | Standard synthesis | ARIA technology for dynamic text-speech alignment and enhanced stability [1] |
| Programming Capability | Not available | Audio-Visual Vibe Coding for direct programming from audio-visual instructions [1] |
| Context Length | Not specified | 256k context length support [1] |

How does Qwen3.5-Omni work

Qwen3.5-Omni operates through a Hybrid Attention Mixture-of-Experts (MoE) framework for efficient processing.

- Architecture Foundation: The model employs a Hybrid Attention Mixture-of-Experts framework for both Thinker and Talker components, enabling efficient long-sequence inference [1]

- ARIA Integration: ARIA dynamically aligns text and speech units to address encoding efficiency discrepancies between text and speech tokenizers [1]

- Multimodal Processing: The system processes heterogeneous text-vision pairs and audio-visual content simultaneously through specialized attention mechanisms [1]

- Temporal Synchronization: The model generates script-level structured captions with precise temporal synchronization and automated scene segmentation [1]

- Language Support: Multilingual processing across 10 languages with emotional nuance recognition and generation capabilities [1]
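The Mixture-of-Experts idea named in the Architecture Foundation item can be sketched generically. This is a minimal illustration of top-k expert routing, not Qwen3.5-Omni's actual implementation: the source does not describe the router, the number of experts, or how the hybrid attention is built, so the function names, the toy experts, and `k=2` are all assumptions.

```python
import math
from typing import Callable, List, Sequence

Expert = Callable[[float], float]


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over a short list of scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(
    gate_scores: Sequence[float],
    experts: Sequence[Expert],
    x: float,
    k: int = 2,
) -> float:
    """Run only the k highest-scoring experts and mix their outputs.

    Experts outside the top k are never called, which is where the
    compute saving of a sparse MoE layer comes from.
    """
    ranked = sorted(range(len(experts)), key=lambda i: gate_scores[i], reverse=True)
    top = ranked[:k]
    weights = softmax([gate_scores[i] for i in top])
    return sum(w * experts[i](x) for w, i in zip(weights, top))


if __name__ == "__main__":
    # Three toy experts; a real layer would use learned feed-forward networks.
    toy_experts = [lambda v: v + 1.0, lambda v: 2.0 * v, lambda v: -v]
    print(route_top_k([0.0, 0.0, -5.0], toy_experts, x=3.0, k=2))
```

In this toy setup only two of the three experts run for the input, while all three still have to exist in memory. That trade-off (less compute per token, same total parameter count) is the usual reason MoE designs are described as efficient for inference.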

Benchmarks and evidence

Qwen3.5-Omni-plus achieves state-of-the-art results across 215 audio and audio-visual understanding benchmarks.

| Performance Area | Result | Comparison | Source |
|---|---|---|---|
| Audio Tasks | State-of-the-art | Surpasses Gemini-3.1 Pro in key audio tasks | [1] |
| Audio-Visual Understanding | Competitive | Matches Gemini-3.1 Pro in comprehensive understanding | [1] |
| Benchmark Coverage | 215 subtasks | Across audio and audio-visual understanding, reasoning, and interaction | [1] |
| Context Processing | 256k tokens | Extended context length support | [1] |
| Video Processing | 400 seconds | 720P video at 1 FPS processing capability | [1] |

Who should care

Builders

Developers building multimodal applications benefit from Qwen3.5-Omni’s comprehensive audio-visual processing capabilities. The model’s support for Audio-Visual Vibe Coding enables direct programming from audio-visual instructions [1]. Builders can leverage the 256k context length for complex, long-form multimodal applications [1].

Enterprise

Enterprises requiring sophisticated audio-visual understanding gain access to controllable captioning and real-time interaction features. The model’s multilingual support across 10 languages with emotional nuance serves global business needs [1]. Enterprise users can access Qwen3.5-Omni through Alibaba’s cloud platform [8].

End Users

End users experience enhanced conversational AI through ARIA’s improved speech synthesis stability and prosody. The model supports comprehensive real-time interaction with semantic interruption and voice control capabilities [2]. Users can interact with the model through chatbot websites and Alibaba’s platforms [8].

Investors

Investors should note Alibaba’s strategic shift from open-source to proprietary models with Qwen3.5-Omni’s release [8]. The model’s state-of-the-art performance across 215 benchmarks positions Alibaba competitively in the multimodal AI market [1].

How to use Qwen3.5-Omni today

Access to Qwen3.5-Omni is currently limited to Alibaba’s proprietary platforms.

- Platform Access: Visit Alibaba’s chatbot websites or access through the Alibaba Cloud platform [8]

- Account Setup: Create an Alibaba Cloud account to access enterprise-level features and APIs

- Integration: Use Alibaba’s provided APIs and SDKs for application integration

- Configuration: Configure multimodal inputs including text, audio, and visual content through the platform interface

- Testing: Start with basic audio-visual understanding tasks before implementing complex multimodal workflows
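The Integration and Configuration steps above might look roughly like the following. The source does not document the request format, so this sketch rests on stated assumptions: it builds an OpenAI-style chat payload with text, audio, and image parts, the model identifier `qwen3.5-omni` is hypothetical, and nothing is sent over the network. Check Alibaba Cloud's own documentation for the real endpoint, model name, and field layout before relying on any of it.

```python
import base64
import json
from typing import Any, Dict, List

# Assumption: placeholder model name, not confirmed by the source.
HYPOTHETICAL_MODEL_ID = "qwen3.5-omni"


def build_request(
    prompt: str,
    audio_bytes: bytes = b"",
    image_bytes: bytes = b"",
) -> Dict[str, Any]:
    """Assemble a multimodal chat payload (assumed OpenAI-style part layout)."""
    parts: List[Dict[str, Any]] = [{"type": "text", "text": prompt}]
    if audio_bytes:
        encoded_audio = base64.b64encode(audio_bytes).decode("ascii")
        parts.append(
            {"type": "input_audio",
             "input_audio": {"data": encoded_audio, "format": "wav"}}
        )
    if image_bytes:
        encoded_image = base64.b64encode(image_bytes).decode("ascii")
        parts.append(
            {"type": "image_url",
             "image_url": {"url": f"data:image/png;base64,{encoded_image}"}}
        )
    return {
        "model": HYPOTHETICAL_MODEL_ID,
        "messages": [{"role": "user", "content": parts}],
    }


if __name__ == "__main__":
    # Placeholder bytes stand in for a real WAV file.
    request = build_request("Describe what is said in this clip.", audio_bytes=b"\x00\x01")
    print(json.dumps(request, indent=2))
```

Keeping payload construction separate from the network call makes it easy to start with the basic audio-visual understanding tasks suggested in the Testing step and to inspect exactly what would be sent.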

Qwen3.5-Omni vs competitors

The table below compares Qwen3.5-Omni with Gemini-3.1 Pro and GPT-4 Omni on the attributes the sources report.

| Feature | Qwen3.5-Omni | Gemini-3.1 Pro | GPT-4 Omni |
|---|---|---|---|
| Parameters | Hundreds of billions [1] | Not disclosed | Not disclosed |
| Context Length | 256k tokens [1] | Not specified | 128k tokens |
| Audio Performance | Surpasses Gemini-3.1 Pro [1] | Baseline | Not specified |
| Languages Supported | 10 languages [1] | Multiple languages | Multiple languages |
| Video Processing | 400 seconds at 720P [1] | Not specified | Not specified |
| Availability | Proprietary [8] | Proprietary | Proprietary |

Risks, limits, and myths

- Proprietary Access: Limited availability through Alibaba’s platforms may restrict adoption compared to open-source alternatives

- Streaming Stability: Despite ARIA improvements, streaming speech synthesis may still experience occasional instability in complex scenarios

- Resource Requirements: Hundreds of billions of parameters require substantial computational resources for deployment and inference

- Language Limitations: Support limited to 10 languages may not cover all global use cases

- Benchmark Generalization: Performance on 215 benchmarks may not translate to all real-world applications

- Pricing Uncertainty: Undisclosed pricing model creates uncertainty for enterprise adoption planning

- Platform Dependency: Reliance on Alibaba’s infrastructure may pose vendor lock-in risks for enterprises
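The Resource Requirements item above can be made concrete with back-of-the-envelope arithmetic. The exact parameter count is not disclosed, so the 300 billion figure below is purely a hypothetical stand-in for "hundreds of billions", and `weight_memory_gib` is an illustrative helper. The estimate covers weights only and ignores activations, caches, and serving overhead.

```python
def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory needed to hold the weights alone, in GiB."""
    return num_params * bytes_per_param / (1024 ** 3)


if __name__ == "__main__":
    hypothetical_params = 300e9  # assumption: the real count is undisclosed
    for label, width in [("16-bit", 2), ("8-bit", 1)]:
        gib = weight_memory_gib(hypothetical_params, width)
        print(f"{label} weights: {gib:.1f} GiB")
```

Under that assumption, 16-bit weights alone come to about 559 GiB, well beyond a single accelerator. In a Mixture-of-Experts model only some experts run per token, but all expert weights generally still need to be held in memory.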

FAQ

What is Qwen3.5-Omni and how does it differ from previous models?

Qwen3.5-Omni is Alibaba’s latest multimodal AI model with hundreds of billions of parameters and 256k context length, featuring advanced audio-visual capabilities and ARIA speech synthesis technology [1].

When was Qwen3.5-Omni released and is it open source?

Qwen3.5-Omni was released as a proprietary model, marking Alibaba’s departure from open-source releases [8].

How many languages does Qwen3.5-Omni support?

Qwen3.5-Omni supports multilingual understanding and speech generation across 10 languages with human-like emotional nuance [1].

What is ARIA technology in Qwen3.5-Omni?

ARIA dynamically aligns text and speech units to address encoding efficiency discrepancies, significantly enhancing conversational speech stability and prosody with minimal latency impact [1].

How long can Qwen3.5-Omni process audio and video content?

Qwen3.5-Omni supports over 10 hours of audio understanding and 400 seconds of 720P video processing at 1 FPS [1].

What is Audio-Visual Vibe Coding in Qwen3.5-Omni?

Audio-Visual Vibe Coding is a new capability that enables direct programming based on audio-visual instructions, emerging as a unique feature in omnimodal models [1].

How does Qwen3.5-Omni compare to Gemini-3.1 Pro?

Qwen3.5-Omni surpasses Gemini-3.1 Pro in key audio tasks and matches it in comprehensive audio-visual understanding across 215 benchmarks [1].

Where can I access Qwen3.5-Omni?

Access to Qwen3.5-Omni is limited to chatbot websites and the Alibaba Cloud platform as a proprietary service [8].

What architecture does Qwen3.5-Omni use?

Qwen3.5-Omni employs a Hybrid Attention Mixture-of-Experts (MoE) framework for both Thinker and Talker components, enabling efficient long-sequence inference [1].

How much training data was used for Qwen3.5-Omni?

Qwen3.5-Omni was trained on a massive dataset comprising heterogeneous text-vision pairs and over 100 million hours of audio-visual content [1].

Glossary

- ARIA: Dynamic alignment technology that synchronizes text and speech units to improve conversational speech stability and prosody

- Audio-Visual Vibe Coding: Capability enabling direct programming and code generation based on audio-visual instructions rather than text

- Hybrid Attention Mixture-of-Experts (MoE): Architecture framework combining attention mechanisms with expert routing for efficient processing of large-scale models

- Omni-modality: Ability to process and understand multiple input modalities including text, audio, and visual content simultaneously

- Thinker and Talker: Architectural components in Qwen3.5-Omni where Thinker processes understanding and Talker handles generation tasks

Sources

- [1] Qwen3.5-Omni Technical Report – arXiv

- [2] Qwen3.5-Omni Technical Report – arXiv HTML

- [3] Paper page – Qwen3.5-Omni Technical Report – Hugging Face

- [4] Qwen3.6-Max-Preview: Smarter, Sharper, Still Evolving – Qwen AI

- [5] Qwen3.5 – How to Run Locally – Unsloth Documentation

- [6] Qwen (Qwen) – Hugging Face

- [7] Qwen3.5 & Qwen3.6 Usage Guide – vLLM Recipes

- [8] Qwen – Wikipedia