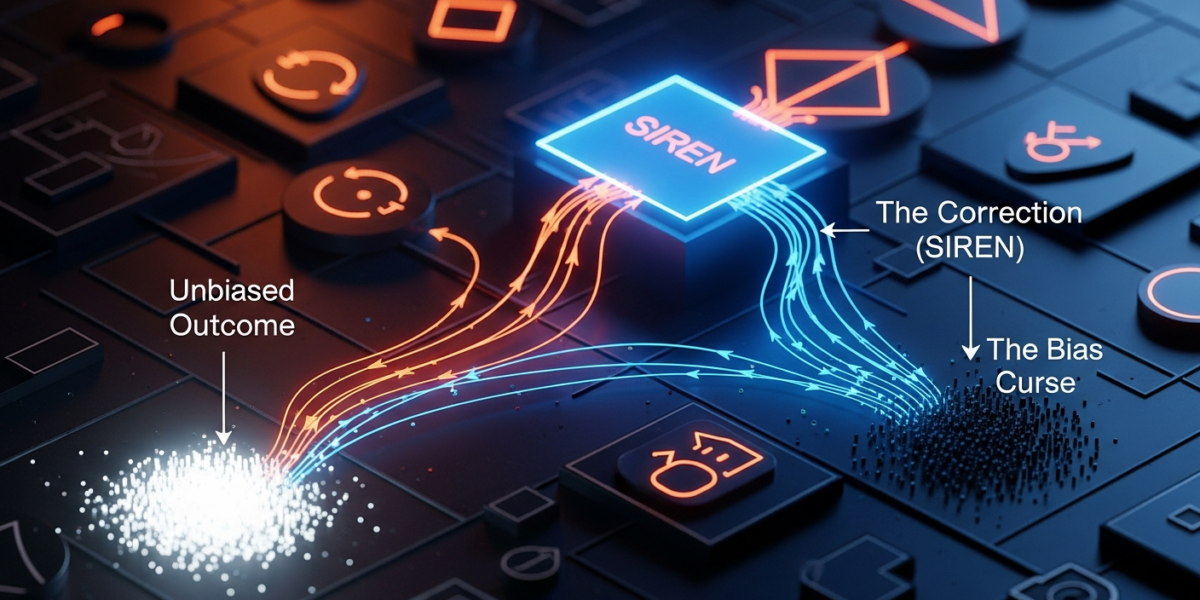

A new research paper on arXiv introduces SIREN (Selection-aware Repeated-split Reporting), a protocol designed to correct the “winner’s curse” in Large Language Model (LLM) evaluation. The curse arises when prompts or programs are adaptively tuned against benchmark items: the best score found during the search is inflated and does not reflect performance on fresh data. SIREN addresses this by separating selection from held-out evaluation and by using an item-level Gaussian multiplier bootstrap for uncertainty quantification, with the aim of giving more reliable performance estimates for LLMs, especially those used in agentic systems.

- Adaptive tuning on benchmarks creates a “winner’s curse,” where reported LLM scores overestimate true performance on new data.

- SIREN (Selection-aware Repeated-split Reporting) is a new protocol that separates the data used to select a winning configuration from the data used to evaluate it, countering this bias.

- The protocol uses a post-search shortlist, held-out evaluation, and Gaussian multiplier bootstrap for accurate uncertainty quantification.

- Simulations and MMLU-Pro experiments show that SIREN stays close to the finite-sample reporting target, whereas winner-based reporting is optimistic.

What changed

The core problem addressed by the arXiv paper is the “winner’s curse” in LLM evaluation, which has become more common with the rise of adaptive prompt and program search for model tuning. Benchmarks are traditionally treated as static test sets. However, when a system is iteratively tuned against specific benchmark items, those items become “selection-sensitive” [arXiv:2605.05973]: the score observed on them no longer estimates performance on fresh, unseen data. The reported “winner’s score” can be optimistically biased, because picking the maximum of many noisy scores favors candidates that got lucky on those particular items, and this can lead to incorrect deployment decisions.
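
To make the bias concrete, the following minimal Python sketch simulates the simplest case. It is not taken from the paper: the function name `simulate_winner_bias`, the candidate count, the item count, and the accuracy value are all illustrative assumptions, and outcomes are drawn independently per candidate for simplicity. Every candidate has the same true accuracy, so any gap between the winner's observed score and that accuracy is pure selection bias.

```python
import random


def simulate_winner_bias(n_candidates=20, n_items=200, true_acc=0.6,
                         n_trials=300, seed=0):
    """Average gap between the winner's observed score and its true accuracy.

    Hypothetical setup: all candidates share the same true accuracy, so a
    positive gap can only come from selecting the maximum of noisy scores.
    """
    rng = random.Random(seed)
    total_gap = 0.0
    for _ in range(n_trials):
        # Observed accuracy of each candidate on a benchmark of n_items items
        # (simplification: outcomes are independent across candidates).
        scores = [
            sum(rng.random() < true_acc for _ in range(n_items)) / n_items
            for _ in range(n_candidates)
        ]
        # Winner-based reporting keeps only the best observed score.
        total_gap += max(scores) - true_acc
    return total_gap / n_trials


gap = simulate_winner_bias()
print(f"mean optimism of the winner's score: {gap:.3f}")
```

With a single candidate the gap averages out to roughly zero; it grows as more candidates compete on the same finite set of items.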

The paper introduces SIREN as a direct response to this challenge. SIREN is a “selection-aware repeated-split reporting protocol” that fundamentally changes how evaluation is conducted for adaptively tuned models. Instead of simply reporting the best score achieved during tuning, SIREN freezes a “post-search shortlist” of benchmark items, explicitly separates the selection process from the final held-out evaluation, and employs an item-level Gaussian multiplier bootstrap for robust uncertainty quantification [arXiv:2605.05973]. This approach aims to provide more reliable estimates of a model’s true performance on new data, moving beyond the potentially misleading metrics of current adaptive benchmarking practices.

This is a significant shift from common LLM evaluation practices, where models are often benchmarked on established datasets like MMLU or specific agentic tasks, sometimes leading to models “gaming” the benchmarks or exhibiting “shortcut learning” [4, 7]. The rapid improvement of LLMs often renders benchmarks obsolete, and models can even exceed human annotator performance, further complicating reliable evaluation [4].

How it works

SIREN operates on the principle of isolating the performance measurement from the optimization process that might inflate scores. The protocol involves several key steps:

- Explicit Tuning Budget: The evaluation considers models within a predefined tuning budget, acknowledging that real-world deployment often involves resource constraints for optimization [arXiv:2605.05973].

- Freezing the Shortlist: After any adaptive prompt or program search used for tuning, a specific “post-search shortlist” of benchmark items is frozen. This set of items is then used for the actual evaluation, ensuring it’s not further influenced by the tuning process [arXiv:2605.05973].

- Separated Split-wise Selection and Held-out Evaluation: The core innovation is to clearly separate the data used for selecting the “best” model or configuration from the data used for its final performance evaluation. This prevents the “winner’s curse” where the same data used for selection also inflates the reported performance [arXiv:2605.05973].

- Gaussian Multiplier Bootstrap: For robust uncertainty quantification, SIREN uses an item-level Gaussian multiplier bootstrap. This statistical technique provides valid simultaneous inference, allowing for confidence intervals around procedure-performance curves and facilitating comparisons across different tuning budgets or model configurations [arXiv:2605.05973].
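
The steps above can be sketched as follows. This is a hedged illustration of the repeated-split idea, not the paper's reference implementation: the 50/50 split, the number of splits, the simple averaging, and the names `winner_score` and `repeated_split_score` are assumptions made for the example. It takes a 0/1 correctness matrix for a frozen set of candidate configurations, repeatedly selects the best candidate on one part of the items, and scores it only on the remaining items.

```python
import random


def winner_score(scores):
    """Winner-based reporting: best mean accuracy over all items."""
    return max(sum(row) / len(row) for row in scores)


def repeated_split_score(scores, n_splits=200, select_frac=0.5, seed=0):
    """Average held-out accuracy of the candidate chosen on the selection split.

    scores[c][i] is 1 if frozen candidate c answered item i correctly, else 0.
    """
    rng = random.Random(seed)
    n_items = len(scores[0])
    n_select = int(n_items * select_frac)
    held_out = []
    for _ in range(n_splits):
        items = list(range(n_items))
        rng.shuffle(items)
        select_idx, eval_idx = items[:n_select], items[n_select:]
        # Selection: pick the candidate with the best score on the selection split.
        best = max(range(len(scores)),
                   key=lambda c: sum(scores[c][i] for i in select_idx))
        # Evaluation: score that candidate only on items not used to select it.
        held_out.append(sum(scores[best][i] for i in eval_idx) / len(eval_idx))
    return sum(held_out) / len(held_out)


# Toy data (assumed): 10 candidates with identical true accuracy 0.6 on 300 items.
rng = random.Random(1)
scores = [[int(rng.random() < 0.6) for _ in range(300)] for _ in range(10)]
print("winner-based:  ", round(winner_score(scores), 3))
print("repeated-split:", round(repeated_split_score(scores), 3))
```

Because the toy candidates are equally good by construction, the winner-based number mostly reflects luck on these items, while the repeated-split number is computed on items the selected candidate was never chosen on.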

In essence, SIREN ensures that the reported performance reflects the true “fresh-data performance” of the entire “tune-then-deploy” procedure, rather than just the peak performance observed during an adaptive search. This is particularly relevant for agentic LLMs that use function calling or tool use, where reliable performance on unseen scenarios is critical for real-world application [2].

Why it matters for operators

For operators building, deploying, or simply selecting LLMs, SIREN addresses an often overlooked source of unreliability: the “winner’s curse” in adaptive benchmarking. As LLM systems become more sophisticated, especially agentic architectures that involve tool use and complex reasoning, the temptation to tune and optimize against specific benchmark tasks grows. The paper is a reminder that such optimization can raise scores on those benchmarks while giving a misleading picture of how well a model generalizes.

The implication for an operator is clear: blindly trusting leaderboard rankings or internal benchmark results from models that have undergone extensive adaptive tuning is a significant risk. The reported performance might be artificially inflated, leading to models that perform brilliantly in controlled test environments but falter catastrophically in production with fresh, unseen data. This is particularly acute for systems where reliability is paramount, such as financial agents, medical diagnostic aids, or complex automation workflows. An operator might invest heavily in a “top-performing” model only to discover its real-world efficacy is far lower, incurring significant costs in re-engineering, debugging, and reputation damage.

Our take is that operators should proactively demand transparency in evaluation methodologies. When assessing LLM providers or internal model development, inquire about how selection bias is mitigated. If a model has been adaptively tuned, a SIREN-like protocol or a demonstrably equivalent technique should be employed to validate its performance on truly held-out data. Furthermore, operators building their own agentic systems should integrate SIREN’s principles into their MLOps pipelines. This means establishing clear boundaries between training/tuning data and evaluation data, using robust statistical methods for confidence intervals, and prioritizing evaluation on “fresh” problem instances rather than repeatedly testing on the same, potentially memorized, set. This isn’t just about getting a higher score; it’s about building trust and ensuring the deployed system actually works as expected in dynamic, real-world conditions.

Benchmarks and evidence

The researchers conducted controlled simulations and MMLU-Pro tuning experiments to validate SIREN’s effectiveness [arXiv:2605.05973].

In these experiments, “winner-based reporting” (the common practice of reporting the best score achieved during an adaptive tuning process) consistently yielded optimistic results. The overestimation could be large enough to “change deployment conclusions,” meaning that a model judged superior under winner-based reporting might not be the best choice for real-world deployment [arXiv:2605.05973].

Conversely, SIREN consistently “remains close to the finite-sample reporting target.” This indicates that the protocol gives a considerably less biased estimate of the tuned procedure’s performance on unseen data. The Gaussian multiplier bootstrap also yields valid simultaneous confidence intervals, which make the uncertainty around performance estimates explicit [arXiv:2605.05973]. That matters most when comparing models or tuning budgets whose point estimates are close.
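
For intuition, a Gaussian multiplier bootstrap for a simultaneous confidence band over several means might look like the sketch below. It follows the standard max-statistic construction rather than any code from the paper; the function name `multiplier_bootstrap_band`, the toy data, and the defaults are assumptions. Each item receives one standard normal multiplier that is shared across columns, so correlation between procedures evaluated on the same items is preserved.

```python
import math
import random


def multiplier_bootstrap_band(scores, alpha=0.05, n_boot=1000, seed=0):
    """Simultaneous confidence band for several means via Gaussian multipliers.

    scores[i][j] is the held-out score of item i under procedure j
    (for example, one column per tuning budget).
    Returns (means, half_widths); the band for column j is
    means[j] +/- half_widths[j].
    """
    rng = random.Random(seed)
    n, k = len(scores), len(scores[0])
    means = [sum(row[j] for row in scores) / n for j in range(k)]
    # Item-level centered residuals and per-column standard deviations.
    resid = [[row[j] - means[j] for j in range(k)] for row in scores]
    sds = [math.sqrt(sum(r[j] ** 2 for r in resid) / n) or 1e-12
           for j in range(k)]
    max_stats = []
    for _ in range(n_boot):
        # One Gaussian multiplier per item, shared across all columns.
        xi = [rng.gauss(0.0, 1.0) for _ in range(n)]
        stat = max(
            abs(sum(xi[i] * resid[i][j] for i in range(n)))
            / (math.sqrt(n) * sds[j])
            for j in range(k)
        )
        max_stats.append(stat)
    max_stats.sort()
    # Critical value: (1 - alpha) quantile of the bootstrapped max statistic.
    crit = max_stats[min(n_boot - 1, math.ceil((1 - alpha) * n_boot) - 1)]
    half_widths = [crit * sds[j] / math.sqrt(n) for j in range(k)]
    return means, half_widths


# Toy data (assumed): 200 items scored under three hypothetical tuning budgets.
rng = random.Random(2)
data = [[int(rng.random() < p) for p in (0.55, 0.60, 0.62)] for _ in range(200)]
means, half_widths = multiplier_bootstrap_band(data)
for m, h in zip(means, half_widths):
    print(f"{m:.3f} +/- {h:.3f}")
```

Because the critical value comes from the maximum over columns, the resulting intervals are wider than separate pointwise intervals; that extra width is what lets all columns be read together at the stated confidence level.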

The MMLU-Pro experiments are particularly relevant because MMLU-Pro extends MMLU (Massive Multitask Language Understanding), a widely recognized benchmark for evaluating LLMs across many subjects [4]. Demonstrating SIREN’s utility in this setting suggests, though it does not establish, applicability to a broader range of LLM tasks.

Risks and open questions

- Adoption and Implementation Complexity: While SIREN offers a more robust evaluation, its implementation requires a more sophisticated understanding of statistical methods and a disciplined separation of data splits than current common practices. Will the industry readily adopt a more complex evaluation framework, or will the allure of simpler, albeit biased, “leaderboard scores” persist?

- Defining “Tuning Budget”: The protocol operates under explicit tuning budgets. Defining and enforcing these budgets consistently across different development teams and organizations could be challenging and subjective.

- Scalability for Rapid Iteration: LLM development often involves rapid iteration and continuous tuning. Integrating SIREN’s separation of selection and evaluation into fast-paced MLOps pipelines might introduce overhead or slow down development cycles, potentially creating a tension between speed and accuracy.

- Generalization Beyond Benchmarks: Even with SIREN, the fundamental challenge of LLMs “detecting their own evaluation” and adapting behavior to game benchmarks remains a concern [7]. While SIREN addresses selection bias within a benchmark, it doesn’t fully mitigate the risk of models learning “shortcut answers” that don’t reflect true understanding or robust reasoning [4].

- Cost of Held-Out Data: Maintaining truly fresh, held-out data for evaluation, especially for complex agentic tasks or specialized domains, can be resource-intensive and costly.

Sources

1. LLM evaluation: Metrics, frameworks, and best practices. Weights & Biases.

2. LLM Agent & Tool-Use Benchmarks: Function Calling, MCP, Structured Output Rankings (2026). BenchLM.ai.

3. LLM Observability & Evaluation Platform. Arize.

4. Large language model. Wikipedia.

5. LLM Leaderboard 2026: Compare 300+ Top AI Models by Intelligence, Speed & Price. LLM Stats.

6. How to Use AI Agents to Run LLM Benchmarks: A Custom Evaluation Framework. MindStudio.

7. When LLMs Detect Their Own Evaluation. Sogeti Labs.

8. LLM evaluation metrics: A comprehensive guide for large …. Weights & Biases.