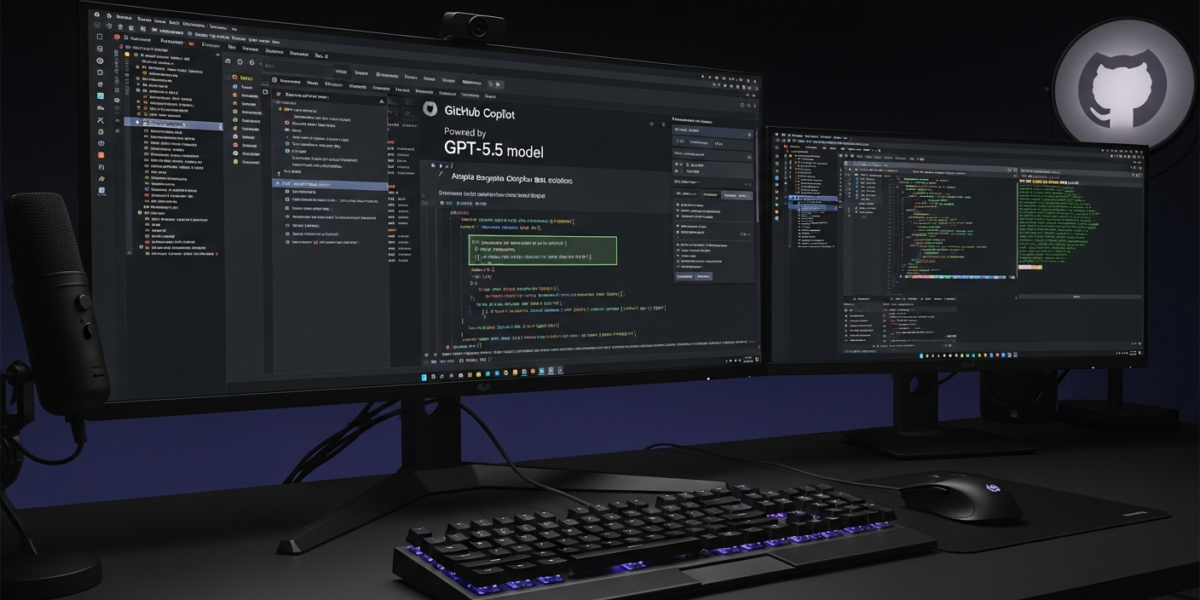

GPT-5.5 is now generally available in GitHub Copilot for Pro+, Business, and Enterprise users as of April 24, 2026, the date of the GitHub Changelog announcement. [1] According to GitHub, OpenAI’s latest model performs better on complex, multi-step agentic coding tasks that previous GPT models couldn’t handle effectively.

| Released by | GitHub |
|---|---|
| Release date | April 24, 2026 (per the GitHub Changelog entry [1]) |
| What it is | OpenAI’s latest GPT model integrated into GitHub Copilot |
| Who it’s for | Copilot Pro+, Business, and Enterprise users |
| Where to get it | GitHub Copilot platform |
| Price | Not yet disclosed |

- GPT-5.5 is rolling out to GitHub Copilot Pro+, Business, and Enterprise subscribers; basic plans are excluded

- The model targets complex, multi-step agentic coding tasks, including real-world problems that stumped previous GPT versions

- GPT-5.5 is presented as OpenAI’s most advanced coding model for enterprise development workflows

- Integration spans Microsoft’s enterprise AI ecosystem, including Microsoft Foundry and Azure OpenAI Service

- Declarative agents can be defined in YAML or written using the GitHub Copilot SDK

- Local deployment, for organizations that need on-premises AI, requires 64 GB of system RAM or two GPUs with 24 GB of VRAM each

What is GPT-5.5

GPT-5.5 is OpenAI’s latest generative pre-trained transformer model specifically optimized for complex coding and agentic tasks. [1] The model builds upon previous GPT architectures to handle multi-step programming challenges that require sustained reasoning across multiple code files and contexts. [5]

GitHub has integrated GPT-5.5 directly into its Copilot platform, making it available to enterprise and professional subscribers. [1] The model supports declarative agent definitions through YAML configuration files and programmatic interfaces via the GitHub Copilot SDK. [3]
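To make the idea of a declarative agent concrete, here is a minimal Python sketch. It does not use the GitHub Copilot SDK or any real YAML schema: the `AgentSpec` fields, the `validate` helper, the model identifier, and the tool names are all hypothetical, chosen only to show that a declarative agent is plain data that can be checked before anything runs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical declarative agent: pure data, no behavior."""
    name: str
    model: str
    instructions: str
    tools: tuple[str, ...] = ()


def validate(spec: AgentSpec) -> list[str]:
    """Return human-readable problems; an empty list means the spec is usable."""
    problems = []
    if not spec.name.strip():
        problems.append("name must not be empty")
    if not spec.model.strip():
        problems.append("model must not be empty")
    if not spec.instructions.strip():
        problems.append("instructions must not be empty")
    if len(set(spec.tools)) != len(spec.tools):
        problems.append("tools must not contain duplicates")
    return problems


# The same information a YAML file would carry, written as plain data.
# The model identifier and tool names below are illustrative assumptions.
reviewer = AgentSpec(
    name="refactor-reviewer",
    model="gpt-5.5",
    instructions="Review multi-file refactors and flag broken imports.",
    tools=("read_file", "search_code"),
)

print(validate(reviewer))  # prints []
```

Keeping the definition free of behavior is what makes it declarative: the same record could be serialized to a configuration file, reviewed in a pull request, and validated in CI.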

What is new vs the previous version

GPT-5.5 introduces significant improvements over GPT-5.1 models in handling complex, multi-step coding workflows. [5]

| Feature | GPT-5.1 | GPT-5.5 |
|---|---|---|
| Multi-step reasoning | Limited context retention | Enhanced agentic task handling |
| Code complexity | Single-file focus | Cross-file project understanding |
| Real-world challenges | Basic problem solving | Complex enterprise scenarios |
| Agent integration | Manual configuration | YAML declarative definitions |
| Context window | Standard capacity | 400K context for Codex integration |

How does GPT-5.5 work

GPT-5.5 is described as processing coding requests in stages, from analyzing the codebase to validating the generated code. [5]

- Context Analysis: The model analyzes entire codebases to understand project structure and dependencies

- Task Decomposition: Complex coding requests are broken down into manageable sub-tasks

- Agentic Reasoning: Each sub-task is processed through specialized reasoning agents

- Code Generation: Solutions are synthesized across multiple files and modules

- Validation Loop: Generated code is checked for consistency and functionality

The model integrates with Microsoft Foundry Agent Service and supports various agent frameworks including LangGraph and Claude Agent SDK. [3]
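The stages listed above (decompose, solve each sub-task, validate) can be illustrated with a small control loop. This is not a description of GPT-5.5’s internals, which the sources do not detail; `run_agentic_task` and the toy helper functions are hypothetical names, and the helpers stand in for model calls so the sketch runs offline.

```python
from typing import Callable


def run_agentic_task(
    request: str,
    decompose: Callable[[str], list[str]],
    solve: Callable[[str], str],
    is_valid: Callable[[str], bool],
    max_attempts: int = 3,
) -> dict[str, str]:
    """Split a request into sub-tasks, solve each, and retry until validation passes."""
    results: dict[str, str] = {}
    for subtask in decompose(request):
        for _ in range(max_attempts):
            candidate = solve(subtask)
            if is_valid(candidate):
                results[subtask] = candidate
                break
        else:
            # The inner loop ended without a valid candidate.
            raise RuntimeError(
                f"no valid result for {subtask!r} after {max_attempts} attempts"
            )
    return results


# Toy stand-ins (assumptions) so the loop can run without any model.
def toy_decompose(request: str) -> list[str]:
    return [part.strip() for part in request.split(";") if part.strip()]


def toy_solve(subtask: str) -> str:
    return f"# TODO: {subtask}"


def toy_is_valid(code: str) -> bool:
    return code.startswith("#")


plan = run_agentic_task(
    "rename helper; update imports", toy_decompose, toy_solve, toy_is_valid
)
print(plan)
```

Passing the three stages in as functions keeps the loop independent of any particular model or agent framework.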

Benchmarks and evidence

GitHub reports that GPT-5.5 delivers stronger performance on complex coding tasks compared to previous models. [1]

| Metric | Performance | Source |
|---|---|---|
| Multi-step task completion | Strongest performance to date | [1] |
| Real-world problem resolution | Handles previously unsolvable challenges | [5] |
| Local deployment capability | Comparable to cloud GPT-4o | [7] |
| Context window capacity | 400K tokens for Codex integration | [8] |

Who should care

Builders

Software developers working on complex, multi-file projects will benefit from GPT-5.5’s enhanced reasoning capabilities. The model’s ability to understand cross-file dependencies makes it valuable for large-scale application development and refactoring tasks.

Enterprise

Organizations with GitHub Copilot Business or Enterprise subscriptions gain access to advanced AI coding assistance. [1] The integration with Microsoft Foundry provides enterprise-ready deployment options with enhanced security and compliance features. [3]

End users

Individual developers with Copilot Pro+ subscriptions can leverage GPT-5.5 for personal projects requiring sophisticated code generation. [1] The model’s improved problem-solving capabilities benefit developers tackling challenging algorithmic or architectural problems.

Investors

The GPT-5.5 rollout represents a significant advancement in AI-assisted development tools. Integration across Microsoft’s ecosystem signals continued investment in enterprise AI capabilities and potential market expansion opportunities.

How to use GPT-5.5 today

GPT-5.5 is accessible through existing GitHub Copilot interfaces for eligible subscribers. [6]

- Verify subscription: Ensure you have Copilot Pro+, Business, or Enterprise access

- Update Copilot: Install the latest GitHub Copilot extension in your IDE

- Enable GPT-5.5: Select GPT-5.5 from the model dropdown in Copilot settings

- Configure agents: Define declarative agents using YAML or GitHub Copilot SDK

- Test complex tasks: Submit multi-step coding challenges to evaluate performance

For local deployment, the repository cited as [7] lists a requirement of 64 GB of system RAM or two GPUs with 24 GB of VRAM each.
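The hardware thresholds quoted from [7] can be written as a simple check. This is a sketch of the stated rule only: the function name and its inputs are hypothetical, it does not probe real hardware, and it reads the GPU condition strictly as "at least two cards with 24 GB each", so a single larger card does not qualify.

```python
MIN_SYSTEM_RAM_GB = 64   # threshold as stated in [7]
MIN_GPU_VRAM_GB = 24     # per-GPU threshold as stated in [7]
MIN_GPU_COUNT = 2        # "dual" GPUs


def meets_local_requirements(system_ram_gb: float, gpu_vram_gb: list[float]) -> bool:
    """Return True if either the RAM rule or the dual-GPU rule is satisfied."""
    enough_ram = system_ram_gb >= MIN_SYSTEM_RAM_GB
    qualifying_gpus = [vram for vram in gpu_vram_gb if vram >= MIN_GPU_VRAM_GB]
    return enough_ram or len(qualifying_gpus) >= MIN_GPU_COUNT


print(meets_local_requirements(64, []))        # True: RAM rule
print(meets_local_requirements(32, [24, 24]))  # True: dual-GPU rule
print(meets_local_requirements(32, [24]))      # False: only one qualifying GPU
```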

GPT-5.5 vs competitors

GPT-5.5 competes with other advanced AI coding models in the enterprise development space.

| Feature | GPT-5.5 | Claude Opus 4.7 | GitHub Copilot (GPT-4) |
|---|---|---|---|
| Multi-step reasoning | Advanced agentic capabilities | Strong analytical performance | Basic code completion |
| Context window | 400K tokens (Codex) | Not yet disclosed | 8K-32K tokens |
| Enterprise integration | Microsoft Foundry, Azure | Anthropic API | GitHub native |
| Local deployment | 64 GB RAM requirement | Not yet disclosed | Cloud-only |
| Pricing model | Tier-based access | Usage-based API | Subscription plans |

Risks, limits, and myths

- Hardware requirements: Local deployment demands significant computational resources, with a stated minimum of 64 GB of system RAM or two GPUs with 24 GB of VRAM each [7]

- Subscription limitations: Access is restricted to premium GitHub Copilot tiers, excluding basic users

- Model complexity: Advanced features may require additional configuration and technical expertise

- Performance variability: Results may vary based on code complexity and project structure

- Integration dependencies: Full functionality requires compatible development environments and toolchains

- Cost considerations: Premium tier requirements may increase development tool expenses for organizations

FAQ

What GitHub Copilot plans include GPT-5.5 access?

GPT-5.5 is available to Copilot Pro+, Copilot Business, and Copilot Enterprise users. [1] Basic Copilot plans do not include access to the GPT-5.5 model.

When did GPT-5.5 become available in GitHub Copilot?

GPT-5.5 became generally available in GitHub Copilot on April 24, 2026, the date of the GitHub Changelog announcement. [1] The rollout began for eligible subscribers on that date.

How does GPT-5.5 differ from GPT-5.1 in coding tasks?

GPT-5.5 delivers enhanced performance on complex, multi-step agentic coding tasks that GPT-5.1 models couldn’t handle effectively. [5] It resolves real-world challenges beyond previous GPT capabilities.

Can I run GPT-5.5 locally for development work?

According to the repository cited as [7], yes. It lists a requirement of 64 GB of system RAM or two graphics cards with 24 GB of VRAM each, such as the RTX 3090 or RTX 4090. [7] Mac users need 64 GB or more of unified memory for local operation.

What agent frameworks work with GPT-5.5 in Copilot?

GPT-5.5 supports Microsoft Agent Framework, GitHub Copilot SDK, LangGraph, Claude Agent SDK, and OpenAI Agents. [3] Declarative agents can be defined in YAML configuration files.

Does GPT-5.5 integrate with Microsoft Azure services?

Yes, GPT-5.5 integrates with Microsoft Foundry and Azure OpenAI Service for enterprise deployments. [3] This provides enterprise-ready AI capabilities on Microsoft’s cloud platform.

What context window size does GPT-5.5 support?

GPT-5.5 Codex integration supports a 400K token context window for comprehensive code analysis. [8] This enables understanding of large codebases and complex project structures.
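A rough way to reason about a 400K-token window is to estimate whether a set of files fits in it. The sketch below assumes about 4 characters per token, which is a common rule of thumb and not a figure from the sources; real token counts depend on the tokenizer and the content. The function names and the `reserved_for_output` parameter are hypothetical.

```python
CONTEXT_WINDOW_TOKENS = 400_000  # figure reported in [8]
CHARS_PER_TOKEN = 4              # rough heuristic (assumption), not a real tokenizer


def estimate_tokens(text: str) -> int:
    """Estimate the token count by ceiling-dividing the character count."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(files: dict[str, str], reserved_for_output: int = 0) -> bool:
    """Return True if the estimated tokens plus the reserved budget fit the window."""
    total = sum(estimate_tokens(body) for body in files.values())
    return total + reserved_for_output <= CONTEXT_WINDOW_TOKENS


project = {
    "app.py": "print('hello')\n" * 1_000,
    "util.py": "def helper():\n    return 1\n" * 500,
}
print(fits_in_context(project, reserved_for_output=8_000))  # True
```

Reserving part of the window for the response matters in practice, because the prompt and the generated output share the same budget.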

Is GPT-5.5 available through GitHub Copilot API?

Source [8] reports that GPT-5.5 is available through OpenAI’s Responses API and Chat Completions API, with batch and flex pricing options. [8] These are OpenAI API endpoints rather than a GitHub Copilot API. Priority processing is available at 2.5x standard rates.
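The 2.5x priority multiplier reported in [8] translates into a one-line calculation. The standard cost used below is a made-up figure for illustration, since the sources give no base rate, and `priority_cost` is a hypothetical helper name.

```python
from decimal import Decimal

PRIORITY_MULTIPLIER = Decimal("2.5")  # multiplier reported in [8]


def priority_cost(standard_cost: Decimal) -> Decimal:
    """Return the cost of the same workload under priority processing."""
    if standard_cost < 0:
        raise ValueError("standard_cost must not be negative")
    return standard_cost * PRIORITY_MULTIPLIER


# Hypothetical workload that would cost 10.00 at standard rates.
print(priority_cost(Decimal("10.00")))
```

`Decimal` is used instead of `float` so that currency amounts do not pick up binary rounding errors.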

How much does GPT-5.5 access cost in GitHub Copilot?

Specific pricing for GPT-5.5 access has not yet been disclosed by GitHub. [1] Access is included with Pro+, Business, and Enterprise subscription tiers.

What coding languages does GPT-5.5 support best?

Specific language support details for GPT-5.5 have not yet been disclosed in available sources. The model is designed for complex coding tasks across multiple programming paradigms.

Glossary

- Agentic tasks: Complex AI operations that require multi-step reasoning and autonomous decision-making capabilities

- Declarative agents: AI agents defined through configuration files (like YAML) rather than imperative programming code

- Context window: The maximum amount of text (measured in tokens) that an AI model can process in a single request

- GitHub Copilot SDK: Software development kit for building custom integrations and agents with GitHub Copilot

- Microsoft Foundry: Enterprise AI platform providing hosted agent services and model deployment capabilities

- Multi-step reasoning: AI capability to break down complex problems into sequential steps and maintain context across each step

- VRAM: Video Random Access Memory, the specialized memory on graphics cards used for AI model processing

Sources

- [1] GPT-5.5 is generally available for GitHub Copilot – GitHub Changelog. https://github.blog/changelog/2026-04-24-gpt-5-5-is-generally-available-for-github-copilot/

- [2] r/GithubCopilot on Reddit: What are you expecting from a GPT-5.5 rollout in GitHub Copilot? https://www.reddit.com/r/GithubCopilot/comments/1sshlhb/what_are_you_expecting_from_a_gpt55_rollout_in/

- [3] OpenAI’s GPT-5.5 in Microsoft Foundry: Frontier intelligence on an enterprise ready platform | Microsoft Azure Blog. https://azure.microsoft.com/en-us/blog/openais-gpt-5-5-in-microsoft-foundry-frontier-intelligence-on-an-enterprise-ready-platform/

- [4] r/GithubCopilot on Reddit: ChatGPT 5.5 Released! https://www.reddit.com/r/GithubCopilot/comments/1stqi0r/chatgpt_55_released/

- [5] GPT-5.5 is generally available for GitHub Copilot | daily.dev. https://app.daily.dev/posts/gpt-5-5-is-generally-available-for-github-copilot-ju7haynwg

- [6] GPT 5.5 availability in GitHub Copilot · community · Discussion #193843. https://github.com/orgs/community/discussions/193843

- [7] GitHub – GPT-5-5/GPT-5.5: Run GPT-5.5 locally with zero dependencies. https://github.com/GPT-5-5/GPT-5.5

- [8] GPT-5.5 vs Claude Opus 4.7: Benchmarks, Pricing & Coding Compared | Lushbinary. https://lushbinary.com/blog/gpt-5-5-vs-claude-opus-4-7-comparison-benchmarks-pricing/