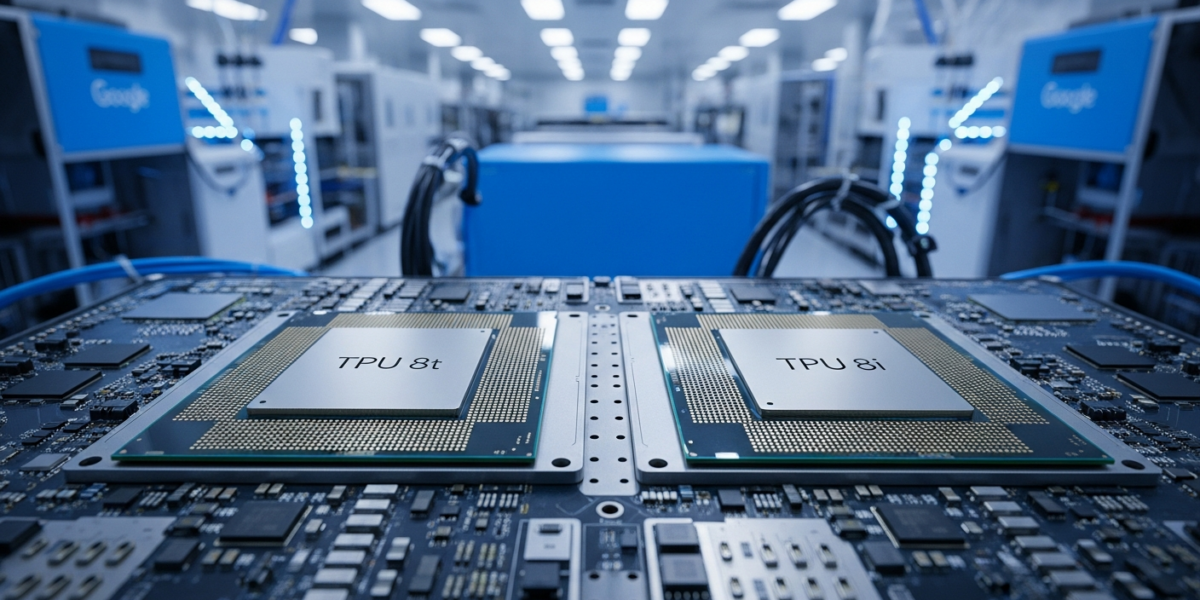

Google announced TPU 8t and TPU 8i, the eighth generation of its custom AI chips. The two processors use separate architectures, one optimized for training and one for inference, and target what Google calls the agentic era of artificial intelligence.

| Released by | Google Cloud |
|---|---|
| Release date | |
| What it is | Eighth-generation TPUs with separate training and inference processors |
| Who it is for | AI developers and enterprises building agent systems |
| Where to get it | Google Cloud |
| Price | Not yet disclosed |

- Google announced TPU 8t and TPU 8i, splitting its eighth-generation TPU into two specialized processors for the first time

- TPU 8t targets training of massive AI models, while TPU 8i is optimized for inference and real-time serving

- Both chips support existing frameworks, including JAX, MaxText, PyTorch, and SGLang, so third-party developers can keep their current tooling

- The split addresses the diverging infrastructure requirements of pre-training, post-training, and real-time serving for agent systems

- Google positions the TPUs as purpose-built for the agentic era, powering both Gemini-based agents and third-party developer applications

What are TPU 8t and TPU 8i

TPU 8t and TPU 8i are Google’s eighth-generation tensor processing units, designed as separate processors for training and inference workloads respectively. Google Cloud announced these specialized AI chips to address the distinct computational demands of AI agent systems [1].

The TPU 8t focuses on training massive AI models that power intelligent agents. This processor handles the computationally intensive pre-training and post-training phases required to develop sophisticated AI systems [8].

The TPU 8i optimizes real-time inference and serving workloads for deployed AI agents. This chip delivers the low-latency responses needed when AI agents interact with users and systems in production environments [6].

Both processors support existing AI frameworks including JAX, MaxText, PyTorch, and SGLang. This compatibility ensures third-party developers can integrate the new TPUs without rewriting their existing codebases [4].

What is new vs the previous version

The eighth generation introduces specialized architectures for the first time, splitting training and inference into separate processors.

| Feature | Previous TPU Generations | TPU 8t/8i |
|---|---|---|
| Architecture | Single unified processor design | Two specialized processors for training and inference |
| Target workloads | General-purpose AI computation | Agentic era applications with distinct training/serving needs |
| Optimization focus | Balanced performance across tasks | Task-specific optimization for training (8t) and inference (8i) |
| Framework support | Standard AI frameworks | Enhanced support for JAX, MaxText, PyTorch, SGLang |

How do TPU 8t and TPU 8i work

The TPU 8t and TPU 8i operate through distinct architectural approaches optimized for their respective computational tasks.

- TPU 8t training optimization: The processor accelerates matrix operations and gradient computations required during model training phases

- TPU 8i inference specialization: The chip prioritizes low-latency tensor operations needed for real-time AI agent responses

- Framework integration: Both processors interface with popular AI frameworks through optimized drivers and libraries

- Workload distribution: Training jobs are meant to run on TPU 8t and inference requests on TPU 8i; how Google Cloud assigns workloads to each chip has not been detailed
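The contrast between training and inference in the bullets above can be illustrated with a minimal pure-Python sketch. It involves no TPU or framework API: the one-parameter linear model, the function names (`predict`, `grad`, `train_step`), and the learning rate are all illustrative assumptions. The point is only that a training step needs a forward pass, a gradient, and a parameter update, while inference is a forward pass alone.

```python
# Illustrative only: a tiny linear model y = w * x + b in plain Python.
# Nothing here is TPU-specific; all names and values are hypothetical.

def predict(params, x):
    """Inference: forward pass only."""
    w, b = params
    return w * x + b

def loss(params, batch):
    """Mean squared error over (x, y) pairs."""
    return sum((predict(params, x) - y) ** 2 for x, y in batch) / len(batch)

def grad(params, batch):
    """Analytic gradient of the mean squared error with respect to w and b."""
    n = len(batch)
    dw = sum(2 * (predict(params, x) - y) * x for x, y in batch) / n
    db = sum(2 * (predict(params, x) - y) for x, y in batch) / n
    return dw, db

def train_step(params, batch, lr=0.05):
    """Training: forward pass, gradient, then a parameter update."""
    dw, db = grad(params, batch)
    w, b = params
    return (w - lr * dw, b - lr * db)

batch = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]  # samples of y = 2x + 1
params = (0.0, 0.0)
initial_loss = loss(params, batch)
for _ in range(500):
    params = train_step(params, batch)
final_loss = loss(params, batch)

print(f"loss before: {initial_loss:.3f}, after: {final_loss:.3g}")
print(f"prediction for x=4: {predict(params, 4.0):.3f}")
```

In a real framework such as JAX or PyTorch, the gradient would come from automatic differentiation rather than a hand-written `grad`, and the same split between a heavy training loop and a lightweight `predict` call would apply at much larger scale.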

Benchmarks and evidence

Google has not yet disclosed specific performance benchmarks for TPU 8t and TPU 8i processors.

| Metric | TPU 8t | TPU 8i | Source |
|---|---|---|---|
| Training throughput | Not yet disclosed | Not applicable | [1] |
| Inference latency | Not applicable | Not yet disclosed | [1] |
| Power efficiency | Not yet disclosed | Not yet disclosed | [1] |
| Memory bandwidth | Not yet disclosed | Not yet disclosed | [1] |

Who should care

Builders

AI developers building agent systems benefit from specialized processors that optimize training and inference workloads separately. The TPU 8t accelerates model development cycles while TPU 8i ensures responsive agent interactions in production [4].

Machine learning engineers gain access to processors designed specifically for the computational patterns of AI agents. Both TPUs support existing frameworks, minimizing migration effort from current development workflows [4].

Enterprise

Companies deploying AI agent systems can optimize costs by using TPU 8t for periodic model training and TPU 8i for continuous inference serving. This separation allows more efficient resource allocation across different phases of AI system operation [8].
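The reasoning behind that split can be sketched as simple arithmetic. Because pricing is undisclosed, the example below uses a normalized rate of 1.0 per chip-hour, so results are in chip-hours rather than currency. The `Pool` class, the chip counts, and the monthly hours are hypothetical placeholders, not Google figures.

```python
from dataclasses import dataclass

@dataclass
class Pool:
    """A pool of accelerators billed per chip-hour (all values are placeholders)."""
    chips: int
    hours_per_month: float
    rate_per_chip_hour: float = 1.0  # normalized; real TPU 8t/8i pricing is undisclosed

    def monthly_cost(self) -> float:
        return self.chips * self.hours_per_month * self.rate_per_chip_hour

# Periodic training: a large pool used for a short burst each month.
training = Pool(chips=64, hours_per_month=48)
# Continuous serving: a small pool running around the clock (30 days * 24 hours).
serving = Pool(chips=4, hours_per_month=720)

total = training.monthly_cost() + serving.monthly_cost()
serving_share = serving.monthly_cost() / total

print(f"training: {training.monthly_cost():.0f} chip-hours")
print(f"serving:  {serving.monthly_cost():.0f} chip-hours")
print(f"serving share of total: {serving_share:.1%}")
```

Swapping in real rates once they are published would show whether a bursty training pool or an always-on serving pool dominates a given budget.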

Organizations building custom AI agents gain access to Google’s latest tensor processing technology. The processors support third-party frameworks, enabling integration with existing enterprise AI infrastructure [4].

End users

Users of AI agent applications may experience improved response times and capabilities as developers leverage TPU 8i’s inference optimizations. The specialized architecture enables more sophisticated real-time AI interactions [6].

Investors

The TPU 8t and TPU 8i represent Google’s strategic response to the growing AI agent market. This specialized approach differentiates Google Cloud’s AI infrastructure offerings in an increasingly competitive landscape [7].

How to use TPU 8t and TPU 8i today

Google has not yet disclosed specific availability details or access procedures for TPU 8t and TPU 8i processors.

- Monitor Google Cloud announcements: Watch for availability updates through official Google Cloud channels

- Prepare existing frameworks: Ensure your AI projects use supported frameworks like JAX, MaxText, PyTorch, or SGLang

- Review current TPU usage: Analyze your training and inference workloads to identify optimization opportunities

- Contact Google Cloud sales: Inquire about early access programs or beta testing opportunities
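One hedged way to start the workload review suggested above is to record how an existing serving call behaves under repeated requests. The sketch below uses only the Python standard library. `latency_profile` and the stand-in `fake_model` are hypothetical names, and `fake_model` should be replaced with a real inference call.

```python
import statistics
import time

def latency_profile(fn, requests, warmup=3):
    """Time fn on each request and return median and 99th-percentile latency in ms."""
    for r in requests[:warmup]:
        fn(r)  # warm-up calls are not recorded
    samples = []
    for r in requests:
        start = time.perf_counter()
        fn(r)
        samples.append((time.perf_counter() - start) * 1000.0)
    # quantiles(n=100) returns 99 cut points; index 98 is the 99th percentile.
    cuts = statistics.quantiles(samples, n=100, method="inclusive")
    return {
        "count": len(samples),
        "p50_ms": statistics.median(samples),
        "p99_ms": cuts[98],
    }

def fake_model(prompt):
    """Stand-in for a real inference call; replace with your own."""
    return sum(ord(c) for c in prompt) % 97

profile = latency_profile(fake_model, ["hello agent"] * 200)
print(profile)
```

A large gap between the median and the 99th percentile suggests a latency-sensitive serving workload, which is the kind the article associates with TPU 8i. Long-running batch jobs would be profiled by throughput instead.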

TPU 8t/8i vs competitors

Google’s specialized training and inference processors compete against unified AI chip architectures from other vendors.

| Feature | Google TPU 8t/8i | NVIDIA H100 | AMD MI300X |
|---|---|---|---|
| Architecture approach | Separate training and inference processors | Unified GPU architecture | Unified GPU architecture |
| Specialization | Task-specific optimization | General-purpose AI acceleration | General-purpose AI acceleration |
| Framework support | JAX, MaxText, PyTorch, SGLang | CUDA ecosystem, PyTorch, TensorFlow | ROCm, PyTorch, TensorFlow |
| Target workloads | AI agent systems | Large language models, general AI | Large language models, HPC |

Risks, limits, and myths

- Vendor lock-in risk: TPU-optimized code may not transfer easily to other AI accelerators

- Framework limitations: Performance benefits may vary significantly across different AI frameworks

- Cost uncertainty: Pricing structure for separate training and inference processors remains undisclosed

- Availability constraints: Google has not announced widespread availability timelines for either processor

- Myth – Universal superiority: Specialized processors excel in target workloads but may underperform in general-purpose tasks

- Myth – Immediate availability: The chips have been announced, but availability dates are undisclosed, and they are offered only through Google Cloud infrastructure rather than as standalone products

FAQ

- What is the difference between TPU 8t and TPU 8i?

  - TPU 8t specializes in training AI models, while TPU 8i is optimized for inference and real-time serving workloads for AI agents.

- When will TPU 8t and TPU 8i be available?

  - Google has announced the processors but has not disclosed specific availability dates.

- What frameworks work with Google’s eighth-generation TPUs?

  - Both TPU 8t and TPU 8i support the JAX, MaxText, PyTorch, and SGLang frameworks.

- How much do TPU 8t and TPU 8i cost?

  - Google has not yet disclosed pricing for either TPU 8t or TPU 8i.

- Can I use TPU 8t for inference workloads?

  - While possible, TPU 8t is optimized for training tasks, and TPU 8i delivers better performance for inference workloads.

- What makes these TPUs designed for the agentic era?

  - The processors address the distinct computational requirements of AI agent systems, which need both intensive training and responsive real-time inference.

- Are TPU 8t and TPU 8i available outside Google Cloud?

  - No, both processors are custom Google chips available exclusively through Google Cloud infrastructure services.

- How do TPU 8t and TPU 8i compare to NVIDIA H100 chips?

  - Google’s TPUs use separate specialized architectures for training and inference, while the NVIDIA H100 provides unified GPU acceleration across all AI workloads.

- What AI applications benefit most from TPU 8t and TPU 8i?

  - AI agent systems, conversational AI, and applications that require both model training and real-time inference benefit most from these specialized processors.

- Do I need both TPU 8t and TPU 8i for my AI project?

  - Projects that involve both model development and production deployment benefit from using TPU 8t for training and TPU 8i for serving.

Glossary

- Agentic era: The current phase of AI development focused on autonomous agents that can perform complex tasks and make decisions independently

- Inference: The process of using a trained AI model to make predictions or generate outputs from new input data

- JAX: Google’s machine learning framework that provides automatic differentiation and just-in-time compilation for numerical computing

- MaxText: Google’s framework for training large language models efficiently on TPU hardware

- Post-training: The phase after initial model training that includes fine-tuning, alignment, and optimization for specific tasks

- Pre-training: The initial phase of training large AI models on vast datasets to learn general patterns and representations

- SGLang: A structured generation language framework for efficient serving of large language models

- Tensor Processing Unit (TPU): Google’s custom application-specific integrated circuit designed specifically for machine learning workloads

Sources

1. Our eighth generation TPUs: two chips for the agentic era - https://blog.google/innovation-and-ai/infrastructure-and-cloud/google-cloud/eighth-generation-tpu-agentic-era/

2. We’re launching two specialized TPUs for the agentic era. - https://blog.google/innovation-and-ai/infrastructure-and-cloud/google-cloud/tpus-8t-8i-cloud-next/

3. Google Cloud Next 2026: News and updates - https://blog.google/innovation-and-ai/infrastructure-and-cloud/google-cloud/next-2026/

4. Google unveils two new TPUs designed for the “agentic era” – Ars Technica - https://arstechnica.com/ai/2026/04/google-unveils-two-new-tpus-designed-for-the-agentic-era/

5. Google Cloud Unveils 8th-Gen TPUs: Specialized Architectures for the Agentic Era - https://www.c-sharpcorner.com/news/google-cloud-unveils-8thgen-tpus-specialized-architectures-for-the-agentic-era

6. Google Unveils Two New AI Chips For the ‘Agentic Era’ – Slashdot - https://tech.slashdot.org/story/26/04/22/1746252/google-unveils-two-new-ai-chips-for-the-agentic-era

7. In Latest Shot At Nvidia, Google Unveils Two Chips For The Agentic Era | ZeroHedge - https://www.zerohedge.com/ai/google-unveils-two-chips-agentic-era

8. TPU 8t and TPU 8i technical deep dive | Google Cloud Blog - https://cloud.google.com/blog/products/compute/tpu-8t-and-tpu-8i-technical-deep-dive