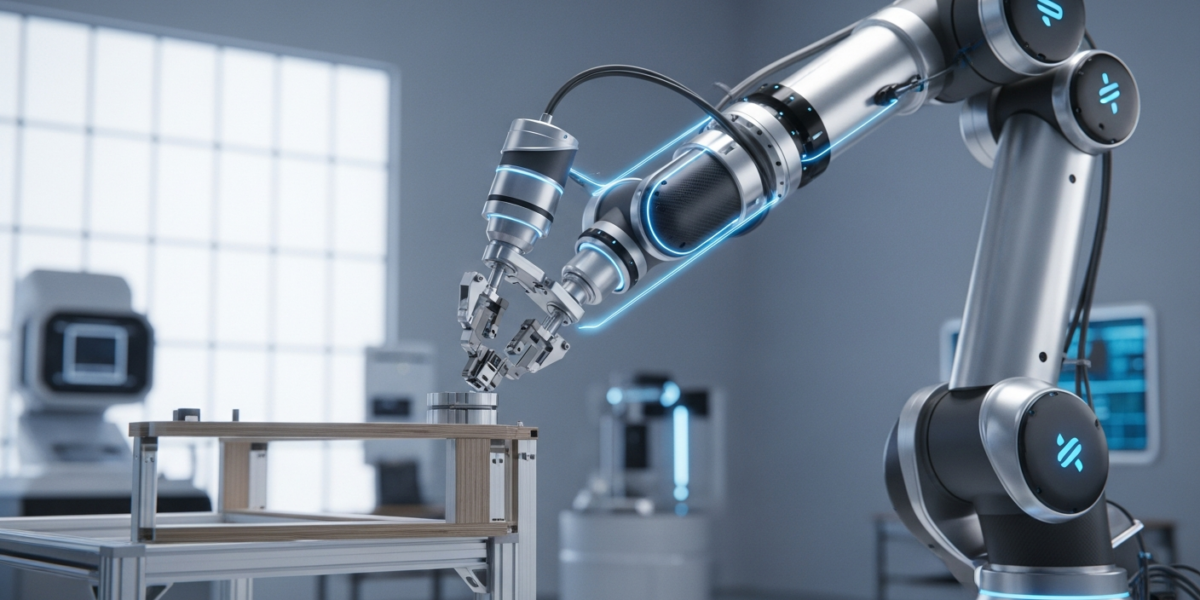

HDFlow, a new hierarchical planning framework, addresses critical limitations in AI-driven robot planning for complex, long-horizon tasks. It combines a high-level diffusion model that generates sequences of subgoals with a low-level rectified flow model for rapid, smooth trajectory execution. This hybrid approach aims to overcome the computational demands and lack of principled hierarchical decomposition often seen in single-paradigm generative planners, offering a more efficient and robust solution for tasks like robotic assembly and navigation.

- HDFlow uses a two-tier AI architecture: diffusion for strategic high-level subgoal planning and rectified flow for efficient low-level trajectory generation.

- It tackles the computational inefficiency of iterative denoising in traditional diffusion models for real-time robotic control.

- The framework has been validated on challenging furniture assembly tasks in both simulation and real-world scenarios, outperforming existing state-of-the-art methods.

- HDFlow demonstrates generalizability across diverse long-horizon locomotion and manipulation benchmarks.

What changed

Prior generative models, particularly those based on diffusion, have shown promise in generating behavior plans for robots tackling long-horizon tasks with sparse rewards. However, these methods often struggle with two key issues: they lack an inherent hierarchical decomposition, and their iterative denoising makes them computationally expensive, which hinders real-time execution. Structured variants like Hierarchical Diffusion for Offline Decision Making and Potential-Based Diffusion Motion Planning have attempted to introduce hierarchy or compose constraints, whereas HDFlow pairs two different generative model families, one per level of the hierarchy [1].
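To make the cost of iterative denoising concrete, here is a minimal sketch of a deterministic DDIM-style sampler, written under loud assumptions. The "denoiser" is an oracle that already knows the target sample, standing in for a trained network, and the names `ddim_sample`, `alpha_bar`, and the linear noise schedule are illustrative choices, not anything taken from HDFlow. The point is only that each denoising step costs one denoiser call.

```python
import numpy as np

def ddim_sample(target, num_steps=50, seed=0):
    """Deterministic DDIM-style sampling toward a single known target.

    The denoiser is an oracle that knows the target (an assumption made
    for illustration). Returns the sample and the number of denoiser calls.
    """
    rng = np.random.default_rng(seed)
    target = np.asarray(target, dtype=float)

    # Cumulative signal level: 1.0 (clean) at index 0,
    # close to 0 (almost pure noise) at index num_steps.
    alpha_bar = np.linspace(1.0, 1e-4, num_steps + 1)

    x = rng.standard_normal(target.shape)  # start from Gaussian noise
    calls = 0
    for k in range(num_steps, 0, -1):
        a_k, a_prev = alpha_bar[k], alpha_bar[k - 1]
        # Oracle noise prediction; a trained network would go here.
        eps = (x - np.sqrt(a_k) * target) / np.sqrt(1.0 - a_k)
        calls += 1
        # Estimate the clean sample, then move to the next noise level.
        x0_hat = (x - np.sqrt(1.0 - a_k) * eps) / np.sqrt(a_k)
        x = np.sqrt(a_prev) * x0_hat + np.sqrt(1.0 - a_prev) * eps
    return x, calls
```

Calling `ddim_sample([1.0, -2.0], num_steps=50)` performs 50 denoiser calls before returning. With a real network, each of those calls is a full forward pass, which is where the latency concern for real-time control comes from.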

HDFlow splits the planner in two. Instead of a single generative model attempting to solve the entire problem, it delegates strategic, high-level planning to a diffusion model and the rapid, precise execution of those plans to a rectified flow model. This separation of concerns lets the system use the exploratory power of diffusion for abstract problem-solving and the speed of rectified flow, which generates trajectories by solving an ordinary differential equation (ODE). Compared with monolithic generative planners, the dual-model approach offers a more principled framework for hierarchical decomposition and improved computational performance.

How it works

HDFlow operates on a hierarchical principle, breaking down complex, long-horizon tasks into manageable stages. The framework consists of two primary components:

- High-level Diffusion Planner: This component is responsible for generating a sequence of strategic subgoals. It operates in a learned latent space, allowing it to abstract away low-level details and focus on the overarching task structure. Diffusion models are adept at generating diverse and high-quality samples by iteratively denoising a random input towards a desired distribution. In HDFlow, this capability is leveraged to explore potential sequences of intermediate states or actions that guide the robot towards the final objective. This is crucial for tasks that require significant strategic foresight, such as disassembling a complex machine or navigating an unknown environment [2].

- Low-level Rectified Flow Planner: Once the high-level planner provides a subgoal, the low-level rectified flow planner takes over. Rectified flow models, related to flow matching models, are known for their ability to generate trajectories efficiently by solving ordinary differential equations (ODEs) [5]. This means they can produce smooth, dense, and executable paths between the robot’s current state and the specified subgoal much faster than iterative denoising processes. This efficiency is critical for real-time robotic control, where rapid response to environmental changes and precise movement generation are paramount.
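The ODE-based generation described for the low-level planner can be sketched with a few Euler steps. This is a toy under stated assumptions: the `velocity` function is a closed-form stand-in for a trained network, valid only for a single known subgoal on a straight-line path, and `integrate_flow` and `num_steps` are hypothetical names rather than part of HDFlow.

```python
import numpy as np

def velocity(x, t, subgoal):
    """Stand-in velocity field for a single known subgoal.

    For the straight-line path x_t = (1 - t) * x_0 + t * x_1, the velocity
    that carries x to x_1 by t = 1 is (x_1 - x) / (1 - t). A trained
    network would replace this closed-form expression.
    """
    return (subgoal - x) / (1.0 - t)

def integrate_flow(state, subgoal, num_steps=4):
    """Euler-integrate dx/dt = velocity(x, t) from t = 0 to t = 1."""
    x = np.asarray(state, dtype=float)
    subgoal = np.asarray(subgoal, dtype=float)
    dt = 1.0 / num_steps
    path = [x.copy()]
    for k in range(num_steps):
        t = k * dt  # t stays below 1, so the division above is safe
        x = x + dt * velocity(x, t, subgoal)
        path.append(x.copy())
    return np.stack(path)
```

Because the stand-in field is constant along a straight path, even a handful of Euler steps lands on the subgoal. A learned field is only approximately straight, so the step count becomes a trade-off between speed and accuracy.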

The interplay between these two planners is key. The diffusion model provides the “what to do next” at a strategic level, while the rectified flow model provides the “how to do it” at an operational level. This allows HDFlow to maintain a global understanding of the task while executing local movements with high fidelity and speed. The learned latent space for subgoal generation enables the high-level planner to operate on a compressed, more abstract representation of the environment, further enhancing efficiency.
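The division of labor between the two planners can be sketched as a simple control loop. Both planners here are placeholders: `propose_subgoals` returns evenly spaced waypoints where a diffusion model would sample subgoals, and `connect` returns a straight segment where a rectified flow model would generate a trajectory. All function names and parameters are hypothetical.

```python
import numpy as np

def propose_subgoals(start, goal, num_subgoals):
    """Placeholder high-level planner: evenly spaced subgoals ending at the goal."""
    fractions = np.linspace(0.0, 1.0, num_subgoals + 1)[1:]
    return [start + f * (goal - start) for f in fractions]

def connect(state, subgoal, num_points):
    """Placeholder low-level planner: dense straight segment, excluding `state`."""
    fractions = np.linspace(0.0, 1.0, num_points + 1)[1:]
    return [state + f * (subgoal - state) for f in fractions]

def plan(start, goal, num_subgoals=3, points_per_segment=5):
    """Stitch low-level segments between consecutive high-level subgoals."""
    start = np.asarray(start, dtype=float)
    goal = np.asarray(goal, dtype=float)
    trajectory = [start]
    state = start
    for subgoal in propose_subgoals(start, goal, num_subgoals):
        segment = connect(state, subgoal, points_per_segment)
        trajectory.extend(segment)
        state = segment[-1]  # the next segment starts where this one ended
    return np.stack(trajectory)
```

The structure is the part that carries over: the high-level call happens once per plan, while the low-level call happens once per subgoal, so the cheaper model is the one invoked most often.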

Why it matters for operators

For operators in robotics, manufacturing, and automation, HDFlow represents a tangible step towards more robust and deployable AI-driven systems for complex tasks. The core challenge in long-horizon robotics is bridging the gap between high-level strategic planning and low-level motor control. Current generative AI approaches, while powerful, often fall short on either computational efficiency for real-time deployment or the ability to naturally decompose multi-step problems.

HDFlow’s hierarchical design directly addresses these pain points. By separating strategic subgoal generation (diffusion) from rapid trajectory execution (rectified flow), it offers a blueprint for systems that can both think abstractly and act decisively. This means:

- Faster Development Cycles: Engineers can potentially train and fine-tune high-level strategic policies independently of low-level motion primitives, or vice-versa. This modularity could significantly reduce the iteration time for developing new robotic applications, especially in areas like custom assembly or dynamic logistics.

- Improved Real-Time Performance: The use of ODE-based rectified flow for low-level planning is a critical detail. It implies faster inference times compared to iterative denoising, which is essential for robots operating in dynamic environments where milliseconds matter. This could unlock new applications in high-speed sorting, adaptive manufacturing, or human-robot collaboration where latency is a bottleneck.

- Enhanced Generalizability: The demonstration of HDFlow’s performance on diverse tasks, from furniture assembly to locomotion and manipulation, suggests a path towards more general-purpose robot intelligence. Operators currently face a landscape of highly specialized AI models; a framework that can adapt to different task domains without extensive re-engineering offers a significant competitive advantage.

However, operators should treat the “generalizability” claim with caution until its scope is clear. While HDFlow performs well across diverse benchmarks, real-world deployment often introduces unforeseen edge cases and domain shifts, and the true test will be its performance in novel, unstructured environments beyond the training data. The immediate actionable insight is to explore how this hierarchical decomposition could be applied to existing, computationally intensive planning problems within your operations, particularly those involving multi-stage physical manipulation or navigation. Consider pilot projects that use this dual-model thinking to offload complex strategic decisions from real-time execution engines.

Benchmarks and evidence

HDFlow was evaluated on four challenging furniture assembly tasks, both in simulation and in real-world environments, where the research reports that it significantly outperforms state-of-the-art methods. Its generalizability was additionally shown on two long-horizon benchmarks covering a variety of locomotion and manipulation tasks. The abstract gives no specific numbers, so “significantly outperforms” cannot yet be tied to a particular metric such as task completion rate, efficiency, or robustness.

Risks and open questions

- Latent Space Robustness: The high-level diffusion planner operates in a learned latent space. The quality and robustness of this latent representation are crucial. If the latent space fails to capture critical task semantics or environmental nuances, the high-level planner could generate suboptimal or unachievable subgoals, leading to downstream failures.

- Inter-Planner Communication: The seamless handoff and communication between the diffusion planner and the rectified flow planner are vital. Any mismatch in their understanding of the state or desired subgoal could lead to jerky movements, missed objectives, or safety issues in real-world deployments.

- Computational Overhead: While rectified flow is efficient, the combined computational cost of running both a diffusion model and a rectified flow model in real-time, especially for very long-horizon tasks with frequent replanning, remains a practical concern for resource-constrained robotic platforms.

- Real-World Generalization: Although tested in real-world furniture assembly, the complexity and variability of industrial or domestic environments can far exceed benchmark conditions. The framework’s ability to handle unexpected obstacles, sensor noise, and dynamic changes without extensive retraining is an open question.
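The computational overhead concern above can be reasoned about with a back-of-the-envelope count of model forward passes. The cost model below is an assumption made for illustration: each replan runs the high-level denoising loop once and then one ODE solve per subgoal. The function name, its parameters, and any numbers plugged into it are hypothetical and do not come from the HDFlow paper.

```python
def network_evaluations(denoise_steps, num_subgoals, ode_steps, replans=1):
    """Count model forward passes for one task under a simple cost model.

    Each replan runs the high-level denoising loop once, then one ODE
    solve per subgoal. All arguments are hypothetical tuning knobs.
    """
    per_plan = denoise_steps + num_subgoals * ode_steps
    return replans * per_plan
```

For example, `network_evaluations(50, 5, 4)` gives 70 forward passes for a single plan and 210 with `replans=3`. The count treats every forward pass as equally expensive, which will not hold if the two models differ in size, so it is a starting point for profiling rather than a latency estimate.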

Sources

- [1] Refining Compositional Diffusion for Reliable Long-Horizon Planning. https://arxiv.org/html/2605.03075

- [2] Annotation of long-horizon task decomposition, Labelvisor. https://www.labelvisor.com/annotation-of-long-horizon-task-decomposition/

- [3] bansky-cl/diffusion-nlp-paper-arxiv, GitHub repository. https://github.com/bansky-cl/diffusion-nlp-paper-arxiv

- [4] ai-boost/awesome-harness-engineering, GitHub repository. https://github.com/ai-boost/awesome-harness-engineering

- [5] LeapAlign: Post-Training Flow Matching Models at Any Generation Step by Building Two-Step Trajectories. https://arxiv.org/html/2604.15311