Starcloud, a startup focused on deploying AI data centers in orbit, has achieved a $1.1 billion valuation after raising $170 million in funding. This milestone highlights accelerating investment and competition in space-based computing infrastructure, driven by the demand for low-latency global AI capabilities.

TL;DR

- Orbital data centers place computing power in space to reduce latency and support global AI workloads.

- Starcloud reached unicorn status with $170M in new funding, despite currently operating only one satellite with a single GPU.

- Major players like SpaceX and Blue Origin are also pursuing off-planet data infrastructure.

- This could redefine global AI scalability, connectivity, and energy use.

- Position yourself in satellite tech, AI optimization, or infrastructure investing.

Key takeaways

- Orbital AI data centers are attracting serious funding, even though deployed capacity is still minimal.

- Starcloud leads in visibility, but SpaceX and Blue Origin have stronger execution assets.

- Low-latency global compute will unlock new applications in AI, IoT, and real-time analytics.

- Now is the time to build relevant skills, explore partnerships, or invest in enabling technologies.

What Are Orbital AI Data Centers?

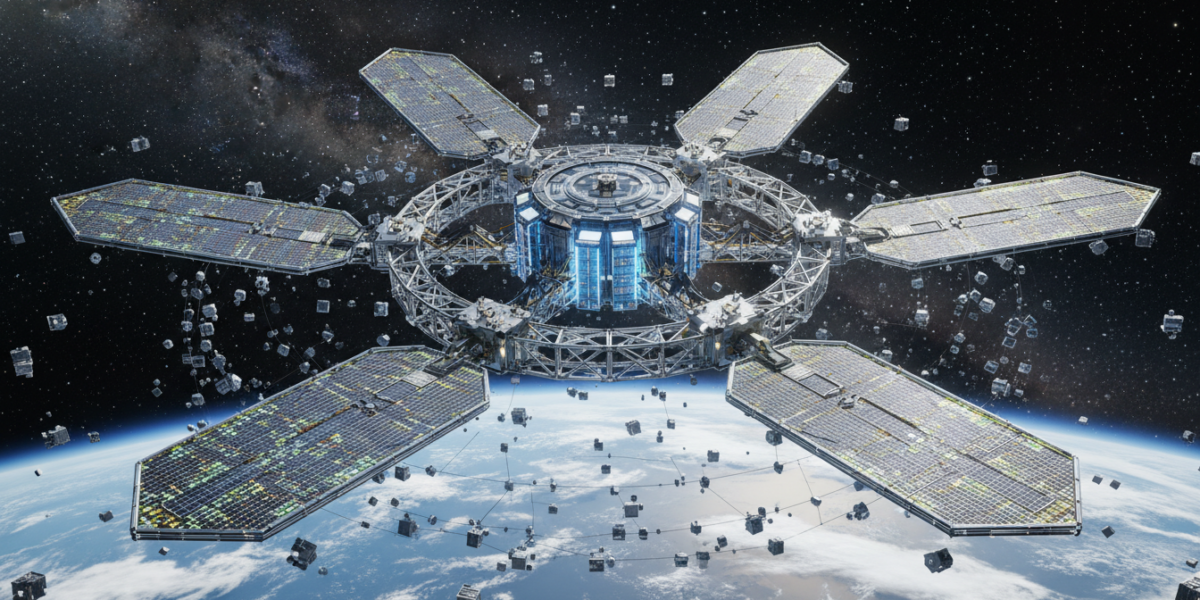

Orbital AI data centers are compute and storage facilities deployed in space, designed to deliver high-speed data processing with minimal delay across the globe. Unlike terrestrial data centers, they use satellite networks to serve distributed users with near-instant response times.

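Why this matters: Latency matters in AI. Applications like autonomous systems, real-time analytics, and global financial trading require split-second decisions. Orbital data centers can route traffic over satellite links instead of ground-based internet paths; because light travels faster in vacuum than in optical fiber, this can cut latency on long-distance routes, though the hop up to orbit and back adds delay on short ones.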
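
As a rough illustration of the latency argument, the sketch below compares one-way propagation delay over fiber with a path that goes up to a satellite, along the orbit, and back down. Every number in it is an assumption rather than a figure from any company named here: a 550 km orbit, a fiber refractive index of 1.47, a perfectly direct fiber route, and no processing, queueing, or switching delay.

```python
C_VACUUM_KM_S = 299_792.458   # speed of light in vacuum (km/s)
FIBER_INDEX = 1.47            # assumed refractive index of optical fiber
EARTH_RADIUS_KM = 6371.0      # mean Earth radius


def fiber_delay_ms(ground_distance_km: float, route_factor: float = 1.0) -> float:
    """One-way propagation delay over fiber.

    route_factor > 1 models real cable routes that are longer than the
    great-circle distance (an illustrative knob, not measured data).
    """
    speed_km_s = C_VACUUM_KM_S / FIBER_INDEX
    return ground_distance_km * route_factor / speed_km_s * 1000.0


def orbital_delay_ms(ground_distance_km: float, altitude_km: float = 550.0) -> float:
    """One-way delay for: straight up, along an arc at altitude, straight down.

    Idealized geometry: the satellite is assumed to be directly overhead at
    both ends, and the in-orbit leg follows a circular arc.
    """
    arc_km = ground_distance_km * (EARTH_RADIUS_KM + altitude_km) / EARTH_RADIUS_KM
    path_km = 2 * altitude_km + arc_km
    return path_km / C_VACUUM_KM_S * 1000.0


if __name__ == "__main__":
    for distance_km in (500, 2_000, 10_000):
        print(
            f"{distance_km:>6} km  "
            f"fiber {fiber_delay_ms(distance_km):6.2f} ms  "
            f"orbit {orbital_delay_ms(distance_km):6.2f} ms"
        )
```

Under these assumptions the orbital path only wins on long routes; over a few hundred kilometers the round trip to orbit outweighs the speed advantage of vacuum.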

Who should care: AI engineers, infrastructure investors, satellite technology professionals, and anyone building latency-sensitive applications.

Why This Is Happening Now

Three forces are driving this shift:

- AI’s insatiable compute demand: Training and inference are outgrowing Earth-based power and cooling capacity.

- Global low-latency needs: Industries from finance to defense require real-time data processing everywhere.

- Cheaper access to space: Reusable rockets and modular satellites have lowered deployment costs.

Starcloud’s valuation—largely based on future potential, not current capacity—shows that investors are betting big on this future.

How Orbital AI Data Centers Work

These systems rely on:

- Satellite constellations: Networks of small satellites, each equipped with GPUs or specialized AI chips.

- Inter-satellite links: Laser communication between satellites for fast data transfer.

- Ground stations: Earth-based hubs that connect orbital compute to terrestrial networks.

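Power comes from solar panels. Cooling is radiative: with no air to carry heat away, each satellite has to shed waste heat by radiating it to space, so it needs no air conditioning but does need radiator surface sized to its heat load.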
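
To give a feel for what radiative cooling implies, here is a minimal sizing sketch based on the Stefan-Boltzmann law. The emissivity, radiator temperature, and sink temperature are illustrative assumptions, and the model ignores absorbed sunlight and heat from Earth, so it understates the area a real design would need.

```python
STEFAN_BOLTZMANN = 5.670374419e-8  # W / (m^2 K^4)


def radiator_area_m2(
    heat_load_w: float,
    radiator_temp_k: float = 300.0,  # assumed radiator surface temperature
    emissivity: float = 0.9,         # assumed surface emissivity
    sink_temp_k: float = 3.0,        # deep-space background, idealized
    sides: int = 1,                  # 2 if both faces radiate to space
) -> float:
    """Radiating area needed to reject heat_load_w purely by radiation."""
    if radiator_temp_k <= sink_temp_k:
        raise ValueError("radiator must be hotter than its surroundings")
    # Net radiated power per square meter of one face.
    net_flux_w_m2 = emissivity * STEFAN_BOLTZMANN * (
        radiator_temp_k**4 - sink_temp_k**4
    )
    return heat_load_w / (net_flux_w_m2 * sides)


if __name__ == "__main__":
    # Hypothetical heat loads, not figures from any real satellite.
    for load_w in (1_000, 10_000, 100_000):
        print(f"{load_w:>7} W -> {radiator_area_m2(load_w):8.1f} m^2 (one-sided, 300 K)")
```

Because radiated power scales with the fourth power of temperature, running the radiator hotter shrinks the required area quickly, which is one reason thermal design is a central constraint for dense compute in orbit.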

Real-World Examples: Who’s Building What

| Company | Approach | Current Status |
|---|---|---|
| Starcloud | Plans for 88,000-satellite network | 1 satellite, 1 GPU deployed |
| SpaceX | Leveraging Starlink infrastructure | Early R&D phase |
| Blue Origin | Focusing on heavy-lift deployment | Concept stage |

Starcloud is the most vocal, but the big players have deeper launch resources and existing orbital infrastructure.

Implementation Tools & Vendors

To build or use orbital compute, you’ll encounter:

- Satellite manufacturers: Airbus, Lockheed Martin, and new entrants like Astranis.

- Launch providers: SpaceX, Rocket Lab, Blue Origin.

- AI hardware: NVIDIA, Cerebras, and space-rated GPUs.

- Ground segment: KSAT, AWS Ground Station.

Costs and ROI Considerations

- Deployment cost: High upfront—each satellite launch can run millions.

- Operational cost: Lower energy expense, but maintenance requires autonomous systems.

- ROI drivers: Premium pricing for low-latency services, and new markets like in-space analytics.

Who wins: Early investors in scalable orbital infrastructure, and companies that lease compute capacity for high-value applications.

Risks and Pitfalls

- Technical risk: Satellite failure, radiation-induced errors, communication downtime.

- Regulatory hurdles: Spectrum licensing, space traffic management, orbital debris rules.

- Market risk: High capital burn with uncertain adoption timelines.
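
To make the radiation-induced error risk concrete, the sketch below shows triple modular redundancy (TMR), a widely used mitigation in which a value is kept in three copies and a bitwise majority vote masks a flipped bit. This is offered as a general illustration only; nothing here indicates which techniques any of the companies mentioned actually use, and the helper names are hypothetical.

```python
def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise majority of three copies: each output bit matches at least two inputs."""
    return (a & b) | (a & c) | (b & c)


def flip_bit(value: int, bit: int) -> int:
    """Simulate a single-event upset by inverting one bit (illustrative helper)."""
    return value ^ (1 << bit)


if __name__ == "__main__":
    original = 0b1011_0010

    # One copy is corrupted: the vote recovers the original value.
    recovered = majority_vote(original, flip_bit(original, 3), original)
    print(f"single upset recovered: {recovered == original}")

    # Two copies corrupted in the same bit: the vote is fooled.
    fooled = majority_vote(flip_bit(original, 3), flip_bit(original, 3), original)
    print(f"double upset recovered: {fooled == original}")
```

The trade-off is cost: tripling storage or compute buys tolerance to a single fault per bit position, which is why redundancy is usually combined with error-correcting memory and periodic scrubbing rather than used alone.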

Don’t believe the hype: This isn’t about replacing all Earth data centers. It’s a complement for latency-critical, globally distributed workloads.

Myths vs. Facts

- Myth: Orbital data centers will solve all AI’s energy problems.

Fact: They avoid terrestrial cooling costs, but launch emissions and space debris are new concerns.

- Myth: This is only for billion-dollar companies.

Fact: As capacity grows, access will trickle down to startups via cloud-like leasing models.

FAQ

How soon until orbital AI data centers are operational at scale?

Starcloud aims for a multi-thousand-satellite network by 2028–2030, but technical and regulatory hurdles could delay that.

Can I rent orbital compute today?

Not yet. Current capability is minimal—think R&D, not commercial service.

What industries will use this first?

Defense, finance, and telecom—anywhere low latency provides a decisive edge.

What This Means for You

If you’re in tech

- Learn about satellite communication protocols and radiation-hardened computing.

- Explore AI model optimization for low-bandwidth, high-latency environments.

If you’re an investor

- Watch companies in satellite servicing, orbital robotics, and space-based compute leasing.

- Avoid overvalued pure-play startups—prioritize those with launch partnerships.

If you’re a builder

- Consider how your product could leverage ultra-low-latency global compute.

- Start small: prototype with high-altitude balloons or ground-based edge networks.

Glossary

- Low-latency compute: Data processing with minimal delay, critical for real-time applications.

- Unicorn: A startup valued at over $1 billion.

- Inter-satellite link: Communication link between satellites, often using lasers.

- Radiation hardening: Designing electronics to withstand space radiation.