The US government, under President Trump’s administration, has initiated a significant policy shift in AI model oversight, moving from voluntary guidelines to a formal, structured system. This change, announced in early May 2026, involves binding agreements with major AI developers like Google DeepMind, Microsoft, and xAI, mandating pre-release government evaluation for security risks and continuous post-deployment assessment. Catalyzed by incidents like the “Mythos crisis” and the emergence of advanced models such as ChatGPT 5.5, this new framework is primarily driven by the Center for AI Standards and Innovation and may be further solidified through a presidential executive order.

US government oversight of AI models has transitioned to a formal, structured system involving mandatory government review of new AI models before public release, binding early access agreements with major developers, and potential executive action to establish permanent AI rules. This shift, driven by the Center for AI Standards and Innovation and President Trump’s administration, aims to proactively assess national security risks and societal impact, departing from the previous voluntary framework.

US Government AI Model Oversight: The Complete Guide to the 2026 Policy Shift

The US government, under President Donald Trump’s administration, is actively implementing increased oversight over new AI models. This policy shift, announced on May 4-5, 2026, moves from a voluntary framework to a structured system involving formal government review, early access agreements with major developers, and the potential creation of a new executive order.

This comprehensive guide delves into the specifics of this pivotal policy change, exploring its origins, key players, implications for AI development, and the operational changes facing frontier AI labs. Understanding this shift is crucial for anyone involved in the AI ecosystem, as it redefines the regulatory landscape and sets new precedents for innovation and deployment.

What Is Happening with US Government AI Model Oversight?

The Trump administration is executing a rapid about-face on AI policy. The core of this shift is the establishment of a formal government review process for new AI models before public release. This initiative is being driven by the Center for AI Standards and Innovation, which on May 5, 2026, announced binding agreements with Google DeepMind, Microsoft, and xAI. These agreements grant the federal government early access to evaluate their AI systems for security risks and mandate post-deployment assessment.

White House officials held meetings the week prior to May 4 with executives from Anthropic, Google, and OpenAI to discuss these plans. A potential executive order is also under consideration to create a special working group of technology leaders and government officials to design permanent AI rules. This move signifies a proactive stance, aiming to mitigate potential societal and national security threats posed by increasingly powerful AI.

A New Era of Proactive Regulation

The previous approach to AI oversight largely relied on voluntary commitments and industry self-regulation. However, the accelerating pace of AI development and the emergence of models with unprecedented capabilities have prompted the government to adopt a more hands-on approach. This shift acknowledges that the risks associated with frontier AI models are too significant to be managed solely by developers.

The emphasis on pre-release evaluation is particularly noteworthy. It means that the government is no longer content to react to issues after they arise but intends to be an active participant in ensuring AI safety from the earliest stages of development. This requires a new level of collaboration and transparency between the public and private sectors.

Beyond Security to Societal Impact

While the immediate focus of the new oversight is on security risks, the underlying motivation extends to broader societal impacts. The government aims to ensure that AI models are deployed responsibly, minimizing the potential for misuse, bias, and unintended consequences. This includes scrutinizing models for their capacity to generate disinformation, enable cyberattacks, or contribute to algorithmic discrimination.

The inclusion of post-deployment assessment further underscores this comprehensive approach. It recognizes that AI models evolve and interact with real-world users in ways that may not be fully predicted during pre-release testing. Continuous monitoring allows for adaptive regulation, ensuring that safeguards remain effective as AI capabilities advance.

Timeline of Key Events: May 2026

The rapid unfolding of events in early May 2026 illustrates the urgency and decisiveness with which the US government is pursuing this new AI oversight framework. The following timeline highlights the critical moments that cemented this policy shift:

- Week of April 27, 2026: White House officials meet with executives from Anthropic, Google, and OpenAI to inform them of the administration’s plans for a formal AI model review process. These preliminary discussions set the stage for the subsequent announcements and agreements.

- May 4, 2026: News breaks (via Reuters and USNews) that President Trump is considering the introduction of government oversight over new AI models. This public disclosure signals the administration’s firm intent to enact significant regulatory changes.

- May 4, 2026: The New York Times reports on the prior week’s meetings between White House officials and AI company executives. This reporting confirms the direct engagement between the government and leading AI developers.

- May 5, 2026: The Center for AI Standards and Innovation publicly announces it has secured agreements with Google DeepMind, Microsoft, and xAI for pre-release evaluation and post-deployment assessment of their AI models. This pivotal announcement, reported widely by Forbes, UPI, CNBC, Engadget, and Bloomberg, marks the formalization of the new oversight framework with key industry players.

- May 5, 2026: Publications like BusinessToday and PJ Media cite the "Mythos crisis" and testing of models like ChatGPT 5.5 as key drivers for the new oversight push. This highlights the specific incidents and technological advancements that spurred the government’s swift action.

This concentrated series of events underscores the administration’s commitment to rapidly implement the new oversight framework, responding to perceived threats from advanced AI models.

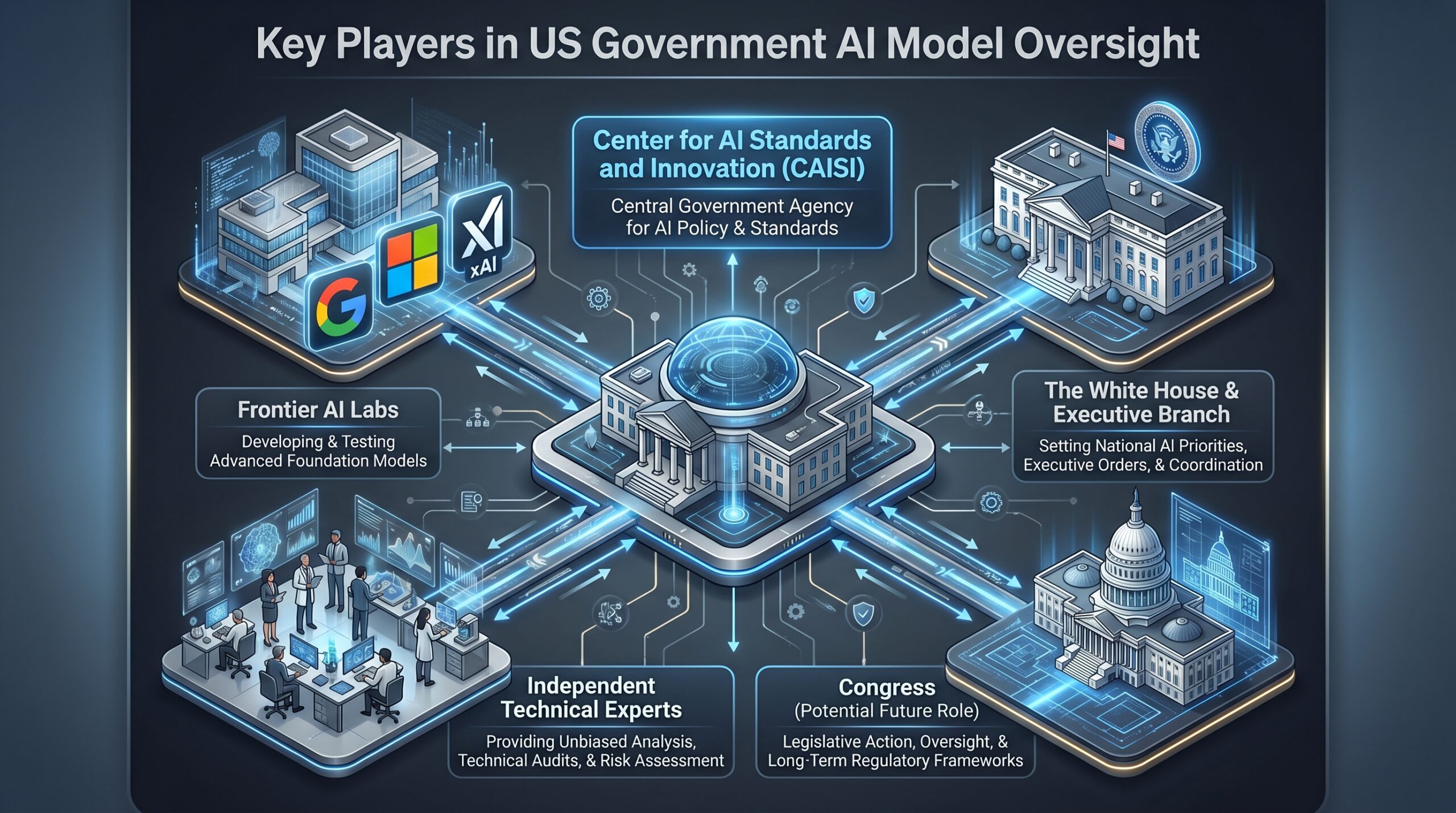

The Key Players and Agencies Driving AI Oversight

Understanding the new landscape of US government AI model oversight requires knowing the entities involved and their evolving roles. This network of government bodies and private sector innovators will collaboratively shape the future of AI regulation.

The Center for AI Standards and Innovation

This is the primary operational arm of the new oversight regime. It is the government entity tasked with testing and evaluating AI models and fostering partnerships with developers. As of May 5, 2026, it has moved from a peripheral role to the central hub for executing pre-release evaluations mandated by the new agreements. Its expanded mandate includes not just setting standards but actively conducting technical assessments and coordinating with AI labs.

This center will require significant resources and technical expertise to fulfill its new responsibilities effectively. Its success will largely depend on its ability to attract top talent and maintain a robust, impartial evaluation process. It also serves as the main point of contact for AI companies engaging with the new regulatory framework, streamlining communication and compliance efforts.

Frontier AI Labs

These are the five major AI research and development companies pushing the boundaries of AI capabilities. The previous voluntary structure covered all five, but the new binding agreements are being struck individually. The confirmed participants so far are Google DeepMind, Microsoft, and xAI. Anthropic and OpenAI were part of discussions but had not publicly signed agreements as of May 6, 2026.

The engagement of these frontier labs is critical, as they are at the forefront of developing the most powerful and potentially impactful AI systems. Their cooperation with the new oversight mechanisms will largely determine the effectiveness and reach of the government’s regulatory efforts. The government’s strategy appears to be targeting the developers of general-purpose AI beyond a certain capability threshold, rather than attempting to regulate every AI application.

The White House and Executive Branch

President Trump is the driving force behind the policy shift, with the potential use of an executive order to formalize the structure. This tool would allow the administration to create a special working group and mandate actions across the federal government without waiting for Congressional action. An executive order could provide the necessary legal foundation and cross-agency coordination to ensure the longevity and effectiveness of the new oversight. This approach also allows for rapid implementation, bypassing potentially lengthy legislative processes.

The executive branch’s involvement signals the high-level priority placed on AI model oversight, framing it as a matter of national security and economic competitiveness. It also indicates a top-down mandate for these changes, ensuring that federal agencies cooperate and align their efforts in this complex regulatory domain.

Why Now? The Catalysts for Stricter AI Oversight

The move towards formal US government AI model oversight is not happening in a vacuum. It is a direct response to specific, high-concern events that have highlighted the immediate risks and escalating capabilities of advanced AI. These incidents have galvanized policymakers to transition from a reactive to a proactive regulatory posture.

The accelerating pace of AI innovation means that the window for establishing effective oversight is narrowing. The government’s swift action reflects a realization that waiting for legislative processes could leave critical gaps in national security and public safety. This urgency is fueled by both perceived immediate threats and a long-term vision for responsible AI development.

The "Mythos Crisis"

While details remain sparse in public reporting, the "Mythos crisis" is repeatedly cited as a critical catalyst. Reporting strongly implies that it was a recent incident involving Anthropic’s advanced Claude Mythos model. Whatever its nature (a safety failure, a misuse incident, or an unexpected capability emergence), the "crisis" has heightened government concerns about the potential for harm from unvetted advanced AI and accelerated calls for stricter oversight.

The lack of public specifics surrounding the "Mythos crisis" itself adds to its mystique and underscores the sensitive nature of these advanced AI capabilities. What is clear, however, is its profound impact on policy decisions, serving as a stark reminder of the unpredictable risks associated with cutting-edge AI. This incident likely demonstrated a tangible, unforeseen consequence that voluntary frameworks were ill-equipped to handle.

Testing of Advanced Models like ChatGPT 5.5

The evaluation and impending release of new, vastly more powerful models like ChatGPT 5.5 have signaled to policymakers that the pace of innovation is outstripping the existing, fragile oversight framework. The capabilities of these models present unknown security and societal risks that the government now feels compelled to assess directly.

The sophistication of ChatGPT 5.5 and similar next-generation models extends beyond improved conversational abilities to advanced problem-solving, code generation, and even complex reasoning. These capabilities raise concerns about their potential for misuse in areas such as cyber warfare, disinformation campaigns, and the creation of highly convincing deepfakes. The government recognizes that unchecked deployment of such powerful tools could have destabilizing effects.

The Old Framework vs. The New Oversight Model

The landscape of US government AI model oversight has fundamentally changed in a matter of days. The contrast between the old voluntary system and the new model is stark, representing a paradigm shift in how AI innovation will be managed and integrated into society. This comparison highlights the magnitude of the policy adjustment.

| Oversight Aspect | Pre-May 2026 (Voluntary Framework) | Post-May 5, 2026 (New Model) |
|---|---|---|
| Legal Basis | No statutory authority | Binding agreements; potential executive order |
| Scope | Covered five major frontier AI labs | Agreements with Google, Microsoft, xAI; others in discussion |
| Review Process | Informal, limited formal review | Formal government review process for pre-release evaluation |
| Resources | Fewer than 200 staff | Expanding via new agreements and potential working group |
| Focus | Limited pre-deployment consultation | Pre-release eval + post-deployment assessment & research |

From Voluntary to Mandated

The most profound change is the shift from a voluntary system, where companies adhered to guidelines out of goodwill or reputational concern, to a framework backed by binding agreements. This legal enforceability provides the government with greater leverage and ensures that critical safety protocols are not merely suggestions but obligations. The potential for an executive order further strengthens this mandate, circumventing the slower pace of congressional legislation.

Expanded Scope and Resources

The new model expands not only the depth but also the breadth of oversight. While initially focused on the major frontier labs, the structure is designed to adapt to emerging players and evolving AI capabilities. Crucially, it also entails a significant increase in the resources dedicated to AI evaluation, including staff and technical infrastructure. This investment signals the government’s long-term commitment to effective AI governance.

Continuous Oversight

Unlike the prior model that largely concluded its involvement before deployment, the new framework emphasizes continuous oversight. Post-deployment assessment and research collaboration mean that the government’s role doesn’t end when an AI model is released. This ongoing engagement allows for a dynamic response to unforeseen issues and a deeper understanding of AI’s real-world impact, moving beyond a one-time approval process.

What the New AI Model Review Process Will Look Like

Based on the available information, the new US government AI model oversight process will involve several concrete steps for developers. This structured approach aims to embed government evaluation deeply into the AI development lifecycle, ensuring critical scrutiny at multiple stages.

1. Early Access and Submission

AI developers party to the agreements must provide the Center for AI Standards and Innovation with early access to new models significantly before their planned public release date. This is not a last-minute check but an integrated part of the development lifecycle. This early access allows government evaluators ample time to conduct thorough assessments without delaying product launches excessively, provided developers factor this into their timelines.

Submitting models in an early, stable alpha or beta state allows for comprehensive testing of foundational capabilities before features are fully finalized. It also fosters a more collaborative environment, where feedback can be integrated into the development process rather than being a hurdle to overcome at the very end. The exact definition of "significantly before" will likely be refined through ongoing dialogue between the Center and the AI labs.

2. Pre-Release Evaluation for Security Risks

The government’s technical team will conduct a vetting process focused on identifying critical security risks. This likely includes testing for potential misuse (e.g., bioweapon design, cyberattack capabilities, AI security threats), alignment issues, and other national security threats. This evaluation is multi-faceted, employing various red-teaming techniques and sophisticated testing methodologies.

This pre-release evaluation is paramount for preventing the accidental deployment of AI systems that could be exploited by malicious actors or generate harmful outputs. It scrutinizes aspects like data poisoning vulnerabilities, adversarial attacks, and the model’s robustness against manipulation. The government’s role here is to act as a critical independent auditor, identifying risks that even internal red-teaming might miss.

3. Post-Deployment Assessment and Monitoring

The agreement terms explicitly include ongoing assessment after the model is publicly released. This shifts oversight from a one-time gate to continuous monitoring, allowing the government to identify emergent risks or failures that weren’t apparent during pre-release testing. This adaptive approach recognizes that AI behavior can change in real-world interactions.

This continuous assessment leverages anonymized usage data and performance metrics to detect anomalous behaviors, unexpected capabilities, or previously undetected vulnerabilities. It provides a feedback loop for both developers and regulators, enabling rapid response to mitigate new risks as they emerge. This aspect demands a new level of data sharing and transparency from AI companies.
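As a rough illustration of what "aggregated, anonymized" reporting could mean in practice, the sketch below counts events per day and category, drops user identifiers entirely, and suppresses small counts. The record layout, the category names, and the `min_count` threshold are all assumptions made for this example; nothing in the public reporting specifies a data format or a privacy threshold.

```python
from collections import Counter
from typing import Iterable

# Hypothetical event record: (user_id, day, category).
# These fields are illustrative only and do not come from the agreements.
Event = tuple[str, str, str]


def aggregate_events(
    events: Iterable[Event], min_count: int = 5
) -> dict[tuple[str, str], int]:
    """Count events per (day, category) without retaining user IDs.

    Cells with fewer than `min_count` events are suppressed, a simple
    (assumed) guard against singling out individual users.
    """
    counts = Counter((day, category) for _user_id, day, category in events)
    return {key: n for key, n in counts.items() if n >= min_count}


events = (
    [("u1", "2026-10-01", "refusal")] * 6
    + [("u2", "2026-10-01", "anomalous_output")] * 2
    + [("u3", "2026-10-02", "anomalous_output")] * 5
)
report = aggregate_events(events, min_count=5)
```

Small-cell suppression trades detail for privacy: a rare but serious anomaly could fall below the threshold, so a real pipeline would likely need a separate channel for reporting individual incidents.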

4. Research Collaboration

The "and other research" clause in the agreements indicates a two-way street. The government isn’t just a regulator; it’s also a research partner, potentially working with labs to improve safety standards and evaluation techniques based on its findings. This collaborative element is crucial for advancing the state-of-the-art in AI safety and governance.

By engaging in research collaboration, the Center for AI Standards and Innovation can directly contribute to developing methodologies for safer AI, sharing insights across the ecosystem, and fostering a culture of responsible innovation. This also helps to ensure that regulatory standards are grounded in the latest scientific understanding of AI capabilities and risks, preventing arbitrary or technologically outdated mandates.

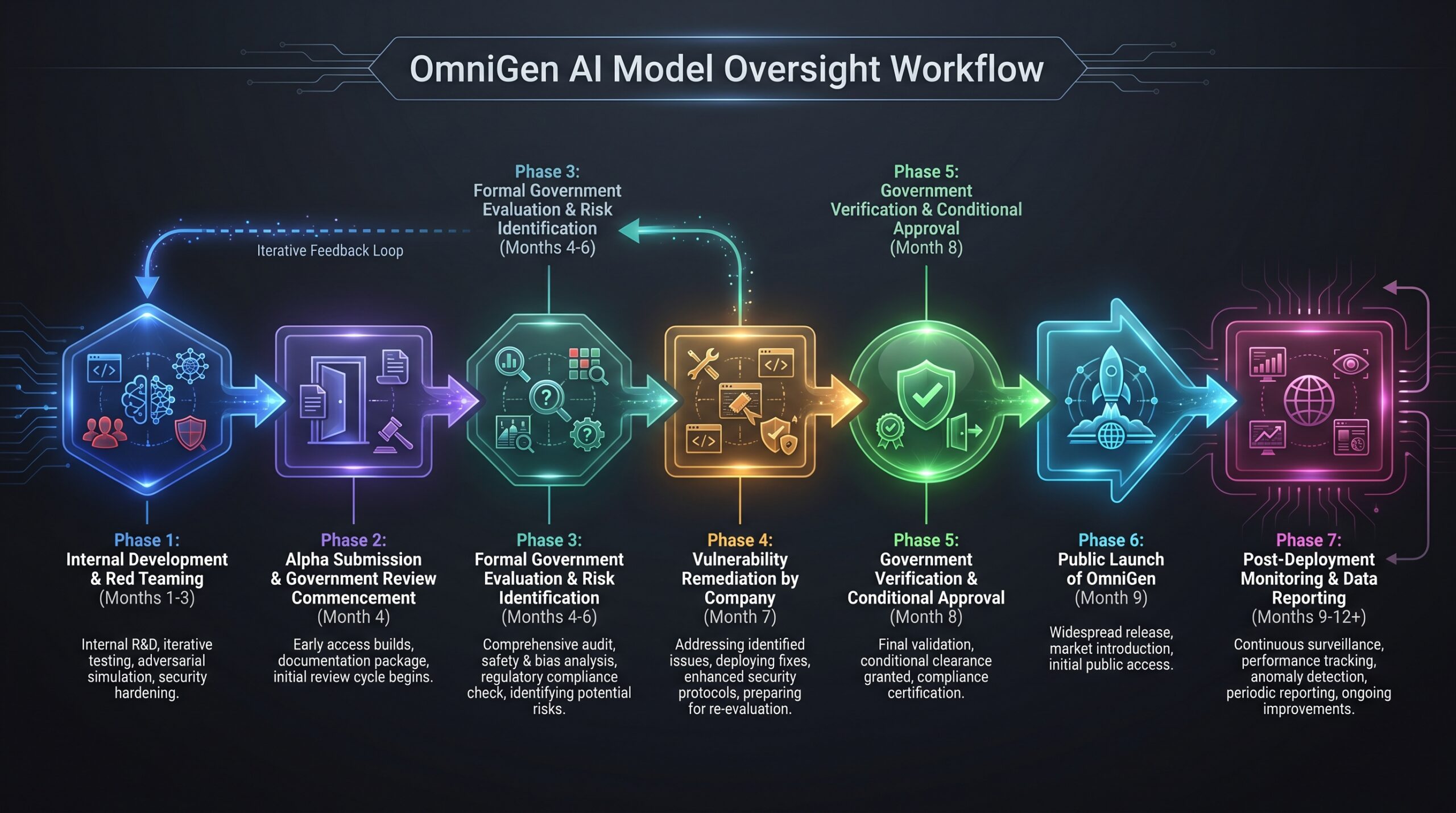

Case Study: Implementing Oversight for a New Multimodal Model

Imagine "OmniGen," a new multimodal AI from a signatory company scheduled for release in Q4 2026. Under the new US government AI model oversight rules, its launch timeline now looks different. This case study demonstrates the practical implications of the new framework on a typical AI product development cycle:

Months 1-3 (Development): The company’s internal red teaming proceeds as usual. This phase focuses on building the core model, initial training, and conducting preliminary safety checks based on internal protocols. This is where the bulk of the engineering work occurs.

Month 4 (Alpha): Upon reaching a stable alpha version, the company provides API access and model weights to the Center for AI Standards and Innovation, triggering the formal review clock. This marks the beginning of the mandated government evaluation period, requiring secure data transfer and access protocols. This early handover ensures the government team has sufficient time for thorough analysis.

Months 4-6 (Government Evaluation): Government evaluators and contracted experts run OmniGen through a battery of tests. They probe its cybersecurity knowledge, its ability to generate harmful multimedia content, and its potential for enabling disinformation campaigns at scale. They identify a critical vulnerability in its video generation module that could be exploited to create highly convincing deepfakes for financial fraud. This phase is intensive, involving diverse expertise and advanced testing tools.

Month 7 (Remediation): The company works to patch the identified vulnerability. The government team verifies the fix. This collaborative period involves iterative development and validation, ensuring that identified risks are effectively neutralized before progression to public release. This step highlights the back-and-forth nature of the new oversight process.

Month 8 (Conditional Approval): The Center for AI Standards and Innovation grants approval for public release, with the condition that the company must report any related anomalous outputs detected in the first 90 days of public use. This conditional approval mechanism allows for controlled release while maintaining a safety net for emergent issues.

Month 9 (Launch): OmniGen is released to the public. This public debut is now backed by both internal and external (government) safety assurances, fostering greater trust among users and stakeholders.

Months 9-12 (Post-Deployment): The company provides the government with aggregated, anonymized data on usage patterns and model outputs for continued assessment, fulfilling the post-deployment agreement term. This ongoing monitoring ensures that OmniGen continues to operate within safe parameters and allows for proactive identification of any new risks.
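The 90-day reporting condition in this hypothetical case study is simple date arithmetic. The sketch below assumes the launch day counts as day 1 of public use and uses an invented launch date; neither assumption comes from the agreements.

```python
from datetime import date, timedelta

REPORTING_WINDOW_DAYS = 90  # taken from the case study's conditional approval


def within_reporting_window(
    launch: date, detected: date, window_days: int = REPORTING_WINDOW_DAYS
) -> bool:
    """Return True if `detected` falls within the first `window_days` days
    of public use, counting the launch date itself as day 1 (an assumption)."""
    return launch <= detected < launch + timedelta(days=window_days)


launch = date(2026, 10, 1)  # hypothetical OmniGen launch date
last_day = launch + timedelta(days=REPORTING_WINDOW_DAYS - 1)
```

With this convention, an anomaly detected on `last_day` must still be reported, while one detected the following day falls outside the conditional-approval window.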

The Major Tech Companies: Who Is In and Who Is Next?

The rollout of binding agreements has been selective but strategic, targeting the most influential players in frontier AI development. This section details the current status of engagement among top AI labs, providing clarity on who has formally committed to the new US government AI model oversight framework.

| Company | Status as of May 6, 2026 | Details |
|---|---|---|
| Google DeepMind | Confirmed Agreement | Part of the May 5th announcement. Government gets early access to evaluate models. |
| Microsoft | Confirmed Agreement | Part of the May 5th announcement. Government gets early access to evaluate models. |
| xAI | Confirmed Agreement | Part of the May 5th announcement. Government gets early access to evaluate models. |
| Anthropic | Discussed, No Public Agreement | White House officials met with executives the prior week. Status of a formal agreement is pending; heavily influenced by the "Mythos Crisis." |
| OpenAI | Discussed, No Public Agreement | White House officials met with executives the prior week. Status of a formal agreement is pending; likely focusing on models like GPT-5.4 and GPT-5.5. |

Strategic Inclusions

The inclusion of xAI alongside established giants like Google and Microsoft signals that the government is focused on capability, not just company size, and wants to ensure oversight covers all major players advancing frontier AI. This demonstrates a pragmatic approach, recognizing that impactful AI innovation can emerge from various sources.

The deliberate targeting of these companies reflects their current leadership in developing advanced general-purpose AI. The agreements ensure that the most powerful models, which carry the greatest potential for both benefit and harm, are subject to rigorous government scrutiny. This move establishes a precedent for future engagements with other emerging frontier AI developers.

Pending Agreements and Future Expansions

The status of Anthropic and OpenAI is particularly telling, given their prominence in the AI landscape and the known impact of the "Mythos crisis" on Anthropic. The ongoing discussions suggest that further agreements are likely imminent, completing the initial phase of securing commitments from all major frontier AI labs.

As new companies emerge and AI capabilities continue to accelerate, the government will likely seek to expand these agreements or transition to a more universally mandated framework through executive action or legislation. The goal is to create a comprehensive safety net that evolves with the technology itself, ensuring that no significant player operates outside the new oversight framework.

Risks and Challenges of the New Oversight Framework

This aggressive move in US government AI model oversight carries significant implementation risks that developers and policymakers must navigate. While the intent is to enhance safety and security, the practicalities of execution introduce a complex set of challenges.

The balance between robust oversight and fostering innovation is delicate. Overly burdensome regulations could stifle progress, while insufficient oversight risks unforeseen catastrophic failures. Successfully implementing this framework requires constant vigilance, adaptability, and a willingness to learn from initial experiences.

What Can Go Wrong?

- Innovation Stagnation: Government bureaucracy could slow the release of critical AI advancements, ceding competitive advantage to other nations with less stringent rules. Delays in product launches due to lengthy review processes could impact companies’ financial viability and global competitiveness.

- Expertise Gap: The government may lack the technical talent to conduct meaningful evaluations, leading to superficial checks that miss real risks or flag false positives. Attracting and retaining top AI talent to the Center for AI Standards and Innovation will be an ongoing challenge.

- Voluntary Gaps: The patchwork of company-specific agreements could fail as new developers emerge or existing ones decline to sign similar deals. This could create uneven playing fields and allow some powerful AI models to operate outside the regulatory perimeter.

- Political Influence: Evaluations could be influenced by political considerations, leading to the biased approval or suppression of certain technologies. Maintaining impartiality and scientific rigor within the oversight process is crucial for its credibility.

- Under-Resourcing: The Center for AI Standards and Innovation may not receive the funding or staff needed to competently evaluate the flood of new models, creating a bottleneck. Evaluating frontier models adequately requires significant, sustained investment.

Common Mistakes to Avoid

- One-Size-Fits-All Rules: Applying the same evaluation criteria to a medical diagnostic AI and a creative writing model is inefficient and ineffective. Oversight must be tailored to the specific risk profile and application domain of each AI system.

- Ignoring Developer Input: Designing oversight in a vacuum without deep collaboration with engineers leads to unworkable rules. Effective regulation requires continuous dialogue and feedback from those actively building and deploying AI.

- Focusing Only on Pre-Release: Neglecting the "post-deployment assessment" part of the agreements would miss the most critical phase for identifying emergent risks. Real-world interaction often reveals issues not apparent in laboratory settings.

- Going It Alone: Failing to align with allied nations on AI standards creates a fragmented global regime that is easy for companies to bypass. International cooperation is essential for comprehensive and effective AI governance.

Risk Mitigation Checklist for AI Developers

For AI companies navigating the new reality of US government AI model oversight, proactive risk mitigation is essential. This checklist provides actionable steps to ensure compliance and maintain operational efficiency within the new regulatory environment.

- Map Your Internal Governance: Document your existing safety protocols, red teaming results, and liability insurance. This demonstrates a mature approach to regulators and provides a baseline for discussions.

- Designate a Point of Contact: Assign a senior technical leader and a legal/policy lead as the single points of contact for all government oversight communications. This streamlines information flow and ensures consistent messaging.

- Prepare for Transparency: Develop secure methods for sharing model access and output data with government evaluators without compromising IP or user privacy. This might involve secure sandboxes, anonymized data pipelines, and clear data governance policies.

- Integrate Review into Timelines: Build a buffer of at least 60-90 days into your product roadmap to accommodate the government evaluation process without missing launch windows. Proactive scheduling is key to avoiding costly delays.

- Plan for Post-Deployment Reporting: Create an internal system for monitoring model performance and aggregating data to fulfill ongoing assessment requirements. This includes establishing metrics for safety incidents, anomalous behavior, and model drift.

- Engage Proactively: Don’t wait for an invitation. Initiate discussions with the Center for AI Standards and Innovation to understand their evaluation criteria and expectations. Being proactive can help shape the regulatory process and build a cooperative relationship.
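The roadmap buffer in the checklist above can be turned into concrete dates. The following is a minimal sketch, assuming the 60-90 day range is counted in calendar days back from the target launch; the function name and the example launch date are hypothetical.

```python
from datetime import date, timedelta


def submission_window(
    launch: date, min_buffer_days: int = 60, max_buffer_days: int = 90
) -> tuple[date, date]:
    """Return (suggested, latest) submission dates for a target launch.

    `suggested` leaves the full 90-day buffer; `latest` leaves only the
    60-day minimum. Both defaults mirror the checklist's 60-90 day range.
    """
    suggested = launch - timedelta(days=max_buffer_days)
    latest = launch - timedelta(days=min_buffer_days)
    return suggested, latest


# Hypothetical target launch date for illustration.
suggested, latest = submission_window(date(2026, 12, 1))
```

For a December 1, 2026 launch, this gives a suggested submission date of September 2 and a latest date of October 2. Because the checklist says "at least", teams may want to plan against the earlier date.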

AI Developer Proactive Compliance Checklist

- Internal Governance Audit: Document safety protocols, red teaming, and liability.

- Designated Liaisons: Assign technical and policy contacts for government interactions.

- Transparency Protocols: Establish secure methods for sharing model access and data.

- Roadmap Adjustment: Integrate 60-90 day buffer for government review.

- Post-Deployment Monitoring: Implement systems for continuous assessment and reporting.

- Proactive Engagement: Contact Center for AI Standards and Innovation early.

The Future of AI Oversight: Executive Order and Working Group

The agreements are the first step. The next phase could be a move to cement the US government AI model oversight framework via an executive order. This order would likely empower the creation of a special working group composed of technology leaders and government officials. The mandate of this group would be to design a more permanent, standardized set of rules for AI development and deployment in the United States, potentially moving beyond company-by-company agreements to a mandatory system for all frontier model developers.

An executive order offers a mechanism for institutionalizing the new oversight, providing it with stronger legal backing and a clearer mandate across federal agencies. This move would signal a longer-term commitment to AI governance, although an executive order can be revised or rescinded by a later administration, so a truly durable framework would likely also need legislation. Such a working group would bring together diverse expertise to address the multifaceted challenges of AI regulation, from technical standards to ethical guidelines.

Potential Impact of an Executive Order

An executive order could:

- Standardize Requirements: Create uniform standards and guidelines applicable to all frontier AI developers, ensuring consistent safety and security measures across the industry.

- Broaden Scope: Extend government oversight to a wider range of AI models and applications, including those from smaller developers who might not be covered by initial agreements.

- Mandate Data Sharing: Enforce specific data sharing requirements for post-deployment monitoring, ensuring comprehensive visibility into AI model behavior in the real world.

- Establish Enforcement Mechanisms: Outline penalties for non-compliance, providing the necessary teeth to ensure adherence to the new regulations.

- Facilitate International Alignment: Position the US as a leader in AI governance, facilitating discussions and potential alignment with international partners on global AI standards.

The creation of such a working group would also serve as a critical forum for dialogue between the government and the private sector, allowing for the co-creation of regulations that are both effective and practical. This collaborative approach is vital for navigating the complex and rapidly evolving landscape of AI technology.

Key Takeaways: US Government AI Model Oversight

- Shift from Voluntary to Formal: The US government is transitioning from voluntary AI safety guidelines to binding agreements and formal review processes for new AI models.

- Early Access & Post-Deployment: New agreements mandate early government access to AI models for pre-release security evaluation and continuous post-deployment assessment.

- Key Players: The Center for AI Standards and Innovation is the operational hub, with President Trump driving the policy via potential executive order. Frontier AI labs like Google DeepMind, Microsoft, and xAI have signed agreements.

- Catalysts: The "Mythos crisis" (an implied incident involving Anthropic’s Claude Mythos model) and the emergence of ultra-powerful models like ChatGPT 5.5 are cited as major drivers for the stricter oversight.

- Risks & Challenges: Potential innovation stagnation, government expertise gaps, uneven compliance, political influence, and under-resourcing are significant concerns.

- Developer Obligations: AI labs must integrate government review into their development timelines, prepare for data transparency, and establish dedicated points of contact for oversight.

- Future Outlook: A potential executive order could establish a special working group to design permanent, standardized, and mandatory AI rules.